Imagine spending months training a massive model only to find it can't do basic math or fails to understand a simple piece of code. You might blame the architecture or the dataset, but the culprit is often the tokenizer. Think of the tokenizer as the model's eyes; if it sees the world through a blurry lens, no amount of training will make the model truly "see" the patterns in the data. A poorly designed tokenizer can waste up to 30% of a model's capacity on useless splits, effectively blinding the AI to the very linguistic patterns it needs to learn.

| Factor | Primary Impact | Trade-off |
|---|---|---|
| Vocabulary Size | Accuracy & Sequence Length | Memory Usage vs. Processing Speed |
| Algorithm Choice | Compression Efficiency | Generalization vs. Granularity |
| Numerical Handling | Reasoning Accuracy | Custom Rules vs. Standard BPE |

The Core Mechanics of Tokenization

At its simplest, tokenization is the bridge between raw text and numbers. LLMs don't read words; they process tokens. Tokenizer design is the process of deciding how to break text into subword units so that memory efficiency and semantic meaning stay in balance. If you break every word into single characters, your sequences become too long for the model to handle. If you make every unique word a token, your vocabulary explodes and the model can't handle words it hasn't seen before.
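To make the two extremes concrete, here is a minimal sketch that tokenizes the same toy sentence at the character level and at the word level. The corpus and the helper names (`char_tokens`, `word_tokens`) are made up for illustration; no real tokenizer works this naively.

```python
def char_tokens(text):
    """Character-level tokenization: every character is a token."""
    return list(text)

def word_tokens(text):
    """Word-level tokenization: split on whitespace."""
    return text.split()

# Hypothetical toy corpus.
corpus = "the cat sat on the mat while the other cat sat on the sofa"

chars = char_tokens(corpus)
words = word_tokens(corpus)

# Character-level sequences are much longer for the same text.
print("char-level sequence length:", len(chars))
print("word-level sequence length:", len(words))

# A word-level vocabulary cannot represent a word it never saw,
# while the character-level vocabulary can still spell it out.
new_word = "mats"
word_vocab = set(words)
char_vocab = set(chars)
word_level_ok = new_word in word_vocab
char_level_ok = all(c in char_vocab for c in new_word)
print("word-level can encode 'mats':", word_level_ok)
print("char-level can encode 'mats':", char_level_ok)
```

On a corpus this small the word vocabulary is tiny, but on real text it grows with every new word form, while the character inventory stays small. Subword tokenization sits between these two behaviors.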

Most modern models use subword segmentation. This means common words like "apple" stay as one token, but rare words like "apple-picking" might be split into "apple," "-," and "picking." This approach allows models to handle a virtually infinite variety of text with a finite vocabulary. However, the tokenizer design choices made here ripple through the entire training pipeline, affecting everything from inference speed to the model's ability to solve a multiplication problem.

Comparing the Heavy Hitters: BPE, WordPiece, and Unigram

You'll mostly encounter three algorithms in the wild. Each has a different philosophy on how to build its dictionary.

Byte-Pair Encoding (BPE) is an iterative algorithm that merges the most frequent pairs of characters or bytes into a single token. This is the gold standard for general-purpose models. OpenAI's GPT-4 and Meta's Llama 3 use versions of BPE because it's versatile and balanced. It doesn't over-split common phrases but keeps the vocabulary manageable.
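The merge loop is easier to follow in code. Below is a toy sketch of BPE training on a handful of words with made-up counts. The function names (`get_pair_counts`, `merge_pair`, `train_bpe`) and the data are hypothetical, and production tokenizers add byte-level fallback, pre-tokenization, and far faster data structures.

```python
from collections import Counter

def get_pair_counts(word_freqs):
    """Count adjacent symbol pairs, weighted by word frequency."""
    pairs = Counter()
    for symbols, freq in word_freqs.items():
        for a, b in zip(symbols, symbols[1:]):
            pairs[(a, b)] += freq
    return pairs

def merge_pair(pair, word_freqs):
    """Replace every occurrence of `pair` with one merged symbol."""
    merged = {}
    for symbols, freq in word_freqs.items():
        out, i = [], 0
        while i < len(symbols):
            if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
                out.append(symbols[i] + symbols[i + 1])
                i += 2
            else:
                out.append(symbols[i])
                i += 1
        key = tuple(out)
        merged[key] = merged.get(key, 0) + freq
    return merged

def train_bpe(word_counts, num_merges):
    """Learn up to `num_merges` merges, most frequent pair first."""
    word_freqs = {tuple(word): count for word, count in word_counts.items()}
    merges = []
    for _ in range(num_merges):
        pairs = get_pair_counts(word_freqs)
        if not pairs:
            break
        best = max(pairs, key=pairs.get)
        merges.append(best)
        word_freqs = merge_pair(best, word_freqs)
    return merges, word_freqs

# Hypothetical word counts.
toy_counts = {"low": 5, "lower": 2, "newest": 6, "widest": 3}
merges, segmented = train_bpe(toy_counts, num_merges=3)
print(merges)
print(segmented)
```

After three merges the shared suffix "est" has become a single symbol, which is the behavior described above: frequent character sequences collapse into one token while rarer words stay split.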

WordPiece, used heavily in Google's BERT, doesn't just look at frequency. It uses a likelihood score to decide which symbols to merge. This tends to preserve slightly finer granularity (about 8-12% higher fertility, meaning more tokens per word), which is great for tasks that need deep token-level detail, though it can bump up computational costs by 10-15%.
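One commonly cited formulation of that likelihood score divides a pair's frequency by the product of its parts' frequencies, so a pair wins when its symbols appear together more often than their individual counts would suggest. The sketch below assumes that formulation and, for simplicity, skips the "##" continuation prefix that BERT-style vocabularies use. The word counts and the `pair_scores` helper are hypothetical.

```python
from collections import Counter

def pair_scores(word_counts):
    """Score each adjacent pair as freq(ab) / (freq(a) * freq(b))."""
    symbol_freq = Counter()
    pair_freq = Counter()
    for word, count in word_counts.items():
        symbols = list(word)
        for s in symbols:
            symbol_freq[s] += count
        for a, b in zip(symbols, symbols[1:]):
            pair_freq[(a, b)] += count
    scores = {
        pair: freq / (symbol_freq[pair[0]] * symbol_freq[pair[1]])
        for pair, freq in pair_freq.items()
    }
    return scores, pair_freq

# Hypothetical word counts.
toy_counts = {"hug": 10, "pug": 5, "pun": 12, "bun": 4, "hugs": 5}
scores, pair_freq = pair_scores(toy_counts)

most_frequent = max(pair_freq, key=pair_freq.get)
best_scored = max(scores, key=scores.get)
print("most frequent pair (what BPE would merge):", most_frequent)
print("best scored pair (what this score would merge):", best_scored)
```

Here the most frequent pair is ("u", "g"), but the score prefers ("g", "s") because "s" almost never appears without a preceding "g". That difference in merge order is where the two algorithms diverge.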

Unigram takes a probabilistic approach. It starts with a massive vocabulary and systematically prunes tokens that don't contribute much to the overall likelihood of the text. If you're working with highly structured data like assembly code, Unigram is a powerhouse. Research shows it can achieve 15-22% better compression efficiency than BPE, meaning the model can "see" more instructions in a single batch.
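To see what "contributing to the likelihood" means, the sketch below segments a string with a Viterbi search over a small vocabulary of token probabilities, then removes one token and measures how much the best achievable likelihood drops. The probabilities and the `viterbi_segment` helper are invented for illustration; a real Unigram trainer estimates probabilities with EM over a large corpus and prunes in batches.

```python
import math

def viterbi_segment(text, log_probs):
    """Return the most likely segmentation of `text` and its log-probability."""
    n = len(text)
    best = [-math.inf] * (n + 1)   # best[i]: best score for text[:i]
    back = [0] * (n + 1)           # back[i]: start index of the last piece
    best[0] = 0.0
    for end in range(1, n + 1):
        for start in range(end):
            piece = text[start:end]
            if piece in log_probs:
                score = best[start] + log_probs[piece]
                if score > best[end]:
                    best[end] = score
                    back[end] = start
    if best[n] == -math.inf:
        raise ValueError("text cannot be segmented with this vocabulary")
    pieces, end = [], n
    while end > 0:
        pieces.append(text[back[end]:end])
        end = back[end]
    return pieces[::-1], best[n]

# Hypothetical token probabilities.
probs = {"h": 0.05, "u": 0.05, "g": 0.05, "s": 0.05,
         "hu": 0.07, "ug": 0.20, "hug": 0.15}
log_probs = {tok: math.log(p) for tok, p in probs.items()}

full_pieces, full_score = viterbi_segment("hugs", log_probs)

# Simulate pruning: drop "hug" and see how much likelihood is lost.
pruned = {tok: lp for tok, lp in log_probs.items() if tok != "hug"}
pruned_pieces, pruned_score = viterbi_segment("hugs", pruned)

print(full_pieces, full_score)
print(pruned_pieces, pruned_score)
print("log-likelihood lost by pruning 'hug':", full_score - pruned_score)
```

A token whose removal barely changes the score is a good pruning candidate, while one that causes a large drop earns its place in the vocabulary.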

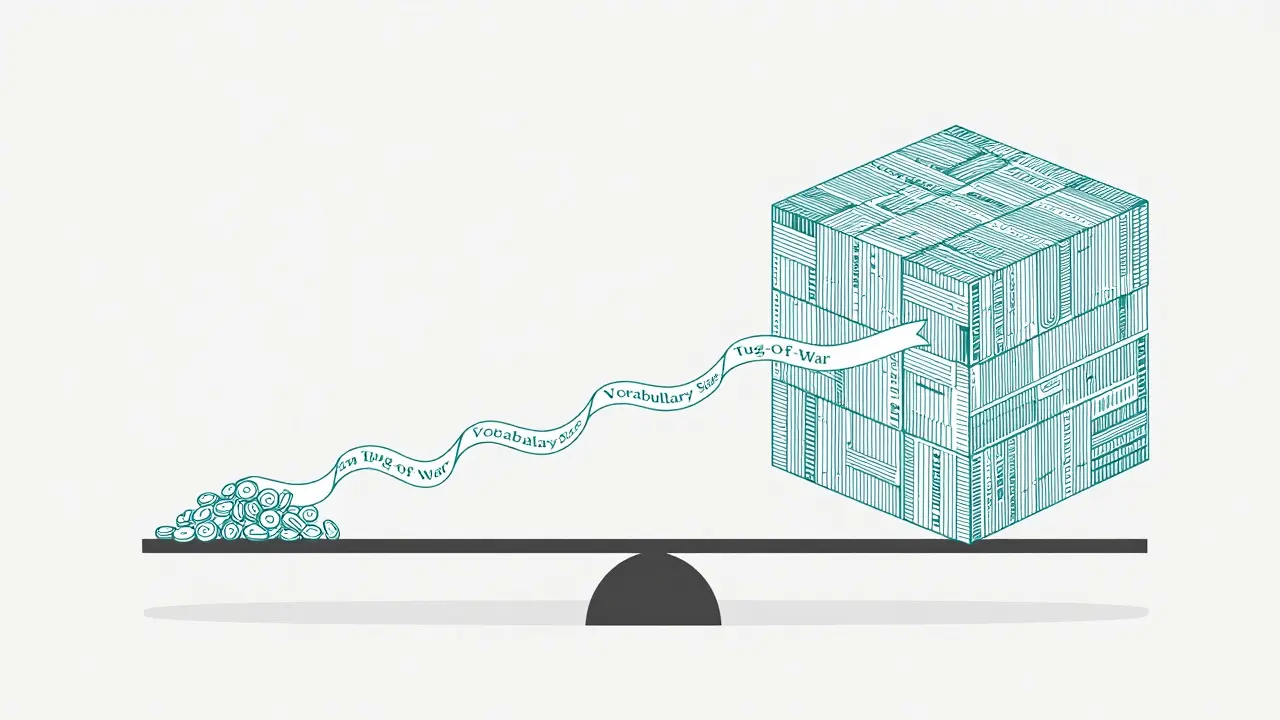

The Vocabulary Size Tug-of-War

Choosing a vocabulary size is a classic engineering trade-off. You're essentially balancing RAM against sequence length. Let's look at the numbers: a small vocabulary (around 3K tokens) can slash your embedding layer's memory overhead by 60%. But there's a catch: your sequences get 25-40% longer because the model has to use more tokens to describe the same sentence.

On the other end, a large vocabulary (like 128K tokens in Llama 3.2) makes sequences 30-45% shorter, which speeds up processing. However, it increases memory usage by 75-90%. In practical terms, if you're building a code generation model, moving from a 32K to a 64K vocabulary can jump your accuracy by 9% simply because the model encounters fewer "unknown" or fragmented tokens in complex function signatures.
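The memory side of this trade-off is simple arithmetic: an embedding table holds one vector per vocabulary entry. The sketch below assumes a hidden size of 4096 and 2 bytes per parameter (fp16), both chosen for illustration, and counts only the input embedding table. A model with an untied output projection would pay roughly the same cost again.

```python
def embedding_bytes(vocab_size, hidden_dim, bytes_per_param=2):
    """Size of one embedding table: vocab_size x hidden_dim parameters."""
    return vocab_size * hidden_dim * bytes_per_param

HIDDEN_DIM = 4096  # assumed for illustration

for vocab_size in (32_000, 64_000, 128_000):
    size_mib = embedding_bytes(vocab_size, HIDDEN_DIM) / 2**20
    print(f"{vocab_size:>7} tokens -> {size_mib:,.0f} MiB")
```

The table grows linearly with the vocabulary, so whether the larger vocabulary pays for itself depends on how much shorter your sequences actually get on your own data.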

The Achilles' Heel: Numerical Representation

One of the most frustrating parts of standard tokenization is how it handles numbers. Most tokenizers treat digits inconsistently. For example, the number "100" might be one token, while "1000" might be split into "10" and "00". This creates embedding inconsistencies that make LLMs struggle with basic arithmetic.

In the real world, this is a deal-breaker for financial apps. One financial analysis model suffered a 12.7% error rate in currency calculations simply because the tokenizer was splitting currency values unpredictably. The fix? Custom numerical tokenization rules. By forcing the tokenizer to treat digits individually or in consistent groups, developers have seen accuracy improvements of up to 18%.
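A digit-splitting rule can be as small as a pre-tokenization regex that runs before the subword algorithm. The sketch below is one possible rule, not a standard: it emits every digit as its own piece, keeps runs of letters together, and isolates punctuation and currency symbols. The pattern and the `pre_tokenize` name are assumptions for illustration.

```python
import re

# Alternatives, tried in order: a single digit, a run of letters,
# a single punctuation or symbol character, or an underscore.
TOKEN_PATTERN = re.compile(r"\d|[^\W\d_]+|[^\w\s]|_")

def pre_tokenize(text):
    """Split text so that every digit becomes its own piece."""
    return TOKEN_PATTERN.findall(text)

pieces = pre_tokenize("Total: $1000.50 vs 100")
print(pieces)
```

With this rule "100" and "1000" are always built from the same single-digit pieces, so the model never sees one number as a single token and a nearby number as an arbitrary split.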

Practical Implementation: How to Choose

If you're setting up a training pipeline today, don't just use the default tokenizer. Follow a systematic approach based on your domain:

- General Purpose: Stick with BPE. It provides the most stable performance across diverse datasets.

- Code or Technical Data: Consider Unigram. The improved compression means you can fit more logic into the model's context window.

- High-Precision Analysis: WordPiece is your best bet for tasks requiring granular token-level insights.

From a workflow perspective, start by collecting a representative corpus of at least 100 million tokens. If you use the Hugging Face tokenizers library, you can prototype different sizes quickly. Be prepared for a learning curve; it usually takes a developer about 15-20 hours of tinkering to truly optimize a custom vocabulary for a specific industry, like healthcare or law, where specialized terminology can otherwise cause massive "out-of-vocabulary" (OOV) issues.

The Future of Tokenization

We are moving away from static dictionaries. The next frontier is adaptive tokenization, where the vocabulary shifts dynamically based on the input content. This could potentially reduce sequence lengths by another 25-35% without losing any meaning. We're also seeing a push toward mathematical encoding. Instead of treating "pi" or "square root" as strings of characters, new prototypes from Google DeepMind are encoding numbers as mathematical expressions, which has already shown a 28% improvement in numerical reasoning in early tests.

Why does vocabulary size affect model accuracy?

Larger vocabularies reduce how often the tokenizer meets a word it has no entry for, the "out-of-vocabulary" (OOV) problem. With subword tokenizers such a word isn't dropped; it gets broken into tiny, often meaningless fragments. A larger vocabulary allows the model to keep more complex words or phrases as single units, which preserves the semantic meaning and improves accuracy in specialized tasks like predicting function signatures in code.

Which tokenizer is best for coding tasks?

Unigram is often superior for coding and assembly language tasks because it offers better compression efficiency. It requires 12-18% fewer tokens per instruction than BPE or WordPiece, allowing the model to process more code within the same context window limit.

What is the 'fertility' of a tokenizer?

Fertility refers to the average number of tokens a tokenizer produces per word. Higher fertility means the tokenizer is more granular (breaking words into more pieces). WordPiece typically has 8-12% higher fertility than BPE, which is beneficial for tasks that need a very detailed breakdown of text but increases the computational load.
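Fertility is easy to measure yourself. The sketch below takes any function that maps a word to a list of tokens and averages tokens per whitespace-separated word. The two toy tokenizers (whole-word and fixed three-character chunks) are stand-ins for illustration; in practice you would pass in the encode step of the tokenizer you are evaluating.

```python
def fertility(tokenize, texts):
    """Average number of tokens produced per whitespace-separated word."""
    total_tokens = 0
    total_words = 0
    for text in texts:
        words = text.split()
        total_words += len(words)
        total_tokens += sum(len(tokenize(word)) for word in words)
    if total_words == 0:
        raise ValueError("no words to measure")
    return total_tokens / total_words

def whole_word(word):
    """Toy tokenizer: never splits, so fertility is exactly 1."""
    return [word]

def chunk3(word):
    """Toy tokenizer: fixed chunks of three characters."""
    return [word[i:i + 3] for i in range(0, len(word), 3)]

sample = ["tokenizers split words"]
print("whole-word fertility:", fertility(whole_word, sample))
print("chunk3 fertility:", fertility(chunk3, sample))
```

Running the same sample through two candidate tokenizers gives a quick, comparable number before you commit to training with either one.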

How do I fix numerical errors in my LLM?

The most effective method is implementing custom numerical tokenization rules. Instead of letting the BPE algorithm split numbers inconsistently (its merges are deterministic, but they follow corpus frequency rather than place value), you can force the tokenizer to treat each digit as an individual token or use a specialized numerical handler. This prevents the embedding inconsistencies that lead to calculation errors.

Does BPE always outperform WordPiece?

Not necessarily. BPE is more versatile for general-purpose chat and text generation. However, WordPiece is often better for discriminative tasks (like classification) where high granularity is an advantage. The choice depends entirely on whether you prioritize versatility or detailed token-level precision.

Artificial Intelligence

Sanjay Mittal

April 6, 2026 AT 13:47

The point about numerical representation is spot on. In my experience, simply treating digits as individual tokens is the most reliable way to stop the model from hallucinating basic arithmetic, especially when dealing with variable-length floats in scientific datasets.

Jeff Napier

April 7, 2026 AT 09:00

prob is they want us to think it's just about "efficiency" but really it's about controlling how the ai perceives reality by limiting its vocabulary the whole thing is a social experiment to see how much we'll trust a black box that can't even count to ten without a custom rule

Sibusiso Ernest Masilela

April 8, 2026 AT 10:36

Imagine actually believing that a simple change in vocabulary size is the silver bullet for "accuracy." It's honestly laughable that some people think tinkering with a BPE merge table for 20 hours constitutes actual engineering. This is basic stuff that any competent developer should have mastered years ago, yet here we are treating it like some divine revelation.

Ronak Khandelwal

April 8, 2026 AT 20:49

This really makes me think about how we structure our own thoughts! 🌟 Just like these models, we have our own internal "tokenizers" for how we perceive the world around us. If we can be more inclusive in how we categorize others, we can expand our own mental vocabulary and see the beauty in the fragments! Let's all keep learning and growing together! ✨🚀

Mike Zhong

April 9, 2026 AT 13:42

The obsession with "compression efficiency" is a symptom of a lazy industry. We're prioritizing hardware limitations over the actual ontological integrity of language. If you're sacrificing semantic nuance just to fit more tokens into a context window, you're not building an intelligence; you're building a sophisticated zip file that can talk back.

Daniel Kennedy

April 10, 2026 AT 10:38

I've seen plenty of people struggle with this, but it's important to remember that the "right" tokenizer depends entirely on your specific domain data. For those of you just starting out, don't get intimidated by the math; just start with BPE and iterate based on your error logs. It's a learning process for everyone, so let's be patient and helpful as we figure this out together.

Salomi Cummingham

April 11, 2026 AT 21:04

Oh my goodness, I cannot even begin to describe the absolute sheer agony of watching a model fail at a simple currency conversion because of a tokenizer split! It is truly a tragedy of epic proportions when you've spent weeks cleaning your data and the whole thing collapses because the number 1000 decided to split itself into two completely different entities! It's simply heartbreaking for any developer who cares about the quality of their output, and I honestly feel for anyone who has had to spend those grueling twenty hours of tinkering just to make the numbers behave themselves!

Jamie Roman

April 13, 2026 AT 12:48

I've been spending a lot of time lately just pondering the implications of adaptive tokenization and how it might fundamentally change the way we interact with these systems, since if the vocabulary can shift on the fly, it almost feels like the model is developing a more fluid form of consciousness or at least a more nuanced way of grasping the specific context of a conversation, which is something I've always found fascinating in a way that makes me want to dive deeper into the research even though it's a bit overwhelming at times.

Taylor Hayes

April 14, 2026 AT 14:54

That's a really interesting perspective on the fluidity of language. It sounds like you're really passionate about where this is going, and I think it's great to keep that sense of curiosity alive while we navigate these technical hurdles.