Picking the right infrastructure to serve your Large Language Model (LLM) can be the difference between a snappy, scalable app and a sluggish system that crashes at the first sign of real traffic. If you're building an API, you've likely narrowed your choices down to two: vLLM, an open-source library developed at UC Berkeley for high-throughput LLM inference and serving, and TGI (Text Generation Inference), a toolkit created by Hugging Face for deploying and serving large language models in production. While both do the same basic job (taking a model and turning it into an API), they handle memory and scheduling in fundamentally different ways.

The core struggle in LLM serving is the "KV cache." This is the memory used to store the context of a conversation so the model doesn't have to re-process everything for every new token. Managing this cache is where the battle between vLLM and TGI is won or lost. If you need raw speed and the ability to handle hundreds of users at once, one is a clear winner. If you need a stable, "out-of-the-box" experience that integrates with the world's most popular model hub, the other might be your best bet.
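To get a feel for why the KV cache dominates VRAM, here is a rough back-of-the-envelope sketch. It assumes a LLaMA-2-7B-like shape (32 layers, 32 KV heads, head dimension 128, fp16 values); those numbers and the function name are illustrative assumptions, not something either framework exposes.

```python
def kv_cache_bytes_per_token(num_layers: int, num_kv_heads: int,
                             head_dim: int, bytes_per_value: int = 2) -> int:
    """Bytes of KV cache one token occupies.

    Each layer stores one key vector and one value vector (hence the factor 2)
    for every KV head.
    """
    return 2 * num_layers * num_kv_heads * head_dim * bytes_per_value


# Assumed LLaMA-2-7B-like shape: 32 layers, 32 KV heads, head_dim 128, fp16.
per_token = kv_cache_bytes_per_token(num_layers=32, num_kv_heads=32, head_dim=128)
per_sequence = per_token * 2048  # one full 2,048-token context

print(per_token / 2**20, "MiB per token")            # 0.5
print(per_sequence / 2**30, "GiB per full sequence")  # 1.0
```

Under these assumptions a single full-length conversation costs about 1 GiB of cache, which is why the way that memory is handed out matters so much.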

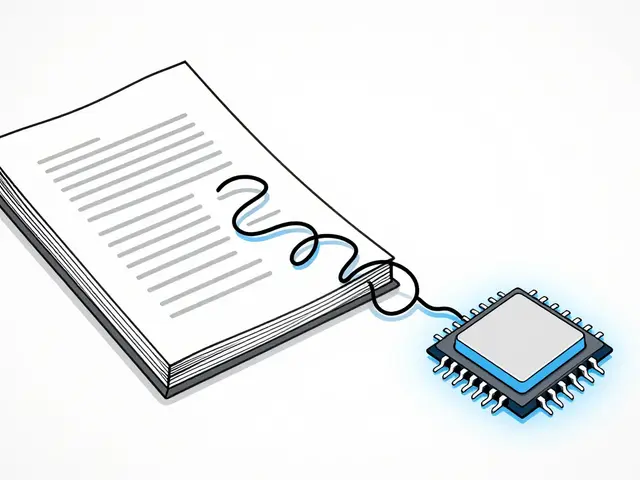

The Secret Sauce: PagedAttention vs. Contiguous Memory

To understand why these two perform differently, we have to look at how they use your GPU VRAM. TGI uses a traditional contiguous memory approach. This means it pre-allocates a big chunk of memory based on the maximum sequence length you've set. If your model is set for 2,048 tokens but the user only asks a one-sentence question, the rest of that allocated memory just sits there wasted. This is called internal fragmentation.
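The waste from pre-allocation is easy to put a number on. The sketch below is only arithmetic on the scenario described above; the 80-token exchange is a made-up example.

```python
def wasted_fraction(max_seq_len: int, tokens_used: int) -> float:
    """Fraction of a pre-allocated KV slot that is reserved but never written."""
    if not 0 <= tokens_used <= max_seq_len:
        raise ValueError("tokens_used must be between 0 and max_seq_len")
    return (max_seq_len - tokens_used) / max_seq_len


# Hypothetical short exchange: 30 prompt tokens plus 50 generated tokens.
waste = wasted_fraction(max_seq_len=2048, tokens_used=80)
print(f"{waste:.1%} of the slot is unused")  # 96.1% of the slot is unused
```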

vLLM solves this with PagedAttention, an attention algorithm that manages the KV cache by splitting it into fixed-size blocks, similar to how virtual memory works in an operating system. Instead of reserving one giant block per request, vLLM assigns memory on demand as a sequence grows. This removes most of the fragmentation and lets the system pack far more requests into the same amount of VRAM. In real-world terms, vLLM can reduce memory consumption by 19% to 27% compared to TGI. That's not just a technical stat; it means you can either use cheaper GPUs or serve more users on the hardware you already have.
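The block idea can be sketched in a few lines. This is a toy allocator written for illustration, not vLLM's actual implementation; the class name, the block size of 16, and the sequence IDs are all assumptions.

```python
class PagedKVAllocator:
    """Toy block allocator: a sequence gets a new fixed-size block only when
    its last block is full, so unused capacity is at most one block."""

    def __init__(self, num_blocks: int, block_size: int = 16):
        self.block_size = block_size
        self.free_blocks = list(range(num_blocks))
        self.block_tables = {}  # seq_id -> list of physical block ids
        self.lengths = {}       # seq_id -> number of tokens stored

    def append_token(self, seq_id: str) -> None:
        table = self.block_tables.setdefault(seq_id, [])
        length = self.lengths.get(seq_id, 0)
        if length == len(table) * self.block_size:  # no block yet, or last one full
            if not self.free_blocks:
                raise MemoryError("no free KV blocks")
            table.append(self.free_blocks.pop())
        self.lengths[seq_id] = length + 1

    def free(self, seq_id: str) -> None:
        """Return all of a finished sequence's blocks to the pool."""
        self.free_blocks.extend(self.block_tables.pop(seq_id, []))
        self.lengths.pop(seq_id, None)


alloc = PagedKVAllocator(num_blocks=8, block_size=16)
for _ in range(20):
    alloc.append_token("chat-a")  # 20 tokens -> 2 blocks
for _ in range(5):
    alloc.append_token("chat-b")  # 5 tokens -> 1 block

print(alloc.block_tables)
print(len(alloc.free_blocks), "blocks still free")  # 5 blocks still free
```

The block table is what plays the role of a page table here: logical token positions map to physical blocks that do not need to sit next to each other in memory.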

Throughput and the Concurrency Wall

If you're running a batch processing job or a popular public API, vLLM is the powerhouse here. Because of its efficient memory management, it can handle massive amounts of concurrency without breaking a sweat. In benchmarks using LLaMA-2-7B, vLLM hit a peak throughput of 15,243 tokens per second with 100 concurrent requests. TGI, while capable, managed about 4,156 tokens per second in the same scenario.

The gap gets even wilder as you push the system to the limit. At 200 concurrent requests, vLLM can deliver up to 24x the throughput of TGI. TGI tends to hit a "saturation point" much earlier, usually around 50-75 concurrent requests, where memory constraints start causing a performance dip. vLLM, however, keeps scaling linearly up to 100-150 requests before it finally starts to plateau.
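It helps to turn the quoted 100-request figures into a ratio and a per-request rate before comparing them with the headline multiplier. The sketch below only does arithmetic on the two numbers cited above; the function names are hypothetical.

```python
def throughput_ratio(a_tokens_per_s: float, b_tokens_per_s: float) -> float:
    """How many times more tokens per second system A delivers than system B."""
    return a_tokens_per_s / b_tokens_per_s


def per_request_rate(tokens_per_s: float, concurrency: int) -> float:
    """Average tokens per second each concurrent request receives."""
    return tokens_per_s / concurrency


# Figures quoted for LLaMA-2-7B at 100 concurrent requests.
ratio = throughput_ratio(15_243, 4_156)
vllm_each = per_request_rate(15_243, 100)
tgi_each = per_request_rate(4_156, 100)

print(f"{ratio:.2f}x aggregate throughput")        # 3.67x aggregate throughput
print(f"{vllm_each:.1f} vs {tgi_each:.1f} tokens/s per request")
```

So the gap at 100 concurrent requests works out to roughly 3.7x; the "up to 24x" figure describes the far end of the load curve, not the typical case.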

| Metric | vLLM | TGI (Hugging Face) |
|---|---|---|
| Memory Management | PagedAttention (Dynamic) | Contiguous (Pre-allocated) |
| Peak Throughput | Extremely High (up to 24x TGI) | Moderate |
| Time-to-First-Token (TTFT) | Higher variance | Lower (Faster initial response) |
| Memory Efficiency | 19-27% better | Standard |
| Configuration | More granular/complex | Simple/Streamlined |

Latency: The Trade-off Between Speed and Consistency

Throughput isn't everything. If you're building a real-time chat app, you care about latency: how fast the first word appears and how smoothly the rest follow. This is where TGI holds its own. TGI generally offers a lower Time-to-First-Token (TTFT) in low-concurrency settings, often 1.3x to 2x faster than vLLM. This makes it feel more responsive for a single user.

The reason for this lies in their scheduling. vLLM uses continuous batching, which allows new requests to join the batch immediately at the iteration level rather than waiting for the previous batch to finish. This maximizes GPU usage, but it introduces more variance in how long an individual request takes. TGI uses dynamic batching with batch-level scheduling, which is a bit slower overall but provides more predictable, consistent latency.
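The difference between iteration-level and batch-level admission is easier to see in a toy simulation. The sketch below is not either framework's scheduler; it assumes one token per request per step, and the three requests are invented.

```python
def simulate(requests, max_batch, iteration_level):
    """Return the step at which each request finishes.

    requests: list of (arrival_step, tokens_to_generate), tokens >= 1.
    iteration_level=True admits waiting requests at every decode step;
    False admits only once the running batch has fully drained.
    """
    pending = sorted(range(len(requests)), key=lambda i: requests[i][0])
    running = {}      # request index -> tokens still to generate
    finished_at = {}
    step = 0
    while len(finished_at) < len(requests):
        if iteration_level or not running:
            while (pending and requests[pending[0]][0] <= step
                   and len(running) < max_batch):
                idx = pending.pop(0)
                running[idx] = requests[idx][1]
        for idx in list(running):  # one decode iteration for the whole batch
            running[idx] -= 1
            if running[idx] == 0:
                finished_at[idx] = step + 1
                del running[idx]
        step += 1
    return [finished_at[i] for i in range(len(requests))]


# One long request arrives first, two short ones arrive a step later.
reqs = [(0, 8), (1, 2), (1, 2)]
print(simulate(reqs, max_batch=4, iteration_level=True))   # [8, 3, 3]
print(simulate(reqs, max_batch=4, iteration_level=False))  # [8, 10, 10]
```

In this toy case the short requests finish at step 3 when they can join mid-flight, but wait until step 10 when they have to queue behind the long one.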

However, when you look at the "tail latency" (the p99, or the slowest 1% of requests), vLLM usually wins. It's 1.5x to 1.7x better at preventing those random, long delays that frustrate users. For massive 70B parameter models, both frameworks struggle with latency, but vLLM's tail performance remains more stable.
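If you want to check tail latency on your own measurements, the standard library is enough. This is a small sketch; the sample latencies are made up to show how a couple of slow requests move the p99 while leaving the median untouched.

```python
import statistics


def p99(latencies_ms):
    """99th percentile of a list of latencies (needs at least two samples)."""
    # quantiles(n=100) returns 99 cut points; index 98 is the 99th percentile.
    return statistics.quantiles(latencies_ms, n=100, method="inclusive")[98]


# Hypothetical sample: 98 fast requests and 2 stragglers.
samples = [100.0] * 98 + [2000.0] * 2

print("median:", statistics.median(samples))
print("mean:  ", statistics.mean(samples))
print("p99:   ", p99(samples))
```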

Deployment: Ecosystem vs. Control

TGI is the "golden path" for anyone already using the Hugging Face ecosystem. If your models are hosted there, deploying TGI is almost trivial. You can fire up a Docker container with a simple command like text-generation-launcher --model-id /models/myllama70b and you're live. TGI also comes with built-in production features like OpenTelemetry and Prometheus metrics, meaning you can monitor your GPU health and request rates without building your own dashboard from scratch.
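Once a TGI container is up, talking to it is a plain HTTP call. Here is a minimal client sketch using only the standard library; the base URL and prompt are assumptions, and the helper names are hypothetical. It targets TGI's /generate route, which takes an "inputs" string plus a "parameters" object.

```python
import json
import urllib.request


def build_generate_request(base_url: str, prompt: str,
                           max_new_tokens: int = 64) -> urllib.request.Request:
    """Build (but do not send) a POST request for TGI's /generate route."""
    body = {"inputs": prompt, "parameters": {"max_new_tokens": max_new_tokens}}
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/generate",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(base_url: str, prompt: str) -> str:
    """Send the request; only works with a TGI server actually listening."""
    request = build_generate_request(base_url, prompt)
    with urllib.request.urlopen(request, timeout=60) as response:
        return json.loads(response.read())["generated_text"]


# Assumed local deployment; adjust host and port to match your container.
req = build_generate_request("http://localhost:8080", "What is the KV cache?")
print(req.get_method(), req.full_url)
```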

vLLM requires a bit more elbow grease. You'll need to be more specific with your configuration, such as defining the tensor-parallel-size to split a model across multiple GPUs. While the setup is more technical, it gives you far more control over the optimization parameters. If you're a performance engineer who wants to squeeze every last drop of power out of an H100, vLLM's granular tuning surface is a huge advantage.
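To make that configuration surface concrete, here is a small sketch that assembles a vLLM server command line. It assumes a recent vLLM release that ships the vllm serve entry point; the model path is a placeholder reused from the TGI example, and the helper name is hypothetical.

```python
import shlex
from typing import List, Optional


def vllm_serve_command(model: str, tensor_parallel_size: int = 1,
                       max_model_len: Optional[int] = None) -> List[str]:
    """Build the argv for launching a vLLM OpenAI-compatible server."""
    if tensor_parallel_size < 1:
        raise ValueError("tensor_parallel_size must be at least 1")
    cmd = ["vllm", "serve", model,
           "--tensor-parallel-size", str(tensor_parallel_size)]
    if max_model_len is not None:
        cmd += ["--max-model-len", str(max_model_len)]
    return cmd


# Placeholder model path; split across 4 GPUs with a 4,096-token context cap.
cmd = vllm_serve_command("/models/myllama70b", tensor_parallel_size=4,
                         max_model_len=4096)
print(shlex.join(cmd))
```

Building the command as a list keeps it safe to pass to subprocess.run without shell quoting surprises.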

Decision Matrix: Which One Do You Actually Need?

Stop looking at the benchmarks and look at your actual users. Your choice depends on the specific "job" your API needs to do:

- Batch Processing: If you're processing 10,000 documents overnight, use vLLM. The throughput advantage is too large to ignore.

- Low-Traffic Interactive Chat: If you have a few internal users and want the fastest possible response time for the first token, TGI is a great, simple choice.

- High-Traffic Public API: If you expect thousands of concurrent users, vLLM is the only way to keep costs down and avoid massive latency spikes.

- RAG Backends: For Retrieval-Augmented Generation, vLLM's memory efficiency helps when dealing with the long contexts typical of RAG. However, if your entire pipeline is built on Hugging Face tools, TGI's integration might save you more in developer time than you'd gain in raw speed.

- Memory-Constrained Hardware: If you're trying to fit a model onto a GPU that's *just* barely large enough, vLLM's PagedAttention gives you a fighting chance.

It's also worth noting that the landscape is moving fast. Other options like TensorRT-LLM from NVIDIA offer extreme optimization for specific hardware, and llama.cpp remains the king for local CPU or edge deployments. But for standard cloud GPU deployments, the vLLM vs TGI choice covers most bases.

Is vLLM always faster than TGI?

Not necessarily. vLLM is significantly faster in terms of throughput (total tokens per second across many users), but TGI often has a faster Time-to-First-Token (TTFT) for a single request in low-concurrency environments. Choose vLLM for scale and TGI for low-load responsiveness.

Do I need a lot of GPUs to run vLLM?

Not necessarily, but vLLM shines when you use distributed inference. By adjusting the tensor-parallel-size, you can split large models across multiple GPUs. However, thanks to PagedAttention, vLLM is actually more memory-efficient than TGI, often allowing you to run larger batches on a single GPU than TGI would allow.

Which framework is easier to set up?

TGI is generally easier to deploy, especially if you are already using Hugging Face. Its CLI is simpler and the Docker integration is very streamlined. vLLM requires more manual configuration of parameters like tensor parallelism and scheduling limits to get the best performance.

What is the main difference in how they handle memory?

The main difference is PagedAttention. TGI allocates contiguous memory for the KV cache, which leads to waste (fragmentation). vLLM uses a paging system that allocates memory in small blocks on demand, reducing memory consumption by 19-27% and allowing for much higher batch sizes.

Can I use both in the same project?

Yes, you could use TGI for a highly responsive "pilot" feature and vLLM for a heavy-duty batch processing backend. However, most teams pick one to simplify their infrastructure and monitoring stack.

Next Steps for Your Deployment

If you're still undecided, start with a simple stress test. Deploy a small model like Llama-3-8B on both frameworks using a tool like Locust or JMeter. Simulate your expected user load: if you're expecting a steady stream of a few users, TGI's simplicity and low initial latency will win. If you expect sudden spikes of hundreds of users, you'll see vLLM maintain its stability while TGI begins to lag.
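If you'd rather start without installing a load-testing tool, a bare-bones concurrency harness is a few lines of asyncio. This is a sketch, not a replacement for Locust or JMeter; fake_send is a stand-in you would swap for a real HTTP call to your vLLM or TGI endpoint.

```python
import asyncio
import time


async def run_load(send, concurrency: int, total_requests: int) -> list:
    """Call `send(i)` total_requests times with at most `concurrency` in flight.

    Returns the latency of each call in seconds.
    """
    semaphore = asyncio.Semaphore(concurrency)
    latencies = []

    async def one_request(i: int) -> None:
        async with semaphore:
            start = time.perf_counter()
            await send(i)
            latencies.append(time.perf_counter() - start)

    await asyncio.gather(*(one_request(i) for i in range(total_requests)))
    return latencies


async def fake_send(i: int) -> None:
    # Stand-in for a real request; replace with your HTTP client call.
    await asyncio.sleep(0.01)


latencies = asyncio.run(run_load(fake_send, concurrency=10, total_requests=50))
print(f"{len(latencies)} requests, slowest {max(latencies) * 1000:.1f} ms")
```

Run it at a few concurrency levels (say 5, 50, and 200) and watch where latency starts to climb for each framework.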

For those scaling to 70B+ models, prioritize vLLM's distributed inference capabilities. Be prepared to spend time tuning the max_model_len and block_size to optimize your VRAM usage. If you find yourself spending more time on infrastructure than on your product, moving toward TGI's managed ecosystem might be the right call, even if you sacrifice some raw throughput.
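A quick way to reason about max_model_len and block_size is to count how many full-length sequences fit in your KV budget. The sketch below is planning arithmetic only; the 40 GiB budget is hypothetical, and the per-token figure assumes a Llama-2-70B-like shape (80 layers, 8 KV heads, head dimension 128, fp16).

```python
import math


def blocks_per_sequence(max_model_len: int, block_size: int) -> int:
    """Blocks a sequence needs if it grows to the full context length."""
    return math.ceil(max_model_len / block_size)


def max_full_length_sequences(kv_budget_bytes: int, bytes_per_token: int,
                              max_model_len: int, block_size: int) -> int:
    """Worst-case number of sequences that fit if every one hits max_model_len."""
    total_blocks = kv_budget_bytes // (bytes_per_token * block_size)
    return total_blocks // blocks_per_sequence(max_model_len, block_size)


# Assumed: 2 (K and V) * 80 layers * 8 KV heads * 128 head_dim * 2 bytes.
BYTES_PER_TOKEN = 2 * 80 * 8 * 128 * 2
KV_BUDGET = 40 * 2**30  # hypothetical 40 GiB left for cache across all GPUs

for max_len in (4096, 8192):
    fit = max_full_length_sequences(KV_BUDGET, BYTES_PER_TOKEN, max_len, block_size=16)
    print(f"max_model_len={max_len}: {fit} full-length sequences")
```

Halving max_model_len doubles the worst-case headroom here, which is the trade-off you are tuning; real traffic rarely fills every sequence, so actual concurrency is usually higher.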

Artificial Intelligence

Kieran Danagher

April 7, 2026 AT 05:49

Imagine actually caring about TTFT when your system is choking on 10 requests per second because you chose the "simple" path. vLLM basically makes TGI look like a calculator from the 80s in terms of memory handling. If you're okay with burning money on extra VRAM just to avoid a bit of config, by all means, go for the Hugging Face huggy-wuggy experience.

Shivam Mogha

April 8, 2026 AT 16:09

vLLM is the better choice for scale.

poonam upadhyay

April 10, 2026 AT 12:43

Omg!!! The sheer audacity of calling TGI "stable" when it's basically a memory-leaking dumpster fire compared to PagedAttention!!! 🙄 Honestly, if you're still using contiguous memory in 2026, you're basically a dinosaur in a tutu!!! Absolute clown show!!!

Kieran Danagher

April 10, 2026 AT 17:59

Finally, someone recognizes the prehistoric nature of contiguous allocation. It's practically an art form in inefficiency at this point.

mani kandan

April 11, 2026 AT 02:21

The dichotomy between throughput and responsiveness is a splendidly intricate dance. While vLLM offers a kaleidoscopic array of performance gains, the seamless integration of TGI provides a certain serene elegance for smaller projects. It is a magnificent trade-off between raw power and operational tranquility.

Sheetal Srivastava

April 12, 2026 AT 03:26

It's honestly exhausting how people keep ignoring the stochastic nature of token generation in these comparisons. The latent space optimization in vLLM is obviously superior because it leverages a non-linear mapping of the KV cache, which any real architect would find intuitive. If you're struggling with the tensor-parallel-size configuration, perhaps you're simply not operating at a high enough cognitive level for production-grade deployments. Most of these "developers" are just playing with API wrappers while ignoring the actual linear algebra happening in the GPU kernels. It's a tragedy, really, that the industry prioritizes "ease of use" over the rigorous mathematical elegance of dynamic paging. I find the obsession with TTFT to be a pedestrian metric that ignores the broader systemic throughput bottlenecks. We are talking about asymptotic complexity here, not just whether a chat bot feels "snappy" to a layman. The architectural superiority of vLLM is an objective fact, yet we treat it like a mere "option" for the brave. The cognitive load of setup is a filter for competence. If you can't handle a few CLI flags, you have no business managing an H100 cluster. I've seen more sophisticated memory management in a toy program than in some of the TGI implementations I've audited recently. It is simply an exercise in mediocrity.

Anand Pandit

April 13, 2026 AT 05:55

I think both are great tools for different needs! vLLM is definitely a game-changer for those of us running heavier workloads, but TGI's ease of setup is a huge win for beginners. It's all about finding the right fit for your specific project.

Rahul Borole

April 14, 2026 AT 04:09

I strongly encourage all engineers to rigorously evaluate their concurrency requirements before selecting a framework. The implementation of PagedAttention provides a formidable advantage in resource utilization that cannot be overlooked in a professional production environment. It is imperative to optimize for the p99 latency to ensure a premium user experience.

ujjwal fouzdar

April 14, 2026 AT 17:19

The struggle of the GPU is like the struggle of the soul. We seek the void of zero latency but find only the fragmentation of our own hopes. vLLM is the path to enlightenment, while TGI is the comfort of a gilded cage.

OONAGH Ffrench

April 15, 2026 AT 15:20

efficiency is a quiet virtue in the noise of benchmarks... the flow of tokens reflects the architecture of thought... vLLM just makes more sense for the long term