Tag: vLLM

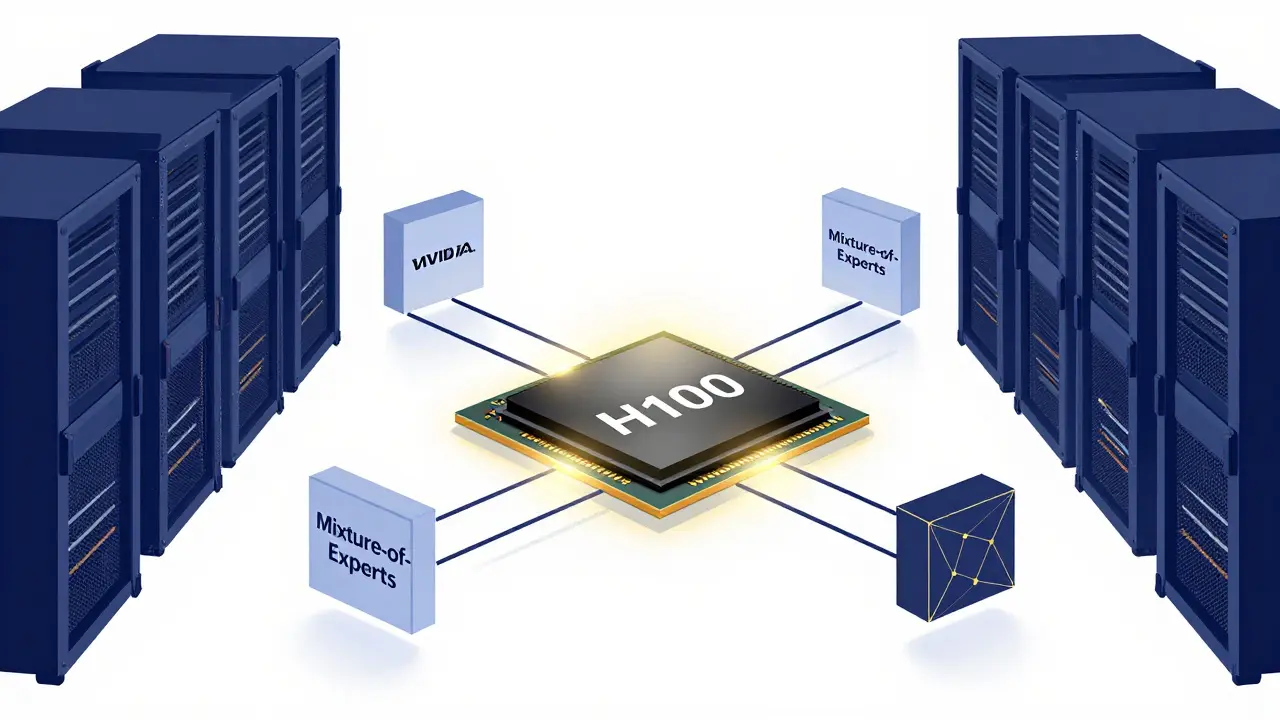

Learn how to scale open-source LLMs in 2026. Explore hardware needs for gpt-oss-120b, the role of SLMs, and professional serving stacks using vLLM and SGLang.

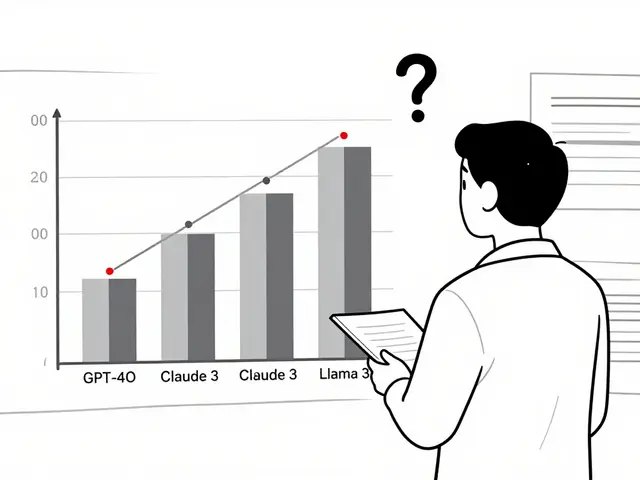

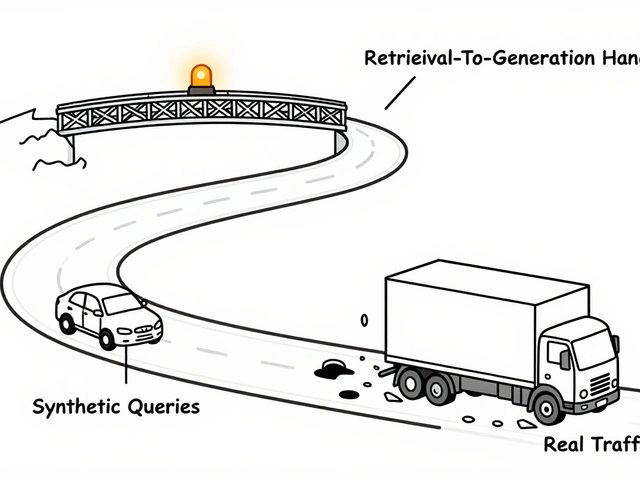

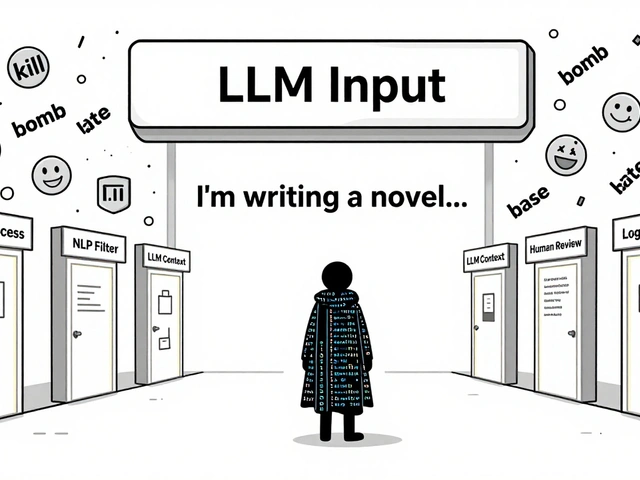

Compare vLLM and TGI for LLM serving. Learn about PagedAttention, throughput benchmarks, and which framework fits your API's latency and scale needs.

Recent posts

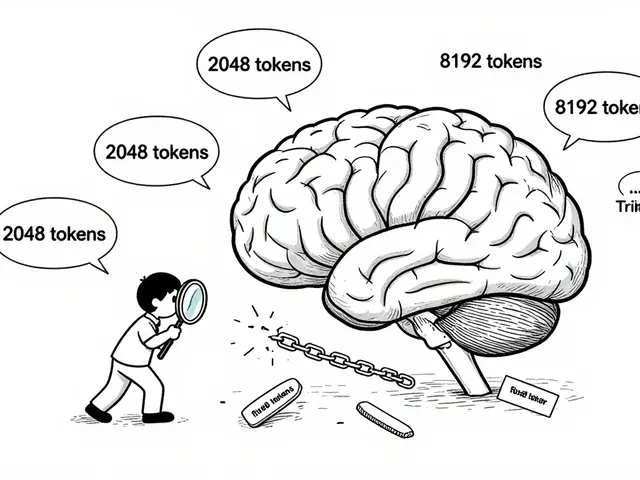

Key Components of Large Language Models: Embeddings, Attention, and Feedforward Networks Explained

Sep 1, 2025

Artificial Intelligence