The era of blindly chasing parameter counts is over. In 2026, the real battle in AI isn't about who has the biggest model, but who can actually run it efficiently without burning through their entire annual budget in a week. For years, enterprises relied on the convenience of closed APIs, but the gap between proprietary giants and open-weight models has virtually disappeared. With the release of gpt-oss-120b is a Mixture-of-Experts (MoE) model with 117 billion parameters released by OpenAI under the Apache 2.0 license, the power dynamic has shifted. You no longer need to hand over your data to a third-party provider to get frontier-level reasoning.

The Hardware Reality: Why Bigger Isn't Always Better

If you look at the download data from the Hugging Face ATOM Project, a striking pattern emerges: smaller models are deployed at far higher rates than the massive ones. While the average size of downloaded models has climbed to around 20.8 billion parameters, the median remains much lower. This tells us that while a few power users are pulling massive weights, most of the world is optimizing for the "edge." Why? Because cost, latency, and hardware availability are the real bottlenecks.

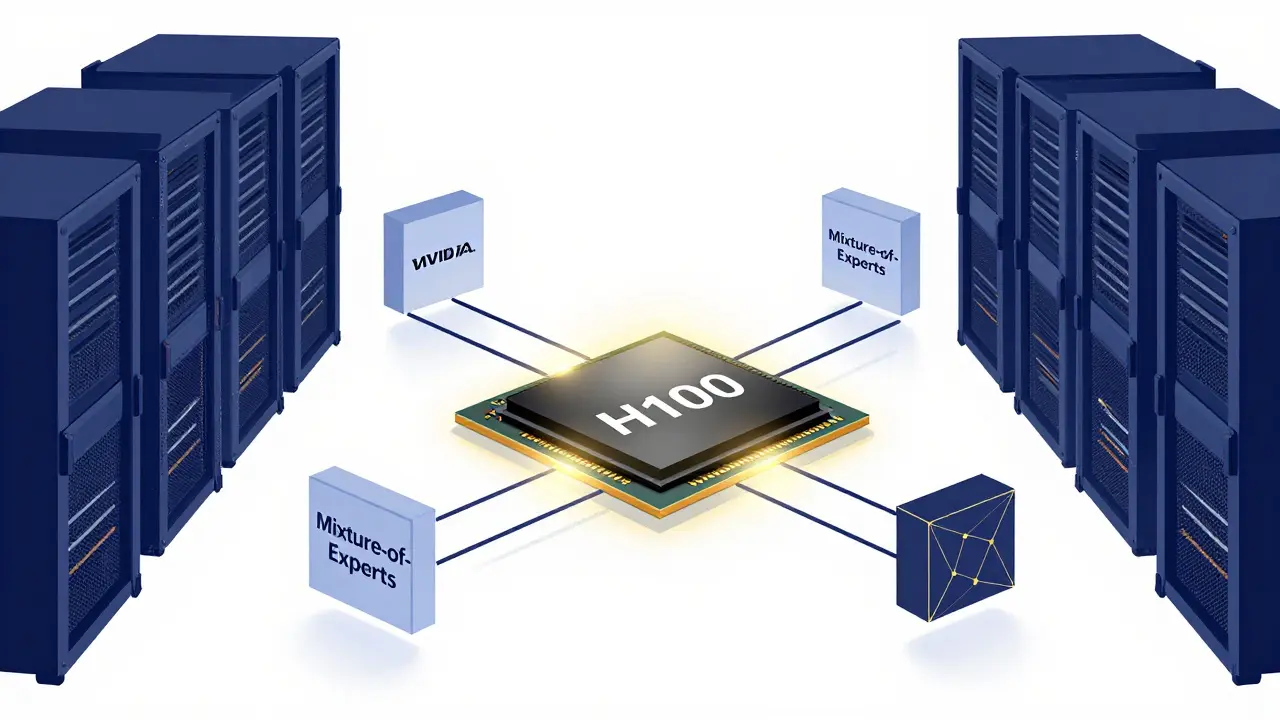

To scale effectively, you need to understand your memory constraints. A model like gpt-oss-120b is designed to fit on a single 80GB GPU, such as an NVIDIA H100 or an AMD MI300X. This is a game-changer. Previously, a 100B+ parameter model would require a complex cluster of GPUs, driving up both the cost and the latency due to inter-GPU communication. By utilizing Mixture-of-Experts (MoE), where only a fraction of the parameters are active for any given token, we get the intelligence of a massive model with the compute cost of a much smaller one.

| Model Tier | Parameter Range | Primary Use Case | Hardware Requirement |

|---|---|---|---|

| SLMs | < 10B | Tagging, Summarization | Consumer GPUs / Edge |

| Mid-Tier | 10B - 70B | Coding, Complex Reasoning | A100 / H100 (Single/Dual) |

| Frontier Open | 100B+ | Strategic R&D, High-End Logic | H100 / MI300X (80GB+) |

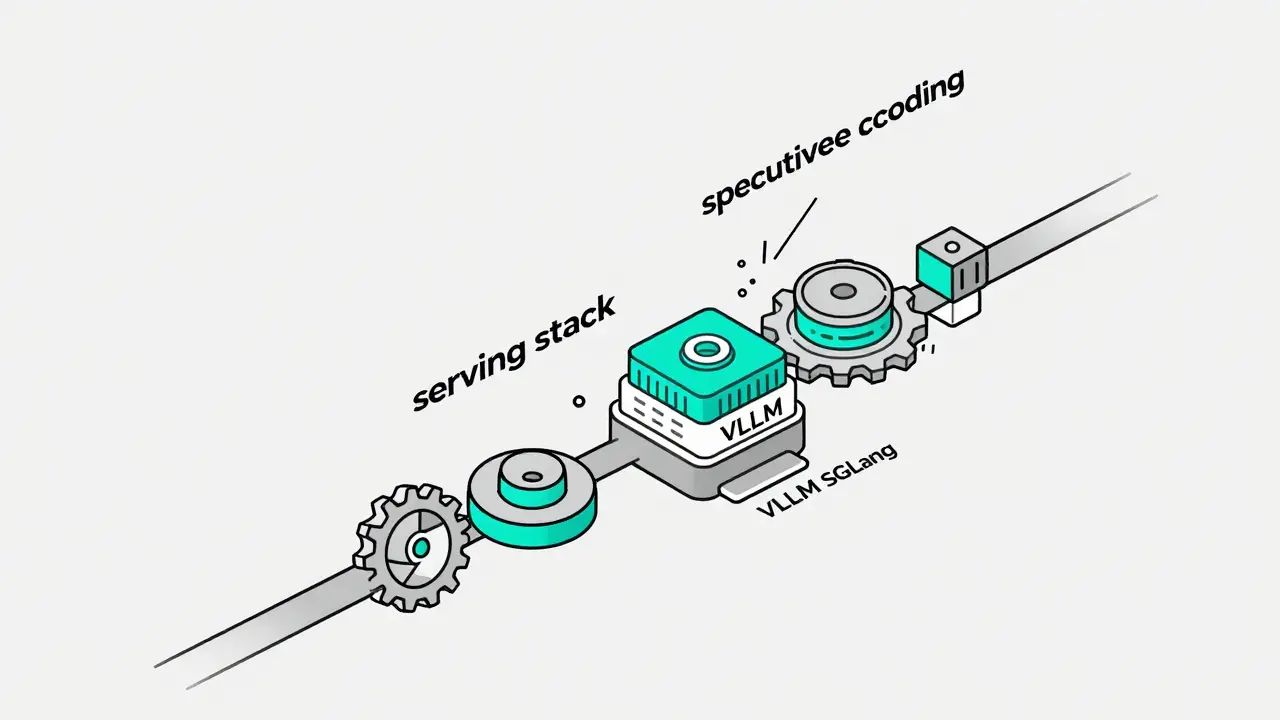

Optimizing the Serving Stack

Hardware is only half the battle. If you just load a model into VRAM and call it a day, you're leaving 70% of your performance on the table. Modern scaling requires a dedicated serving stack. This is where vLLM and SGLang come into play. These frameworks implement continuous batching and speculative decoding, which drastically reduce the time a GPU spends idling while waiting for the next token.

One of the biggest headaches in scaling is the KV cache. As your context window grows-especially with agentic workflows that remember long conversations-the memory required to store previous tokens skyrockets. If you don't manage this, you'll hit "Out of Memory" (OOM) errors long before you hit your compute limit. To combat this, the industry is moving toward Right-Sizing. Instead of just adding more GPUs, we're using attention head pruning and sparsity-based modeling to remove redundant components that don't contribute to the final answer.

When monitoring these stacks, forget about simple "uptime" percentages. You need to track three specific metrics to know if your system is actually usable:

- Time to First Token (TTFT): How long does the user wait before the first word appears? If this is high, your prompt processing is the bottleneck.

- Inter-Token Latency (ITL): The speed of the stream. If this stutters, your GPU memory bandwidth is likely maxed out.

- Token Throughput: The total volume of text processed per second across all users. This determines your cost-per-request.

The Rise of Small Language Models (SLMs)

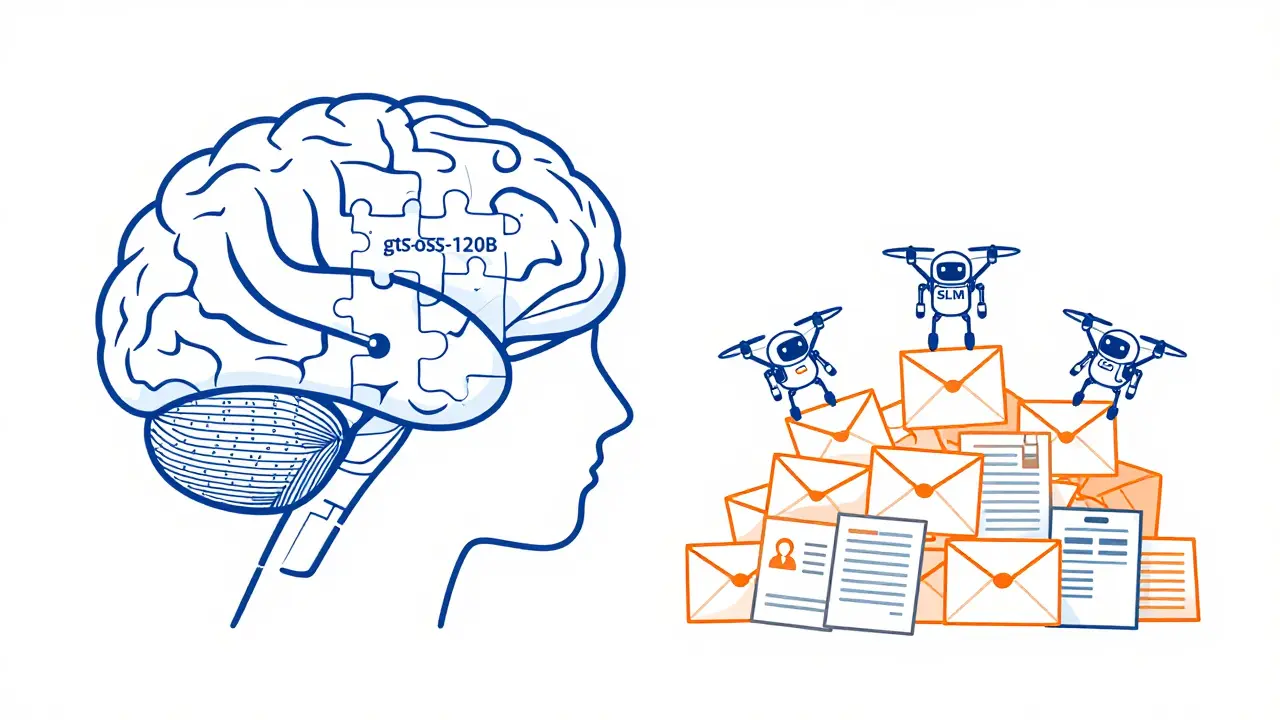

There is a pervasive myth that you need a massive model for every task. In reality, using a 120B parameter model to classify a support ticket is like using a semi-truck to deliver a single envelope. Small Language Models (SLMs) have become the high-efficiency workhorses of the enterprise. These are compact models, often under 10B parameters, engineered for surgical precision in repeatable workflows.

For instance, a healthcare provider doesn't need a general-purpose frontier model to summarize a patient's visit notes. An SLM, fine-tuned on medical terminology and clinical formatting, can do the job faster, cheaper, and more privately. This is why the most successful deployments in 2026 use a hybrid strategy. They use a strong model like gpt-oss-120b for complex reasoning and architectural planning, but route 90% of the high-volume operational work to a specialized SLM running on the edge.

Customization Playbook: Moving Beyond the Prompt

If you're only using prompt engineering, you're barely scratching the surface of what open-source LLMs can do. The real edge comes from parameter-efficient fine-tuning (PEFT) and the use of adapters. Instead of retraining a massive model-which would cost millions-enterprises are using small "adapter" modules that sit on top of the base model. This allows a company to bake in its brand voice or industry-specific jargon without altering the core weights of the model.

For those scaling from zero to production, here is the 2026 deployment playbook:

- Curate a Model Duo: Don't pick just one. Select one high-efficiency SLM for cost-optimized tasks and one frontier model (like gpt-oss-120b) for deep reasoning.

- Define Ownership: Decide explicitly where the weights live. If data sensitivity is paramount-like in insurance underwriting-keep the models in your own data center. If speed of iteration is key, use a private cloud instance.

- Target High-Value Control Use Cases: Start with areas where the "API approach" fails. Focus on things like healthcare triage or field operations where low latency and data privacy are non-negotiable.

Open-Source vs. Proprietary: The Final Calculation

The convenience of a closed API-like GPT-5.3 or Claude Sonnet 4-is undeniable. You get a URL, an API key, and it just works. But that convenience is a trap for scaling enterprises. Vendor lock-in means you are at the mercy of their pricing changes and "model drift," where a provider updates the model and suddenly your carefully crafted prompts stop working.

By scaling open-source, you eliminate that risk. You gain the ability to adjust reasoning levels-some models now let you toggle between low, medium, and high reasoning depth to save on compute. You also gain total privacy. When you run a model on your own hardware, the data never leaves your network. In a world of increasing regulation, that isn't just a technical advantage; it's a legal necessity.

What is the main advantage of gpt-oss-120b over proprietary models?

The primary advantage is full control over deployment and the elimination of vendor lock-in. Because it is an open-weight model under the Apache 2.0 license, enterprises can fine-tune it on private data and run it on their own hardware (like a single 80GB GPU), ensuring data privacy and predictable costs without relying on a third-party API.

How do SLMs differ from frontier models in a production environment?

SLMs (Small Language Models) are designed for high-volume, specific tasks like summarization or classification. They offer much lower latency, lower compute costs, and can be deployed on edge devices. Frontier models are used for complex reasoning, multi-step problem solving, and open-ended creative tasks where nuance is more important than speed.

What are the most critical metrics for monitoring a scaled LLM?

The three most critical metrics are Time to First Token (TTFT), which measures initial responsiveness; Inter-Token Latency (ITL), which measures the smoothness of the text generation; and Token Throughput, which determines the overall capacity and cost-efficiency of the hardware stack.

Can a single GPU really run a 120B parameter model?

Yes, provided the model uses a Mixture-of-Experts (MoE) architecture and efficient quantization. Models like gpt-oss-120b are optimized to fit within the 80GB VRAM of an H100 or MI300X, allowing for high-performance inference without the need for massive, expensive GPU clusters.

What is speculative decoding in a serving stack?

Speculative decoding is a technique where a smaller, faster "draft" model predicts the next few tokens, and the larger "target" model verifies them in a single pass. This significantly speeds up inference by reducing the number of times the massive model needs to be fully computed.

Artificial Intelligence

Artificial Intelligence

Ashley Kuehnel

April 14, 2026 AT 03:42Love the breakdown! People always forget about the KV cache bottlenecks when they're just starting out. I've seen so many teams hit that OOM wall and just throw more VRAM at it without actually fixing the serving stack. Pro tip: look into PagedAttention if you're using vLLM, it's a total lifesaver for memory managment!! also, don't sleep on the MI300X, the memory bandwidth is actually insane for these larger MoE weights.

Tyler Springall

April 14, 2026 AT 20:27The obsession with SLMs is just a cope for people who can't afford actual compute. It's a race to the bottom where we pretend that a glorified autocomplete for medical notes is 'innovation' just because it runs on a toaster. The real utility of LLMs is in the emergent properties of scale, which you lose the moment you start pruning heads to save a few cents on a cloud bill.

Amy P

April 15, 2026 AT 04:18Omg, wait!! A 120B model on a SINGLE GPU?! That is absolutely wild! I can't even imagine the speed boost from not having to deal with multi-GPU communication overhead. It's like going from a dial-up connection to fiber overnight! This completely changes the game for anyone trying to run local agents without a server farm in their basement!

Colby Havard

April 15, 2026 AT 23:42One must ponder the ethical implications of the "private data center" approach... For while it offers a sanctuary of privacy, it also creates a fragmented landscape of knowledge where the wealthy hoard intelligence within their own walls... The democratization of AI is a fragile dream; we risk replacing a corporate monopoly with a corporate feudalism, where the infrastructure of thought is owned by the few... It is a moral imperative that we weigh the cost of privacy against the cost of isolation!!!

Patrick Bass

April 16, 2026 AT 04:12Correct points on the MoE efficiency.