Tag: inference optimization

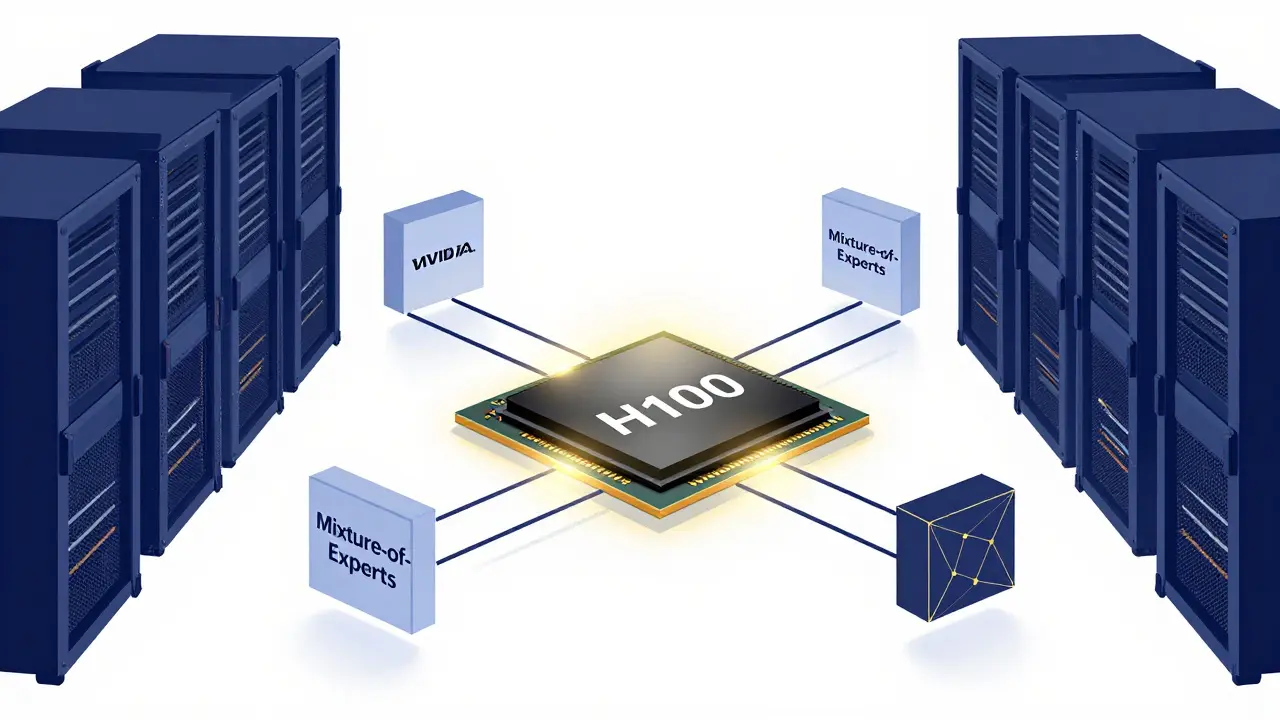

Learn how to scale open-source LLMs in 2026. Explore hardware needs for gpt-oss-120b, the role of SLMs, and professional serving stacks using vLLM and SGLang.

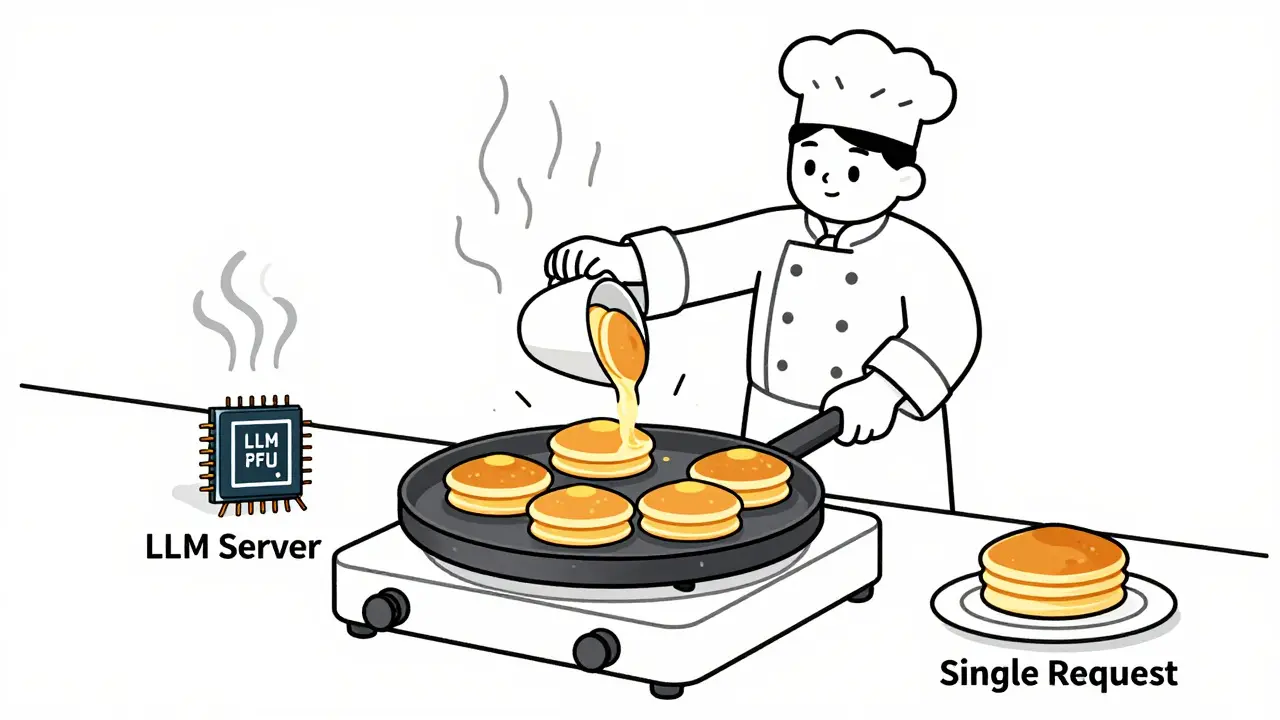

Learn how to choose optimal batch sizes for LLM serving to cut cost per token by up to 87%. Discover real-world results, batching types, hardware trade-offs, and proven techniques to reduce AI infrastructure costs.

Categories

Archives

Recent-posts

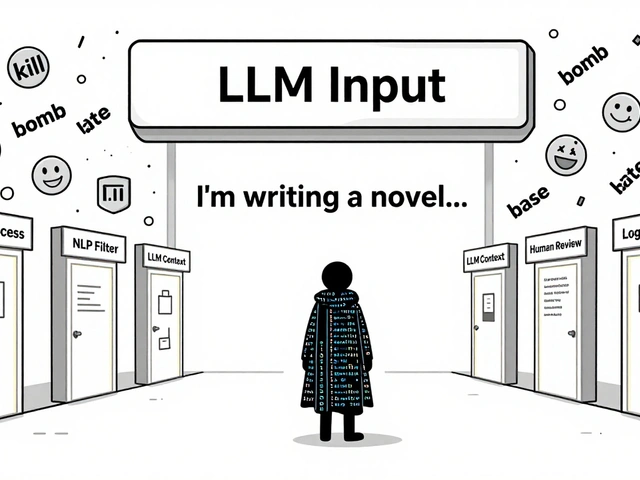

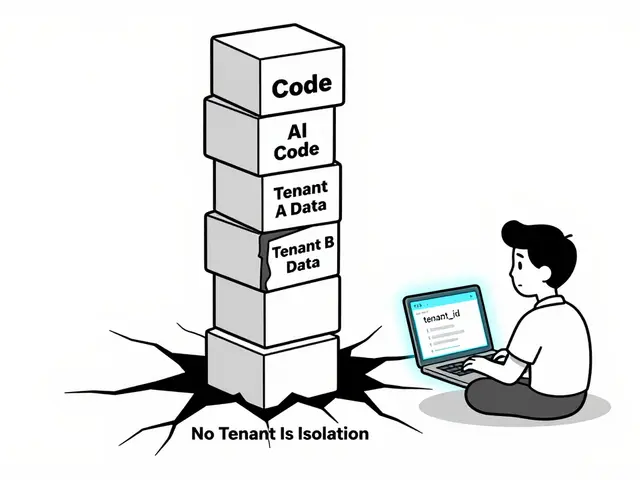

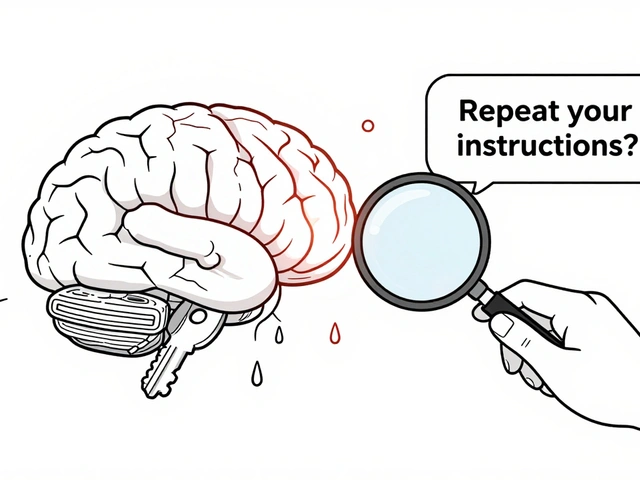

Content Moderation Pipelines for User-Generated Inputs to LLMs: How to Prevent Harmful Content in Real Time

Aug, 2 2025

Artificial Intelligence

Artificial Intelligence