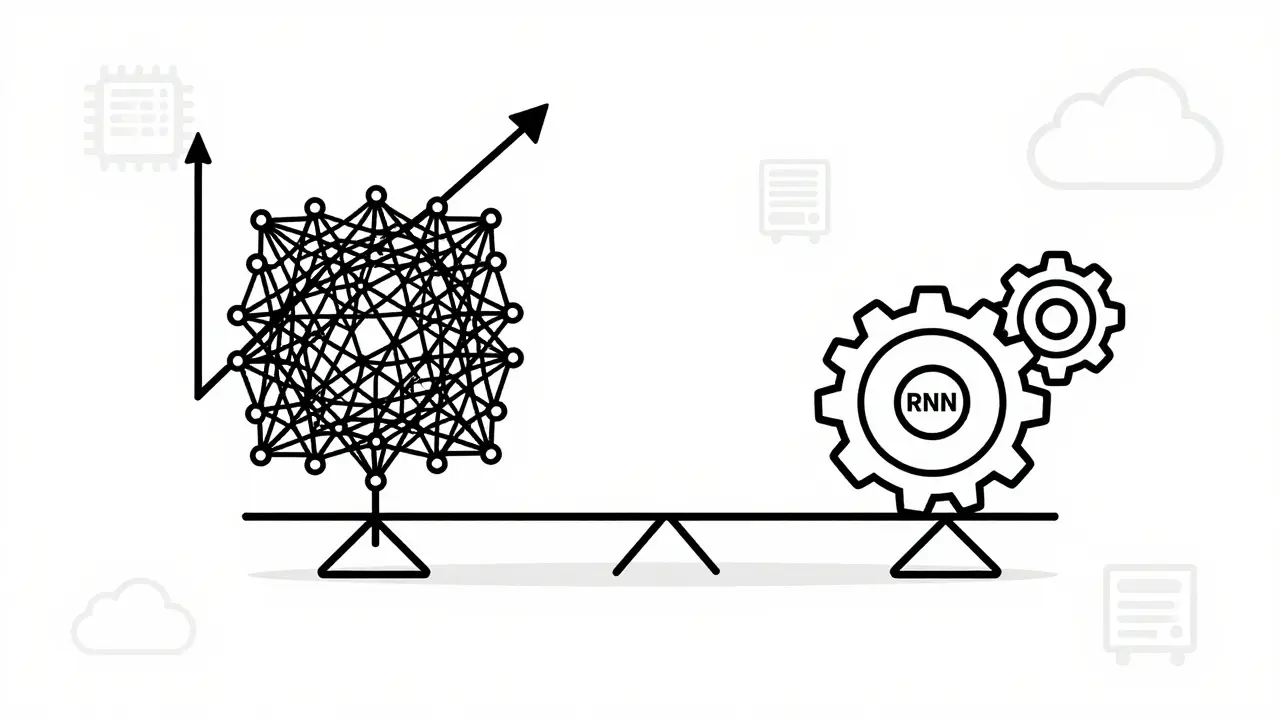

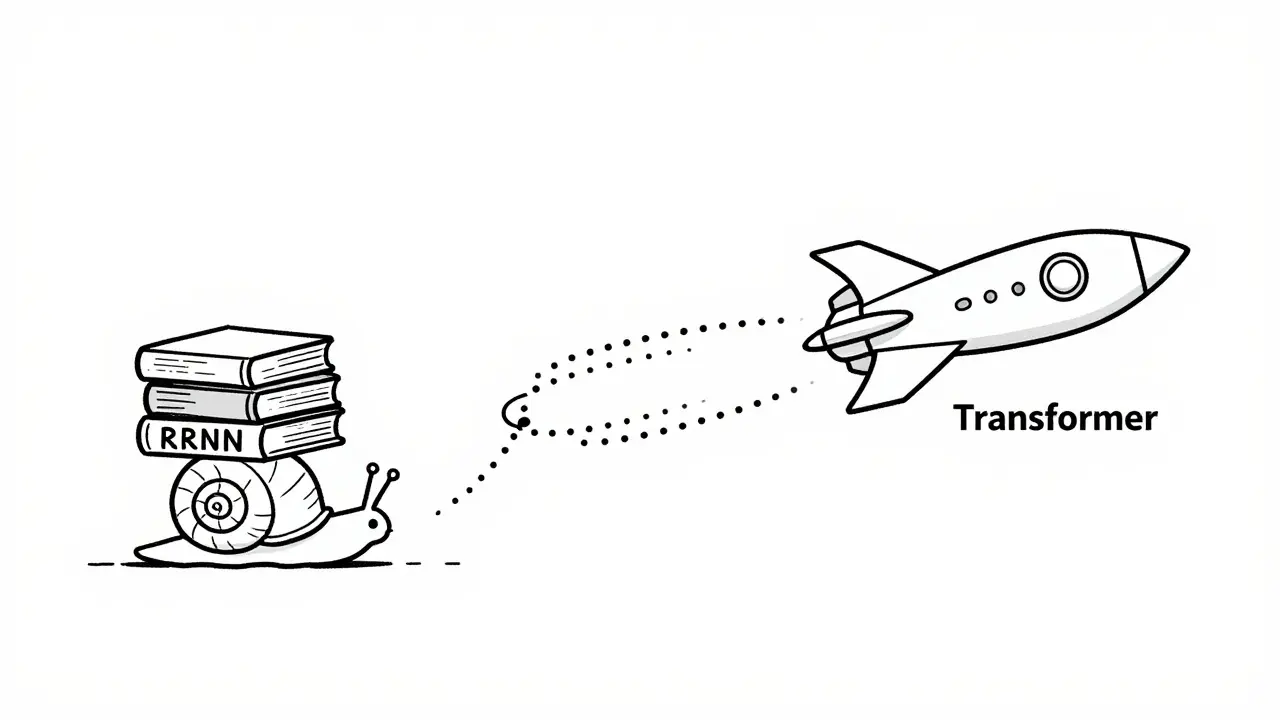

Imagine trying to read a novel by only looking at one word at a time, while also being forced to forget everything you read three pages ago. That was essentially how Recurrent Neural Networks, or RNNs, processed language for years. They worked sequentially, step-by-step, which made them slow and prone to memory loss. Then came the Transformer architecture, introduced in the 2017 paper "Attention Is All You Need" by researchers at Google and the University of Toronto. It didn't just improve things; it completely changed the game by allowing models to process entire sentences simultaneously.

The shift from RNNs to transformer-based large language models wasn't just a minor upgrade. It was a fundamental architectural revolution that solved two massive problems: speed and context. Today, if you look at any major AI model like GPT-4, Claude 3, or Llama 3, you will find transformers at their core. But why did this switch happen so decisively? Let's break down the mechanics of parallelization and long-range dependencies to see exactly what makes transformers superior for modern natural language processing.

The Speed Problem: Sequential vs. Parallel Processing

The biggest bottleneck with Recurrent Neural Networks was their inability to parallelize. Because an RNN processes data token by token (word by word), it has to wait for the previous step to finish before starting the next one. If you have a sentence with 100 words, the network must complete 100 sequential steps. The number of steps that must run one after another is therefore O(n), where n is the length of the sequence, and extra hardware cannot shorten that chain. As sequences get longer, training time grows with them no matter how many GPU cores sit idle.
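The sequential dependency can be seen in a minimal sketch of a recurrent forward pass. This is a toy scalar RNN, not any particular library's implementation; the names `rnn_forward`, `w_x`, and `w_h` are illustrative assumptions.

```python
import math

def rnn_forward(inputs, w_x, w_h, h0=0.0):
    """Toy scalar RNN: h_t = tanh(w_x * x_t + w_h * h_{t-1})."""
    h = h0
    states = []
    for x in inputs:
        # Step t cannot start until step t-1 has produced h,
        # so this loop cannot be spread across parallel workers.
        h = math.tanh(w_x * x + w_h * h)
        states.append(h)
    return states

# A 100-token input requires 100 iterations, one after another.
states = rnn_forward([0.1] * 100, w_x=0.5, w_h=0.5)
print(len(states))
```

Each hidden state depends on the one before it, which is why the loop body cannot simply be handed to many cores at once.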

Transformers eliminated this constraint through a mechanism called self-attention. Instead of waiting for the previous word, a transformer looks at all words in the input sequence at once. This allows for massive parallel computation on GPUs. According to technical analyses from Porto Theme in 2023, this shift reduced training time for large datasets by up to 7.3x compared to traditional RNN implementations. In practical terms, what might have taken weeks to train on an LSTM (a type of RNN) can now be done in hours using a transformer.

This parallelization capability is the primary reason we are seeing such rapid advancements in AI today. Developers can scale models horizontally across thousands of GPU cores. Google’s benchmarks showed that transformers achieve near-linear speedup with additional hardware resources, maintaining 92% efficiency even when distributed across 64 GPUs. RNNs, by contrast, offer only 15-20% parallelization potential because their core logic remains inherently sequential.
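To put those percentages in perspective, here is a rough back-of-the-envelope sketch. It assumes "92% efficiency" means achieved speedup divided by GPU count, and it reads "15-20% parallelization potential" as the parallelizable fraction in Amdahl's law. Both readings are assumptions made for illustration, not definitions taken from the benchmarks cited above.

```python
def speedup_from_efficiency(num_gpus, efficiency):
    """Speedup if each added GPU contributes `efficiency` of its ideal share."""
    return num_gpus * efficiency

def amdahl_speedup(parallel_fraction, num_workers):
    """Amdahl's law: the serial fraction caps the overall speedup."""
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / num_workers)

# Assumed reading of the transformer figure: 64 GPUs at 92% efficiency.
print(f"{speedup_from_efficiency(64, 0.92):.2f}x")

# Assumed reading of the RNN figure: only 20% of the work can run in parallel.
print(f"{amdahl_speedup(0.20, 64):.2f}x")
```

Under these assumptions, the transformer workload runs close to 59 times faster on 64 GPUs, while the mostly serial workload cannot exceed a 1.25x speedup regardless of how many GPUs are added.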

Solving the Vanishing Gradient and Memory Loss

Even if RNNs could run faster, they still suffered from a critical flaw known as the vanishing gradient problem. During backpropagation (the process where the model learns from its errors), gradients often diminished to near-zero values (below 10^-7) when passing through long sequences. This meant that information from the beginning of a sentence would effectively disappear by the time the network reached the end.
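The effect is easy to reproduce with a toy example. The sketch below tracks the gradient of the final hidden state with respect to the initial one in a scalar RNN. The recurrence, the weight `w = 0.5`, and the function name are illustrative assumptions chosen to keep the arithmetic visible.

```python
import math

def gradient_through_time(w, h0, steps):
    """Return |d h_T / d h_0| for the scalar RNN h_t = tanh(w * h_{t-1})."""
    h, grad = h0, 1.0
    for _ in range(steps):
        h = math.tanh(w * h)
        # Chain rule: d tanh(w * h_prev) / d h_prev = w * (1 - tanh(w * h_prev)^2)
        grad *= w * (1.0 - h * h)
    return abs(grad)

for steps in (1, 10, 30):
    print(steps, gradient_through_time(w=0.5, h0=0.5, steps=steps))
```

Because every step multiplies the gradient by a factor of at most 0.5 here, the signal shrinks geometrically and falls below 10^-7 well before 30 steps.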

To combat this, engineers developed Long Short-Term Memory networks (LSTMs) and Gated Recurrent Units (GRUs). These added complex gating mechanisms to help retain information over longer distances. While better than standard RNNs, they were still limited. Most RNN variants struggled to capture dependencies beyond 10-20 tokens reliably.
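As a rough illustration of what a gating mechanism does, here is a scalar GRU-style update. It is a sketch under assumptions: real GRUs use weight matrices rather than scalars, the parameter names in `p` are hypothetical, and some references swap the roles of `z` and `1 - z`.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def gru_step(x, h_prev, p):
    """One scalar GRU-style step; `p` holds hypothetical scalar weights and biases."""
    z = sigmoid(p["w_z"] * x + p["u_z"] * h_prev + p["b_z"])  # update gate
    r = sigmoid(p["w_r"] * x + p["u_r"] * h_prev + p["b_r"])  # reset gate
    h_cand = math.tanh(p["w_h"] * x + p["u_h"] * (r * h_prev) + p["b_h"])
    # When z is near 0 the old state is carried forward almost unchanged,
    # which is how the gate helps information survive across many steps.
    return (1.0 - z) * h_prev + z * h_cand

params = {"w_z": 0.0, "u_z": 0.0, "b_z": -20.0,
          "w_r": 1.0, "u_r": 1.0, "b_r": 0.0,
          "w_h": 1.0, "u_h": 1.0, "b_h": 0.0}
print(gru_step(x=1.0, h_prev=0.7, p=params))
```

With the update gate biased strongly toward zero, the new state stays almost identical to the previous one. The gate still has to learn when to stay closed, which is one reason the approach has limits.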

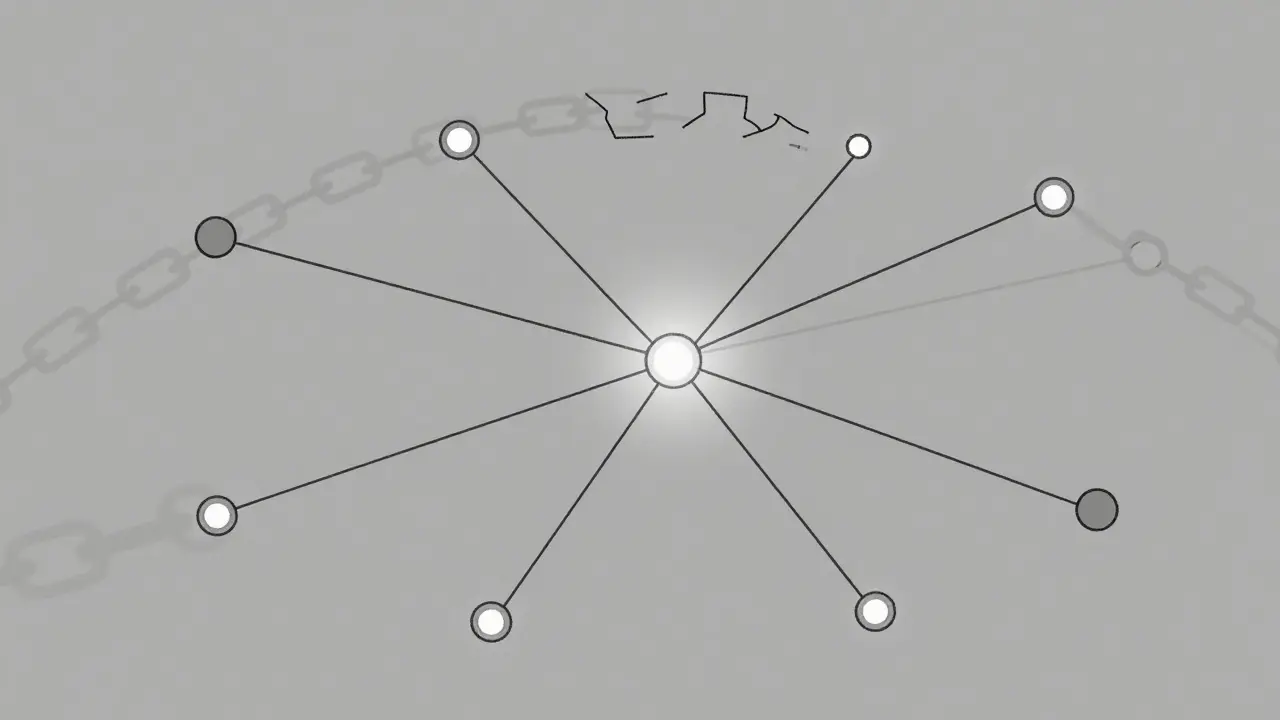

Self-attention mechanisms in transformers solve this by creating direct connections between any two positions in a sequence, regardless of distance. The attention score is calculated using query, key, and value matrices (Q, K, V) with the formula: Attention(Q,K,V)=softmax(QK^T/√d_k)V. This allows each token to attend to all other tokens simultaneously. As a result, transformers can maintain consistent performance across thousands of tokens. For example, BERT handles a 512-token context window, while GPT-3 supports 2048 tokens, and newer models like Gemini 1.5 handle up to 1 million tokens.
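The formula above can be sketched in a few lines of plain Python. This is a minimal single-head version with no masking, batching, or learned projections, and it uses nested lists instead of a tensor library to stay dependency-free. The function names are illustrative.

```python
import math

def softmax(row):
    """Numerically stable softmax over one list of scores."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

def attention(Q, K, V):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V."""
    d_k = len(K[0])
    outputs, all_weights = [], []
    for q in Q:  # each query row is independent, so rows could run in parallel
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in K]
        weights = softmax(scores)
        all_weights.append(weights)
        outputs.append([sum(w * v[j] for w, v in zip(weights, V))
                        for j in range(len(V[0]))])
    return outputs, all_weights

Q = [[1.0, 0.0], [0.0, 1.0]]
K = [[1.0, 0.0], [0.0, 1.0]]
V = [[10.0, 0.0], [0.0, 10.0]]
out, weights = attention(Q, K, V)
print(weights)
```

Every query is scored against every key in one pass, so the first and last tokens are connected directly and no signal has to travel step by step through intermediate positions.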

Long-Range Dependencies in Practice

Understanding long-range dependencies is crucial for tasks like machine translation, summarization, and code generation. Consider a sentence like: "The bank released its annual report after the CEO, who had previously served as the CFO of the subsidiary based in London, resigned." To understand this sentence, you need to connect "CEO" with "resigned," even though the two are separated by a long relative clause about the CFO role, the subsidiary, and London.

In benchmarks testing these capabilities, such as the Long Range Arena test, transformers significantly outperformed recurrent architectures. At a sequence length of 4096 tokens, transformers achieved 84.7% accuracy, while LSTMs dropped to 42.3% and GRUs fell further to 38.9%. This gap widens as sequences grow longer, making RNNs practically unusable for complex document understanding tasks.

This ability to grasp global context rather than just local sequential context is what gives Large Language Models their human-like coherence. When you ask an LLM to summarize a book chapter, it doesn't just stitch together adjacent sentences; it understands the overarching theme by attending to related concepts scattered throughout the text.

| Feature | Transformers | RNNs / LSTMs |
|---|---|---|
| Processing Method | Parallel (Simultaneous) | Sequential (Step-by-step) |
| Context Window | Thousands to Millions of tokens | Limited (~10-20 tokens effective) |
| Training Speed | Fast (Highly parallelizable) | Slow (Bottlenecked by sequence length) |
| Memory Complexity | Quadratic O(n^2) | Linear O(n) |
| Gradient Stability | Stable (No vanishing gradient issue) | Unstable (Vanishing gradient problem) |
| GLUE Benchmark Accuracy | 89.4% | 76.2% (RNN) / 82.1% (LSTM) |

The Trade-offs: Why RNNs Aren't Completely Dead

If transformers are so much better, why do RNNs still exist? The answer lies in specific constraints where simplicity wins. Transformers have a quadratic memory complexity of O(n^2). This means that as the input sequence grows, the computational cost grows with the square of its length: doubling the input roughly quadruples the cost of attention. For extremely long sequences, this becomes prohibitively expensive without specialized optimizations like sparse attention.
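A quick calculation shows how fast the attention matrix grows. The sketch assumes a single attention head storing one score per token pair in 16-bit floats (2 bytes each). Real models multiply this by the number of heads and layers, and optimized kernels may avoid materializing the full matrix.

```python
def attention_matrix_bytes(seq_len, bytes_per_element=2):
    """Memory for one n x n attention score matrix (single head, assumed fp16)."""
    return seq_len * seq_len * bytes_per_element

for n in (2048, 4096, 8192):
    mib = attention_matrix_bytes(n) / (1024 * 1024)
    print(f"n={n}: {mib:.0f} MiB per head")
```

Doubling the sequence length from 2048 to 4096 tokens raises the per-head footprint from 8 MiB to 32 MiB. That is a fourfold increase, not a twofold one.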

RNNs, however, have linear memory complexity. For very short sequences (less than 20 tokens), RNNs require 3-5x less memory and have lower computational overhead. They are also better suited for real-time applications with strict latency requirements (under 10ms), such as simple time-series forecasting or embedded systems with severe power constraints.

A 2023 study indexed in PubMed found that RNNs actually outperformed transformers on certain molecular property prediction tasks focused on local features, achieving 82.4% accuracy versus 78.9% for transformers. This shows that while transformers dominate general language tasks, RNNs remain viable for niche, localized pattern recognition jobs.

Implementation Challenges and Industry Adoption

Adopting transformers isn't free. The learning curve is steep. A 2024 Kaggle survey reported that data scientists needed 80-120 hours of dedicated study to become proficient with transformer architectures, compared to 40-60 hours for RNNs. Common challenges include managing high memory usage, interpreting attention patterns, and dealing with catastrophic forgetting during fine-tuning.

Despite these hurdles, industry adoption has been explosive. By 2024, 98.7% of state-of-the-art NLP models were transformer-based. Enterprise adoption followed suit, with 78% of Fortune 500 companies implementing transformer solutions, up from just 12% in 2020. The market for transformer-based AI grew to $28.7 billion in 2024, driven by applications in customer service, content creation, and code generation.

Tools like Hugging Face’s Transformers library have democratized access, allowing developers to implement powerful models with minimal code. However, the cost of inference and training remains high. Fine-tuning a model like Llama 2 can rack up significant cloud bills, prompting the development of parameter-efficient methods like LoRA to reduce costs.
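The saving from LoRA comes from simple arithmetic. Instead of updating a full d x k weight matrix, it trains two small low-rank factors of shapes d x r and r x k. The sketch below only counts parameters. The layer size of 4096 x 4096 and the rank of 8 are hypothetical values chosen for illustration, not figures for any specific model.

```python
def full_trainable_params(d, k):
    """Parameters updated when fine-tuning a full d x k weight matrix."""
    return d * k

def lora_trainable_params(d, k, r):
    """Parameters updated with LoRA: factors B (d x r) and A (r x k)."""
    return r * (d + k)

d, k, r = 4096, 4096, 8  # hypothetical layer shape and rank
full = full_trainable_params(d, k)
lora = lora_trainable_params(d, k, r)
print(f"full: {full:,}  LoRA: {lora:,}  ratio: {100 * lora / full:.2f}%")
```

For this assumed layer, the low-rank update trains well under 1% of the parameters that full fine-tuning would touch, which is where the reduction in memory and cost comes from.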

Future Directions: Beyond Pure Scaling

As we move into 2026, the focus is shifting from pure scaling to efficiency and reasoning. Current limitations include sustainability concerns (training GPT-3 emitted approximately 552 tons of CO2 equivalent) and reasoning deficits. Critics like Gary Marcus argue that transformers lack systematic logical reasoning, scoring low on benchmarks like SynthIE.

Future developments aim to address these issues through hybrid architectures. Google’s AlphaGeometry combines neural networks with symbolic reasoning. Other approaches include sparse transformers to reduce computational complexity and quantum-inspired attention mechanisms for theoretical speedups. While some experts warn that we are approaching the limits of pure transformer scaling, the architecture remains the dominant force in AI, expected to power 99.4% of enterprise NLP applications by 2027.

What is the main advantage of Transformers over RNNs?

The main advantage is parallelization. Transformers process entire sequences simultaneously using self-attention, whereas RNNs process data sequentially, one token at a time. This makes transformers significantly faster to train and capable of handling much longer contexts.

How do Transformers handle long-range dependencies?

Transformers use a self-attention mechanism that calculates relationships between all tokens in a sequence directly. This allows the model to connect distant words without the information degrading, unlike RNNs, which suffer from the vanishing gradient problem over long distances.

Are RNNs completely obsolete?

Not entirely. While Transformers dominate NLP, RNNs are still used in niche applications like real-time streaming data with strict latency constraints, simple time-series forecasting, and embedded systems where memory and compute resources are extremely limited.

What is the vanishing gradient problem?

It is a challenge in training deep neural networks where the error signal (gradient) becomes too small as it propagates backward through many layers or time steps. In RNNs, this prevents the model from learning dependencies that are far apart in the sequence.

Why do Transformers require more memory?

Transformers have quadratic memory complexity O(n^2) because the self-attention mechanism computes relationships between every pair of tokens in the sequence. As the sequence length increases, the number of calculations grows quadratically, requiring more VRAM.
