Tag: long-range dependencies

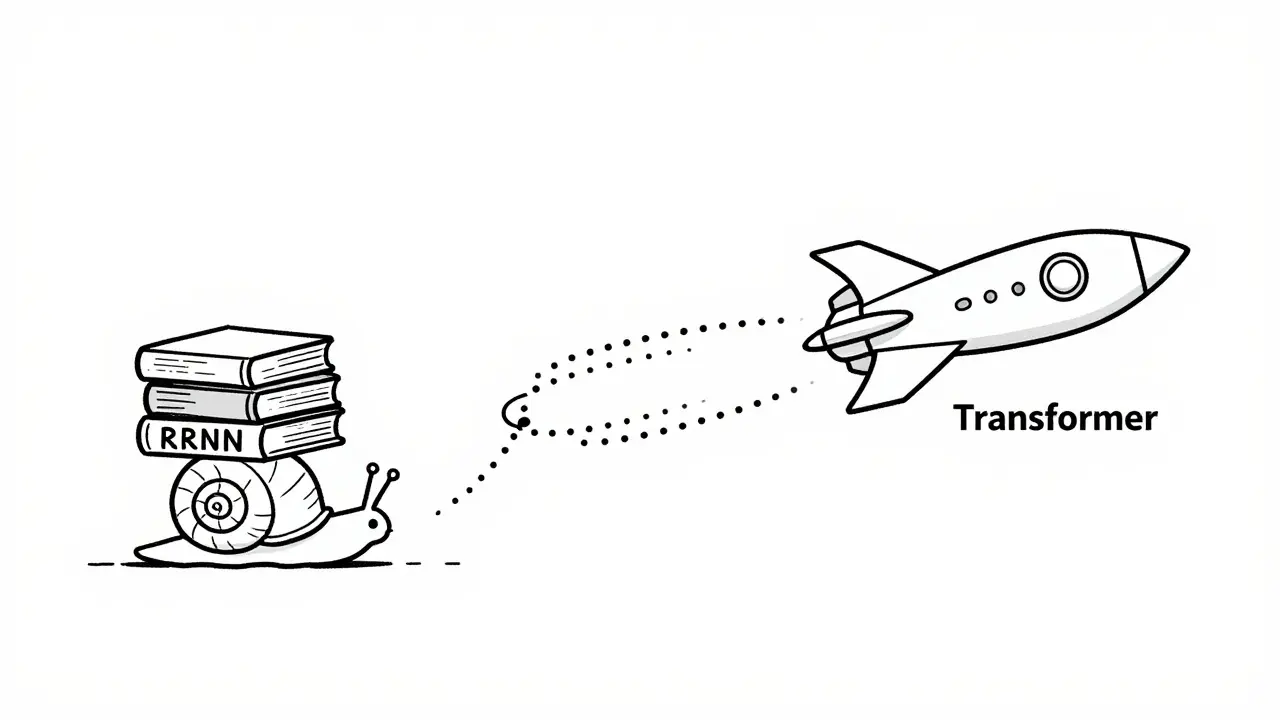

Discover why Transformers replaced RNNs in NLP. We explore parallelization benefits, long-range dependency handling, and the technical reasons behind the dominance of transformer-based LLMs.

Recent posts

Template Repos with Pre-Approved Dependencies for Vibe Coding: Setup, Best Picks, and Real Risks

Feb 20, 2026

Key Components of Large Language Models: Embeddings, Attention, and Feedforward Networks Explained

Sep 1, 2025

Artificial Intelligence
