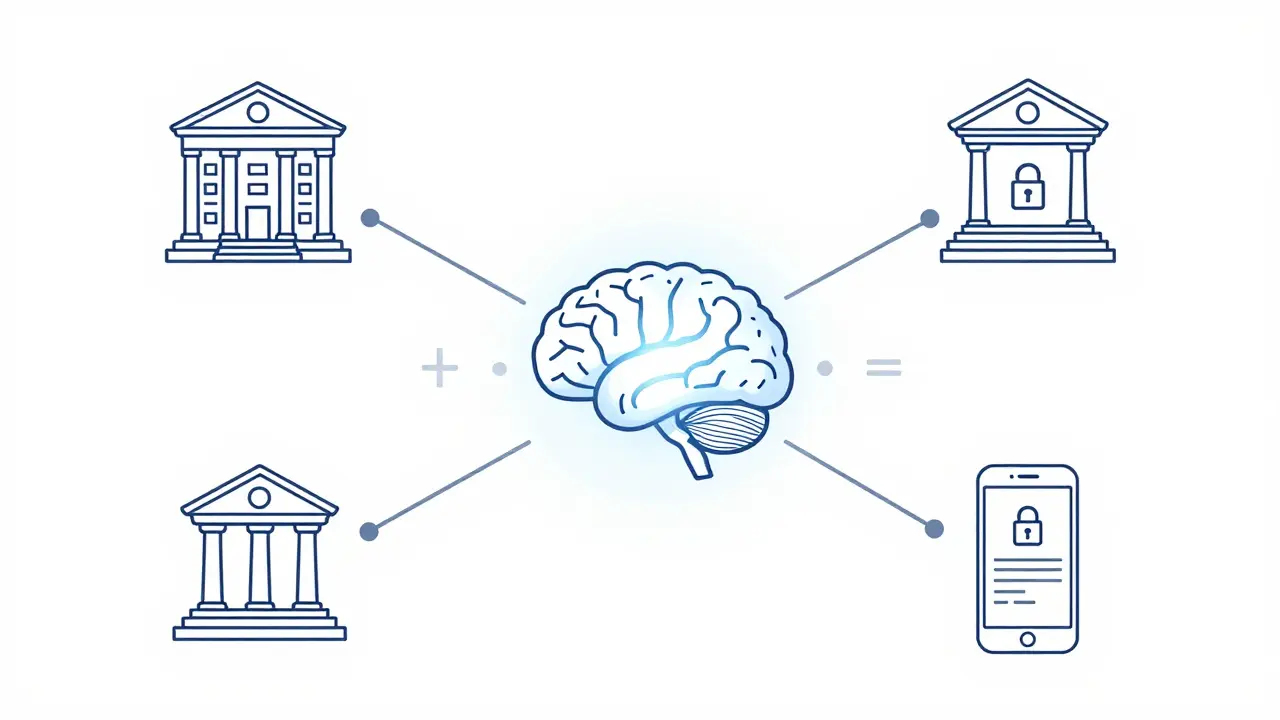

Most AI models today are trained by vacuuming up as much data as possible and dumping it into one giant central server. While that works for general knowledge, it's a nightmare for privacy. Imagine a hospital wanting to improve a medical AI but being unable to share patient records because of laws like HIPAA, or a bank that can't upload proprietary trading data to a cloud server. They're stuck with a choice: stay private and use a weaker model, or risk data leaks to get a smarter one. Federated Learning is a decentralized machine learning approach that allows a model to learn from data without that data ever leaving its original home. Instead of moving the data to the model, we move the model to the data.

How it actually works: The cycle of decentralized training

Think of traditional training like a teacher asking every student to send their private notebooks to a central office to write a textbook. With federated learning, the teacher sends a draft of the textbook to every student. Each student edits the draft using their own notes, and then sends only the corrections-not the notes themselves-back to the teacher. The teacher merges all those corrections into a better version and sends it back out. Repeat until the textbook is perfect.

In technical terms, the process follows a specific loop. First, a central server sends an initial version of a Large Language Model (LLM) to various client devices (like servers in different hospitals or individual smartphones). These clients perform local training on their own private datasets. Once the local training is done, they don't send the raw data back; they send model parameters-essentially the weights and mathematical adjustments the model made during learning. The central server then uses a method called Federated Averaging (FedAvg) to combine these updates into a new global model. This updated model is pushed back to the clients, and the cycle starts over.

Solving the "Compute Problem" in LLMs

Training a massive model isn't easy for a local device. A smartphone or a small clinic server can't handle the billions of parameters found in a modern LLM. This is where traditional federated learning often hits a wall. To fix this, new frameworks like FL-GLM use a technique called split learning. Instead of the client doing everything, the work is split. The client handles the embedding and output layers, while the heavy lifting of the core parameters is offloaded back to the server. This makes it possible to train high-end models without needing a supercomputer at every single client site.

| Feature | Centralized Training | Federated Learning |

|---|---|---|

| Data Location | Single central server | Distributed across clients |

| Privacy Risk | High (Data must be moved) | Low (Data stays local) |

| Bandwidth Use | High (Moving raw data) | Medium (Moving parameters) |

| Regulatory Ease | Difficult (GDPR/HIPAA hurdles) | Easier (Compliant by design) |

Why this beats local training: The power of diversity

You might wonder: why not just let each organization train its own private model? The problem is data scarcity. A single bank might have great data on fraud, but not enough to make a truly "smart" LLM. When they collaborate via Federated Learning, they get the best of both worlds: total privacy and a model that has seen a massive, diverse range of data.

Real-world results prove this. Using the OpenFedLLM framework-a research-friendly tool that supports various algorithms and instruction tuning-researchers found that federated models consistently beat models trained in isolation. In one financial benchmark, a Llama2-7B model fine-tuned through federated learning actually outperformed GPT-4. That's a huge deal because that level of performance was impossible for the model to reach using only one organization's private data.

Where we're using it today

This isn't just theoretical. We're seeing this play out in several high-stakes industries:

- Healthcare: Hospitals collaborate to detect rare diseases by training models on patient records across different continents without moving a single medical file.

- Finance: Banks build better fraud detection systems by learning from the transaction patterns of other banks without revealing their specific customer identities.

- Autonomous Vehicles: Car fleets share "lessons learned" from road anomalies. If one car in Seattle learns a new way to handle a specific intersection, the global model is updated, and cars in Miami benefit without the raw camera footage ever leaving the vehicle.

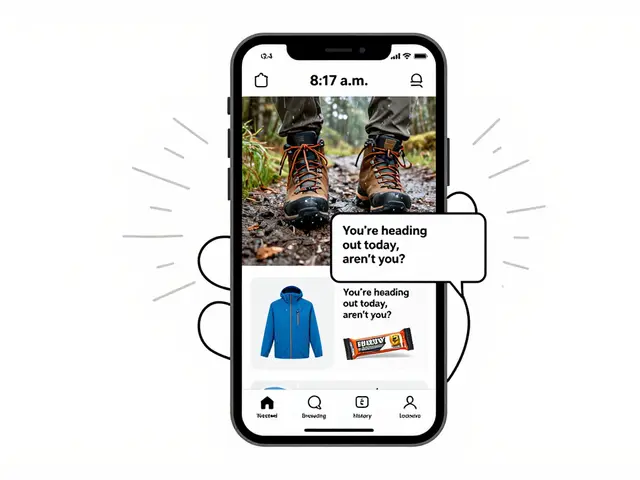

- Edge Computing: With 5G and IoT, your phone can help train a global language model while you sleep, adapting the AI to your local dialect or habits without sending your private texts to a corporate cloud.

The hurdles we still have to jump

It's not all sunshine and rainbows. There are a few big problems that researchers are still tackling. First is the communication cost. Sending model weights back and forth hundreds of times requires a lot of bandwidth. If the connection is slow, the whole process grinds to a halt.

Then there's the "statistical challenge" known as data heterogeneity. Not every client has the same kind of data. One hospital might specialize in pediatrics while another does geriatrics. This can make the model "confused" or biased toward the client with the most data. Finally, there's the risk of advanced attacks. Some clever hackers can try to "reverse engineer" the raw data just by looking at the weight updates. This requires adding extra security layers, like differential privacy, to mask the updates.

Does federated learning guarantee 100% privacy?

It's significantly more private than centralized training, but not perfectly foolproof. While raw data never leaves the device, some sophisticated attacks can potentially infer data from model updates. To stop this, developers use techniques like differential privacy or secure multi-party computation to add noise to the updates.

Is it slower than traditional AI training?

In terms of wall-clock time, yes. Because you have to wait for multiple clients to finish their local training and then send updates over a network, it's slower than having all the data on one supercomputer. However, it allows you to use data that was previously "unusable" due to privacy laws, which makes the final model much more valuable.

What is the difference between Split Learning and Federated Learning?

Standard Federated Learning sends the whole model to the client. Split Learning breaks the model into pieces; the client processes the first few layers, and the server processes the rest. It's essentially a way to make federated learning possible for massive models like LLMs that are too big for client hardware.

Can this be used on my smartphone?

Yes. This is actually one of the primary use cases for "Edge AI." Many modern keyboard predictions and voice assistants use basic forms of federated learning to adapt to your typing style without sending your private messages to a central server.

Why is OpenFedLLM important?

OpenFedLLM provides a standardized framework for researchers to test different federated algorithms. By offering a set of 8 datasets and 30+ metrics, it allows the community to figure out which methods actually work for instruction tuning and value alignment without starting from scratch.

What's next for your AI strategy?

If you're running a business with sensitive data, you have two paths. You can either keep your data siloed and accept a lower-performing AI, or you can look into federated frameworks. For those just starting, experimenting with split learning is a great way to reduce the hardware requirements on your end. If you're in a highly regulated field like finance or healthcare, your first step should be auditing your data governance to see if a federated approach would satisfy your legal requirements while unlocking the power of collaborative AI.

Artificial Intelligence

Artificial Intelligence