You spent money on Generative AI. You rolled out the tools. Your team is using them. But when your CFO asks for the return on investment, you’re stuck. This is the most common frustration in enterprise tech right now. The numbers don’t add up the way they used to.

The problem isn't that the technology doesn't work. The problem is that we are trying to measure a cognitive revolution with industrial-era rulers. Traditional ROI formulas (simple division of profit over cost) fail to capture the nuance of how AI changes work. If you only look at direct cost savings, you will likely conclude that your AI project is failing. In reality, you might be sitting on a goldmine of efficiency and quality gains that just haven't translated to the bottom line yet.
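To make the contrast concrete, here is a minimal Python sketch of the traditional formula, ROI = (gain - cost) / cost, applied twice to the same project: once counting only direct cost savings and once also counting the dollar value of time saved. All figures are hypothetical and are not drawn from any study cited in this article.

```python
def simple_roi(gain: float, cost: float) -> float:
    """Traditional ROI as a fraction: (gain - cost) / cost."""
    if cost <= 0:
        raise ValueError("cost must be positive")
    return (gain - cost) / cost


# Hypothetical annual figures for one AI rollout (illustrative only).
cost = 500_000                # licenses, integration, training
direct_savings = 300_000      # e.g. cancelled contractor spend
time_savings_value = 400_000  # hours saved x loaded hourly cost

narrow = simple_roi(direct_savings, cost)                      # direct savings only
broad = simple_roi(direct_savings + time_savings_value, cost)  # adds time savings

print(f"Narrow ROI: {narrow:.0%}")
print(f"Broad ROI:  {broad:.0%}")
```

With these inputs the narrow view reports -40% and the broader view 40%: the same project flips from loss to gain depending only on what counts as "gain." Whether the broader figure is fair depends on whether the saved hours are actually redeployed to valuable work.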

To get clarity, you need to shift your perspective. Instead of looking for a single magic number, you need to track value across three distinct layers: basic usage, workflow efficiency, and strategic transformation. Here is how you can actually measure the impact of generative AI in your organization today.

The Three Tiers of AI Value Measurement

Effective measurement requires a tiered approach. Research from Worklytics outlines a clear hierarchy for tracking AI value. Most companies get stuck at Tier 1, which leads to the "AI hype" disappointment. To see real returns, you must progress through all three tiers.

Tier 1: Action Counts (The Basics)

This is where most organizations start. You track API calls, login rates, and feature adoption. These metrics tell you if people are using the tool, but nothing more. It’s like measuring how many times employees open their email client: it proves activity, not productivity. Tier 1 metrics are essential for baseline monitoring, but relying on them alone gives you a false sense of security or failure.
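A minimal sketch of Tier 1 reporting, assuming usage logs can be exported as simple (user, feature) records. The `UsageEvent` shape and the `adoption_metrics` helper are hypothetical and do not reflect any vendor's actual export format. Note that nothing it produces says whether the work got better.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class UsageEvent:
    """One logged interaction with an AI tool (hypothetical export format)."""
    user_id: str
    feature: str  # e.g. "chat", "summarize", "code_completion"


def adoption_metrics(events: list[UsageEvent], licensed_users: int) -> dict:
    """Tier 1 counts: these show activity, not productivity."""
    if licensed_users <= 0:
        raise ValueError("licensed_users must be positive")
    active_users = {e.user_id for e in events}
    return {
        "total_actions": len(events),
        "active_users": len(active_users),
        "adoption_rate": len(active_users) / licensed_users,
        "feature_counts": dict(Counter(e.feature for e in events)),
    }


events = [
    UsageEvent("ana", "chat"),
    UsageEvent("ana", "summarize"),
    UsageEvent("ben", "chat"),
]
print(adoption_metrics(events, licensed_users=10))
```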

Tier 2: Workflow Efficiency (Productivity)

This tier measures the actual change in work output. Are tasks being completed faster? Are there fewer errors? This is where you quantify time savings. For example, if a developer uses GitHub Copilot to write code, you measure the reduction in hours spent on boilerplate coding. If a marketing team uses Adobe Firefly for assets, you track the speed of campaign creation. These are "hard" metrics that directly impact operational costs.

Tier 3: Revenue Impact (Transformation)

This is the holy grail. This tier connects AI usage to business outcomes such as revenue per employee, client satisfaction measures like Net Promoter Score (NPS), and new product launches. This is difficult because the link between an AI prompt and a closed deal is often indirect. However, this is where the highest ROI lives. Organizations that successfully map AI to these strategic outcomes report significantly higher financial returns than those that stop at Tier 2.

Hard vs. Soft ROI: What to Track

Not all value looks like cash in the bank immediately. You need to separate hard financial metrics from soft strategic benefits to build a complete picture.

Hard ROI Metrics

- Labor Cost Reduction: Calculate the hours saved per task multiplied by the hourly wage. If AI cuts report generation time by 65%, that is a direct labor saving.

- Operational Efficiency: Measure the percentage reduction in resource consumption, such as fewer server requests, less manual data entry, and reduced cloud storage needs.

- Conversion Rates: Look at customer-facing applications. Adobe reported that teams using AI in content supply chains saw 22% higher conversion rates.

- New Revenue Streams: Identify products or services created entirely due to AI capabilities. Organizations with holistic AI strategies report 30% higher ROI here.
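The Labor Cost Reduction metric comes down to simple arithmetic. Below is a minimal Python sketch that reuses the 65% reduction from the example above; the 4-hour baseline, the monthly volume, and the $60 hourly cost are hypothetical placeholders, and `labor_savings` is an illustrative helper, not a standard function.

```python
def labor_savings(baseline_hours: float, reduction: float,
                  tasks_per_month: int, hourly_cost: float) -> float:
    """Monthly labor saving = hours saved per task x tasks x hourly cost."""
    if not 0 <= reduction <= 1:
        raise ValueError("reduction must be a fraction between 0 and 1")
    hours_saved_per_task = baseline_hours * reduction
    return hours_saved_per_task * tasks_per_month * hourly_cost


# Hypothetical: a 4-hour report, cut by 65%, produced 50 times a month
# by staff with a loaded cost of $60/hour.
monthly = labor_savings(4, 0.65, 50, 60)
print(f"${monthly:,.0f} per month")
```

With these inputs the result is $7,800 per month. Treat it as capacity freed rather than cash saved unless staffing, overtime, or contractor spend actually changes.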

Soft ROI Metrics

- Quality of Work: Track error reduction rates and output quality scores. A senior data scientist noted that while speed increased, the real value was a 30% increase in strategic insight quality recognized by executives.

- Employee Satisfaction: Use eNPS (employee Net Promoter Score) to measure morale. When AI removes mundane tasks, satisfaction rises. Organizations report 18% higher satisfaction when AI handles repetitive work.

- Innovation Capacity: Count patent filings or measure how much product development cycles shorten. If AI helps R&D teams prototype faster, that is a measurable innovation boost.
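eNPS is usually scored the same way as customer NPS: employees rate from 0 to 10 how likely they are to recommend the organization as a place to work, and the score is the percentage of promoters (9-10) minus the percentage of detractors (0-6). A minimal sketch, with invented survey responses for one team before and after AI takes over repetitive work:

```python
def enps(scores: list[int]) -> float:
    """eNPS = % promoters (9-10) minus % detractors (0-6), from -100 to 100."""
    if not scores:
        raise ValueError("no survey responses")
    if any(not 0 <= s <= 10 for s in scores):
        raise ValueError("scores must be between 0 and 10")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


# Hypothetical responses from the same ten-person team.
before = [9, 7, 6, 5, 8, 10, 4, 7, 6, 9]
after = [9, 8, 7, 6, 9, 10, 7, 8, 6, 9]
print(enps(before), enps(after))
```

With these invented responses, eNPS moves from -10 to 20. Passives (7-8) count toward the total but neither add nor subtract, which is why small teams can see large swings.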

Why Traditional ROI Calculations Fail

You might have heard conflicting reports about AI success. MIT researchers claim 95% of generative AI projects fail to deliver measurable ROI. Meanwhile, Wharton reports that 72% of organizations are formally measuring Gen AI ROI and seeing positive returns. How can both be true?

The difference lies in the definition of "ROI." MIT’s study uses a narrow, traditional financial lens: a project counts as a success only if it moves quickly beyond the pilot phase and delivers against strict KPIs. Under this rigid definition, most projects look bad because the financial payoff lags behind the implementation. Wharton’s research counts broader measures of value, such as capability building and efficiency gains.

Dr. Erik Brynjolfsson from Stanford HAI argues that we are applying industrial-era metrics to a cognitive-era transformation. Traditional ROI misses the value of quality improvements and capability enhancements. If you cancel a promising AI project after six months because it hasn't paid for itself in pure dollars, you might be throwing away a long-term competitive advantage.

| Approach | Focus | Timeframe | Success Rate Perception |
|---|---|---|---|
| Traditional Financial ROI | Direct Cost Savings & Profit | Short-term (0-6 months) | Low (high perceived failure rate) |
| Productivity-Focused | Time Savings & Error Reduction | Medium-term (3-9 months) | Moderate |
| Transformational Impact | Strategic Capabilities & Revenue Growth | Long-term (6-18+ months) | High (highest reported ROI) |

Implementing Your Measurement Framework

Building a robust measurement system takes time. According to implementation data from 272 enterprise clients, full framework deployment typically takes 3 to 6 months. Don’t expect overnight results.

- Establish Baselines (Weeks 1-4): Before turning on the AI tools, document current performance. High-ROI organizations track 12+ KPIs before implementation. Know how long tasks take, what the error rates are, and what employee sentiment is without AI.

- Track Usage (Weeks 4-8): Monitor Tier 1 metrics. Who is using the tools? Which features are popular? Use platforms like Worklytics or native analytics from ChatGPT Enterprise and Claude Enterprise.

- Measure Efficiency (Months 2-4): Start calculating time savings. Conduct controlled experiments. For example, compare a legal research task done traditionally versus one assisted by AI. A global law firm reported 27% higher billable hour utilization after such comparisons.

- Connect to Strategy (Months 4-6): Begin linking efficiency gains to business outcomes. Did the faster report generation lead to better client decisions? Did the improved code quality reduce post-launch bugs and support costs?
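The traditional-versus-AI-assisted comparison in the efficiency step can be sketched in a few lines of Python. The durations are hypothetical, `compare_conditions` is an illustrative helper, and Welch's t-statistic is used here as one common way to check that a difference is larger than run-to-run noise; this is not the method any firm mentioned above is known to use. For a p-value, `scipy.stats.ttest_ind(..., equal_var=False)` runs the same Welch test.

```python
from math import sqrt
from statistics import mean, stdev


def compare_conditions(control: list[float], assisted: list[float]) -> dict:
    """Compare task durations (hours) done traditionally vs. with AI help.

    Each list needs at least two measurements, because stdev() does.
    """
    m_c, m_a = mean(control), mean(assisted)
    # Welch's t-statistic: does not assume the two groups share a variance.
    se = sqrt(stdev(control) ** 2 / len(control) + stdev(assisted) ** 2 / len(assisted))
    return {
        "control_mean_h": m_c,
        "assisted_mean_h": m_a,
        "time_reduction": (m_c - m_a) / m_c,
        "welch_t": (m_c - m_a) / se,
    }


# Hypothetical durations for the same legal research task, in hours.
traditional = [5.0, 6.0, 5.5, 6.5, 5.0]
ai_assisted = [3.5, 4.0, 3.0, 4.5, 4.0]
result = compare_conditions(traditional, ai_assisted)
print(f"Time reduction: {result['time_reduction']:.0%}, Welch t = {result['welch_t']:.2f}")
```

Five runs per condition is far too few for a real decision; the point is to record both conditions against the same baseline task so the comparison is like for like.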

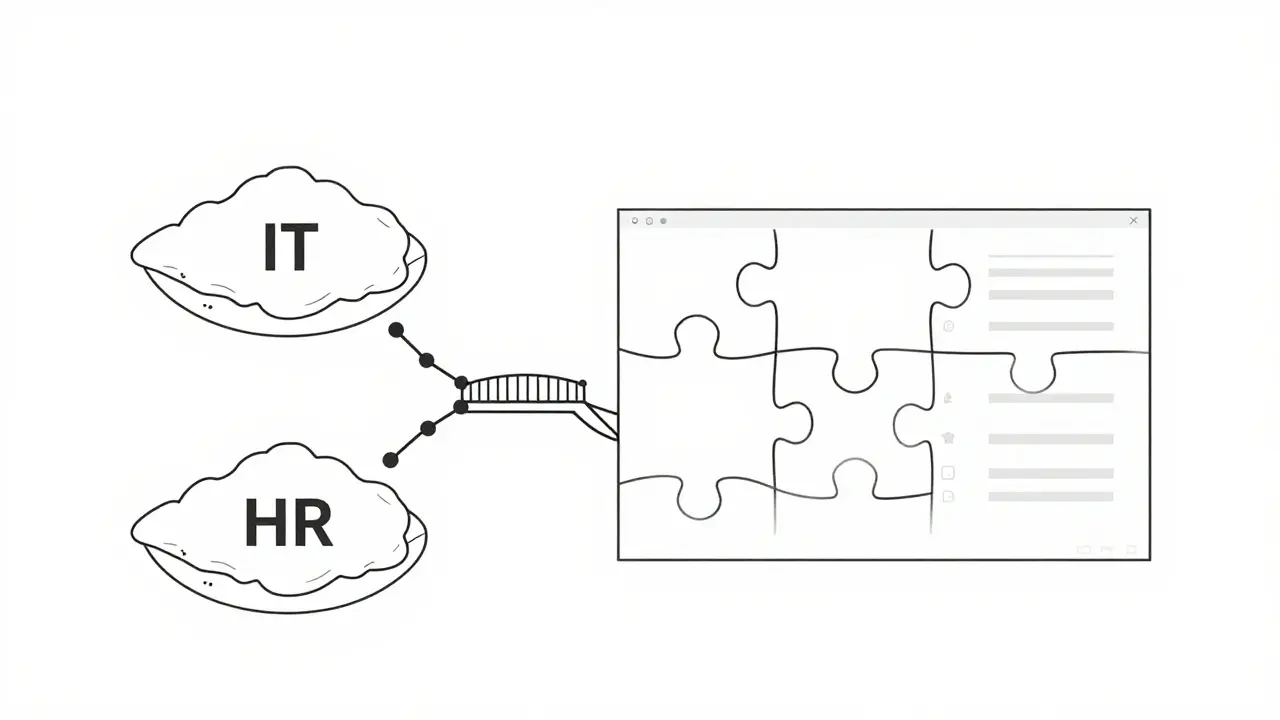

A major challenge is attribution. Data silos prevent 76% of organizations from seeing the full picture. You need unified analytics that connect IT data (API usage) with HR data (employee feedback) and Finance data (revenue). Without this integration, you’ll struggle to prove the value of a major AI investment, such as a $2 million annual budget.
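At its core, that integration is a join on a shared key. A minimal sketch, assuming each system can export per-team figures keyed by the same team name (often the hardest part in practice); the team names, figures, and the `unify` helper are hypothetical. An outer join keeps gaps visible instead of silently dropping teams.

```python
# Hypothetical per-team extracts from three separate systems.
it_usage = {"sales": 1_200, "legal": 450, "support": 3_100}  # API calls / month
hr_enps = {"sales": 12.0, "legal": 25.0, "support": -5.0}    # eNPS
finance_revenue = {"sales": 2.4e6, "legal": 0.9e6}           # revenue / month


def unify(**sources: dict) -> dict[str, dict]:
    """Outer-join per-team metrics; a missing value stays None so gaps show."""
    teams = set().union(*(s.keys() for s in sources.values()))
    return {
        team: {name: src.get(team) for name, src in sources.items()}
        for team in sorted(teams)
    }


for team, row in unify(api_calls=it_usage, enps=hr_enps, revenue=finance_revenue).items():
    print(team, row)
```

Here the `support` team has usage and sentiment data but no revenue figure, which is exactly the kind of silo gap that blocks attribution.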

The Strategic Advantage of Holistic Measurement

Organizations that treat AI as a strategic partner rather than just a cost-cutting tool achieve better results. Thomson Reuters found that companies with formal AI strategies aligned to business goals achieve 2.3x higher ROI than those adopting AI informally.

This means moving beyond "how much time did we save?" to "what new capabilities did we unlock?" For instance, Google Cloud reports that organizations measuring AI agent impact see 37% higher ROI than those measuring only individual tool usage. Agents that can autonomously handle complex workflows create more value than simple chatbots answering FAQs.

Furthermore, regulatory pressures are increasing. The EU AI Act requires transparency for high-risk applications. Having a robust measurement framework isn't just about proving value to your board; it’s about compliance and risk management. European enterprises are already enhancing their frameworks to meet these standards.

Future Outlook: Predictive Analytics

The future of AI ROI measurement is predictive. New tools are emerging that forecast ROI based on early adoption patterns. Worklytics has introduced capabilities that predict AI ROI with 83% accuracy just eight weeks after implementation. This allows leaders to allocate resources more effectively, doubling down on high-performing use cases and pivoting away from low-value ones.

Gartner predicts that by 2026, 70% of enterprises will use AI-powered analytics to automatically attribute business outcomes to specific AI initiatives. If that prediction holds, it would remove much of the guesswork and make real-time dashboards that connect AI interactions to dollar figures far more common.

The key takeaway is simple: stop waiting for the perfect ROI number. Start measuring the journey. Track usage, quantify efficiency, and eventually, link it all to strategy. The organizations that win won't be the ones with the cheapest AI tools, but the ones that best understand the value those tools create.

What is the most accurate way to calculate Generative AI ROI?

There is no single formula. The most accurate method combines a three-tiered approach: tracking basic usage (Tier 1), measuring workflow efficiency and time savings (Tier 2), and connecting outcomes to revenue and strategic goals (Tier 3). Relying solely on traditional financial ROI often underestimates the value of quality improvements and innovation capacity.

Why do some studies say 95% of AI projects fail?

Studies like MIT's "GenAI Divide" use narrow definitions of ROI that require immediate financial returns post-pilot. They often exclude soft benefits like employee satisfaction, quality improvement, and strategic capability building. Other reports, like Wharton's, show higher success rates by including these broader value metrics.

How long does it take to see ROI from Generative AI?

Full measurement framework deployment takes 3-6 months. Basic usage metrics can be tracked within weeks, while efficiency gains become visible in 2-4 months. Strategic revenue impact may take 6-18 months to fully materialize, depending on the complexity of the implementation.

What are "soft ROI" metrics in AI?

Soft ROI metrics include non-financial benefits such as improved quality of work, reduced error rates, higher employee satisfaction (eNPS), and increased innovation capacity (e.g., faster product development cycles). These metrics often precede direct financial returns but are critical for long-term success.

How can I overcome data silos when measuring AI ROI?

Use unified analytics platforms that integrate data from multiple sources, including IT systems (API logs), HR tools (employee feedback), and financial systems. Establish cross-functional teams to ensure consistent tracking and attribution of AI impact across different departments.

Artificial Intelligence