The Problem with Lying AI

You know the feeling. You ask your Large Language Model (LLM) a straightforward question about a company policy, and instead of answering, it confidently invents facts. This phenomenon is called hallucination, and it remains the biggest barrier to using generative AI in serious business settings. In production environments, trust is everything. If an AI assistant tells a customer support agent the wrong refund policy, the cost goes up fast.

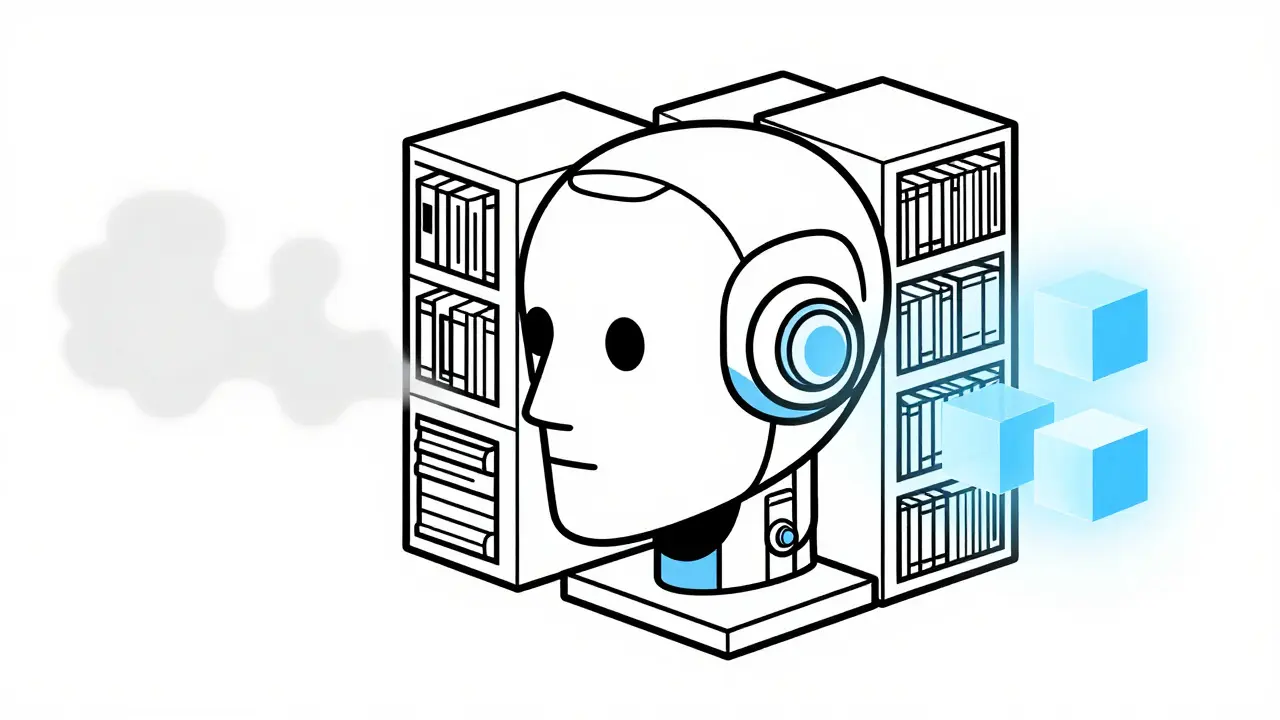

That’s where Retrieval-Augmented Generation comes in. RAG is not just a buzzword; it’s a practical architecture that changes how AI handles information. Instead of relying solely on the static training data it was fed years ago, an RAG system connects your model to a live library of verified documents. When you ask a question, the system looks up the answer first, then drafts the response based on what it found. According to MIT’s 2023 benchmark study, this approach reduces hallucination rates by between 47% and 63% compared to using a base model alone. As we move through 2026, RAG has become the industry standard for making AI trustworthy.

How Grounding Works Technically

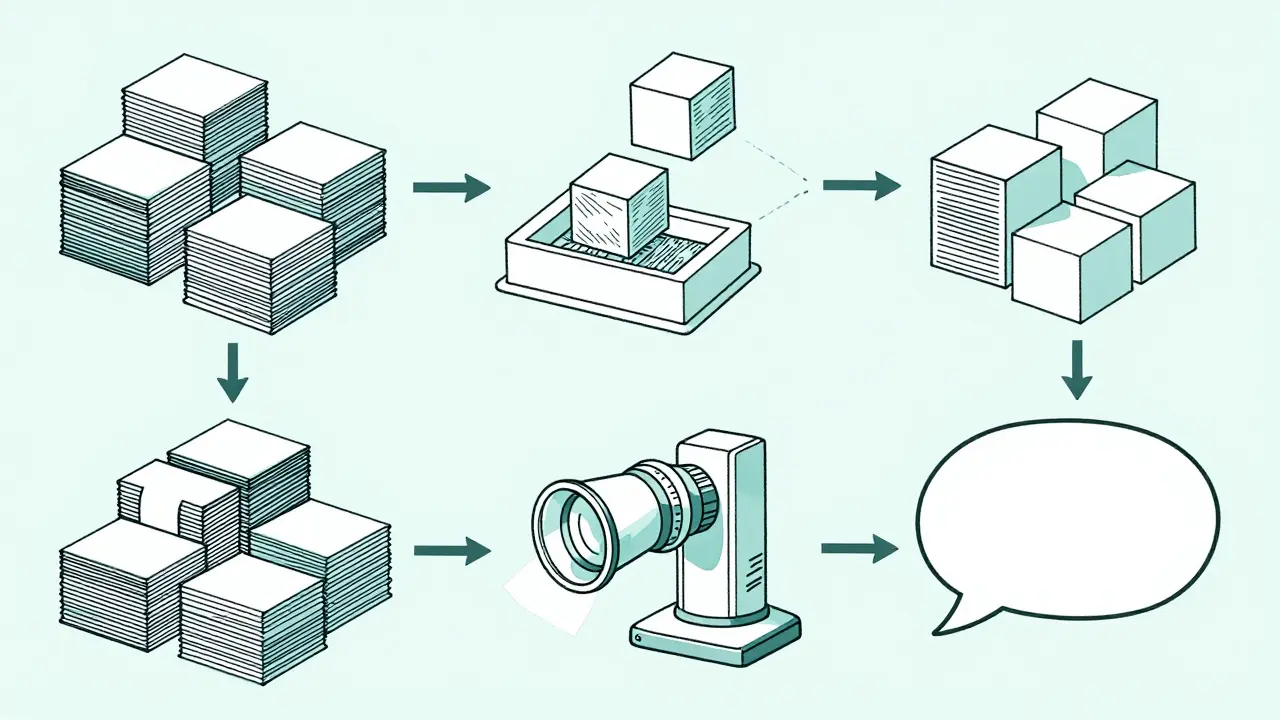

At its core, RAG functions as a bridge between your internal data and an AI model. Think of it as giving the AI a textbook before asking it to write an essay, rather than expecting it to recall every fact from memory. The process follows a strict four-stage pipeline that ensures data integrity at every step.

- Ingestion: Your documents-PDFs, spreadsheets, database entries-are chopped into smaller pieces called chunks. Typically, these are sized between 256 and 512 tokens. This size matters because it preserves context without overwhelming the model. During this phase, a text embedding model converts these chunks into vectors, which are mathematical representations of meaning.

- Storage: These vectors land in a vector database. Tools like Pinecone, Weaviate, or AWS OpenSearch store these 1024-dimensional numerical maps so they can be searched quickly.

- Retrieval: When you type a query, the system converts your question into a vector too. It then scans the database for the closest matches using similarity search algorithms like HNSW. A cosine similarity threshold of ≥0.78 usually signals a relevant document. Hybrid searches that combine keyword matching with vector search can improve recall by another 32%, according to Google Cloud’s 2024 whitepaper.

- Generation: Finally, the top three to five retrieved results are combined with your original query into a prompt. The LLM reads this context and writes the final answer.

This workflow allows the AI to reference "authoritative sources" without needing to be retrained. Meta AI researchers describe this as transforming models from static repositories into dynamic information synthesizers. It’s a shift from memorization to reasoning.

RAG versus Fine-Tuning

Many teams confuse RAG with fine-tuning. Both methods improve AI performance, but they solve different problems. Fine-tuning involves retraining the model itself on new data. While this excels for deep domain adaptation, such as integrating complex medical terminology where accuracy improved by 19.3% in Mayo Clinic trials, it is expensive and slow. Retraining a model can cost over $50,000 per iteration, as reported by Hugging Face in January 2025.

RAG, on the other hand, keeps the model frozen and updates the data layer instead. This offers a massive cost advantage, often running at just 5-8% of the cost of fine-tuning. If your financial regulations change quarterly, RAG maintains 92.7% accuracy by swapping out old documents, whereas a fine-tuned model might drop to 78.4% due to outdated weights. For most businesses dealing with fluctuating policies or documentation, RAG is the more flexible and economical choice.

| Attribute | Retrieval-Augmented Generation (RAG) | Fine-Tuning |

|---|---|---|

| Primary Use Case | Frequent knowledge updates (e.g., policies) | Deep structural adaptation (e.g., style, tone) |

| Cost Efficiency | Low (5-8% of fine-tuning cost) | High (>$50k per iteration) |

| Hallucination Risk | Significantly reduced via citations | Depends on training data quality |

| Maintenance Speed | Minutes to hours | Days to weeks |

Navigating Implementation Challenges

Even though the theory is solid, deploying RAG in the real world introduces friction. Developers often report increased latency. One user on Reddit noted their query time jumped from 1.2 seconds to 3.8 seconds after adding RAG. While a three-second wait might feel sluggish in chat apps, many enterprise users accept the trade-off for accuracy.

You also need to manage two common failure modes. First is "retrieval drift," where slightly altered queries retrieve irrelevant documents because the semantic matching is too loose. Second is "context overload." If you feed the LLM ten retrieved documents instead of three, it gets confused and performance drops. Solving this requires optimizing your chunk overlap strategies. Using a 15-20% token overlap improves context continuity by 28%. Additionally, reranking techniques, like Cohere’s Rerank v3.0, can boost precision by 34% by filtering out weak matches before they hit the generation stage.

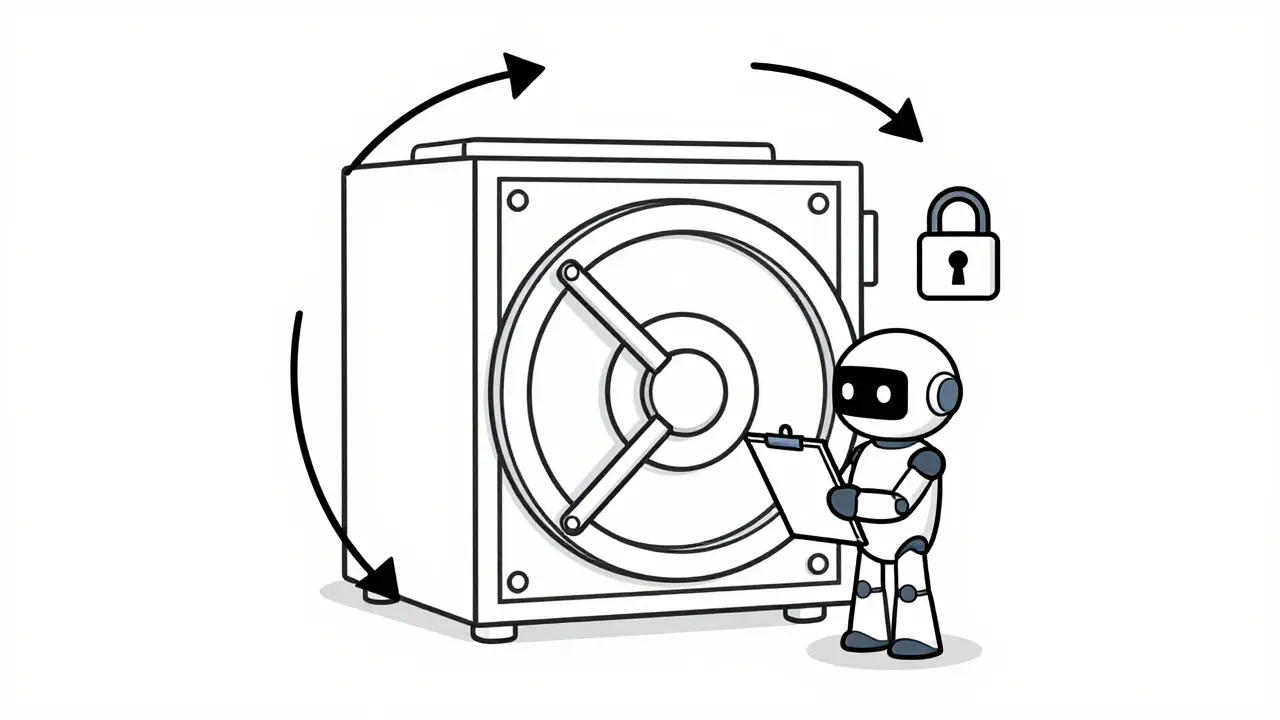

Security is another critical aspect. As highlighted by Carnegie Mellon University’s Security Lab in 2025, there is a risk of "retrieval poisoning." Adversaries could manipulate knowledge bases to generate misleading outputs. To prevent this, organizations must implement access controls on the ingestion layer and validate all incoming documents before they enter the vector index.

Adoption Trends in 2025 and Beyond

By early 2026, the technology has matured significantly. Gartner places RAG at the "Plateau of Productivity," indicating it is now a reliable solution rather than experimental hype. Adoption is surging across regulated sectors. About 68% of financial institutions use it for compliance queries, while 53% of healthcare organizations deploy it for clinical documentation support. Government agencies are also heavily invested, with 81% implementing RAG for public-facing document applications.

The future points toward agentic architectures. Microsoft’s AutoGen project demonstrated systems where multiple AI agents collaborate to refine queries and validate retrieved information, reducing error rates by nearly 30%. Furthermore, we are seeing the emergence of multimodal RAG. While today’s systems handle text, upcoming models like GPT-5 are expected to integrate native support for images and video in 2026. This means you won’t just ask the AI about a text document; you’ll ask it about a diagram or a video file, retrieving visual contexts alongside text snippets.

Choosing Your Tech Stack

If you’re building this today, your choices depend on scale and control. Cloud platforms offer managed services like AWS Bedrock Knowledge Base or Vertex AI, which simplify setup. However, open-source vector databases like ChromaDB give you more flexibility. Pricing varies wildly depending on query volume. AWS charges roughly $0.10 per 1K vector queries, while some specialized vendors charge less for high-volume tiers. Always calculate the total cost of ownership including the embedding API calls and compute resources required for the generation phase.

Ensuring Long-Term Viability

RAG systems require maintenance. Vector databases must stay fresh. If you are tracking time-sensitive data like stock prices or legal rulings, nightly updates are necessary. Without them, your system becomes obsolete. Monitoring is also key. You should track metrics like "source relevance" and "answer confidence" to catch silent failures where the AI finds something but doesn’t cite it properly. Professor Emily M. Bender warned that RAG’s citation capabilities create dangerous illusions of accuracy when retrieval fails silently. Regular auditing of the retrieval logs helps maintain trust in your application.

What exactly is Retrieval-Augmented Generation (RAG)?

RAG is an AI framework that retrieves external information from a database before generating a response. It combines a search engine with a language model to reduce hallucinations and ground answers in verified data.

Is RAG better than fine-tuning?

It depends on your goal. RAG is better for updating knowledge frequently (like policies) and costs much less. Fine-tuning is better for changing the model's tone or behavior fundamentally. Most companies use both for different tasks.

Does RAG completely stop hallucinations?

No, it reduces them significantly (by 47-63%). However, if the retrieved context is ambiguous or missing, the LLM can still hallucinate. Proper prompting and retrieval validation are required to minimize risk.

Which vector database should I use?

Popular options include Pinecone for managed cloud solutions, Weaviate for open-source flexibility, and ChromaDB for local development. Choice depends on your security needs and budget.

How does RAG affect response speed?

RAG adds latency because it performs a search before writing. Expect query times to increase, often doubling from under 1 second to 3-4 seconds depending on the database complexity.

Artificial Intelligence

Artificial Intelligence

Antwan Holder

March 28, 2026 AT 22:27The idea of grounding truth in machines is beautiful yet terrifying. We build systems that lie confidently to save face. It feels like we are training liars instead of thinkers. The latency numbers hide the deeper existential dread. Trust evaporates when the machine nods while hallucinating policies.

Colby Havard

March 30, 2026 AT 08:18Your perspective is deeply insightful!!!! The moral implication of automated lying is severe...!!! We must demand ethical boundaries on these models.....

Ashley Kuehnel

March 31, 2026 AT 01:13Hey everyone i hope u find this helpful for setup. Thsi pipeline works great for most docs but watch out for embeds. Make sure to clean ur data before ingesting into pinecone or weaviate. The overlap strategy saves so much time later.

Mongezi Mkhwanazi

April 1, 2026 AT 04:34People always think RAG fixes everything magically without reading the docs. They forget that bad data in means garbage out eventually for sure. The retrieval drift issue is actually quite scary for banks specifically. You saw how they mention latency in the original text clearly. That latency is fine for documents but absolutely bad for trading platforms. Most developers ignore the embedding quality entirely in their rush. It requires constant human oversight during ingestion of files. If the chunk size is wrong the context disappears completely every time. Then you get silent failures which nobody notices until it is too late. Security teams never audit the vector store permissions properly enough. An insider threat could poison the whole knowledge base easily then. We need stricter access controls on the embedding layer itself always. Otherwise the model outputs are useless for compliance work later. Companies will sue us when the financial advice goes wrong eventually. RAG is just a wrapper around old search problems really. You cannot automate trust without auditing the source constantly.

Amy P

April 1, 2026 AT 22:54Thanks for the tip on the chunks!!! This is such a game changer for us. Sometimes the vector db gets messy without proper cleanup routines. I love how flexible the stack looks now. The hybrid search feature makes me feel so much safer. We should implement this ASAP!

adam smith

April 3, 2026 AT 01:36Implementation costs are high indeed.

Mark Nitka

April 3, 2026 AT 09:13Valid points raised here but the tradeoff remains worth it overall. Security is paramount but stagnation kills products faster than leaks. We need balance between control and speed of deployment. Access layers are improving with new standards anyway. Just do not ignore the monitoring metrics entirely.