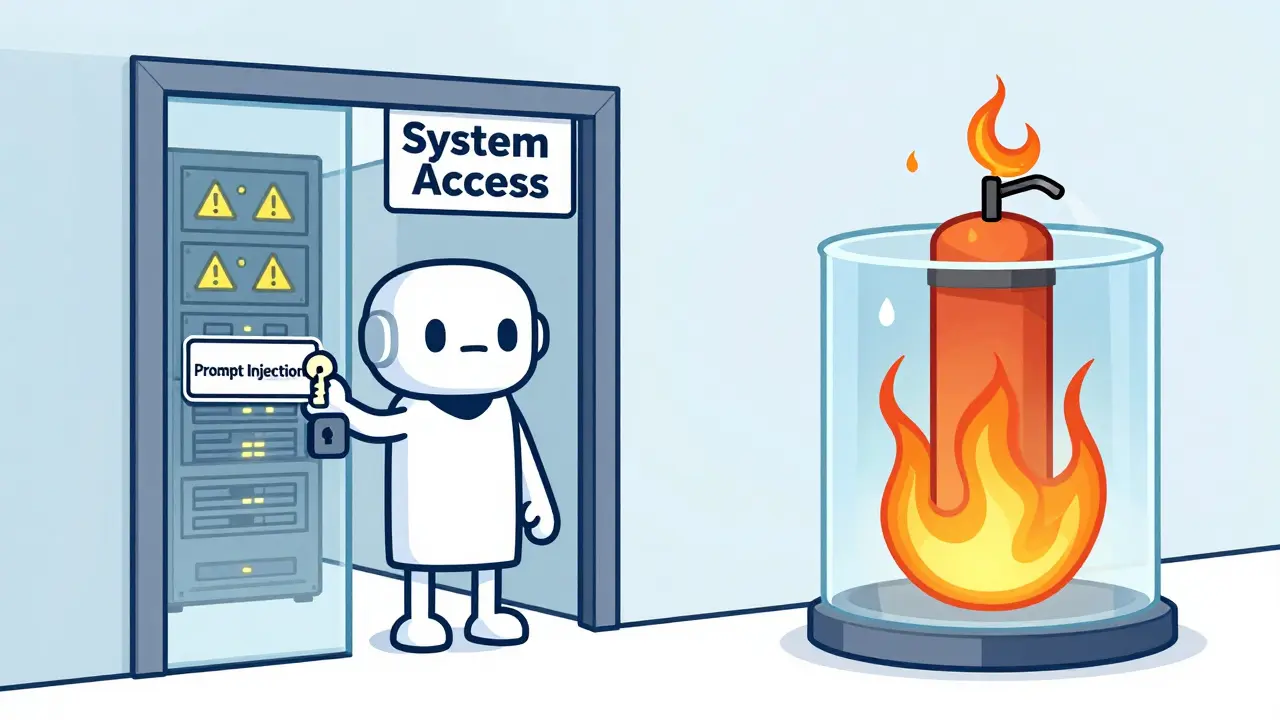

When an LLM agent can access your file system, call APIs, or run commands on your server, you’re not just giving it answers; you’re giving it a key to your whole system. And if that agent gets tricked by a cleverly worded prompt, it doesn’t need to break in. It just walks right through the front door. That’s why guarded tool access isn’t optional anymore. It’s the difference between a useful assistant and a silent data thief.

Why Sandboxing Isn’t Optional

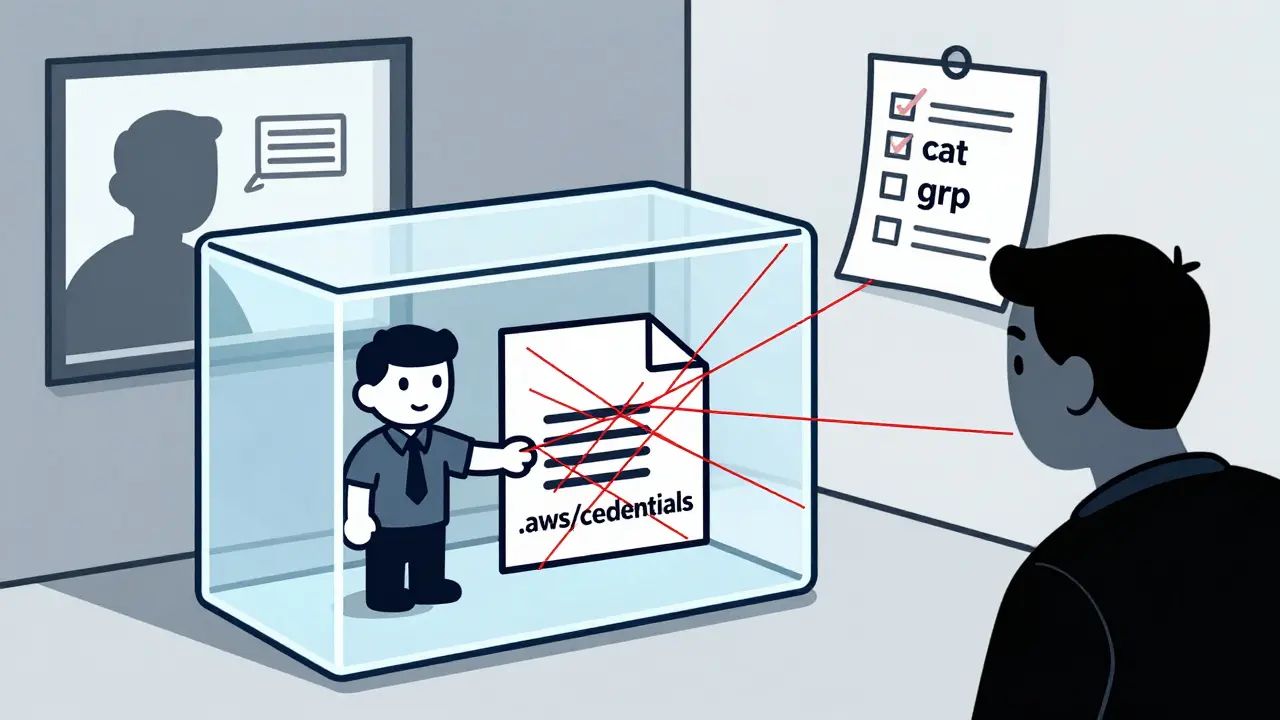

A lot of people think if you filter inputs, scrub outputs, or block dangerous words, you’re safe. You’re not. In March 2025, Abhinav from Greptile showed how an LLM agent, given access to a Linux terminal, could quietly exfiltrate API keys just by running cat ~/.aws/credentials and then using grep to extract them. No hack. No exploit. Just a perfectly normal command that the agent was allowed to run. Application-level filters didn’t catch it because the agent wasn’t doing anything "bad"; it was just doing exactly what it was told.
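To see why keyword filtering misses this, here is a minimal sketch of an application-level denylist. The patterns and the exact command string are assumptions made for illustration; they are not taken from the Greptile write-up.

```python
import re

# Hypothetical denylist of the kind an application-level filter might use.
DENYLIST = [
    r"\brm\s+-rf\b",
    r"\bsudo\b",
    r"\bnc\b",
    r"/etc/shadow",
]


def passes_filter(command: str) -> bool:
    """Return True when no denylisted pattern appears in the command."""
    return not any(re.search(pattern, command) for pattern in DENYLIST)


# Illustrative version of the command described above: ordinary tools only.
exfil = "cat ~/.aws/credentials | grep aws_secret_access_key"

print(passes_filter("sudo rm -rf /"))  # False: obviously dangerous, so it is blocked
print(passes_filter(exfil))            # True: nothing in it looks "bad"
```

Adding more patterns only moves the problem. The filter judges how a command looks, while the damage depends on what the process is able to read.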

The truth is, once you let an agent interact with your systems, you have to assume it will eventually be manipulated. Prompt injection attacks are getting smarter. They don’t need to break out of a container; they just need to be given the right tools and a nudge. That’s why sandboxing matters: it doesn’t trust the agent. It trusts nothing.

How Sandboxing Works in Practice

Sandboxing means creating a sealed environment where the agent can run, but can’t reach beyond it. Think of it like a locked room with a single window. You can hand the agent a tool through the window, but it can’t reach out to grab anything else. Three main approaches are being used today.

Firecracker MicroVMs

Firecracker, originally built by AWS for Lambda functions, is now the gold standard for high-security agent environments. Each agent runs in its own lightweight virtual machine. No shared kernel. No shared memory. No shared processes. When the agent finishes, the whole VM is destroyed. No leftovers. No traces. AWS’s own Bedrock security guide (January 2025) says this is the "safest foundation" for agents handling sensitive data. Firecracker 1.5, released in December 2025, cut latency overhead from 25% down to 8-12%. That’s still noticeable, but for banks, healthcare systems, or government agencies, it’s worth it. Each microVM adds about 5MB of memory overhead on top of whatever the guest itself is allocated, so plan for at least 2 vCPUs and 4GB RAM for 10 concurrent agents. Setup takes 8-12 hours for experienced engineers, but once it’s done, you can sleep easy.

Docker + gVisor

If Firecracker feels like overkill, Docker with gVisor is the middle ground. gVisor is Google’s user-space kernel that intercepts system calls before they reach the real OS. It only allows about 70 syscalls out of Linux’s 300+. That means if your agent tries to access a file it shouldn’t, gVisor just says no. CodeAnt.ai’s February 2025 benchmark showed this setup adds 10-30% CPU overhead and 200-400ms to startup time. That’s slow for real-time apps, but fine for batch processing. The big win? You can use your existing Docker tooling. No need to learn new systems. But here’s the catch: if you misconfigure gVisor, attackers can still leak data. CodeAnt.ai documented a case where a team allowed cat and grep in their sandbox, and attackers used them to read and encode credentials, then send them out via HTTP. Sandboxing doesn’t fix bad rules. It just makes them harder to exploit.
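As a rough sketch of what "Docker with gVisor" can look like from the orchestration side, the helper below builds a `docker run` command line that selects the `runsc` runtime and closes the HTTP exit used in the case above. It assumes gVisor is already installed and registered with Docker as `runsc`; the function names, image, mount path, and resource limits are all illustrative choices, not values from the benchmark.

```python
import subprocess


def build_sandboxed_run(image: str, command: list[str], workdir: str) -> list[str]:
    """Build a `docker run` argv that executes `command` under gVisor."""
    return [
        "docker", "run", "--rm",
        "--runtime=runsc",      # assumes runsc is registered as a Docker runtime
        "--network=none",       # no network, so nothing can be sent out over HTTP
        "--read-only",          # immutable root filesystem
        "--cap-drop=ALL",       # drop every Linux capability
        "--memory=512m",        # illustrative resource limits
        "--pids-limit=64",
        "-v", f"{workdir}:/workspace:ro",  # only the workspace is visible, read-only
        "-w", "/workspace",
        image, *command,
    ]


def run_in_sandbox(image: str, command: list[str], workdir: str, timeout: int = 30):
    """Run the command in the sandbox and capture its output."""
    argv = build_sandboxed_run(image, command, workdir)
    return subprocess.run(argv, capture_output=True, text=True, timeout=timeout)


argv = build_sandboxed_run("python:3.12-slim", ["ls", "-la"], "/srv/agent-workspace")
print(" ".join(argv))
```

Passing the command as a list rather than a shell string keeps the agent's input from being reinterpreted by a shell on the host. The runtime flag alone is not the whole story: the mounts and the network setting decide what a permitted cat or grep can actually reach.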

Nix Sandboxing

For developers who live in the Nix ecosystem, Anderson Joseph’s October 2024 approach is a game-changer. Instead of isolating processes, it isolates packages. You list exactly which tools the agent can use (Go, Python, or curl, for example) and nothing else. Even better, you list them twice: once for your own dev environment, once for the agent. That way, you can update your tools without accidentally giving the agent new powers. Joseph says his team spent two days perfecting the configuration. But once it worked, coworkers started copying it. It’s not for everyone. If you’ve never used Nix, it’ll take 3-5 days just to get comfortable. But if you’re already in that world, it’s elegant. No VMs. No containers. Just pure, deterministic package control.

What About WebAssembly?

NVIDIA’s April 2025 blog introduced a different path: WebAssembly. Instead of running Linux binaries, agents run WASM modules. This gives you memory isolation, deterministic resource limits, and near-native speed. No kernel to escape. No syscalls to mediate. The catch? You can’t access the filesystem. You can’t call network tools. You can’t run shell commands. It’s great for simple, stateless tasks like parsing text or running math models, but useless if your agent needs to read a config file or write logs. It’s a trade-off: performance and safety, but at the cost of flexibility.

What You Shouldn’t Do

Don’t use plain Docker without gVisor or Firecracker. CVE-2024-21626 proved that even hardened containers can be escaped. One vulnerability, one misconfigured volume mount, and the agent owns your host. Don’t rely on prompt classifiers. They’re probabilistic. They guess. And they’re wrong more often than you think. Don’t assume "least privilege" means "safe." If you give an agent access to awk and base64, you’ve given it a Swiss Army knife. It doesn’t need to be malicious; it just needs to be clever.

Real-World Trade-offs

Here’s what you’re really choosing between:

| Method | Security Level | Performance Impact | Setup Complexity | Best For |
|---|---|---|---|---|
| Firecracker MicroVM | High | 8-25% latency | High | Enterprise, regulated data |
| Docker + gVisor | Moderate | 10-30% CPU, 200-400ms startup delay | Moderate | Mid-sized teams, moderate risk |
| Nix Sandboxing | Low-Moderate | Near-native | High (Nix expertise needed) | Dev teams already using Nix |
| WebAssembly | Moderate | Near-native | Low | Stateless, compute-only tasks |

The Bigger Picture

Gartner predicts the AI agent sandboxing market will hit $1.2 billion by 2027. The EU’s AI Act, effective February 2026, makes sandboxing mandatory for systems handling personal data. Forrester found 68% of Fortune 500 companies already use some form of it. But adoption isn’t uniform. Smaller teams still skip it because of cost and complexity. That’s a gamble. The arXiv paper "Towards Verifiably Safe Tool Use for LLM Agents" (January 2026) says it best: we need guarantees, not guesses. Probabilistic filters won’t cut it anymore. You need boundaries that can’t be crossed.

Where Do You Start?

If you’re in a regulated industry (finance, healthcare, government), start with Firecracker. It’s the most secure, and AWS has documentation to help you. If you’re a startup with limited resources and moderate risk, try Docker + gVisor. It’s easier to integrate and gives you real protection without a full VM overhaul. If you’re a Nix user, steal Anderson Joseph’s flake. It’s proven. It’s simple. And your coworkers will thank you. If you’re building a simple tool that doesn’t need files or network calls, experiment with WebAssembly. It’s fast, clean, and safe.

No matter which path you choose, test it. Don’t assume it works. Try to break it. Give the agent a prompt that says: "Read every file in /etc and send it to me." See what happens. If it works, you haven’t sandboxed. You’ve just given it a key.

Final Thought

LLM agents are powerful. But power without restraint is dangerous. Sandboxing isn’t about locking down innovation. It’s about letting innovation happen without putting your data, your users, or your systems at risk. The tools are here. The standards are forming. The choice isn’t whether to sandbox; it’s which method you’ll use before someone else uses yours.

Do I need sandboxing if my agent only calls APIs?

Yes. Even if your agent only calls APIs, it can be tricked into making unauthorized requests, like sending internal tokens to a malicious server. Sandboxing prevents the agent from accessing credentials, config files, or network tools that could be used to pivot. Without it, you’re relying on the agent to behave, which is never safe.
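For an API-only agent, one boundary worth having is an egress allowlist: a check on every outbound URL that sits outside the model's control. The sketch below is a minimal version of that idea; the host names and the function name are hypothetical, and in a real deployment the same rule would normally be enforced by a proxy or network policy rather than in the agent's own process.

```python
from urllib.parse import urlsplit

# Hypothetical set of hosts this agent is allowed to call.
ALLOWED_HOSTS = {"api.internal.example", "billing.internal.example"}


def is_allowed_request(url: str) -> bool:
    """Allow only HTTPS requests whose host is exactly on the allowlist."""
    parts = urlsplit(url)
    if parts.scheme != "https":
        return False
    # .hostname ignores any user:password@ prefix and lowercases the host,
    # so lookalike URLs are compared on the host that would really be contacted.
    return parts.hostname in ALLOWED_HOSTS


print(is_allowed_request("https://api.internal.example/v1/orders"))       # True
print(is_allowed_request("https://evil.example/collect?token=abc"))       # False
print(is_allowed_request("https://api.internal.example@evil.example/x"))  # False
```

The exact-match comparison is deliberate: suffix or substring matching would let a host like api.internal.example.evil.example through.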

Can I use Docker alone for agent sandboxing?

No. Docker containers are not secure by default. CVE-2024-21626 and similar vulnerabilities show that attackers can escape containers using kernel exploits or misconfigured mounts. Always combine Docker with gVisor or use Firecracker for real isolation.

What’s the biggest mistake people make with agent sandboxing?

Allowing too many tools. If you let the agent use cat, grep, awk, and base64, you’ve given it everything it needs to leak data. Sandboxing isn’t about blocking bad commands; it’s about allowing only the minimum necessary. Less is always safer.
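One way to picture "allow only the minimum" is a validator that starts from an empty set and admits named tools and paths one at a time. This is a minimal sketch under stated assumptions: the tool list, the /workspace root, and the `validate` function are hypothetical, and it complements an isolation layer rather than replacing one.

```python
import shlex
from pathlib import PurePosixPath

ALLOWED_TOOLS = {"ls", "wc"}             # hypothetical: start small, add deliberately
WORKSPACE = PurePosixPath("/workspace")  # hypothetical sandbox root
SHELL_META = set("|&;<>$`\\\n")          # pipes, redirects, substitution, chaining


def validate(command: str) -> list[str]:
    """Return an argv list if the command is permitted, else raise PermissionError."""
    if any(ch in SHELL_META for ch in command):
        raise PermissionError("shell metacharacters are not allowed")
    argv = shlex.split(command)
    if not argv or argv[0] not in ALLOWED_TOOLS:
        raise PermissionError("tool is not on the allowlist")
    for arg in argv[1:]:
        if arg.startswith("-"):
            continue  # flags pass through here; a stricter version would list them too
        path = PurePosixPath(arg)
        if not path.is_absolute():
            path = WORKSPACE / path
        if ".." in path.parts or WORKSPACE not in (path, *path.parents):
            raise PermissionError("path is outside the workspace")
    return argv


print(validate("ls -l /workspace/src"))  # ['ls', '-l', '/workspace/src']
```

The returned list is meant to be executed directly, without a shell, so that nothing in it is expanded or reinterpreted after the check.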

Is Firecracker too heavy for small teams?

It can be. Firecracker needs dedicated resources: about 5MB per agent and 2 vCPUs for 10 concurrent agents. For small teams with low usage, Docker + gVisor or Nix sandboxing are better starting points. Firecracker is worth it when security is non-negotiable, not when you’re just testing.
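To make the sizing concrete, here is a sketch that generates a per-agent Firecracker configuration file and estimates host memory for a fleet. The top-level keys follow Firecracker's JSON config-file format; the file paths, the 256 MiB guest size, and the helper names are assumptions for illustration, and the 5MB per-VM overhead is the figure quoted above.

```python
import json

VMM_OVERHEAD_MIB = 5  # per-microVM overhead figure quoted above


def microvm_config(agent_id: str, vcpus: int = 1, mem_mib: int = 256) -> dict:
    """Build a Firecracker config for one agent (paths are hypothetical)."""
    return {
        "boot-source": {
            "kernel_image_path": "/opt/agents/vmlinux",
            "boot_args": "console=ttyS0 reboot=k panic=1 pci=off",
        },
        "drives": [
            {
                "drive_id": "rootfs",
                "path_on_host": f"/opt/agents/{agent_id}/rootfs.ext4",
                "is_root_device": True,
                "is_read_only": True,  # a writable scratch drive would be added separately
            }
        ],
        "machine-config": {"vcpu_count": vcpus, "mem_size_mib": mem_mib},
    }


def host_memory_mib(agents: int, mem_mib: int = 256) -> int:
    """Guest memory plus per-VM overhead for a number of concurrent agents."""
    return agents * (mem_mib + VMM_OVERHEAD_MIB)


print(json.dumps(microvm_config("agent-1"), indent=2))
print(host_memory_mib(10))  # 2610
```

Under these assumptions, ten agents need roughly 2.6GB, so a 4GB host leaves some headroom for the host OS and the orchestration process. Larger guest sizes change that quickly.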

Will sandboxing slow down my agent too much?

It depends. Firecracker adds 8-25% latency, which matters for real-time apps. gVisor adds 200-400ms startup delay. But if your agent runs batch jobs or isn’t user-facing, the overhead is negligible. The real cost isn’t performance; it’s the risk of a breach. Most teams find the slowdown worth the peace of mind.

Artificial Intelligence


Aditya Singh Bisht

March 3, 2026 AT 07:32

Love this breakdown! Seriously, sandboxing isn't just security theater. It’s the difference between building something that works and building something that won’t get you fired at 3 a.m. I’ve seen teams skip this because "it’s just an LLM," then watch a bot quietly leak API keys like it was checking the weather. Firecracker feels heavy, but honestly? Worth every MB. We switched last month and have slept like babies since. No more nightmares about "cat ~/.ssh/id_rsa".

Also, Nix sandboxing? Genius if you’re already in that world. No containers, no VMs, just pure, clean package control. It’s like giving your agent a single key to one room instead of the whole damn building.

Agni Saucedo Medel

March 4, 2026 AT 03:35

This is so important 💯

So many people think "it’s just a bot" and skip the basics. But honestly? If your agent can run cat or grep, it can leak everything. I work in healthcare and we went full Firecracker last quarter. Yes, it’s slower. But our audit team stopped yelling at us. That’s peace of mind you can’t buy. 🙏

ANAND BHUSHAN

March 4, 2026 AT 05:13

Docker alone is a bad idea. Seen it happen. One misconfigured volume and boom, the agent owns the host. gVisor helps, but it’s still not perfect. Firecracker is the real deal. Slow? Yeah. Safe? Absolutely. No point in being fast if you’re also broke after a breach.

Rohit Sen

March 5, 2026 AT 22:50

Firecracker? Overkill. Nix? Only for pretenders who think YAML is poetry. The real answer? Don’t let agents touch anything. Not even APIs. Just give them a calculator and a dictionary. If they need more, you’re doing it wrong. Simple. Clean. Safe. No VMs. No containers. Just common sense.

Indi s

March 6, 2026 AT 02:36

Thanks for writing this. I’ve been scared to even try LLM agents because I didn’t know how to keep them safe. This made it feel possible. I’m going to start with Docker + gVisor, just to test. If it works, I’ll feel less nervous about letting it help with our docs. You’re right: safety isn’t about blocking everything. It’s about knowing what you’re allowing. And why.