When a customer calls a support line, they’re not just asking for help; they’re telling a story. Their tone, hesitation, repetition, even the way they pause before saying "I just want this fixed": all of it matters. Traditional contact center analytics used to miss most of it. Keyword matching, pre-set categories, and simple sentiment scores couldn’t capture the real meaning behind the words. But now, with Large Language Models (LLMs), contact centers are finally hearing what customers actually mean.

How LLMs Turn Raw Calls Into Actionable Insights

Before LLMs, call centers relied on speech-to-text tools that turned voice into text, then ran basic filters: "Did they say 'refund'?" or "Was the word 'angry' mentioned?" That’s like judging a movie by its trailer. You get the headline, but miss the plot.

LLMs change that. These models, trained on millions of real conversations, understand context. They don’t just look for words; they look at how words connect. A customer saying, "I’ve been waiting three weeks," isn’t just complaining about time. They’re signaling frustration, distrust, and possibly churn risk. An LLM picks up all of it.

The process starts with transcription. Every call, chat, or email is turned into text. Then, the LLM breaks it down into "call drivers": the core reason the customer reached out. Instead of forcing calls into rigid buckets like "Billing Issue" or "Technical Support," the model clusters similar phrases naturally. For example, it might group:

- "I can’t log in after the update."

- "The app keeps crashing when I try to pay."

- "I got an error message after signing in."
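The grouping step can be pictured with a toy sketch. This is not the method any particular vendor uses: a production system would typically compare LLM embeddings and use a density-based clusterer, whereas this version substitutes plain token overlap (Jaccard similarity) and a greedy pass so it runs on the standard library alone. The function names, the stop-word list, the sample utterances, and the 0.4 threshold are all assumptions made for illustration.

```python
import re
from typing import List, Set

# Hypothetical stop-word list; a real system would not need one with embeddings.
STOP_WORDS = {"i", "the", "a", "my", "on", "in", "after", "since", "was", "can't", "to"}


def tokens(text: str) -> Set[str]:
    """Lowercase the text and keep only non-stop-word tokens."""
    return {t for t in re.findall(r"[a-z']+", text.lower()) if t not in STOP_WORDS}


def jaccard(a: Set[str], b: Set[str]) -> float:
    """Share of tokens two utterances have in common (0.0 to 1.0)."""
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


def group_call_drivers(utterances: List[str], threshold: float = 0.4) -> List[List[int]]:
    """Greedy grouping: join the first cluster whose first member is similar enough,
    otherwise start a new cluster. Returns clusters as lists of utterance indices."""
    clusters: List[List[int]] = []
    for i, text in enumerate(utterances):
        for cluster in clusters:
            representative = utterances[cluster[0]]
            if jaccard(tokens(text), tokens(representative)) >= threshold:
                cluster.append(i)
                break
        else:
            clusters.append([i])
    return clusters


# Made-up sample utterances, not taken from real call data.
CALLS = [
    "I can't login after the update",
    "Login fails since the latest update",
    "I was charged twice on my bill",
    "My bill shows I was charged twice",
    "Update broke my login",
]

print(group_call_drivers(CALLS))
```

Token overlap only works here because the sample phrases share literal words. The three examples in the list above share meaning rather than vocabulary, which is exactly the case where embeddings from a language model are needed.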

Sentiment Isn’t Just Positive or Negative

Old-school sentiment analysis said: "happy," "neutral," or "angry." Real people aren’t that simple. A customer might sound calm while saying, "I guess I’ll just cancel," but their underlying emotion is betrayal. Another might shout, "I’m so mad!" but then thank the agent and stay loyal.

Modern LLMs detect emotional trajectories. They track how sentiment shifts during a conversation. Did the customer start frustrated, then calm down after the agent offered a solution? Or did they start calm, then spiral into anger because the agent kept reading from a script?

These models also spot tone. Is the customer being sarcastic? Defensive? Hopeful? One study found that customers who expressed "confidence" in their statements ("I know this is fixable," "I’ve done this before") were 40% more likely to stay loyal if their issue was resolved quickly. LLMs can catch that consistently. Human reviewers often don’t.

Intent Detection: What They Really Want

Intent detection is where LLMs truly shine. It’s not about what the customer says; it’s about what they mean. Take this exchange:

> Customer: "I tried resetting the password, but it still won’t let me in."
>
> Agent: "Have you tried clearing your cache?"
>
> Customer: "I don’t even know what that means."

Traditional systems might classify this as "Password Reset Issue." But the LLM sees the real intent: "I need a simple, step-by-step guide I can follow without tech jargon."

Even more powerful is intent chaining. Customers rarely have one goal. They might call about a billing error, but while talking, reveal they’re considering switching providers because of poor service over the last six months. An LLM can track both intents simultaneously (billing fix + retention risk) and alert the agent in real time. This isn’t theoretical. Companies using intent chaining report 30% fewer repeat calls and 22% higher customer satisfaction scores.
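A minimal sketch of how intent chaining might be tracked across a call, assuming some upstream model already emits per-turn intent labels with confidence scores. The `IntentTracker` class, the label names (`billing_error`, `retention_risk`), and the 0.6 threshold are hypothetical and chosen only to mirror the billing-plus-retention example.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class IntentTracker:
    """Accumulates intents over a conversation instead of keeping only the latest one."""
    threshold: float = 0.6  # assumed cut-off for treating an intent as active
    scores: Dict[str, float] = field(default_factory=dict)

    def update(self, turn_intents: Dict[str, float]) -> None:
        # Keep the highest confidence seen so far for each intent.
        for intent, confidence in turn_intents.items():
            self.scores[intent] = max(confidence, self.scores.get(intent, 0.0))

    def active_intents(self) -> List[str]:
        return sorted(i for i, s in self.scores.items() if s >= self.threshold)

    def agent_alert(self) -> Optional[str]:
        # Alert only when retention risk appears alongside another active intent.
        active = self.active_intents()
        others = [i for i in active if i != "retention_risk"]
        if "retention_risk" in active and others:
            return "Resolve " + ", ".join(others) + " and address retention risk"
        return None


tracker = IntentTracker()
tracker.update({"billing_error": 0.9})                          # early in the call
tracker.update({"billing_error": 0.7, "retention_risk": 0.8})   # customer mentions switching
print(tracker.agent_alert())
```

Keeping the maximum confidence per intent means an intent raised early in the call is not forgotten when the conversation moves on, which is the point of chaining.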

From Analysis to Action: Automating the Work

The real value isn’t in seeing patterns; it’s in acting on them. LLMs now automate tasks that used to eat up agent time:

- Auto-generating wrap-up notes: After a call, the system writes a summary for the CRM, with no typing required.

- Smart knowledge base updates: If five customers say, "I can’t find the invoice download button," the LLM pulls the exact phrases from transcripts, generates a FAQ, and pushes it to the help center automatically.

- Real-time agent assist: While the agent is on the call, the LLM suggests responses based on what the customer just said. If the customer says, "I’ve been a customer for 10 years," the system prompts: "Acknowledge loyalty and offer a goodwill gesture."
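The knowledge base trigger described above can be sketched roughly as follows. It assumes each mention has already been tagged with a topic label by an upstream clustering step, and it only builds the prompt text rather than calling any model. The function names, topic labels, and prompt wording are hypothetical. The threshold of five distinct customers comes from the example in the list.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

Mention = Tuple[str, str, str]  # (customer_id, topic, verbatim quote)


def faq_candidates(mentions: Iterable[Mention], min_customers: int = 5) -> Dict[str, List[str]]:
    """Return topics raised by at least `min_customers` distinct customers,
    mapped to the verbatim quotes collected for that topic."""
    customers = defaultdict(set)
    quotes = defaultdict(list)
    for customer_id, topic, quote in mentions:
        customers[topic].add(customer_id)
        quotes[topic].append(quote)
    return {t: quotes[t] for t, ids in customers.items() if len(ids) >= min_customers}


def build_faq_prompt(topic: str, quotes: List[str]) -> str:
    """Assemble a drafting prompt; sending it to a model is out of scope here."""
    bullet_list = "\n".join("- " + q for q in quotes)
    return (
        "Draft one FAQ entry for the topic '" + topic + "'.\n"
        "Use the customers' own wording where possible:\n" + bullet_list
    )


# Made-up sample data: five different customers on one topic, one repeat caller on another.
mentions = [("c%d" % i, "invoice_download", "I can't find the invoice download button")
            for i in range(5)]
mentions += [("c9", "dark_mode", "Is there a dark mode?"),
             ("c9", "dark_mode", "Dark mode please")]

for topic, topic_quotes in faq_candidates(mentions).items():
    print(build_faq_prompt(topic, topic_quotes))
```

Counting distinct customers rather than raw mentions keeps one persistent caller from triggering a new FAQ alone.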

Language, Scale, and Multilingual Support

Global companies don’t just serve English speakers. An LLM-powered contact center can handle Spanish, Mandarin, Arabic, and Hindi within one system, aiming for comparable quality in each, without standing up a separate team or translation service for every language.

These models don’t just translate words. They adapt tone. A customer in Japan might say something indirectly, expecting the agent to read between the lines. An LLM trained on cultural speech patterns can account for that. A customer in Germany might be blunt. The system adjusts its response style accordingly.

This isn’t just convenience; it’s fairness. Customers get the same quality of service, no matter their language or location.

Predicting Problems Before They Happen

The next leap isn’t just understanding past calls; it’s predicting future ones. LLMs now analyze trends in real time. If 15 new calls in one day all mention "delayed shipping," and none of them match existing clusters, the system flags it as a potential emerging issue. It doesn’t wait for a spike. It catches the first ripple.

Even more powerful: churn prediction. By combining sentiment, intent, and historical behavior, the system can estimate the likelihood a customer will leave. If someone has called twice in two weeks about the same issue, and their sentiment dropped from neutral to angry, the system triggers a retention workflow. Maybe a manager calls them before they even hang up.

One telecom provider reduced churn by 18% in six months using this method. They didn’t wait for customers to leave. They intervened before the frustration became irreversible.

Why Generic AI Models Fail in Contact Centers

You might think: "Why not just use ChatGPT?" The answer is simple: context. A general-purpose LLM like GPT-3.5 doesn’t know what a "billing cycle adjustment" means in a utility company. It doesn’t understand internal codes like "Case Type 7B" or "Tier 2 Escalation." It doesn’t recognize that "I need this done ASAP" in a healthcare call means something very different than in a retail call.

Observe.AI tested this. They compared GPT-3.5 against contact-center-trained LLMs using real call data. The general model got the "reason for call" right only 58% of the time. The specialized model? 94%.

Why? Because the specialized models were trained on millions of real support interactions. They learned the language of the industry, not just grammar.

The Cost of Power

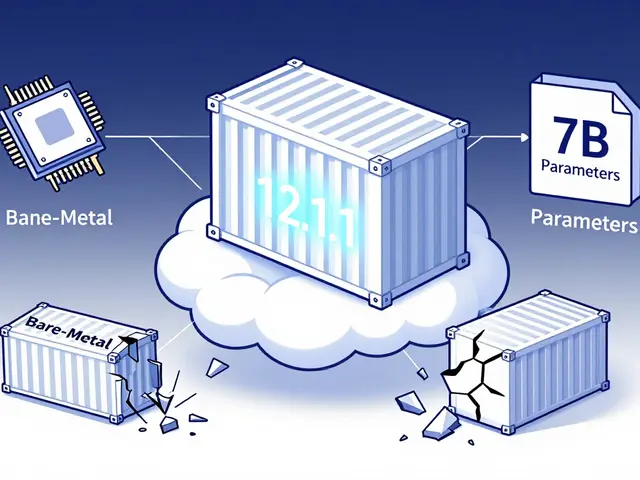

Running LLMs at scale isn’t cheap. A 30B-parameter model is accurate, but can use as much as 10x more computing power than a 7B model. For a contact center handling 100,000 calls a day, that adds up fast. The smartest companies don’t go all-in on the biggest model. They use a tiered approach:

- Use a smaller model (7B-13B) for real-time agent assist, where speed and low latency matter most.

- Use a larger model (20B-30B) for overnight batch analysis of trends and sentiment.

- Apply length penalties: if a call transcript is over 800 words, the system truncates it. Why? Long calls often include rambling, filler, or emotional outbursts that create noisy, unhelpful clusters.
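The tiered approach above could look something like this sketch. The 800-word cut-off and the two size bands come from the list. The tier names, task names, and function names are placeholders invented for illustration, and simple head truncation is only one way to apply a length limit.

```python
MAX_WORDS = 800  # transcript length limit taken from the list above

# Hypothetical task names mapped to hypothetical model tiers.
REAL_TIME_TASKS = {"agent_assist"}
BATCH_TASKS = {"trend_analysis", "sentiment_batch"}


def truncate_transcript(text: str, max_words: int = MAX_WORDS) -> str:
    """Return the transcript unchanged if it fits, otherwise keep the first `max_words` words."""
    words = text.split()
    if len(words) <= max_words:
        return text
    return " ".join(words[:max_words])


def pick_model_tier(task: str) -> str:
    """Route latency-sensitive work to the small tier and overnight work to the large tier."""
    if task in REAL_TIME_TASKS:
        return "small (7B-13B)"
    if task in BATCH_TASKS:
        return "large (20B-30B)"
    raise ValueError("unknown task: " + task)


long_call = "word " * 1000
print(len(truncate_transcript(long_call).split()))
print(pick_model_tier("agent_assist"))
```

Cutting from the end is the simplest policy, but it assumes the call driver shows up early in the conversation. A team could just as reasonably keep the opening and closing turns instead.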

What’s Next?

The future of contact centers isn’t just smarter bots. It’s proactive, intelligent ecosystems. Imagine this:

- A customer logs into their account and sees a message: "We noticed you’ve had two recent issues with your bill. Here’s a one-click fix, and we’ve waived the late fee as a thank you."

- That message wasn’t written by a human. It was generated by an LLM that connected data from billing, call logs, and customer history.

LLMs are turning contact centers from cost centers into strategic intelligence hubs. Companies that use them aren’t just fixing problems; they’re anticipating them, improving products, and building loyalty before customers even realize they’re at risk.

The voice of the customer isn’t just heard anymore. It’s understood. And that changes everything.

How do LLMs detect sentiment better than older systems?

Older systems used keyword lists and simple scoring, like counting negative words. LLMs analyze context, tone, and emotional shifts across entire conversations. They can tell if a customer is being sarcastic, hesitant, or genuinely relieved, even if no "angry" word is used.
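As a rough illustration of tracking emotional shifts rather than a single score, the sketch below assumes a model has already assigned each customer turn a sentiment value between -1 (very negative) and 1 (very positive). The function name, the labels, and the 0.2 tolerance are assumptions, and comparing the two halves of a call is a deliberately simple stand-in for whatever a real system does.

```python
from statistics import mean
from typing import List


def sentiment_trajectory(scores: List[float], tolerance: float = 0.2) -> str:
    """Compare average sentiment in the first and second half of a conversation."""
    if len(scores) < 2:
        return "insufficient_data"
    mid = len(scores) // 2
    delta = mean(scores[mid:]) - mean(scores[:mid])
    if delta > tolerance:
        return "recovering"      # started low, ended higher
    if delta < -tolerance:
        return "deteriorating"   # started higher, ended low
    return "steady"


# Made-up per-turn scores for two calls.
print(sentiment_trajectory([-0.8, -0.6, 0.1, 0.5]))
print(sentiment_trajectory([0.2, 0.1, -0.5, -0.9]))
```

Two calls with the same average sentiment can land in different categories here, which is the information a single overall score throws away.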

Can LLMs handle multiple languages in a contact center?

Yes. Modern LLMs are trained on multilingual datasets and can process, understand, and respond in over 100 languages, though quality is typically strongest in languages that are well represented in the training data. They adapt tone and cultural nuance, with the goal that a customer in Brazil gets the same quality of service as one in Germany.

Why not just use ChatGPT for contact center analytics?

General-purpose models like ChatGPT lack domain-specific knowledge. They don’t understand internal terms like "Tier 2 escalation" or "billing cycle adjustment." Contact-center-trained LLMs, on the other hand, are fine-tuned on real support data. In the Observe.AI comparison, the specialized model identified the reason for a call correctly 94% of the time, compared to 58% for GPT-3.5.

How do LLMs help reduce agent workload?

LLMs automate tasks like writing CRM summaries, suggesting responses during calls, and generating FAQs from customer transcripts. One company reduced documentation time by 65%, letting agents spend more time helping customers and less time typing.

What’s the biggest risk when using LLMs in contact centers?

The biggest risk is hallucination, where the model generates false or misleading insights. This is more likely when the model isn’t properly trained on industry-specific language. The mitigation is to use models fine-tuned on real contact center data and to validate outputs with human oversight.

Do LLMs improve customer retention?

Yes. By detecting early signs of frustration and predicting churn risk, LLMs enable proactive interventions. One telecom company reduced customer churn by 18% in six months by using LLMs to flag at-risk customers before they called to cancel.
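To make the idea of combining signals concrete, here is a hedged sketch of a churn score built from repeat contacts, sentiment drop, and whether the customer mentioned leaving. The logistic form is a common choice for this kind of score, but the weights, the bias, the 0.5 trigger threshold, and the function names are invented for illustration and are not fitted to any data.

```python
import math


def churn_risk(repeat_contacts_14d: int, sentiment_drop: float, mentioned_leaving: bool) -> float:
    """Map three signals to a 0-1 risk score with a logistic function.

    sentiment_drop is how far sentiment fell between contacts, e.g. 1.0 for
    neutral (0.0) to angry (-1.0). All weights below are illustrative only.
    """
    z = (
        -3.0
        + 1.0 * repeat_contacts_14d
        + 2.0 * sentiment_drop
        + 1.5 * (1.0 if mentioned_leaving else 0.0)
    )
    return 1.0 / (1.0 + math.exp(-z))


def should_trigger_retention(risk: float, threshold: float = 0.5) -> bool:
    return risk >= threshold


# Two calls in two weeks, sentiment fell from neutral to angry.
risk = churn_risk(repeat_contacts_14d=2, sentiment_drop=1.0, mentioned_leaving=False)
print(round(risk, 2), should_trigger_retention(risk))
```

In practice the weights would be learned from historical outcomes, and the threshold would be tuned against how many retention calls the team can actually make.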

How are emerging issues identified in real time?

LLMs use outlier detection to spot new patterns. If a cluster of calls suddenly appears with a phrase not seen before, like "app freezes after update," the system flags it as a potential new issue. This lets teams respond before the problem becomes widespread.
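A simple way to picture this flagging step, assuming each call has already been reduced to a topic label and that the set of known clusters is available. The threshold of 15 calls in a day follows the "delayed shipping" example earlier in the article. The function name and sample data are hypothetical, and exact label matching stands in for real outlier detection over embeddings.

```python
from collections import Counter
from typing import Iterable, List, Set


def emerging_issues(todays_topics: Iterable[str], known_topics: Set[str], min_calls: int = 15) -> List[str]:
    """Return topics that match no known cluster and appear at least `min_calls` times today."""
    unmatched = Counter(t for t in todays_topics if t not in known_topics)
    return sorted(topic for topic, count in unmatched.items() if count >= min_calls)


# Made-up daily volumes.
today = (
    ["delayed shipping"] * 15
    + ["billing"] * 40
    + ["app freezes after update"] * 3
)
print(emerging_issues(today, known_topics={"billing"}))
```

Here "billing" is ignored despite its volume because it is already known, and the three "app freezes after update" calls stay below the threshold until more arrive.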

What’s the role of HDBSCAN in contact center analytics?

HDBSCAN is a clustering algorithm that finds natural groupings in conversation data without requiring you to guess how many groups exist. Unlike older methods like K-means, it works well with messy, real-world data and helps uncover hidden themes in customer calls.

Can LLMs generate FAQs automatically?

Yes. By analyzing groups of similar customer questions, LLMs can extract the most common phrasing and turn them into clear, well-structured FAQs. This cuts manual work by up to 80% and keeps knowledge bases updated in real time.

Are LLM-based systems scalable for large contact centers?

Yes, but scalability depends on model size and deployment strategy. Companies use smaller models for real-time tasks and larger ones for batch analysis. Length penalties and tiered processing help reduce costs while maintaining accuracy across high-volume operations.
