Imagine typing "budgeting for a cloud transition" into a search bar and getting results for "server migration cost." In the old days of search, this wouldn't happen unless the page used the exact words you typed. But today, we are moving away from simple character matching toward something much more powerful: semantic search. This shift isn't just a minor upgrade; it's a complete transformation of how machines understand human intent.

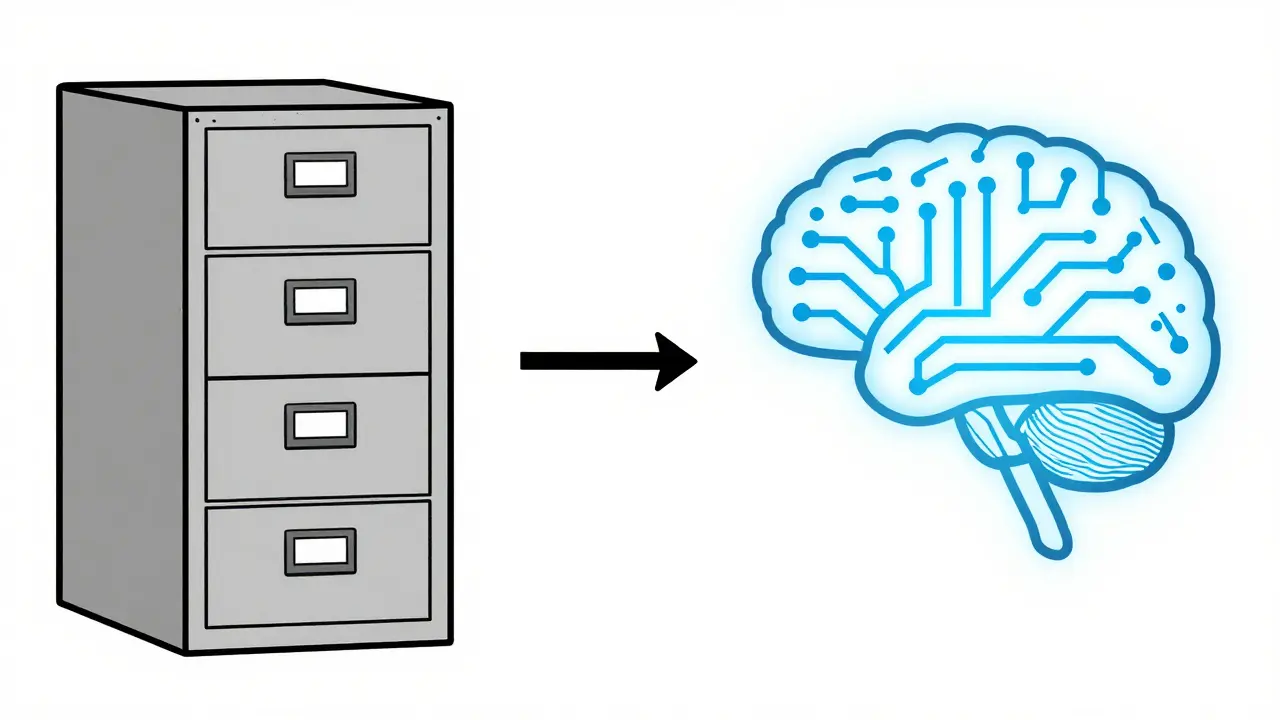

Traditional search engines are basically high-speed filing cabinets. They look for keywords. If you don't use the specific word the author used, you might miss the best answer. Large Language Models (LLMs) are changing that by acting as the "brain" behind the search. They don't just look at words; they look at meaning, context, and the conceptual relationships between ideas. By using LLMs, search systems are evolving from lookup tools into intelligent answer engines that actually get what you're asking.

The Engine Under the Hood: How LLMs Redefine Meaning

To understand how this works, we have to talk about Vector Embeddings. Think of an embedding as a mathematical map. Instead of treating a word as a string of letters, an LLM converts text into a long list of numbers (a vector) that represents its meaning in a multi-dimensional space. Words or phrases with similar meanings are placed close together on this map.
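
To make the "map" idea concrete, here is a minimal Python sketch that measures closeness with cosine similarity, a common way to compare embeddings. The four-dimensional vectors are invented for illustration; real embedding models output hundreds or thousands of dimensions. Treat the numbers as assumptions, not output from any actual model.

```python
import math

# Hypothetical 4-dimensional embeddings, invented for illustration.
# Real models output vectors with hundreds or thousands of dimensions.
EMBEDDINGS = {
    "cloud transition": [0.81, 0.52, 0.10, 0.05],
    "server migration cost": [0.78, 0.49, 0.21, 0.08],
    "banana bread recipe": [0.02, 0.11, 0.09, 0.95],
}


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


if __name__ == "__main__":
    query = EMBEDDINGS["cloud transition"]
    for phrase, vector in EMBEDDINGS.items():
        print(f"{phrase!r}: {cosine_similarity(query, vector):.3f}")
```

Phrases whose vectors point in nearly the same direction score close to 1.0. Unrelated phrases score much lower.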

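Unlike early embedding models such as word2vec, which assign each word a single fixed vector, modern LLMs are built on the Transformer architecture and trained on colossal datasets. This allows them to handle nuance. Because each word's vector is computed from its surroundings, they can tell the difference between "Apple" the fruit and "Apple" the tech company based on the words around it. They learn this during pre-training. BERT-style encoders use masked language modeling, where the model learns to predict hidden words in a sentence from the words around them. GPT-style models learn by predicting the next word instead. Either way, the model effectively teaches itself the patterns of grammar and word usage.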
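
Training data for masked language modeling is built by hiding words and keeping the originals as targets. The sketch below shows only that data-preparation step. It follows a simplified version of the widely used BERT-style recipe: select about 15% of tokens, then replace 80% of those with a `[MASK]` token, 10% with a random token, and leave 10% unchanged. The function name and the simple whitespace tokens are assumptions for illustration. The model that learns to fill in the blanks is not shown.

```python
import random

MASK = "[MASK]"


def make_mlm_example(tokens, vocab, mask_prob=0.15, rng=None):
    """Return (inputs, labels) for one masked-language-modeling example.

    Simplified BERT-style recipe: each token is selected with probability
    mask_prob. A selected token becomes [MASK] 80% of the time, a random
    vocabulary token 10% of the time, and stays unchanged 10% of the time.
    labels holds the original token at selected positions and None elsewhere,
    so the training loss only looks at the selected positions.
    """
    rng = rng or random.Random()
    inputs, labels = [], []
    for tok in tokens:
        if rng.random() < mask_prob:
            labels.append(tok)              # the model must predict this token
            roll = rng.random()
            if roll < 0.8:
                inputs.append(MASK)
            elif roll < 0.9:
                inputs.append(rng.choice(vocab))
            else:
                inputs.append(tok)
        else:
            labels.append(None)             # ignored by the loss
            inputs.append(tok)
    return inputs, labels


if __name__ == "__main__":
    tokens = "i ate an apple for lunch".split()
    inputs, labels = make_mlm_example(tokens, vocab=tokens, mask_prob=0.3,
                                      rng=random.Random(0))
    print(inputs)
    print(labels)
```

To recover a hidden "apple" in this sentence, a model has to rely on clues like "ate" and "lunch". That same sensitivity to context is what lets it separate the fruit from the company.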

When you combine these embeddings with Dense Retrieval, the search engine stops looking for exact matches. Instead, it looks for the document vectors "closest" to the query vector. This is why a query about "cloud transition" can successfully find a document about "migration costs": the model places the two concepts close together because, in a business context, they are used in much the same way.
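
At its core, dense retrieval is nearest-neighbor search over vectors. A minimal, exhaustive version in Python might look like the sketch below. The class name and toy vectors are hypothetical. The index normalizes every vector to unit length once, at insert time, so a plain dot product equals cosine similarity. Production systems replace the exhaustive loop with an approximate nearest-neighbor index, because comparing against every stored vector does not scale to billions of documents.

```python
import heapq
import math


def normalize(v):
    """Scale a vector to unit length."""
    n = math.sqrt(sum(x * x for x in v))
    return [x / n for x in v]


class BruteForceDenseIndex:
    """Exhaustive nearest-neighbor search over unit-normalized vectors."""

    def __init__(self):
        self.ids = []
        self.vectors = []

    def add(self, doc_id, vector):
        self.ids.append(doc_id)
        self.vectors.append(normalize(vector))  # normalize once, at index time

    def search(self, query_vector, k=3):
        q = normalize(query_vector)
        # Dot product of unit vectors == cosine similarity.
        scored = (
            (sum(a * b for a, b in zip(q, v)), doc_id)
            for doc_id, v in zip(self.ids, self.vectors)
        )
        return [(doc_id, score) for score, doc_id in heapq.nlargest(k, scored)]


if __name__ == "__main__":
    index = BruteForceDenseIndex()
    # Hypothetical toy vectors standing in for real document embeddings.
    index.add("migration-costs", [0.78, 0.49, 0.21, 0.08])
    index.add("cloud-security", [0.60, 0.10, 0.75, 0.05])
    index.add("banana-bread", [0.02, 0.11, 0.09, 0.95])
    print(index.search([0.81, 0.52, 0.10, 0.05], k=2))
```

Using real embeddings only changes where the vectors come from. The ranking logic stays the same.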

The Three-Stage Pipeline for Precision and Recall

Scaling semantic understanding isn't just about having a big model; it's about the workflow. Many high-performance semantic search systems use a three-stage process to balance speed and accuracy.

- Query Expansion: Users are often bad at describing exactly what they want. An LLM can take a simple query like "Oracle Cloud" and automatically expand it into a set of related concepts, such as "OCI cost comparison" or "database migration benefits." This ensures the system doesn't miss relevant documents just because the user was too brief.

- Initial Retrieval: Comparing the query against billions of vectors one by one is slow. Instead, the system performs a fast, coarse-grained search (typically an approximate nearest-neighbor lookup) to grab the top 100 or 1,000 most likely candidates.

- Re-ranking: This is where the real intelligence kicks in. A more powerful but slower model (often a cross-encoder, which reads the query and each document together) scores only those top results and re-orders them by contextual relevance. A page that mentions "cloud migration" once might survive the initial search, but the re-ranker will push a page that provides a detailed "cloud migration strategy" to the very top.
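
The three stages can be wired together as a small function. In this sketch the expander, retriever, and re-ranker are passed in as plain callables. Those interfaces and the default candidate counts are assumptions for illustration, not any particular library's API. The re-ranker scores candidates against the user's original query, and only the small candidate set ever reaches it.

```python
from typing import Callable

# Hypothetical stage interfaces for this sketch (not a specific library's API).
Expander = Callable[[str], list[str]]                # query -> extra query variants
Retriever = Callable[[str, int], list[str]]          # (query, n) -> top-n doc ids
Reranker = Callable[[str, list[str]], list[float]]   # (query, doc ids) -> scores


def three_stage_search(query: str, expand: Expander, retrieve: Retriever,
                       rerank: Reranker, n_candidates: int = 100,
                       k: int = 10) -> list[str]:
    # Stage 1: query expansion. The original query is always kept.
    queries = [query] + [q for q in expand(query) if q != query]

    # Stage 2: fast first-stage retrieval for every variant, merged in
    # first-seen order with duplicates removed.
    candidates: list[str] = []
    seen: set[str] = set()
    for q in queries:
        for doc_id in retrieve(q, n_candidates):
            if doc_id not in seen:
                seen.add(doc_id)
                candidates.append(doc_id)

    # Stage 3: slower, more accurate re-ranking, applied only to the
    # candidates and scored against the user's original query.
    scores = rerank(query, candidates)
    ranked = sorted(zip(candidates, scores), key=lambda pair: pair[1], reverse=True)
    return [doc_id for doc_id, _ in ranked[:k]]
```

Because the stages are pluggable, the cheap retriever and the expensive re-ranker can be tuned or swapped independently.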

| Feature | Keyword Search (BM25) | Semantic Search (LLM) |
|---|---|---|
| Matching Logic | Exact term match, weighted by term frequency | Conceptual meaning (vectors) |
| User Intent | Ignored (only keywords matter) | Central to the process |
| Handling Synonyms | Requires manual synonym lists | Native understanding via embeddings |
| Result Quality | High precision, low recall | Higher recall; high precision when paired with re-ranking |
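
The "Handling Synonyms" row is easy to see in code. Below is a compact Python sketch of BM25 scoring. It uses the common defaults k1 = 1.2 and b = 0.75, and the IDF variant with 1 added inside the logarithm (as in Lucene) so scores stay non-negative. The tiny corpus is made up. A query for "couch" scores zero against a document that only says "sofa", because BM25 only credits exact term matches.

```python
import math
from collections import Counter


def bm25_scores(query, docs, k1=1.2, b=0.75):
    """Score each tokenized document in `docs` against the tokenized `query`."""
    n_docs = len(docs)
    avgdl = sum(len(d) for d in docs) / n_docs
    # Document frequency: how many documents contain each term at least once.
    df = Counter(term for d in docs for term in set(d))
    scores = []
    for doc in docs:
        tf = Counter(doc)
        score = 0.0
        for term in query:
            if tf[term] == 0:
                continue  # exact term match only: no credit for synonyms
            idf = math.log(1 + (n_docs - df[term] + 0.5) / (df[term] + 0.5))
            # Term-frequency saturation (k1) and document-length normalization (b).
            denom = tf[term] + k1 * (1 - b + b * len(doc) / avgdl)
            score += idf * tf[term] * (k1 + 1) / denom
        scores.append(score)
    return scores


if __name__ == "__main__":
    docs = [
        "comfy leather sofa for small apartments".split(),
        "solid oak dining table".split(),
    ]
    print(bm25_scores(["couch"], docs))
    print(bm25_scores(["sofa"], docs))
```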

Real-World Applications Across Industries

This technology isn't just for Google. It's being woven into almost every digital experience where a search bar exists. In e-commerce, for example, if a customer searches for "something for a rainy day," a keyword search might show them raincoats. A semantic search system, however, understands the intent and might suggest umbrellas, waterproof boots, and indoor activities, recognizing the broader context of the request.

In the world of enterprise document management, semantic search is a lifesaver. Imagine a legal team searching through ten thousand contracts for "termination clauses." A semantic system can find documents that discuss "ending the agreement" or "contract expiration," even if the word "termination" never appears. This reduces the need for tedious manual tagging and categorization.

We're also seeing a rise in Direct Answer Generation. Instead of giving you a list of blue links and making you do the reading, the LLM synthesizes information from the top three most relevant documents to give you a clear, summarized answer. It's the difference between being given a map and being given a tour guide.
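
Direct answers are usually produced by handing the top-ranked documents to an LLM along with the question, an approach often called retrieval-augmented generation. The sketch below only assembles that prompt. The instruction wording, the numbered-source format, and the function name are assumptions, and the call to an actual model is left out.

```python
def build_answer_prompt(question: str, ranked_docs: list[str], max_docs: int = 3) -> str:
    """Assemble a prompt asking an LLM to answer from the top-ranked documents only."""
    # Number each source so the model can cite it and readers can check it.
    sources = "\n\n".join(
        f"[{i}] {text}" for i, text in enumerate(ranked_docs[:max_docs], start=1)
    )
    return (
        "Answer the question using only the numbered sources below. "
        "Cite sources like [1]. If the sources do not contain the answer, say so.\n\n"
        f"Sources:\n{sources}\n\n"
        f"Question: {question}\nAnswer:"
    )


if __name__ == "__main__":
    print(build_answer_prompt(
        "What does a cloud migration usually cost?",
        ["Doc about migration budgets...", "Doc about OCI pricing...",
         "Doc about downtime costs...", "Less relevant doc..."],
    ))
```

Numbered sources make it easy to ask for citations, which lets readers check the summary against the original documents.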

Overcoming the Implementation Hurdle

If it's so great, why isn't every search bar semantic? Because it's computationally expensive. Generating and storing billions of vectors requires significant memory and processing power. However, in-memory indexing and specialized vector databases have made it more feasible for mid-sized companies.
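
A quick back-of-the-envelope calculation shows why memory is the bottleneck. The sketch assumes 768-dimensional vectors (the hidden size of BERT-base-sized encoders) stored as 32-bit floats. It counts only the raw vectors, not index structures or metadata, and the function name is hypothetical.

```python
def raw_vector_bytes(num_vectors: int, dims: int = 768, bytes_per_value: int = 4) -> int:
    """Raw storage for the embedding vectors alone, with no index overhead."""
    return num_vectors * dims * bytes_per_value


if __name__ == "__main__":
    for n in (10_000_000, 1_000_000_000):
        fp32 = raw_vector_bytes(n)
        fp16 = raw_vector_bytes(n, bytes_per_value=2)  # half precision halves it
        print(f"{n:>13,} vectors: {fp32 / 1e9:,.1f} GB float32, {fp16 / 1e9:,.1f} GB float16")
```

At a billion vectors, the raw data alone is roughly 3 TB in float32. This is why lower-precision storage and purpose-built vector databases matter at scale.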

Another challenge is domain vocabulary, and this is where fine-tuning comes in. A general-purpose LLM knows a lot about the world, but it might not know your company's internal jargon. To fix this, developers take a pre-trained model and train it further on a smaller, domain-specific dataset. This teaches the model that in your company, "Project Phoenix" refers to a specific software migration and not a mythical bird.
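
The article doesn't name a training objective. One common choice for fine-tuning embedding models, assumed here, is a contrastive loss with in-batch negatives. Each domain query (say, "Project Phoenix status") is paired with a passage that answers it, and every other passage in the batch serves as a wrong answer. The sketch below computes that loss for pre-computed, unit-length vectors. The function name and temperature value are assumptions, and the encoder and optimizer are omitted.

```python
import math


def in_batch_contrastive_loss(query_vecs, passage_vecs, temperature=0.05):
    """InfoNCE-style loss: passage i is the positive for query i, and every
    other passage in the batch acts as a negative. Vectors are assumed to be
    unit length, so the dot product is cosine similarity."""
    def dot(a, b):
        return sum(x * y for x, y in zip(a, b))

    total = 0.0
    for i, q in enumerate(query_vecs):
        logits = [dot(q, p) / temperature for p in passage_vecs]
        m = max(logits)  # subtract the max for a numerically stable log-sum-exp
        log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
        total += log_sum_exp - logits[i]  # equals -log softmax(logits)[i]
    return total / len(query_vecs)


if __name__ == "__main__":
    queries = [[1.0, 0.0], [0.0, 1.0]]
    print(in_batch_contrastive_loss(queries, [[1.0, 0.0], [0.0, 1.0]]))  # matched pairs
    print(in_batch_contrastive_loss(queries, [[0.0, 1.0], [1.0, 0.0]]))  # mismatched pairs
```

Training lowers this loss by pulling each query toward its own passage and away from the others. That is how domain-specific pairings end up close together in the vector space.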

For those who can't afford a total overhaul, re-ranking is the "cheat code." It's the fastest way to inject LLM intelligence into an existing system. You keep your old keyword search for the initial pull and simply add an LLM-based re-ranker at the end to polish the results. This can substantially improve result quality without re-indexing the entire database.
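
In code, the retrofit can be as small as a wrapper around the search function you already have. Both `keyword_search` and `rerank_score` below are hypothetical stand-ins. The first represents your existing keyword engine, and the second represents an LLM-based scorer such as a cross-encoder. The existing index is only read, never rebuilt.

```python
from typing import Callable

Candidate = tuple[str, str]  # (doc_id, text)


def with_reranking(keyword_search: Callable[[str, int], list[Candidate]],
                   rerank_score: Callable[[str, str], float],
                   n_candidates: int = 50) -> Callable[..., list[str]]:
    """Wrap an existing keyword search with a re-ranking stage.

    keyword_search(query, n) -> top-n (doc_id, text) pairs from the current index.
    rerank_score(query, text) -> relevance score (e.g. from a cross-encoder).
    Both are hypothetical interfaces for this sketch.
    """
    def search(query: str, k: int = 10) -> list[str]:
        candidates = keyword_search(query, n_candidates)  # existing index, unchanged
        ranked = sorted(candidates,
                        key=lambda c: rerank_score(query, c[1]),
                        reverse=True)
        return [doc_id for doc_id, _ in ranked[:k]]
    return search
```

Since the wrapper keeps the original function's inputs and outputs, callers don't need to change, and removing the re-ranker later is a one-line rollback.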

The Future of Information Retrieval

The relationship between search algorithms and LLMs is symbiotic. LLMs make search more intuitive, and the documents retrieved by search algorithms provide the "ground truth" that anchors LLM answers to real sources and reduces hallucination. We are moving toward a world where the "search query" disappears and is replaced by a conversation.

As we integrate more Knowledge Graphs (structured networks of entities and their relationships) into the pipeline, the system will understand not only the meaning of your words but also the facts connecting the things you're asking about. Search will stop being about finding a document and start being about acquiring a specific piece of knowledge.

What is the main difference between keyword search and semantic search?

Keyword search relies on exact matches of characters or words. If you search for "couch," it won't find "sofa" unless a synonym list is manually created. Semantic search uses LLMs and vector embeddings to understand the meaning behind the words, allowing it to find "sofa" because it knows both terms refer to the same conceptual object.

How does query expansion actually work?

Query expansion uses an LLM to analyze a user's short query and generate related terms or long-tail phrases that are likely to appear in a relevant document. For example, "best laptop" might be expanded to include "top rated notebooks 2026," "laptop performance benchmarks," and "portable computer reviews," widening the net to ensure no great results are missed.
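
A minimal sketch of the mechanics is to build a prompt, send it to a model, and clean up the reply. The `complete` callable stands in for whatever LLM client you use and is hypothetical, as are the prompt wording and helper names. The parser strips list markers, drops duplicates and echoes of the original query, and caps the number of expansions.

```python
import re
from typing import Callable


def build_expansion_prompt(query: str, n: int = 3) -> str:
    # Hypothetical prompt wording; tune it for your model and domain.
    return (f"List {n} alternative search phrases for the query below, one per line, "
            f"using words a relevant document is likely to contain.\n\nQuery: {query}")


def parse_expansions(reply: str, original: str, n: int = 3) -> list[str]:
    """Clean a line-per-phrase model reply into a de-duplicated list."""
    phrases: list[str] = []
    seen = {original.lower()}  # never echo the original query back
    for line in reply.splitlines():
        # Strip list markers such as "1.", "2)", "-" or "*", then stray quotes.
        phrase = re.sub(r"^\s*(?:\d+[.)]|[-*])\s*", "", line).strip().strip('"')
        if phrase and phrase.lower() not in seen:
            seen.add(phrase.lower())
            phrases.append(phrase)
    return phrases[:n]


def expand_query(query: str, complete: Callable[[str], str], n: int = 3) -> list[str]:
    """`complete` is a hypothetical LLM call: prompt text in, reply text out."""
    reply = complete(build_expansion_prompt(query, n))
    return [query] + parse_expansions(reply, query, n)
```

Keeping the original query in the returned list means its own results are still retrieved. The expansions only widen the net.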

Why is re-ranking necessary if we already have embeddings?

Embeddings are great for speed and broad relevance, but they can be "fuzzy." A vector search might return a document that is conceptually similar but doesn't actually answer the specific question. Re-ranking uses a more computationally expensive model to perform a deeper analysis of just the top few results, making it far more likely that the best answer lands at position one.

Can I implement semantic search without replacing my entire system?

Yes. The most efficient method is to implement a re-ranking layer. You can keep your existing keyword-based retrieval system to find a candidate set of documents and then use an LLM-powered re-ranker to order those candidates. This gives you a significant boost in relevance without the need to rebuild your entire index.

What are the biggest challenges in scaling semantic search?

The primary challenges are latency and cost. Calculating vector similarity across millions of documents in real time calls for optimized vector databases, usually with approximate nearest-neighbor indexes, and often specialized hardware like GPUs. Additionally, models may require domain-specific fine-tuning to understand industry-specific terminology accurately.
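
A rough calculation shows where the latency comes from. Assume 768-dimensional float32 vectors and an exhaustive (brute-force) scan. In that case, each query must read every stored vector and perform one multiply-add per dimension per vector. The helper name is hypothetical.

```python
def brute_force_query_cost(num_vectors: int, dims: int = 768, bytes_per_value: int = 4):
    """Return (FLOPs, bytes read) for one exhaustive similarity query.

    Each dimension of each vector costs one multiply and one add (2 FLOPs),
    and every stored value has to be read once.
    """
    flops = 2 * num_vectors * dims
    bytes_read = num_vectors * dims * bytes_per_value
    return flops, bytes_read


if __name__ == "__main__":
    flops, bytes_read = brute_force_query_cost(10_000_000)
    print(f"{flops / 1e9:.1f} GFLOPs and {bytes_read / 1e9:.1f} GB read per query")
```

Multiply that by hundreds of queries per second and the numbers grow quickly. This is why production systems rely on approximate nearest-neighbor indexes, which inspect only a fraction of the vectors, and on hardware acceleration.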

Artificial Intelligence

Christina Morgan

April 27, 2026 at 20:46

It's honestly so refreshing to see this broken down so clearly. The e-commerce example really hits home because we've all been there, fighting with a search bar that just doesn't get what we're looking for. I love how the transition from simple keyword matching to actual intent is explained here.

Anuj Kumar

April 28, 2026 at 21:46

This is just a way for them to track our thoughts. First they map our words, then they map our brains. It's all a trap to control what we find.

Jack Gifford

April 28, 2026 at 23:26

That's a bit extreme, but I see where the privacy concern comes from. Still, the technical leap in vector embeddings is objectively massive. The way it handles polysemy (like the Apple example) is a game changer for accessibility and user experience.

Kathy Yip

April 29, 2026 at 01:44

Makes me wonder if we're losing the art of serendipity tho... like if the machine always gives us the 'perfect' match, do we stop finding the weird stuff we didn't know we needed? It feels like the algorithm is narrowing our world even while it claims to expand it. There is a certain beauty in the messy human way of searching and stumbling upon things that are only vaguely related but spark a new idea. Maybe the 'fuzzy' nature of old search was actually a feature, not a bug, because it let us wander. I wonder if we are trading discovery for efficiency and if that's a deal we actually want to make in the long run. It's a bit scary to think that our conceptual boundaries are being defined by a multi-dimensional map created by a corporation. The shift toward 'knowledge acquisition' instead of 'document finding' sounds efficient but also a bit clinical. Who decides what the 'ground truth' is in these knowledge graphs? If the training data is biased, the semantic meaning becomes a reinforced loop of that same bias. We might just be building a more polished mirror of our own errors. I hope there's still room for the unexpected in this new architecture.

Bridget Kutsche

April 30, 2026 at 18:56

For anyone trying to implement this on a budget, definitely look into the re-ranking 'cheat code' mentioned. It's the most pragmatic way to get those LLM benefits without spending a fortune on infrastructure right away!