When you ask an AI a question, you expect a clear, accurate answer. But what happens when someone types "whats the capitol of France" instead of "What's the capital of France?" Or when a user pads the question with hedges like "I think the answer is... but maybe you can check?" Most prompt engineers assume their carefully crafted prompts will work the same every time. They don’t. That brittleness is the problem prompt robustness addresses.

LLMs like GPT-4, Claude 3.5, and Llama-3 don’t understand language like humans do. They follow patterns. A tiny change in wording, such as swapping "respond" for "answer," adding a typo, or rearranging punctuation, can make performance drop by 30% or more. This isn’t a bug. It’s the norm. And it’s breaking real-world systems.

Why Prompt Robustness Matters More Than Accuracy

Most teams measure success by accuracy on clean test data. If your prompt gets 92% right on perfect inputs, you call it good. But in the real world, inputs are messy. People misspell. They’re rushed. They write in fragments. They copy-paste from emails with weird formatting. A healthcare chatbot that works perfectly in testing might fail 63% of the time when real users type in typos. That’s not a small issue. It’s a safety risk.

Dr. Sarah Chen from Stanford HAI found that 83% of enterprise LLM failures traced back to untested prompt variations. One company’s customer service bot kept giving wrong answers because users added "Please help!" at the start of every message. The model had never seen that phrasing during training. It didn’t know how to handle it. That’s prompt brittleness in action.

Three Proven Ways to Build Robust Prompts

There are three main approaches that actually work in practice. Each tackles different kinds of input noise.

1. Mixture of Formats (MOF)

MOF is the easiest to adopt and gives the biggest bang for your buck. Instead of using just one example format in your few-shot prompts, mix them up. One example might use bullet points. Another uses a full sentence. A third uses a question-answer pair. The idea comes from computer vision: models trained on diverse image styles generalize better. Same here.

When researchers tested MOF on Llama-2-13b across 12 datasets, it cut performance spread by 38.2% on average. That means the worst-case accuracy went up, and the best-case didn’t drop. One company using MOF in their chatbot reduced its error rate from 37.2% to 19.8%, a 47% relative reduction, after just a few days of tweaking their examples.
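
To make the two percentages above concrete, here is a minimal sketch of the arithmetic. It assumes "performance spread" means the gap between the best and worst accuracy across prompt formats; the per-format accuracies are made-up illustration values, and only the 37.2% and 19.8% error rates come from the article.

```python
def spread(accuracies):
    """Gap between the best and worst accuracy across prompt formats."""
    return max(accuracies) - min(accuracies)

def relative_reduction(before, after):
    """Fraction by which a metric shrank, relative to its starting value."""
    return (before - after) / before

# Hypothetical per-format accuracies for one task, before and after MOF.
before_mof = [0.60, 0.70, 0.80]
after_mof = [0.68, 0.74, 0.80]
spread_cut = relative_reduction(spread(before_mof), spread(after_mof))

# Error rates quoted in the article: 37.2% down to 19.8%.
error_cut = relative_reduction(37.2, 19.8)

print(f"spread reduced by {spread_cut:.0%}")
print(f"errors reduced by {error_cut:.1%}")
```

The error figure works out to roughly 46.8%, which is where the rounded 47% comes from. Note that in the toy numbers the best-case accuracy stays at 0.80 while the worst case rises, which is the pattern the paragraph describes.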

You don’t need fancy tools. Just write 3-5 different versions of your examples. Use different tones. Vary structure. Add punctuation, remove it. This trains the model to focus on meaning, not formatting.
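
As a rough illustration of the idea, the sketch below renders each few-shot example in a different style before appending the real question. The four styles and the function name `build_mixed_format_prompt` are assumptions made for this example, not part of any published MOF implementation.

```python
import random

# Hypothetical formatting styles; the only requirement is that they differ
# in structure and punctuation while carrying the same content.
FORMATTERS = [
    lambda q, a: f"Question: {q}\nAnswer: {a}",
    lambda q, a: f"- Input: {q}\n- Output: {a}",
    lambda q, a: f"{q} The answer is {a}.",
    lambda q, a: f"Q: {q.lower()}\nA: {a}",
]

def build_mixed_format_prompt(examples, query, seed=0):
    """Render each example in a different style, then append the query."""
    rng = random.Random(seed)  # seeded so the prompt is reproducible
    styles = FORMATTERS[:]
    rng.shuffle(styles)
    blocks = [styles[i % len(styles)](q, a) for i, (q, a) in enumerate(examples)]
    blocks.append(f"Question: {query}\nAnswer:")
    return "\n\n".join(blocks)

examples = [
    ("What is the capital of France?", "Paris"),
    ("What is the capital of Italy?", "Rome"),
    ("What is the capital of Spain?", "Madrid"),
]
prompt = build_mixed_format_prompt(examples, "What is the capital of Japan?")
print(prompt)
```

Fixing the seed keeps the prompt stable between runs, which matters if you want to compare accuracy before and after the change.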

2. Robustness of Prompting (RoP)

RoP is more complex but powerful for handling typos and character-level noise. It works in two stages. First, it takes your original prompt and automatically generates dozens of noisy versions: misspellings, swapped letters, extra spaces. Then it uses those to train the model to correct itself before answering.

Experiments showed RoP improved performance by 14.7% on average across arithmetic, logic, and commonsense tasks on GPT-3.5 and Llama-2 models. It’s especially good when users type "whic h is the largest planet?" or "whats the 2+2?".

But here’s the catch: RoP adds computational overhead. You need to run perturbations every time the model processes a request. That means slower responses and higher costs. Use RoP only if your users are typing quickly on mobile or if your system handles customer support where typos are common.
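
The first stage, generating noisy versions of a prompt, can be sketched in a few lines. This is only an illustration of character-level perturbation, not the RoP authors' code: the three noise functions and `noisy_variants` are hypothetical names, and the correction stage is not shown.

```python
import random

def swap_adjacent(text, rng):
    """Swap two neighbouring characters, e.g. 'planet' -> 'plaent'."""
    if len(text) < 2:
        return text
    i = rng.randrange(len(text) - 1)
    return text[:i] + text[i + 1] + text[i] + text[i + 2:]

def drop_char(text, rng):
    """Delete one character to mimic a missed keystroke."""
    if not text:
        return text
    i = rng.randrange(len(text))
    return text[:i] + text[i + 1:]

def insert_space(text, rng):
    """Insert a stray space, e.g. 'which' -> 'whic h'."""
    i = rng.randrange(len(text) + 1)
    return text[:i] + " " + text[i:]

PERTURBATIONS = [swap_adjacent, drop_char, insert_space]

def noisy_variants(prompt, n=5, seed=0):
    """Return n copies of the prompt, each with one random perturbation."""
    rng = random.Random(seed)
    return [rng.choice(PERTURBATIONS)(prompt, rng) for _ in range(n)]

for variant in noisy_variants("which is the largest planet?"):
    print(variant)
```

Each variant carries a single edit. Stacking several perturbations per variant would simulate heavier noise, at the cost of test cases that real users are less likely to produce.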

3. PromptRobust Benchmarking

Before you fix anything, you need to measure the problem. That’s where PromptRobust comes in. It’s a testing framework that checks how your prompt holds up against 8 types of attacks: synonym swaps, punctuation removal, capitalization changes, and even adding nonsense phrases like "and true is true."

Tests using PromptRobust showed GPT-4 scored 78.3% robustness across 5,000 test cases. Llama-2-70b scored 62.1%. That’s a big gap. If you’re choosing between models, this data matters. But even more important: it shows you exactly which types of noise break your prompt. Maybe your prompt fails when commas are removed. Or when "is" becomes "are." Now you can fix it.
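
The per-noise-type breakdown described above can be approximated with a small harness. The sketch below does not use the PromptRobust framework itself. It assumes your model is wrapped in a plain function that takes a prompt and returns a string, and `toy_model`, the test cases, and the three perturbations are stand-ins for illustration.

```python
def accuracy(model, cases, perturb=lambda s: s):
    """Share of cases answered correctly after applying a perturbation."""
    correct = sum(model(perturb(q)).strip().lower() == a.lower() for q, a in cases)
    return correct / len(cases)

def robustness_report(model, cases, perturbations):
    """Compare clean accuracy with accuracy under each perturbation type."""
    base = accuracy(model, cases)
    report = {}
    for name, fn in perturbations.items():
        acc = accuracy(model, cases, fn)
        drop = (base - acc) / base if base else 0.0
        report[name] = {"accuracy": acc, "relative_drop": drop}
    return base, report

# Toy stand-in for an LLM call; it is deliberately case-sensitive.
def toy_model(prompt):
    if "capital of France" in prompt:
        return "Paris"
    if "2+2" in prompt:
        return "4"
    return "unknown"

cases = [("What is the capital of France?", "Paris"), ("What is 2+2?", "4")]
perturbations = {
    "lowercase": str.lower,
    "no_punctuation": lambda s: s.replace("?", ""),
    "suffix_noise": lambda s: s + " and true is true",
}

base, report = robustness_report(toy_model, cases, perturbations)
for name, row in report.items():
    print(name, row)
```

Here the toy model survives punctuation removal and the nonsense suffix but loses half its accuracy under lowercasing, which is exactly the kind of targeted finding that tells you what to fix first.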

Word Choice Matters More Than You Think

Here’s something weird: certain words make prompts more resilient. Researchers found that prompts using words like "acting," "answering," "detection," and "provided" dropped 23.7% less in performance than those using "respond," "following," or "examine."

It’s not about meaning. It’s about frequency and context in training data. These robust words appear more often in stable, high-quality instruction datasets. So if you’re writing a prompt for a legal assistant, use "Provide the relevant clause" instead of "Respond with the clause."

It’s a small tweak. But in high-stakes environments such as healthcare, finance, and legal, it can mean the difference between a correct answer and a dangerous one.
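
If you want to check whether a keyword swap helps your own prompt, one option is to generate the swapped version automatically and run both through the same test set. The sketch below is a hypothetical helper: the swap table uses the article's "respond" to "answer" and "examine" to "detect" pairs, and nothing here guarantees the swapped prompt performs better.

```python
import re

# Swap table taken from the article's examples; extend it with your own pairs.
SWAPS = {"respond": "answer", "examine": "detect"}

def swap_keywords(prompt, swaps=SWAPS):
    """Replace whole-word matches, keeping a leading capital letter."""
    def repl(match):
        word = match.group(0)
        new = swaps[word.lower()]
        return new.capitalize() if word[0].isupper() else new

    pattern = r"\b(" + "|".join(map(re.escape, swaps)) + r")\b"
    return re.sub(pattern, repl, prompt, flags=re.IGNORECASE)

original = "Respond with the relevant clause."
variant = swap_keywords(original)
print(original)
print(variant)
```

A mechanical swap can produce awkward grammar ("examine the text for errors" does not become a natural sentence with "detect"), so read each variant before testing it.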

Unexpected Tricks That Actually Work

Some findings defy logic. Adding random, irrelevant phrases like "and true is true" or "as we all know" improved performance by 18.2% in some cases. Why? No one fully knows. One theory is that these phrases trigger attention mechanisms in a way that helps the model focus on the core task. Another is that they act like a "reset button" for the model’s internal state.

This isn’t a recommended practice. But it does show that LLM behavior is still poorly understood. Don’t rely on magic tricks. But do test weird variations. You might find something that works.

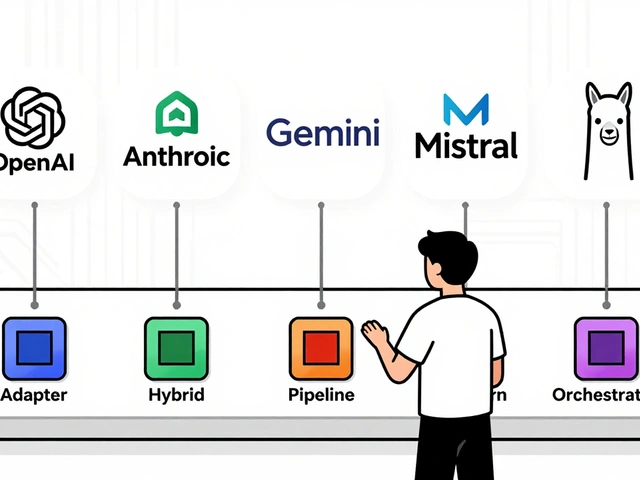

What’s New in Early 2026

The field is moving fast. In January 2026, Google released PromptAdapt, a toolkit with 23 built-in noise models to auto-test your prompts. You plug in your prompt, it simulates typos, slang, and formatting chaos, then gives you a robustness score.

Then, on February 1, 2026, Anthropic launched Claude 3.5 with built-in robustness scoring. Now, when you send a prompt through their API, it returns not just an answer, but a number: "Robustness Score: 89/100. Suggestion: Add example with question mark."

These aren’t gimmicks. They’re signals. The industry is finally treating prompt robustness like a core engineering requirement, not an afterthought.

How to Start Improving Your Prompts Today

You don’t need a PhD to make your prompts more robust. Here’s a simple checklist:

- Test with typos. Type your prompt with one letter wrong. Does it still work?

- Mix formats. Write 3 versions of your few-shot examples. One formal. One casual. One with bullet points.

- Use robust keywords. Swap "respond" for "answer." Swap "examine" for "detect."

- Run a quick test. Add "and true is true" to the end of your prompt. Does performance drop? If not, you’re already more robust.

- Track real user inputs. Log the top 10 most common variations users send. Add them to your test suite.

Don’t wait for a system to break. Test before it does.
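
The last checklist item, turning logged user inputs into a test suite, might look like the sketch below. It assumes you have raw user messages as strings and a function that calls your model. `toy_model` and the sample logs are placeholders for illustration.

```python
from collections import Counter

def top_variants(logged_inputs, k=10):
    """Return the k most frequent inputs after collapsing case and whitespace."""
    normalized = (" ".join(text.lower().split()) for text in logged_inputs)
    return [text for text, _ in Counter(normalized).most_common(k)]

def failing_inputs(model, variants, expected):
    """List the variants for which the model's reply lacks the expected answer."""
    return [v for v in variants if expected.lower() not in model(v).lower()]

# Toy stand-in for a real LLM call: it only recognises the correct spelling.
def toy_model(prompt):
    return "Paris" if "capital of france" in prompt.lower() else "I am not sure."

logs = [
    "whats the capitol of France",
    "What's the capital of France?",
    "whats the  capitol of france",
    "Please help! what is the capital of france",
]

suite = top_variants(logs)
failures = failing_inputs(toy_model, suite, "Paris")
print(failures)
```

Running this on a schedule against fresh logs keeps the suite aligned with how people actually type, instead of how the prompt author imagines they do.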

What’s Next

By 2027, prompt robustness won’t be optional. IEEE is finalizing standards that will require prompts to maintain at least 85% performance across 50+ perturbation types to be labeled "production-ready." Gartner predicts that $2.4 billion will be spent on tools for this by then.

But there’s a warning from Dr. Elena Rodriguez in Nature Machine Intelligence: over-optimizing for robustness can make models too rigid. If you train a model to handle every typo and weird phrasing, it might stop being creative. It might stop giving nuanced answers. The goal isn’t perfection. It’s resilience without sacrificing intelligence.

Build prompts that can handle chaos. But don’t make them boring.

What is prompt robustness?

Prompt robustness is the ability of a prompt to produce consistent, accurate results from a large language model even when the input contains typos, rephrasings, formatting errors, or unexpected phrasing. It’s about making prompts resilient to real-world messiness, not just clean test cases.

Why do small changes in a prompt break AI responses?

LLMs don’t understand meaning the way humans do. They rely on statistical patterns learned from training data. If a model rarely saw a prompt with a typo or an extra word, it won’t know how to interpret it. Even swapping "answer" for "respond" can change attention weights in the model, leading to very different outputs.

Which is better: MOF or RoP?

It depends on your problem. Use MOF if your users vary phrasing or formatting, as in customer service. It’s simple and cuts performance spread by over 38%. Use RoP if your users make typos or character-level errors, as with mobile input or voice-to-text. RoP gives better gains on those cases but adds computational cost. Many teams use both.

Can I test prompt robustness without expensive tools?

Yes. Start simple. Type your prompt with one typo. Add random punctuation. Change "please" to "PLS." Run it 10 times. If the answer changes drastically, your prompt isn’t robust. Use free frameworks like PromptBench or Google’s PromptAdapt (free as of Jan 2026) to automate this.
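
The "run it 10 times" check can be automated with a few lines. This is a minimal sketch: `flaky_model` is a toy stand-in that simulates sampling variation, and in practice you would replace it with a call to your own model.

```python
from collections import Counter
from itertools import cycle

def consistency(model, prompt, runs=10):
    """Return the most common answer and the share of runs that produced it."""
    answers = [model(prompt).strip().lower() for _ in range(runs)]
    top_answer, count = Counter(answers).most_common(1)[0]
    return top_answer, count / runs

# Toy stand-in for a sampled LLM: it answers wrongly on every fifth call.
_replies = cycle(["Paris", "Paris", "Paris", "Paris", "Lyon"])

def flaky_model(prompt):
    return next(_replies)

answer, rate = consistency(flaky_model, "whats the capitol of France")
print(answer, rate)
```

A rate well below 1.0 on a noisy prompt, compared with the clean version of the same prompt, is a quick signal that the prompt needs work.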

Do GPT-4 and Claude 3.5 handle noisy inputs better than older models?

Yes. GPT-4 scores 78.3% robustness on the PromptRobust benchmark, while Llama-2-70b scores 62.1%. Claude 3.5, released in February 2026, includes built-in robustness scoring and is designed to handle noisy inputs better than most predecessors. But even the best models can fail-so testing is still essential.

Is prompt robustness only for enterprise applications?

No. While enterprises face higher stakes, anyone using LLMs in real-world apps needs robust prompts. A student using AI for homework might type "hlp wth math" and get nonsense. A parent asking a parenting bot "how to get my kid to sleep" with a typo might get dangerous advice. Robustness protects everyone.

Bridget Kutsche

February 8, 2026 AT 11:16

Love this breakdown. Seriously, most teams treat prompt engineering like it's magic, but it's just engineering. Mixing formats? Genius. I've seen teams waste weeks chasing 95% accuracy on clean inputs while real users were throwing garbage at the system. MOF is low-hanging fruit: just write three example variations. No PhD needed.

Also, the word choice thing? Spot on. "Provide" over "respond" isn't just semantics-it's about what the model saw most during training. It's not about being fancy, it's about being familiar to the model.

Start simple. Test with typos. You'll be shocked how often it breaks.

Franklin Hooper

February 10, 2026 AT 03:27

MoF? Please. If you're not using RoP you're just decorating the problem. Typo handling isn't a feature, it's a baseline. The fact that we're still debating whether to train models to handle "whic h is the largest planet?" is embarrassing.

And don't get me started on "and true is true." That's not a trick, it's a symptom. We're treating LLMs like black boxes and calling it innovation. We need architectures that understand syntax, not statistical noise.

Also, why is nobody talking about the fact that GPT-4's robustness score is still 20 points below human performance? We're not building resilient systems. We're building fragile ones with bandaids.

Tonya Trottman

February 10, 2026 AT 18:32

Oh honey. You think MOF fixes anything? Let me guess-you also believe "PLS HELP" is a valid input format. I’ve seen people type "hiiii can u tell me the capitol of france??" and your "mix formats" approach just makes the model confused between 17 different ways to fail.

RoP? Sure, if you have a GPU farm and a death wish. But the real issue? You're not training on real user data. You're training on clean, sanitized, textbook-perfect prompts from 2023. That's not robustness, that's delusion.

And don't even get me started on "and true is true." That's not a hack. That's a cry for help from a model that doesn't know what a sentence is. We're not engineers. We're prompt shamans.

Krzysztof Lasocki

February 11, 2026 AT 19:36

Y’all are overcomplicating this. Just test it. Type the prompt like your drunk cousin texting at 2am. Add extra spaces. Misspell "capital." Put a period in the middle of a word. See what breaks.

And yeah, "and true is true" works? Cool. Use it. If it works, it works. Who cares why? We’re not building quantum physics, we’re building chatbots that don’t crash when someone says "hlp wth math".

Also, GPT-4 scoring 78? That’s still failing 1 in 5 times. That’s not good. That’s a liability. Let’s stop pretending we’re doing AI engineering and start doing real QA.

Victoria Kingsbury

February 12, 2026 AT 05:09

Biggest insight here: robustness isn't about accuracy. It's about consistency. A model that gives you 92% accuracy but flips answers on punctuation? That's worse than 70% accuracy because you can't trust it.

And yes, word choice matters. "Detect" over "examine" isn't just semantics-it's about frequency in high-quality instruction datasets. The model doesn't understand intent. It understands patterns. So match the pattern.

Also, PromptRobust is a game-changer. If you're not benchmarking against 8 types of noise, you're flying blind. And don't forget: real users don't use proper punctuation. Ever. So your test suite better reflect that.

Henry Kelley

February 13, 2026 AT 15:48

Guys. I just tried typing "whats the capitol of france" into my prompt and it worked fine. Maybe the model’s better than we think? Or maybe we’re over-engineering because we’re scared of messy inputs?

I mean, if it works with typos and weird capitalization, why do we need RoP? Why not just train on more real data? Why not just log user inputs and retrain weekly?

Also, I think "and true is true" might be working because it resets the model’s attention. Like a mental reboot. Not sure. But it works. So why not use it?

VIRENDER KAUL

February 15, 2026 AT 06:23

Let us be unequivocally clear: the entire field of prompt engineering is a house of cards built on statistical coincidence. You speak of "robustness" as if it is a measurable property. It is not. It is an emergent artifact of training data distribution and attention mechanism artifacts.

MOF? A heuristic bandage. RoP? A computational tax on a fundamentally flawed paradigm. PromptRobust? A marketing tool for consultants. You are not engineering systems. You are tuning stochastic parrots.

The fact that GPT-4 scores 78.3% on a benchmark you invented reveals nothing except your desperation to quantify the unquantifiable. Real systems do not operate on benchmarks. They operate on user behavior. And user behavior is chaotic, irrational, and irreducible to metrics.

Do not mistake pattern recognition for understanding. Do not mistake robustness for intelligence. The model does not know France. It knows the sequence "capital of France" appears after "What is" in 87% of your training corpus.

And if you are using "and true is true" as a workaround, you have admitted defeat.