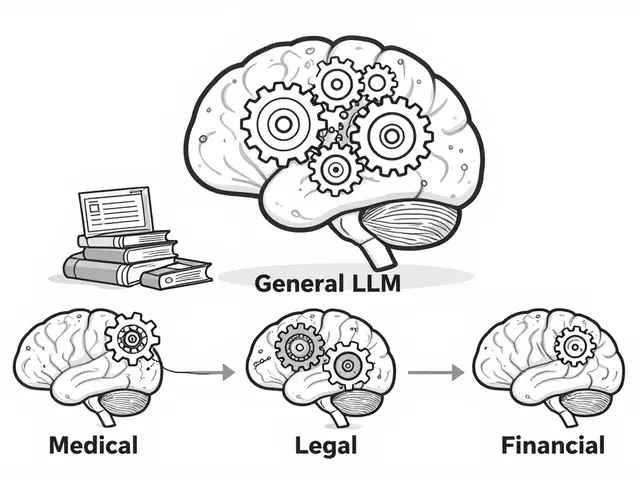

Tag: 4-bit quantization

Learn how calibration and outlier handling keep LLMs accurate when quantized to 4 bits. Discover which techniques work best for speed, memory, and reliability in real-world deployments.
