Picking the right model for a project often feels like a guessing game. One day a new "state-of-the-art" architecture drops, and the next, a smaller, faster variant claims to do the same job for a fraction of the cost. But when you're dealing with real-world workloads, such as a customer support bot handling thousands of tickets or a legal tool scanning 300-page contracts, the gap between a marketing slide and actual performance is huge. If you choose a model that's too heavy, your latency spikes and your budget disappears. If you go too light, your accuracy plummets.

The reality is that no single Transformer is a silver bullet. Since the original "Attention Is All You Need" paper, we've seen a massive split in how these models are built. Some are designed to be bidirectional powerhouses for understanding text, while others are autoregressive giants built for generation. To make a smart choice, you need to look past the parameter count and focus on how these variants behave under actual pressure.

Quick Guide: Matching Architectures to Your Goals

Before diving into the technical benchmarks, here is a high-level cheat sheet for decision-making based on common project requirements:

| Primary Goal | Recommended Variant | Key Strength | Trade-off |
|---|---|---|---|
| Max Accuracy & Reasoning | GPT-4 / Claude 4 | Complex logic, coding | High cost, proprietary |
| Speed & Multimodal | Gemini 2.5 Flash | Rapid inference, vision | Lower reasoning depth |
| Full Control / Local | RoBERTa / Nemotron-4 | Weight access, privacy | Limited generative power |
| Ultra-Long Documents | GPT-4 Turbo / Transformer XL | 128k-token window (GPT-4 Turbo); segment recurrence (Transformer XL) | High VRAM usage |

The Heavy Hitters: Generative Giants

When people talk about LLMs today, they usually mean the autoregressive models like the GPT series. These are the gold standard for tasks that require creating content or solving complex problems. For instance, GPT-4 Turbo is a beast for long-form context, supporting 128,000 tokens. To put that in perspective, you can feed it roughly 300 pages of text in one go. This makes it an obvious choice for legal document synthesis or deep research.
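The "128,000 tokens is roughly 300 pages" figure is easy to sanity-check. The sketch below is a back-of-the-envelope estimate, not a tokenizer: the 0.75 words-per-token ratio and the 320 words-per-page density are assumptions chosen to match the article's figure, and the function names are made up for illustration.

```python
# Rough check of whether a document fits in a context window.
# ASSUMPTIONS (not from the article): ~320 words per page and ~0.75 words
# per token for English text. Real tokenizers vary by language and content.

WORDS_PER_PAGE = 320      # assumed page density
WORDS_PER_TOKEN = 0.75    # assumed rule of thumb for English


def estimate_tokens(pages: int) -> int:
    """Estimate the token count for a document of `pages` pages."""
    return round(pages * WORDS_PER_PAGE / WORDS_PER_TOKEN)


def fits_in_context(pages: int, context_window: int = 128_000,
                    reserved_for_output: int = 0) -> bool:
    """True if the estimated prompt fits while leaving room for the reply."""
    return estimate_tokens(pages) <= context_window - reserved_for_output


print(estimate_tokens(300))                              # 128000
print(fits_in_context(300))                              # True
print(fits_in_context(300, reserved_for_output=4_000))   # False
```

Under these assumptions a 300-page document fills the window completely, so once you reserve space for the model's answer you are already over budget. Treat "300 pages" as a ceiling, not a comfortable working size.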

But there is a real-world cost to this power. One company migrated their Zendesk support bot from GPT-3.5 to GPT-4 and saw their Customer Satisfaction (CSAT) scores jump by 12 percentage points. The catch? Their operational costs quadrupled. This is the classic "performance vs. price" wall. If your application doesn't actually need high-level reasoning (say, it just needs to categorize emails), using a model like this is an expensive mistake.
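To see what a "4x cost for 12 CSAT points" trade looks like on a budget sheet, here is a minimal sketch. The ticket volume, tokens per ticket, and per-million-token prices are placeholder values, not real price-list figures; only the 4x ratio and the 12-point gain come from the example above.

```python
# Toy cost model for a support bot. All volumes and prices are HYPOTHETICAL
# placeholders; plug in your own traffic numbers and your provider's pricing.

def monthly_cost(tickets: int, tokens_per_ticket: int,
                 usd_per_million_tokens: float) -> float:
    """Monthly spend in USD for a given ticket volume and token price."""
    return tickets * tokens_per_ticket / 1_000_000 * usd_per_million_tokens


def cost_per_csat_point(old_cost: float, new_cost: float,
                        csat_gain_points: float) -> float:
    """Extra monthly spend paid for each percentage point of CSAT gained."""
    return (new_cost - old_cost) / csat_gain_points


small = monthly_cost(10_000, 1_500, usd_per_million_tokens=2.0)  # placeholder price
large = monthly_cost(10_000, 1_500, usd_per_million_tokens=8.0)  # 4x the price

print(small, large)                            # 30.0 120.0
print(cost_per_csat_point(small, large, 12))   # 7.5
```

Whether the extra spend per CSAT point is worth it is a business question, but putting a number on it makes the "performance vs. price" wall something you can actually argue about.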

On the other side of the fence, we have Gemini 2.5 Flash. This variant is built for the "fast and light" lane. It balances multimodal capabilities (handling text and images simultaneously) with a massive context window, making it a better fit for apps where users expect instant responses and the budget is tighter.

The Precision Tools: The BERT Family

Not every workload needs a model that can write a poem. Many businesses just need to know if a sentence is positive or negative, or which category a piece of text belongs to. This is where the BERT (Bidirectional Encoder Representations from Transformers) family shines. Unlike GPT, which reads text from left to right, BERT looks at the whole sentence in both directions at once.
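The "both directions at once" versus "left to right" distinction comes down to the attention mask. The sketch below builds the two masks as plain Python lists for illustration. It is not how any particular library stores them, and the function names are made up for this example.

```python
# Attention masks as nested lists: mask[i][j] is True when token i
# is allowed to attend to token j.

def bidirectional_mask(n: int) -> list[list[bool]]:
    """BERT-style: every token may attend to every other token."""
    return [[True] * n for _ in range(n)]


def causal_mask(n: int) -> list[list[bool]]:
    """GPT-style: token i may attend only to positions 0..i."""
    return [[j <= i for j in range(n)] for i in range(n)]


def show(mask: list[list[bool]]) -> str:
    """Render a mask with 'x' for allowed and '.' for blocked."""
    return "\n".join("".join("x" if v else "." for v in row) for row in mask)


print(show(causal_mask(4)))
# x...
# xx..
# xxx.
# xxxx
```

The full square of `x` marks in the bidirectional case is what lets an encoder use the words after a token to interpret it. The triangular mask is what makes next-token generation possible.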

If you need something robust, RoBERTa is often the better choice. A RoBERTa-Large variant (355M parameters) is far smaller than today's generative LLMs, so it scores well on speed and price efficiency for classification-style tasks. Then you have DistilBERT, which is basically a "shrunken" version of BERT. It manages to keep about 97% of BERT's language-understanding performance while being 40% smaller. If you're deploying a model to an edge device or a mobile app where memory is scarce, DistilBERT is your best bet.
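Parameter counts translate fairly directly into memory for the weights. The sketch below is a rough estimate only: it ignores activations, optimizer state, and framework overhead, and the 110M figure for BERT-base is brought in from outside this article.

```python
# Approximate memory needed just to hold model weights.
# Ignores activations, KV caches, and runtime overhead.

def weight_memory_gb(num_params: float, bytes_per_param: int = 4) -> float:
    """Weights-only footprint in GB (4 bytes = fp32, 2 bytes = fp16)."""
    return num_params * bytes_per_param / 1e9


ROBERTA_LARGE = 355e6                      # from the text above
BERT_BASE = 110e6                          # assumption: commonly cited size
DISTILBERT = BERT_BASE * (1 - 0.40)        # "40% smaller"

print(round(weight_memory_gb(ROBERTA_LARGE), 2))     # 1.42
print(round(weight_memory_gb(ROBERTA_LARGE, 2), 2))  # 0.71
print(round(weight_memory_gb(DISTILBERT), 3))        # 0.264
```

These footprints fit comfortably on a single consumer GPU, which is the practical reason this family is attractive for edge and mobile deployments.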

For those who want a middle ground, T5 (Text-to-Text Transfer Transformer) is interesting because it treats every task as a text-to-text problem. Whether it's translation or summarization, T5 provides a flexible framework that often outperforms basic BERT models in versatility while staying more manageable than a full-scale GPT model.
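"Every task is text-to-text" means the task itself is spelled out in the input string. The helper below is a hypothetical illustration of that idea using task prefixes in the style of the original T5 setup. It only formats strings and does not load or call a model.

```python
# Text-to-text framing: the task is encoded as a prefix on the input string,
# and the model's answer is always just more text.
# `to_text_to_text` and the dictionary keys are illustrative names.

TASK_PREFIXES = {
    "translate_en_de": "translate English to German: ",
    "summarize": "summarize: ",
    "sentiment": "sst2 sentence: ",
}


def to_text_to_text(task: str, text: str) -> str:
    """Turn a (task, input) pair into a single prompt string."""
    return TASK_PREFIXES[task] + text


print(to_text_to_text("translate_en_de", "The contract is valid."))
# translate English to German: The contract is valid.
```

Because inputs and outputs are both plain strings, one model and one training loop can cover translation, summarization, and classification. That is where the versatility comes from.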

Handling the "Long Haul": Transformer XL and Context Limits

Standard transformers have a hard ceiling on how much text they can "remember" at once, usually around 512 tokens for older BERT variants. This is a nightmare for anyone working with DNA sequences, long-form music generation, or massive technical manuals. Transformer XL solves this by using a recurrence mechanism. Instead of starting from scratch for every segment, it caches the hidden states of the previous segment and lets the current segment attend to them.
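The recurrence idea can be sketched without any tensors. In the toy version below, raw tokens stand in for the cached hidden states, and `segment_contexts` is a made-up name. A real Transformer XL caches per-layer hidden states (without backpropagating through them) and uses relative positional encodings, none of which is modeled here.

```python
# Segment-level recurrence, reduced to bookkeeping: each segment sees
# itself plus a bounded memory carried over from earlier segments.

def segment_contexts(tokens: list, segment_len: int, mem_len: int):
    """Yield (memory, segment) pairs in processing order."""
    memory: list = []
    for start in range(0, len(tokens), segment_len):
        segment = tokens[start:start + segment_len]
        yield list(memory), segment
        # Keep only the most recent `mem_len` items for the next segment.
        memory = (memory + segment)[-mem_len:] if mem_len > 0 else []


for memory, segment in segment_contexts(list(range(10)), segment_len=4, mem_len=4):
    print(memory, segment)
# [] [0, 1, 2, 3]
# [0, 1, 2, 3] [4, 5, 6, 7]
# [4, 5, 6, 7] [8, 9]
```

With `mem_len=0` every segment starts from an empty memory, which is the "starting from scratch" behavior the recurrence is meant to avoid.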

In benchmarks, a 1.5 billion parameter Transformer XL variant can push context windows beyond 3,000 tokens, a six-fold increase over the baseline. However, it's not a "plug-and-play" solution. To get this to work efficiently, you often need custom CUDA kernels, and you won't find nearly as much community support on forums as you would for a Llama or GPT model. It's a specialist tool for specialist problems.

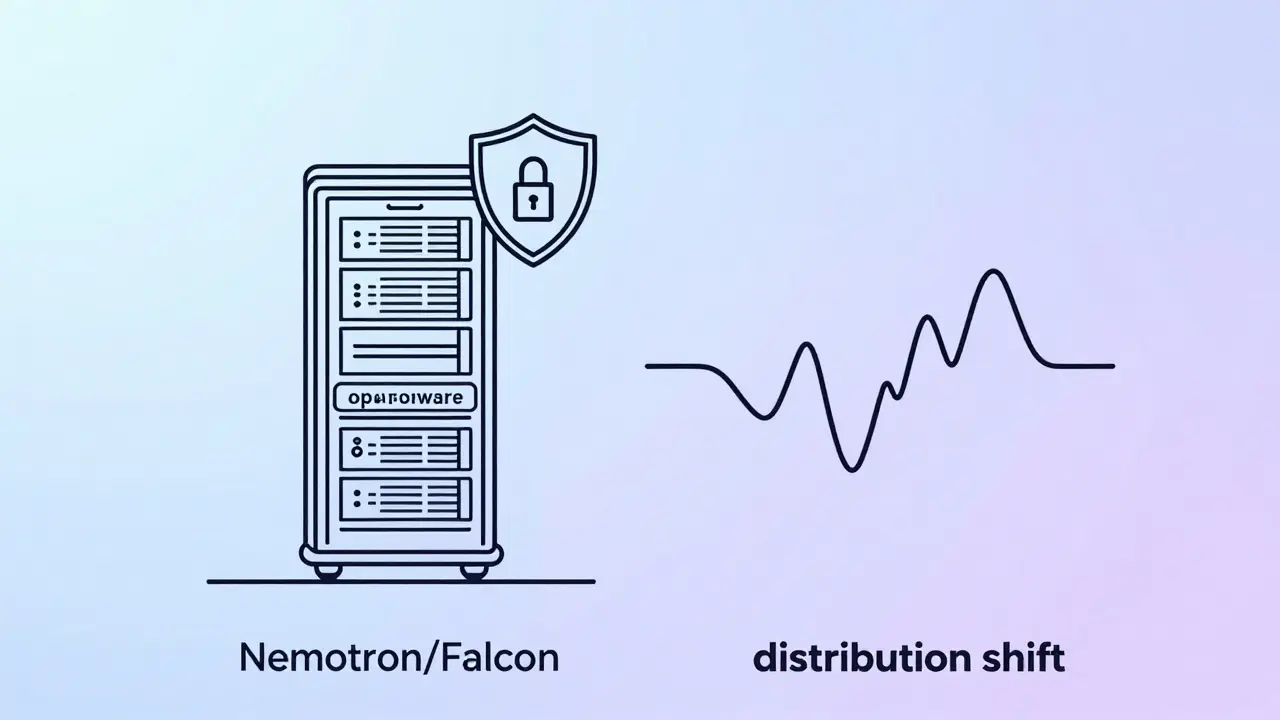

The Open-Source Shift: Nemotron and Falcon

The tide is shifting toward open-source (or open-weight) models because enterprises hate being locked into a proprietary API. Nvidia's Nemotron family is a prime example. It spans sizes from the 340-billion-parameter Nemotron-4 down to mini models designed for a single GPU, and Nvidia's Llama-Nemotron releases build on Llama 3 foundations. This allows a company to run a high-performance LLM entirely on its own hardware, so its data never leaves the building.

Similarly, the Falcon series from the Technology Innovation Institute provides a strong alternative. Falcon 2 brings multimodal capabilities, Falcon 3 focuses on smaller models that fit modest hardware, and the family as a whole reaches up to Falcon 180B, all of which can be deployed via Hugging Face or AWS. When you benchmark these against proprietary systems, the gap is closing. For many tasks, a fine-tuned Nemotron-4 can perform as well as a closed-source model while giving you total transparency over the weights.

The Distribution Trap: Where Transformers Fail

One of the most dangerous assumptions in AI is that a transformer will always beat a traditional model. In the real world, data is messy and often shifts. When we test transformers against simple baselines like Lasso regression or Multilayer Perceptrons (MLP) in "low-data regimes," we see some surprising results.

In a perfect scenario where data is well-correlated, a transformer might score a 0.693 against an MLP's 0.435. But when a "cross-sectional shift" (CS-Shift) occurs, meaning the data in production looks different from the training data, transformer performance can tank, sometimes dropping to 0.118. Meanwhile, a simple MLP often stays stable. This tells us that while transformer variants are incredibly powerful, they can be fragile. If your data distribution is volatile, you might actually be better off with a simpler, more stable model such as an MLP or Lasso regression.
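The size of that drop is worth computing explicitly. The sketch below uses the scores quoted above. The 20% tolerance and the `is_fragile` helper are arbitrary illustrative choices, and the comparison assumes the MLP holds its 0.435 score under shift, which the article only describes as "often stays stable".

```python
# Quantify how much of a model's score survives a distribution shift.

def relative_drop(in_dist: float, shifted: float) -> float:
    """Fraction of the in-distribution score lost under shift."""
    return (in_dist - shifted) / in_dist


def is_fragile(in_dist: float, shifted: float, tolerance: float = 0.20) -> bool:
    """Flag a model that loses more than `tolerance` of its score (arbitrary cutoff)."""
    return relative_drop(in_dist, shifted) > tolerance


transformer_drop = relative_drop(0.693, 0.118)
print(round(transformer_drop, 2))    # 0.83
print(is_fragile(0.693, 0.118))      # True
print(is_fragile(0.435, 0.435))      # False (assumes the MLP score is unchanged)
```

Losing roughly 83% of the score puts the shifted transformer (0.118) well below the simpler baseline (0.435), which is why it pays to evaluate on a deliberately shifted hold-out set before committing to the bigger model.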

What's Next? Moving Beyond the Transformer

We are starting to hit the limits of the standard attention mechanism. The computational cost of processing long sequences grows quadratically, meaning doubling the text length quadruples the work. This has led to the rise of Mamba and other state-space models. These alternatives aim to provide the same reasoning capabilities but with linear scaling, making them far more efficient for massive datasets.
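The quadratic-versus-linear difference is easy to put numbers on. This sketch only counts token-pair comparisons for full self-attention against one step per token for a linear-time model. It says nothing about constant factors, memory bandwidth, or real wall-clock speed.

```python
# Count the work implied by each scaling law for a sequence of n tokens.

def attention_pairs(n: int) -> int:
    """Full self-attention compares every token with every token: n * n."""
    return n * n


def linear_steps(n: int) -> int:
    """A linear-time sequence model does a constant amount of work per token."""
    return n


for n in (1_000, 2_000, 128_000):
    print(n, attention_pairs(n), linear_steps(n))
# 1000 1000000 1000
# 2000 4000000 2000
# 128000 16384000000 128000
```

Doubling from 1,000 to 2,000 tokens quadruples the pair count, and at 128,000 tokens full attention implies over 16 billion pairwise scores per layer and head.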

We're also seeing more "sparse attention" mechanisms. Instead of every token looking at every other token, the model only focuses on the most relevant ones. This dynamic sparsification allows for faster inference without a huge hit to accuracy. As we move through 2026, the goal isn't just making models bigger, but making them smarter about how they use their compute.
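One simple way to picture "only focus on the most relevant tokens" is top-k attention for a single query. The sketch below is an illustration of the idea, not any specific published mechanism, and `topk_sparse_attention` is a made-up name. It also still receives every score as input, whereas production sparse-attention kernels get their speedup by avoiding much of that computation in the first place.

```python
import math

# Top-k attention for ONE query: keep the k highest-scoring keys,
# softmax over just those, and mix the corresponding value vectors.

def topk_sparse_attention(scores: list[float], values: list[list[float]],
                          k: int) -> list[float]:
    """Return the attention output using only the k largest scores."""
    top = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]
    peak = max(scores[i] for i in top)                 # for numerical stability
    weights = {i: math.exp(scores[i] - peak) for i in top}
    total = sum(weights.values())
    dim = len(values[0])
    return [sum(weights[i] / total * values[i][d] for i in top) for d in range(dim)]


scores = [5.0, 0.0, 5.0, -3.0]
values = [[1.0, 0.0], [0.0, 1.0], [3.0, 0.0], [9.0, 9.0]]
print(topk_sparse_attention(scores, values, k=2))   # [2.0, 0.0]
```

With `k=2` the two low-scoring tokens contribute nothing at all. Setting `k` to the sequence length recovers ordinary dense softmax attention.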

Which transformer variant is best for a budget-conscious startup?

If you need generative capabilities and fast response times, Gemini 2.5 Flash is currently one of the most cost-effective options. If you are doing text classification or sentiment analysis, look at DistilBERT or a small Nemotron-4 variant that you can host on a single GPU to avoid per-token API costs.

How do I handle documents longer than 100 pages?

You need a model with a large context window. GPT-4 Turbo supports 128,000 tokens, which covers roughly 300 pages of text. For specialized sequential data like DNA or music, Transformer XL is a stronger architectural choice due to its recurrence mechanism.

Are open-source models actually as good as GPT-4?

In many general-purpose benchmarks, the gap is very small. Models like Nemotron-4 (340B) and Falcon 180B are highly competitive. While proprietary models often have a slight edge in complex reasoning, open-source variants win on privacy, transparency, and the ability to be fine-tuned on your specific private data.

What is the main difference between BERT and GPT?

BERT is bidirectional, meaning it reads the whole sequence at once to understand context, which is perfect for analysis and classification. GPT is autoregressive, meaning it predicts the next token in a sequence, which is perfect for generating text and conversation.

Why would I use an MLP over a Transformer?

Stability and efficiency. In scenarios with very limited data or where the data distribution shifts frequently (CS-Shift), transformers can be unstable. A Multilayer Perceptron (MLP) or Lasso regression is often more robust and significantly faster for simple numerical or linear predictions.

Next Steps for Deployment

If you're currently deciding on a model, start by defining your "failure state." Is a slow response worse than a slightly inaccurate one? Is a data leak a business-ending event? If privacy is non-negotiable, start with Nemotron-4 or RoBERTa. If you need the absolute highest intelligence and budget isn't the primary concern, go with Claude 4 or GPT-4. For everything else, run a small A/B test using a distilled model like DistilBERT to see if you can get 90% of the value for 10% of the cost.
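The decision rules in this section can be written down as a tiny function. This is only a restatement of the advice above in code form, with made-up names and three yes/no inputs. A real selection process would weigh latency targets, data volume, and measured accuracy too.

```python
# The "define your failure state" advice as a first-pass rule of thumb.
# `suggest_starting_point` and its flags are illustrative, not a real tool.

def suggest_starting_point(privacy_critical: bool,
                           needs_top_reasoning: bool,
                           budget_sensitive: bool) -> str:
    """Map three yes/no answers to the article's suggested starting point."""
    if privacy_critical:
        return "self-hosted: Nemotron-4 or RoBERTa"
    if needs_top_reasoning and not budget_sensitive:
        return "hosted frontier model: Claude 4 or GPT-4"
    return "A/B test a distilled model such as DistilBERT first"


print(suggest_starting_point(privacy_critical=False,
                             needs_top_reasoning=False,
                             budget_sensitive=True))
# A/B test a distilled model such as DistilBERT first
```

Privacy is checked first on purpose: if a data leak is a business-ending event, no accuracy gain from a hosted API outweighs it.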

Artificial Intelligence


Bridget Kutsche

April 4, 2026 AT 15:04

This is such a great breakdown! I've been seeing a lot of people struggle with the cost-benefit analysis of GPT-4 versus smaller models, and this matrix really simplifies things.

For anyone starting out, I'd suggest trying the DistilBERT route first if you're doing classification. It's honestly a lifesaver for your cloud bill.

Jack Gifford

April 4, 2026 AT 16:48

Spot on. Most people just chase the highest benchmark score without thinking about the actual latency for the end user. It's all about the trade-offs!

Nathan Pena

April 5, 2026 AT 17:33

The mention of the "distribution trap" is the only part of this entire piece that borders on being useful. Most "developers" today simply wrap an API and call it an AI solution without understanding the basic linear algebra or why a simple MLP would annihilate their fragile transformer setup the moment the data shifts by a fraction. It is truly exhausting to witness the industry gravitate toward computationally expensive overkill while ignoring the fundamental stability of simpler architectures. One would think a basic understanding of variance and covariance would be prerequisite for this field, but apparently, that is too much to ask of the current crop of prompt engineers.

Kathy Yip

April 7, 2026 AT 11:24

i wonder if the shift toward mamba is just another cycle of hype or if it actually solves the deep philosophical problem of how a machine "remembers" context without just burning through vram. it feels like we are just optimizing the plumbing rather than the actual intelligence... but maybe that's just me being too deep into the weeds lol.

Sarah Meadows

April 9, 2026 AT 02:21

If you're not using Nemotron or Falcon, you're basically handing your sovereign data over to foreign interests on a silver platter. We need total domestic control over the weights and the hardware stack to ensure national security and maintain our edge in the global AI arms race. Proprietary APIs are just Trojan horses for data exfiltration and dependency.

Mike Marciniak

April 9, 2026 AT 15:02

The "privacy" they mention with open-source is a joke. Those weights are probably backdoored from the start so the creators can see exactly what you're running on your local hardware. Once you download the model, they've already won.

VIRENDER KAUL

April 10, 2026 AT 16:22

The analysis provided regarding CS-Shift is rudimentary at best, and the conclusion that an MLP is superior in volatile regimes is a gross oversimplification of stochastic gradient descent dynamics in high-dimensional space. You lack the rigor to properly define the boundary conditions for such a claim.