When generative AI tools like ChatGPT, Gemini, and Claude exploded into classrooms, labs, and newsrooms in 2023, no one asked if they should be used - they just were. But now, in 2026, the question isn’t whether AI helps, but how it’s used. And that’s where ethics, community input, and real transparency become non-negotiable.

Why Ethics Can’t Be an Afterthought

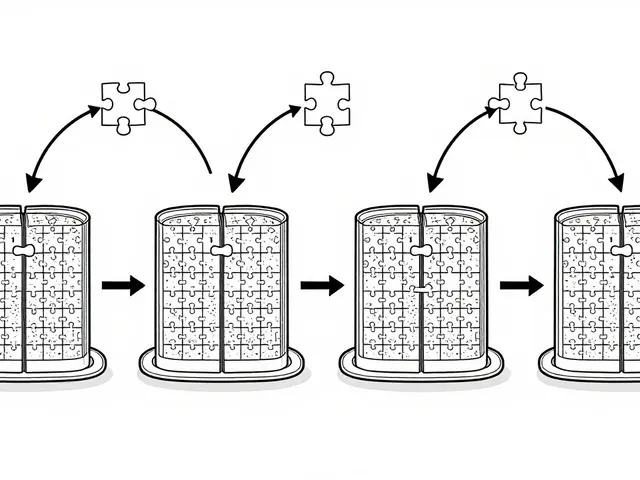

Generative AI doesn’t just write essays or draft emails. It learns from everything - books, research papers, social media, legal documents. That means it inherits biases, inaccuracies, and even harmful stereotypes. A 2025 study by the Alan Turing Institute found that 61% of institutional AI ethics policies don’t measure whether their own guidelines actually reduce harm. That’s not oversight - it’s negligence.

Take a simple example: a student uses AI to write a history paper. The tool generates a paragraph about colonialism based on outdated textbooks. The student submits it. The professor grades it. No one questions the source. That’s not innovation - it’s passive replication of error. Ethics isn’t about stopping AI. It’s about making sure humans stay in control of what gets created, shared, and believed.

Transparency Isn’t Just a Word - It’s a Process

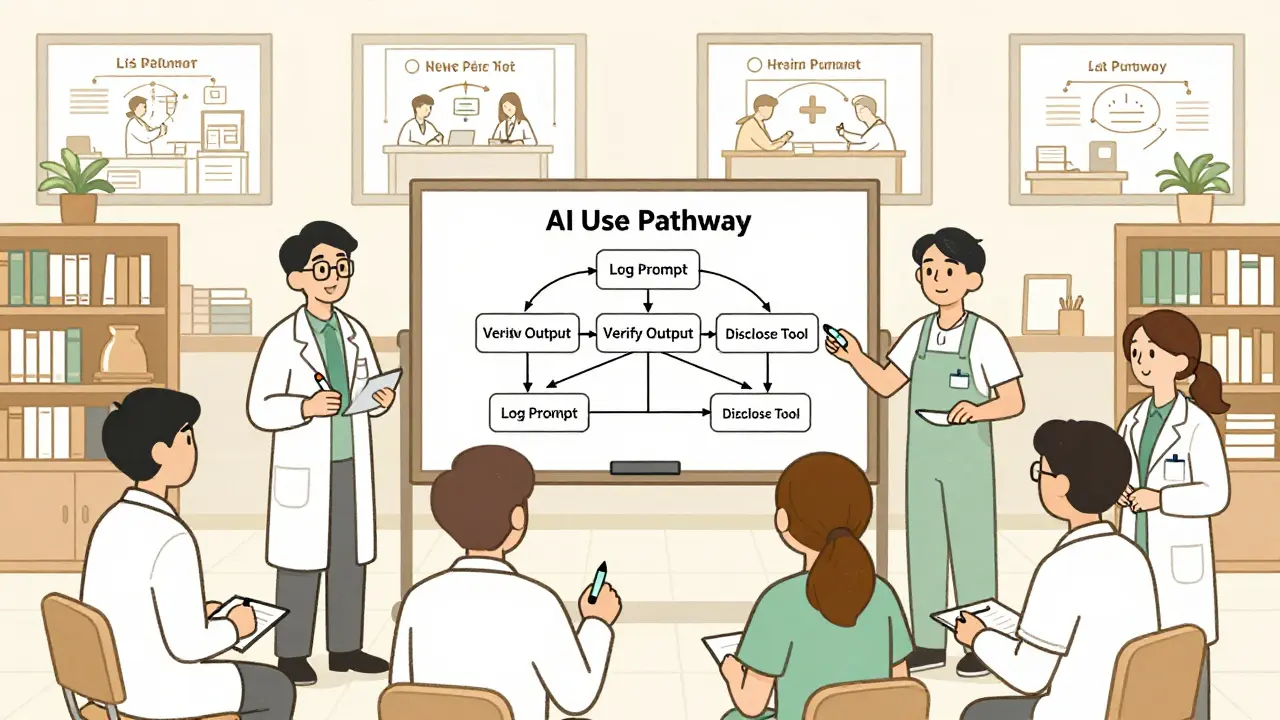

Many universities say they want "transparency." But what does that mean in practice? Harvard’s January 2024 policy doesn’t just say "disclose AI use." It requires researchers to log every prompt, tool version, and output used in a project. That’s not paperwork - it’s accountability. Columbia University’s March 2024 policy demands the same, adding that AI-generated content must be verifiable. If you can’t trace how an AI reached a conclusion, you can’t trust it. The European Commission’s 2024 framework for research is even clearer: AI-generated data must be reproducible. If another scientist can’t run the same prompt and get the same result, the finding doesn’t count. That’s science. Not magic. Not convenience.

And it’s not just academia. The U.S. National Institutes of Health made AI disclosure mandatory in grant applications starting September 25, 2025. Researchers now have to say: "Which tool did I use? What did I ask it? Did I edit the output?" No more hiding behind "I just used it to help brainstorm." If you’re using AI, you own the result.

Stakeholder Engagement: Who Gets a Seat at the Table?

Ethics isn’t decided by a committee of tech CEOs or university administrators alone. Real ethical frameworks involve everyone affected. UNESCO’s 2021 recommendation, updated through 2025, calls this "multi-stakeholder and adaptive governance." That means students, librarians, janitors, lab techs - anyone who uses or is impacted by the tech - must be heard. East Tennessee State University’s February 2025 policy created an anonymous ethics reporting system. In their April 2025 internal report, 63% of faculty concerns came from student misuse - not because students were cheating, but because they didn’t know what was allowed. That’s a communication failure. ETSU responded by training every instructor on how to explain AI use in syllabi. Result? Confusion dropped by 40% in six months. Meanwhile, at the University of California, AI literacy workshops became mandatory for all graduate students. By May 2025, 87% of participants said they could now properly cite AI use in research papers. One student told reporters: "I used to think AI was a shortcut. Now I know it’s a tool - and like any tool, it’s only as good as the hand that uses it."

Where Policies Go Wrong

Not all frameworks work. Many are too vague. A November 2025 survey of 500 faculty members found 68% said their institution’s AI policy was "too vague to follow consistently." Another 52% reported students still didn’t understand what counted as acceptable use.

Harvard’s strict rules on confidential data - banning Level 2+ information (like medical records or financial data) from public AI tools - are well-intentioned. But they created a bottleneck. A June 2025 Reddit thread from r/HigherEd had a professor from a major research university say: "I can’t collaborate with industry partners because I’d have to clear every prompt through legal. It’s easier to just not use AI at all." Columbia’s policy, while comprehensive, added 15-20 hours of administrative work per research project. That’s not ethics - it’s bureaucracy. And when people feel burdened, they find workarounds. That’s how bad practices spread.

The real problem? Most policies focus on rules, not understanding. They treat AI like a banned substance instead of a new collaborator. That’s why Dr. Timnit Gebru, founder of the Distributed AI Research Institute, called out universities in her May 2025 Stanford talk: "Most frameworks ignore how generative AI reinforces harmful stereotypes. You can’t just say ‘don’t use it’ - you have to teach people why it’s dangerous."

What Works: Concrete Steps Forward

The most effective institutions aren’t just writing policies - they’re changing culture.

- EDUCAUSE (June 2025) recommends embedding AI ethics into student honor codes and course syllabi - not as an add-on, but as part of core academic values.

- Oxford’s Communications Hub trains writers on how to disclose AI use in journalism, with a 92% satisfaction rate among staff. Their rule: "If you used AI to generate or edit content, say so - clearly and upfront."

- Real Change Media (December 2025) banned AI for story ideas and data analysis entirely - not because they fear AI, but because they believe human judgment must lead journalism.

And then there’s the training. Harvard requires 8.5 hours of security training just to use approved AI tools. ETSU makes faculty complete a 3-hour ethics module before using AI in class. Completion rates? 76%. That’s not perfection - but it’s progress.

The Future: From Policy to Practice

By 2027, Gartner predicts 90% of large enterprises will have AI ethics frameworks. But Dr. Virginia Dignum warns: "Without standardized metrics, many will be performative. They’ll look good on paper but change nothing in practice." The real shift is happening where it matters: in classrooms, labs, and newsrooms. More universities are now integrating AI ethics into required courses - not as a standalone module, but woven into writing, research, and data science curricula. By December 2025, 47% of institutions were piloting this approach. The goal isn’t to eliminate AI. It’s to make sure it serves people - not the other way around. That means:

- Clear, specific rules - not vague guidelines

- Training that’s practical, not theoretical

- Accountability that includes consequences

- Transparency that’s built into workflows, not added as an afterthought

- Listening to the people who use the tech daily - students, researchers, journalists, nurses

What You Can Do Today

You don’t need to be a policymaker to make a difference.

- If you’re a student: Always ask - "Did I use AI? What did I ask it? Did I verify the output?" Then document it.

- If you’re a professor: Don’t just ban AI. Teach how to use it ethically. Include a clear section in your syllabus.

- If you’re a researcher: Log your prompts. Save your outputs. Disclose everything. Your integrity is your most valuable asset.

- If you’re a leader: Stop treating AI ethics as a compliance checkbox. Make it part of your culture. Reward transparency. Punish deception.
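
The advice to log prompts, save outputs, and disclose use can be made routine with a few lines of code. The sketch below is one possible way to do it, not a format that any institution named here prescribes: the `AIUseRecord` fields, the JSON Lines log file, and the wording of the disclosure sentence are all assumptions chosen for illustration.

```python
import hashlib
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
from pathlib import Path


@dataclass
class AIUseRecord:
    """One logged interaction with a generative AI tool (hypothetical schema)."""
    tool: str            # name of the tool that was used
    tool_version: str    # model or release identifier as reported by the tool
    prompt: str          # exactly what was asked
    output: str          # exactly what came back, before any editing
    edited: bool         # whether the output was changed before use
    timestamp: str = ""  # filled in automatically when left empty

    def __post_init__(self) -> None:
        if not self.timestamp:
            self.timestamp = datetime.now(timezone.utc).isoformat()

    def output_digest(self) -> str:
        """SHA-256 of the raw output, so later copies can be checked against the log."""
        return hashlib.sha256(self.output.encode("utf-8")).hexdigest()


def append_record(log_path: Path, record: AIUseRecord) -> None:
    """Append one record as a JSON line; earlier entries are never rewritten."""
    entry = asdict(record)
    entry["output_sha256"] = record.output_digest()
    with log_path.open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(entry, ensure_ascii=False) + "\n")


def load_records(log_path: Path) -> list[dict]:
    """Read every logged entry back as a list of dictionaries."""
    with log_path.open(encoding="utf-8") as fh:
        return [json.loads(line) for line in fh if line.strip()]


def disclosure_statement(records: list[dict]) -> str:
    """Summarise logged use in one sentence for a methods section or footnote."""
    if not records:
        return "No generative AI tools were used."
    tools = sorted({f"{r['tool']} ({r['tool_version']})" for r in records})
    edited = sum(1 for r in records if r["edited"])
    return (
        f"Generative AI was used in {len(records)} logged interaction(s) "
        f"with {', '.join(tools)}; {edited} output(s) were edited before use."
    )
```

In use, you would call `append_record(Path("ai_use_log.jsonl"), AIUseRecord(...))` each time you rely on a tool, then pass `load_records(...)` to `disclosure_statement` when you write up the work. An append-only log answers the three disclosure questions (which tool, what was asked, was the output edited) without relying on memory, and the stored hash lets a reviewer confirm that a quoted output matches what was logged.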

Why is transparency more important than restriction when it comes to generative AI?

Transparency turns AI from a black box into a tool you can trust. Restriction pushes users underground - they’ll still use AI, but without accountability. Transparency requires you to say: "I used AI. Here’s how. Here’s what I changed." That’s how you build integrity. It’s not about banning the tool - it’s about owning your use of it. Institutions like the European Commission and the NIH now require disclosure because they know: hidden use erodes trust. Visible, documented use builds it.

What’s the difference between AI ethics policies at universities versus companies?

Universities focus on academic integrity, reproducibility, and education. Their policies often require detailed logging of prompts and outputs because research must be verifiable. Companies, especially in finance and healthcare, prioritize data privacy and legal compliance. For example, Harvard bans confidential data from public AI tools, while a bank might only allow AI tools approved by its cybersecurity team. Companies also tend to be less transparent publicly - their policies are often internal. Universities, under public pressure, are more likely to publish theirs.

Do AI ethics frameworks actually reduce misuse?

Yes - but only when they’re specific and supported by training. ETSU’s policy, which included mandatory ethics modules and anonymous reporting, cut confusion about acceptable use by 40% in six months. After UC’s AI literacy workshops, 87% of participants said they could properly cite AI use in research papers. But frameworks that are vague, poorly communicated, or lack enforcement - like many early 2024 policies - have little effect. The difference isn’t the policy itself. It’s whether people understand it and feel supported in following it.

Can generative AI ever be truly ethical?

AI itself isn’t ethical or unethical - people are. A tool can be used to help a doctor diagnose a rare disease or to spread misinformation. The ethics come from how humans design, deploy, and use it. Frameworks like UNESCO’s and the EU’s aim to guide those human decisions. They don’t fix the AI - they fix the context around it. True ethical AI means humans stay accountable, informed, and in control.

What should I do if I see someone misusing AI in research or academics?

Start by understanding your institution’s policy. If it has an ethics council or anonymous reporting system - use it. If not, talk to a trusted professor, advisor, or librarian. Don’t assume malice - often, people don’t know what’s wrong. Many students think "using AI to rewrite my essay" is fine if they "edit it." They don’t realize that undisclosed use can still count as plagiarism under their institution’s policy. Education, not punishment, is the first step. But if there’s clear fraud - falsified data, stolen work, false citations - then formal reporting is necessary. Protecting integrity isn’t about policing - it’s about preserving trust.

