Generative AI isn’t just getting smarter; it’s getting specialized. While everyone talks about ChatGPT and DALL·E, the real game-changer in enterprise AI isn’t the biggest model. It’s the one trained on your industry’s data, not the internet’s chaos. In 2026, companies aren’t asking, "Can AI write an email?" They’re asking, "Can AI spot a rare cancer subtype from a pathology slide with 98% accuracy?" That’s the power of domain-specialized generative AI models.

Why General AI Falls Short in Real-World Jobs

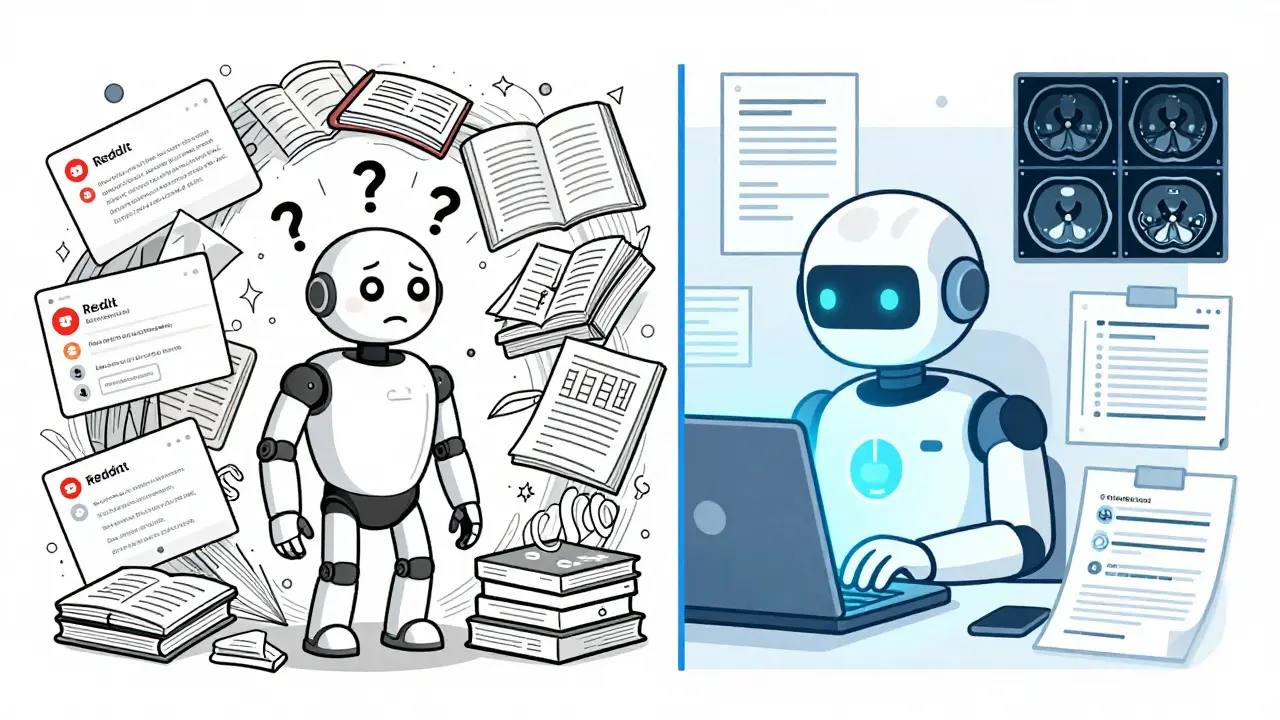

General-purpose AI models like GPT-4 or Claude 3 are trained on everything: Reddit threads, Wikipedia, cookbooks, sci-fi novels, and broken forum posts. That’s great for chatting or writing poems. But when you need to interpret a legal contract, assess loan risk, or analyze a CT scan, general models stumble. They hallucinate. They miss jargon. They get regulatory details wrong.

Take healthcare. A 2024 Stanford study compared a 2.75-billion-parameter medical AI called PubMedGPT against a general model of the same size. The medical model answered clinical questions with 89.7% accuracy. The general one? Just 76.2%. Why? Because PubMedGPT wasn’t trained on random web text. It was trained on 14 million medical papers, 40,000 clinical trials, and 600,000 patient records. It learned how doctors think, not how internet users argue.

In finance, general AI might confuse a "call option" with a "phone call." A finance-specialized model doesn’t. It understands SEC filings, Basel III requirements, and how interest rate swaps behave in recessions. IBM’s finance model cut false positives in loan approvals by 38%. That’s not a small win. It’s millions in avoided losses.

How Domain-Specialized AI Is Built

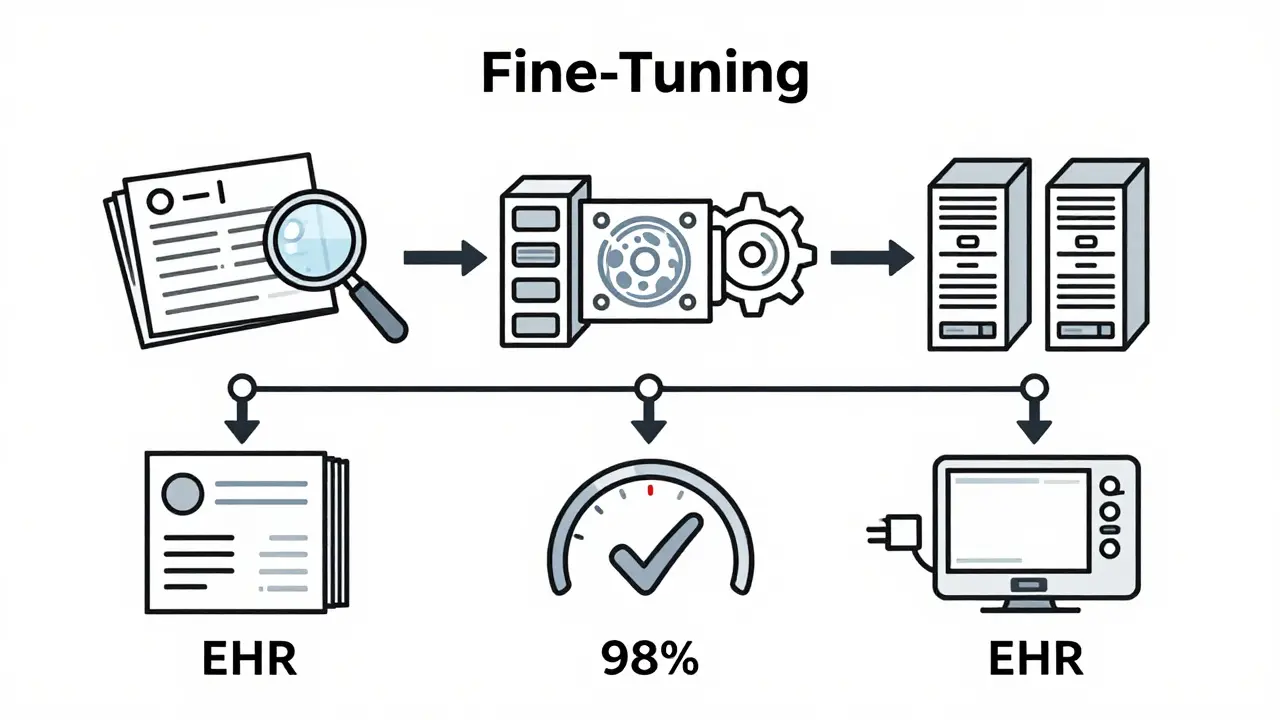

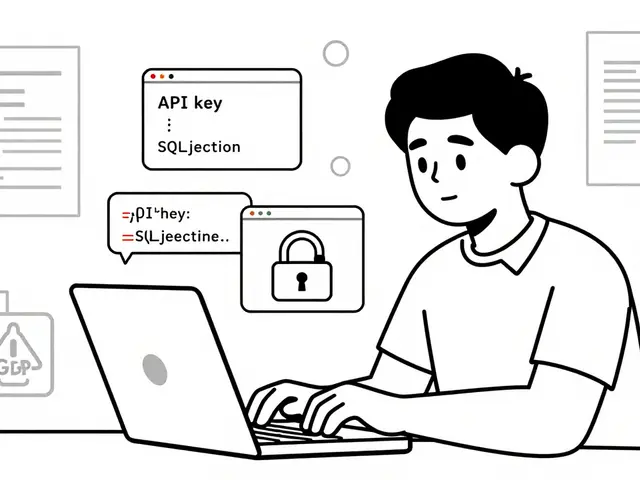

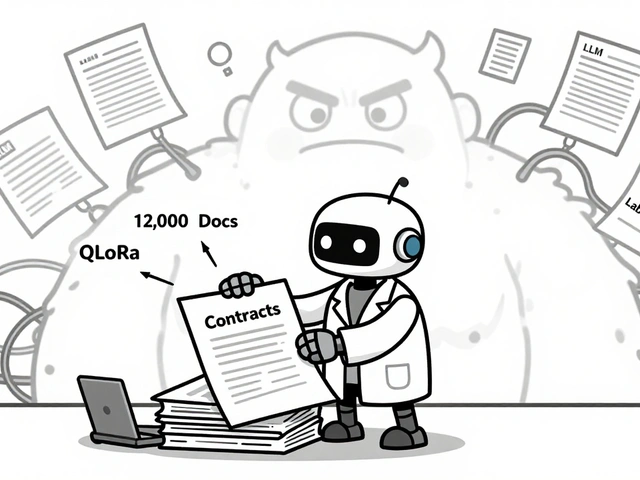

You don’t train these models from scratch. You start with a foundation model, a kind of pre-built engine, and then teach it your industry’s language. This is called fine-tuning. The process has five clear steps:

- Domain data inventory: Gather all your internal documents, such as medical records, contracts, financial reports, and support logs. You need 50,000 to 500,000 high-quality files.

- Data curation and labeling: Clean, annotate, and tag the data. A radiology team might label 250,000 CT scans with tumor types. This step takes 6-12 weeks and costs $50,000-$300,000.

- Model selection and fine-tuning: Pick a base model (like Llama 3 or Mistral) and train it on your dataset. This takes 2-8 weeks.

- Validation: Test against real-world benchmarks. In healthcare, accuracy must hit 90%+ to deploy. In legal, it’s 85%+.

- Integration: Plug the model into your existing systems (EHRs, CRM, ERP). 78% of successful implementations integrate with legacy platforms like Salesforce or SAP.
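The inventory, curation, and validation steps above can be sketched in plain Python. This is a minimal illustration, not a production pipeline. The 50,000 to 500,000 document range and the 90% (healthcare) and 85% (legal) deployment bars come from the text. The function names, the prompt/completion JSON Lines layout, and exact-match scoring are hypothetical choices made for the example. Step 3 (the fine-tuning run itself) and step 5 (integration) depend on your tooling and are left out.

```python
import json
from dataclasses import dataclass

# Figures quoted in the article; the helpers below are hypothetical.
MIN_DOCS, MAX_DOCS = 50_000, 500_000                     # step 1: suggested corpus size
DEPLOY_THRESHOLDS = {"healthcare": 0.90, "legal": 0.85}  # step 4: deployment bars


@dataclass
class ValidationReport:
    domain: str
    accuracy: float
    threshold: float
    deployable: bool


def check_inventory(num_docs: int) -> bool:
    """Step 1: is the corpus inside the article's suggested size range?"""
    return MIN_DOCS <= num_docs <= MAX_DOCS


def to_training_record(text: str, label: str) -> str:
    """Step 2: serialize one expert-labeled example as a JSON Lines record.

    The prompt/completion field names are an assumed convention; match
    whatever format your fine-tuning tool actually expects.
    """
    return json.dumps({"prompt": text, "completion": label})


def validate(domain: str, predictions: list[str], labels: list[str]) -> ValidationReport:
    """Step 4: score model outputs against expert labels and apply the domain's bar."""
    if not labels or len(predictions) != len(labels):
        raise ValueError("predictions and labels must be non-empty and equal in length")
    if domain not in DEPLOY_THRESHOLDS:
        raise KeyError(f"no deployment threshold defined for {domain!r}")
    # Exact-match accuracy is a simplification; clinical or legal
    # evaluation usually needs task-specific metrics.
    correct = sum(p == y for p, y in zip(predictions, labels))
    accuracy = correct / len(labels)
    threshold = DEPLOY_THRESHOLDS[domain]
    return ValidationReport(domain, accuracy, threshold, accuracy >= threshold)


if __name__ == "__main__":
    print(check_inventory(120_000))
    # Hypothetical labeled example from a radiology team.
    print(to_training_record("CT report: 2 cm lesion, left lobe", "tumor_type_A"))
    report = validate(
        "legal",
        ["risky", "safe", "safe", "risky"],
        ["risky", "safe", "risky", "risky"],
    )
    print(report)
```

In the `legal` example, three of the four clause labels match. Accuracy is therefore 0.75, and the report comes back with `deployable=False` against the 85% bar. Keeping the thresholds in a single table makes it easy to add another domain once its own bar has been agreed.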

It’s not easy. One healthcare startup spent 6 months and $320,000 just curating data. But the payoff? A 32% jump in diagnostic accuracy and a 45% drop in radiologist workload.

Where Domain-Specialized AI Shines

These models don’t do everything. But where they’re used, they’re unbeatable.

- Healthcare: PathAI’s 2026 oncology model identifies cancer subtypes from pathology slides with 98.3% accuracy. General models? Around 85%. That 13-point gap saves lives.

- Finance: A European bank used a finance-specialized model to cut fraud detection false positives by 38%. That means fewer innocent customers flagged and less manual review.

- Legal: Kira Systems’ contract analyzer reads complex agreements and flags risky clauses with 85% accuracy. General AI misses 40% of those clauses.

- Recruitment: Carv.com’s hiring AI understands niche job titles like "Quantitative Risk Analyst (Fixed Income, Basel III)"-something general models can’t parse. They reduced misqualified candidates by 47%.

These aren’t lab experiments. They’re live in hospitals, banks, and law firms right now. And the numbers don’t lie: domain-specialized AI achieves 89% accuracy on specialized tasks. General AI? 62%.
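Claims like "89% vs. 62%" or "38% fewer false positives" only mean something when both models are scored on the same labeled test set. Below is a minimal sketch of that comparison. It assumes binary labels (True means flagged, e.g. as fraud) and treats each model as a plain list of predictions. The function names and the toy data are hypothetical, not figures from the article.

```python
from typing import Sequence


def accuracy(preds: Sequence[bool], labels: Sequence[bool]) -> float:
    """Share of predictions that match the ground-truth labels."""
    if not labels or len(preds) != len(labels):
        raise ValueError("need equal-length, non-empty inputs")
    return sum(p == y for p, y in zip(preds, labels)) / len(labels)


def false_positive_rate(preds: Sequence[bool], labels: Sequence[bool]) -> float:
    """Share of truly negative cases (e.g. legitimate customers) that were flagged."""
    negatives = [p for p, y in zip(preds, labels) if not y]
    if not negatives:
        raise ValueError("no negative cases in the test set")
    return sum(negatives) / len(negatives)


def relative_reduction(before: float, after: float) -> float:
    """How much smaller `after` is than `before`, as a fraction of `before`."""
    return (before - after) / before


# Hypothetical test set: 2 fraud cases followed by 8 legitimate ones.
labels = [True, True] + [False] * 8
general = [True, False, True, True, True, False, False, False, False, False]
special = [True, True, True, False, False, False, False, False, False, False]

print(f"accuracy: general {accuracy(general, labels):.0%}, "
      f"specialized {accuracy(special, labels):.0%}")
fpr_general = false_positive_rate(general, labels)
fpr_special = false_positive_rate(special, labels)
print(f"false positives cut by {relative_reduction(fpr_general, fpr_special):.0%}")
```

On this toy set, the specialized model reaches 90% accuracy against the general model's 60%. Its false-positive rate drops from 3/8 to 1/8 of legitimate cases, a 67% relative reduction. A relative reduction like this is not the same kind of number as a percentage-point gap such as the 13-point PathAI comparison.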

The Hidden Costs and Risks

This isn’t magic. There’s a price.

- High upfront cost: Building a domain model typically costs $120,000-$750,000. Data curation alone can cost up to $500,000.

- Dependency on experts: You need doctors, lawyers, or compliance officers to label data and validate outputs. Without them, the model learns wrong.

- Integration headaches: 47% of failed projects stumbled trying to connect to old systems. EHRs and legacy ERP tools weren’t built for AI.

- Over-specialization: A finance model can’t write a press release. A legal model won’t help with inventory forecasting. Gary Marcus at NYU warns this creates brittle systems. If your industry shifts (say, new regulations hit), your model may need retraining, adding 25-40% to long-term costs.

That’s why most smart companies use a hybrid approach. RTS Labs found 85% of successful AI deployments combine a domain-specialized model for core tasks (like diagnosis or compliance) with a general model for creative or cross-domain work (like drafting emails or brainstorming).
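The hybrid pattern comes down to a routing decision: core, high-stakes tasks go to the domain model and everything else goes to the general one. Below is a minimal sketch. Both models are stand-in functions, and the task names in `CORE_TASKS` are hypothetical. In practice, each model would wrap whatever API your vendor or in-house service exposes. The router also logs which model handled each request, which may help with the audit trails regulated industries ask for.

```python
from dataclasses import dataclass, field
from typing import Callable

Model = Callable[[str], str]  # any function that takes a prompt and returns text

# Hypothetical task labels; a real deployment would define its own.
CORE_TASKS = {"diagnosis", "compliance_check", "fraud_screening"}


@dataclass
class HybridRouter:
    domain_model: Model
    general_model: Model
    core_tasks: set[str] = field(default_factory=lambda: set(CORE_TASKS))
    log: list[tuple[str, str]] = field(default_factory=list)

    def handle(self, task: str, prompt: str) -> str:
        """Route core tasks to the specialist and everything else to the generalist."""
        if task in self.core_tasks:
            name, model = "domain", self.domain_model
        else:
            name, model = "general", self.general_model
        self.log.append((task, name))  # record which model answered, for later review
        return model(prompt)


# Stand-in models for illustration only.
router = HybridRouter(
    domain_model=lambda p: f"[domain] {p}",
    general_model=lambda p: f"[general] {p}",
)
print(router.handle("diagnosis", "Classify slide 17"))
print(router.handle("draft_email", "Summarize the findings for the family"))
print(router.log)
```

Routing on an explicit task label keeps the decision predictable and easy to audit. A learned classifier could route free-form requests instead, but it would add its own failure modes.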

What the Data Says About Adoption

By Q4 2024, 68% of healthcare orgs, 61% of financial institutions, and 54% of legal firms had deployed domain-specialized AI, according to McKinsey. The market hit $18.7 billion in 2024, with healthcare leading at $6.2 billion.

Why now? Because the ROI is undeniable. Dr. Fei-Fei Li at Stanford calls domain specialization "the most significant productivity multiplier in enterprise AI." Companies using it see 2.3x faster time-to-value and 37% higher employee adoption than those using generic tools.

But adoption isn’t uniform. It’s focused. 83% of implementations target one or two high-value use cases-not whole departments. A hospital doesn’t replace all its AI with a medical model. They use it for radiology. A bank doesn’t automate everything. They use it for fraud detection.

The Future: Specialization, Not Singularity

Forrester predicts that by 2027, 75% of enterprise AI will be domain-specialized, up from 42% in 2025. Why? Because businesses care about results, not parameters.

IBM just released Watsonx.governance, trained on 12 million legal documents across 30 countries. It spots regulatory changes with 94.7% accuracy. PathAI’s 2026 model improved cancer detection by 5.2 percentage points over last year’s version. These aren’t incremental updates. They’re breakthroughs.

Still, there’s a warning. MIT’s AI Ethics Lab cautions that if every industry builds its own model, we could end up with 10,000+ siloed AIs by 2030. That means no interoperability. No shared learning. Just a mess of narrow, expensive tools.

The smart path forward? Build specialized models for core tasks, but keep a general model around for flexibility. Use APIs to let them talk. And invest in frameworks that let knowledge transfer between domains, the way a legal AI might learn from a compliance AI in finance.

Generative AI’s future isn’t about bigger models. It’s about smarter, more focused ones. The best AI in 2026 doesn’t know everything. It knows your business. And that’s worth more than any general-purpose chatbot.

What’s the difference between a general AI and a domain-specialized AI?

General AI is trained on broad internet data and can handle many tasks, but it often gets details wrong in specialized fields. Domain-specialized AI is fine-tuned on industry-specific data, like medical records or legal contracts, so it understands the jargon, regulations, and workflows unique to that field. For example, a general AI might misread a medical term; a domain-specialized model won’t.

How accurate are domain-specialized AI models compared to general ones?

Domain-specialized models typically achieve 89% accuracy in their specific tasks, while general models average just 62%. In regulated fields, the gap is even wider: healthcare (92% vs. 58%), finance (87% vs. 61%), and legal (85% vs. 54%). PathAI’s oncology model, for instance, hits 98.3% accuracy in cancer detection-far above the 85% of general models.
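Percentage-point gaps and error rates tell different stories. The short calculation below uses only the figures in the answer above. For each field, it shows the point gap between specialized and general accuracy. It also shows how many fewer errors the specialized model makes relative to the general one. The dictionary layout and the `compare` helper are hypothetical conveniences for the example.

```python
# (specialized %, general %) accuracy figures quoted above.
ACCURACY_PCT = {
    "healthcare": (92, 58),
    "finance": (87, 61),
    "legal": (85, 54),
}


def compare(specialized_pct: int, general_pct: int) -> tuple[int, float]:
    """Return (percentage-point gap, relative reduction in error rate)."""
    gap = specialized_pct - general_pct
    error_general = 100 - general_pct
    error_specialized = 100 - specialized_pct
    fewer_errors = (error_general - error_specialized) / error_general
    return gap, fewer_errors


for domain, (spec, gen) in ACCURACY_PCT.items():
    gap, fewer = compare(spec, gen)
    print(f"{domain}: {gap}-point gap, {fewer:.0%} fewer errors")
```

For healthcare, this gives a 34-point gap. That works out to roughly 81% fewer errors: an 8% error rate instead of 42%.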

Is domain-specialized AI more expensive than general AI?

Yes, initially. Building one costs $120,000 to $750,000, mostly due to data curation ($50,000-$500,000) and domain expert time. But the ROI often justifies it. Companies see 200-400% higher productivity gains and 37% higher employee adoption. The cost of errors-like misdiagnoses or fraudulent loans-can be far higher.
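The long-term cost effect of retraining can be worked out from figures the article already gives. These are the $120,000-$750,000 build cost and the 25-40% retraining surcharge mentioned under over-specialization. The sketch below assumes that the surcharge is applied on top of the build cost. That is an interpretation, not a formula stated in the article. Amounts stay in whole dollars and percentages as integers, so the arithmetic is exact.

```python
# Article figures: build cost range and retraining surcharge range.
BUILD_COST_USD = (120_000, 750_000)
RETRAIN_SURCHARGE_PCT = (25, 40)


def with_surcharge(cost_usd: int, surcharge_pct: int) -> int:
    """Cost after adding a percentage surcharge (whole dollars, rounded down)."""
    return cost_usd * (100 + surcharge_pct) // 100


def long_term_range(
    build: tuple[int, int] = BUILD_COST_USD,
    surcharge: tuple[int, int] = RETRAIN_SURCHARGE_PCT,
) -> tuple[int, int]:
    """Best case: cheapest build, smallest surcharge. Worst case: the reverse.

    Assumes (hypothetically) that the surcharge applies to the build cost.
    """
    low = with_surcharge(build[0], surcharge[0])
    high = with_surcharge(build[1], surcharge[1])
    return low, high


low, high = long_term_range()
print(f"${low:,} to ${high:,}")
```

Under that assumption, the range widens to $150,000 at the low end and $1,050,000 at the high end. This is the budget envelope to weigh against the cost of errors.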

Can domain-specialized AI be used outside its original field?

Not well. A model trained on legal contracts won’t help with medical imaging. It’s designed for narrow tasks-usually 3 to 5 specific functions. That’s why most enterprises use a hybrid approach: a specialized model for core tasks and a general model for broader, creative, or cross-domain work.

What industries benefit most from domain-specialized AI?

Regulated, data-rich industries lead: healthcare, finance, and legal services. Healthcare uses it for diagnostics (PathAI), finance for fraud detection (IBM Watson), and legal for contract analysis (Kira Systems). These fields have high stakes, strict rules, and massive volumes of structured data-perfect for specialized models.

What are the biggest challenges in implementing domain-specialized AI?

The top three: lack of quality domain data (68% of failures), shortage of domain experts to guide training (52%), and difficulty integrating with legacy systems (47%). Many companies underestimate how long it takes to clean and label data: sometimes 6+ months. Without accurate, labeled data, even the best model fails.

Artificial Intelligence


OONAGH Ffrench

March 9, 2026 AT 14:39

There's something deeply human about specialization. We don't expect a general practitioner to perform neurosurgery, so why should we expect a general AI to parse a Basel III clause?

Domain models aren't just more accurate; they're more *respectful*. They acknowledge that expertise isn't generic; it's earned.

It's not about intelligence. It's about context.

And context? That's where the real value lives.

poonam upadhyay

March 10, 2026 AT 18:16

Ugh, I HATE when people treat domain-specific AI like it’s some kind of divine revelation. Like, sure, it’s better at detecting cancer, BUT did you account for the fact that 70% of these models are trained on biased, outdated, or outright toxic datasets?!!

And don’t even get me started on the $500K data-curation bill-what about the junior radiologists who spent 18 months labeling scans only to be replaced by a model trained on THEIR work?!

It’s not innovation-it’s extraction with a fancy API.

Also, why is everyone ignoring the fact that these models are LOCKED in proprietary silos? No transparency, no audit trails, just ‘trust us, it’s 98.3% accurate’-with NO explainability?!

And don’t even mention ‘hybrid models’-that’s just corporate speak for ‘we’re using two broken systems so we can blame both’.

Also, the ‘ROI’ argument? LOL. ROI for whom? The hospital execs? Or the patient who got misdiagnosed because the model hallucinated a tumor in a benign cyst?!!

Also, MIT’s warning about 10,000 siloed AIs? That’s not a warning-it’s a prophecy. And we’re all just dancing to it.

Also, I’m not mad. I’m just disappointed.

Shivam Mogha

March 11, 2026 AT 21:37

Accuracy matters. Cost matters. Integration matters. All three.

mani kandan

March 13, 2026 AT 03:54

I’ve watched this space for years, and honestly, the most underrated insight here is the hybrid approach.

It’s not either/or. It’s both/and.

Imagine a surgeon using a domain-specialized AI to spot a rare tumor subtype, then handing off the patient summary to a general model to draft a compassionate note to the family.

One does the precision. The other does the humanity.

That’s not just efficient-it’s elegant.

And yes, the upfront cost is brutal. But I’ve seen hospitals cut readmission rates by 22% using this exact model. That’s not just ROI-that’s redemption.

Also, the data curation phase? Painful. Necessary. Non-negotiable.

It’s not AI that’s the revolution. It’s the quiet, tedious work of humans curating knowledge so machines can finally understand it.

Rahul Borole

March 14, 2026 AT 11:01

Domain-specialized AI represents a paradigm shift in enterprise intelligence, grounded not in scale, but in fidelity to operational reality.

The empirical evidence is unequivocal: in regulated domains, accuracy differentials of 25-30 percentage points are not marginal-they are existential.

Furthermore, the integration of fine-tuned models with legacy systems, while technically challenging, enables organizational continuity without sacrificing innovation.

It is imperative to recognize that the cost of failure in healthcare, finance, or legal contexts far exceeds the capital expenditure of deployment.

Therefore, the strategic imperative is not whether to adopt, but how to institutionalize governance, human oversight, and knowledge transfer mechanisms to ensure sustainable, ethical, and scalable deployment.

Organizations that treat this as a technical problem, rather than a systemic transformation, will inevitably falter.

Leadership must prioritize cross-functional alignment, expert engagement, and long-term model maintenance-not just initial accuracy metrics.

The future belongs not to the largest models, but to the most responsibly curated ones.

Let us not confuse specialization with limitation.

Specialization is the disciplined application of intelligence to purpose.

And purpose, in enterprise, is everything.