The dream of having a powerful AI assistant in your pocket usually comes with a catch: your phone isn't doing the thinking. Most of the time, your device is just a fancy window into a massive data center miles away. But the tide is shifting. We're seeing a move toward edge inference: the process of running machine learning models directly on local hardware, such as smartphones or IoT devices, rather than relying on a remote cloud server.

For years, we thought you needed a trillion parameters to get a useful answer. Now, we're discovering that for many tasks, a "small" model is not just enough; it's actually better. But when does it actually make sense to ditch the cloud and go local? It comes down to a trade-off between raw brainpower and the practical realities of latency, cost, and privacy.

The Rise of the Small Language Model

When we talk about Small Language Models (or SLMs), we aren't talking about a slight trim. While a giant like GPT-4 is widely reported to have over a trillion parameters, an SLM typically lives in the range of 100 million to 5 billion parameters. These are decoder-only transformer architectures built for speed and efficiency.

You might wonder why we'd settle for less. The answer is that SLMs are specialized. While a massive model knows everything from 14th-century poetry to quantum physics, an SLM can be tuned to be an expert in one specific thing. For example, the Qwen-2-math 1.5B model is a powerhouse for mathematical reasoning. Even though it's tiny, it hits accuracy levels comparable to general-purpose models that are nearly five times its size. By focusing the "brain" on a specific domain, you get high-quality results without needing a server farm to run a single query.

Why Move to the Edge?

Cloud AI is convenient, but it has three big enemies: lag, cost, and prying eyes. When you send a request to the cloud, your data travels to a server, gets processed, and travels back. That round trip creates latency. If you're building a real-time voice assistant or a smart home controller, a two-second delay feels like an eternity. Local inference skips the network round trip entirely because the data never leaves the device, so a response can start within milliseconds.

Then there's the money. Running huge models in the cloud is expensive. Every token generated costs a fraction of a cent, which adds up when you have millions of users. Shifting the compute to the user's own hardware effectively offloads the electricity and hardware costs from the company to the device already in the customer's hand.

Finally, there is the privacy factor. Processing sensitive health data or private messages locally means that information never touches a third-party server. For many users, the peace of mind knowing their data is physically locked on their device is a winning feature.

Making it Fit: The Art of Model Compression

You can't just cram a massive model onto a smartwatch; it would crash the system instantly. This is where Model Compression comes in. It's the process of shrinking a model's footprint without destroying its intelligence. There are a few key ways this happens:

- Quantization: This is like rounding numbers to save space. Instead of using high-precision 32-bit floats, we use 8-bit or even 4-bit integers. It's a massive space saver with surprisingly little impact on accuracy.

- Pruning: Not every single connection in a neural network is useful. Pruning identifies and removes redundant weights, essentially "trimming the fat" from the model.

- Knowledge Distillation: Think of this as a teacher-student relationship. A massive "teacher" model trains a smaller "student" model to mimic its behavior, transferring the essential logic into a much smaller package.
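To make the quantization idea from the list above a little more concrete, here is a minimal sketch of symmetric 8-bit quantization in plain Python. The function names and the sample weights are invented for illustration; production toolchains typically add per-channel scales, calibration data, and packed storage, none of which is shown here.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate floats from the stored integers."""
    return [v * scale for v in q]


weights = [0.82, -1.27, 0.003, 0.45, -0.61]  # hypothetical sample weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(q)
print(f"scale={scale:.4f}, worst-case error={max_error:.4f}")
```

Each stored value now fits in one byte instead of the four bytes a 32-bit float needs, and the rounding error per weight is bounded by half the scale. Very small weights (like `0.003` here) collapse to zero, which is one reason accuracy can degrade at lower bit widths.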

| Model | Parameters | Primary Strength | Approx. Memory Needs |
|---|---|---|---|
| Phi-3.5-mini | 3.8B | General Reasoning | Low-Medium |
| Qwen-2-math | 1.5B | Mathematics | Very Low |
| SmolLM | Varies | High-Quality Data Efficiency | Very Low |
| LLaMA 3.1 | 8B | Broad Versatility | Medium-High |
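The qualitative memory column can be turned into rough numbers with back-of-the-envelope arithmetic: weight memory is approximately the parameter count times the bits per weight, divided by eight. The sketch below applies that to two rows of the table. It counts weights only and ignores the KV cache, activations, and runtime overhead, so treat the results as lower bounds rather than measurements.

```python
def weight_memory_gb(params_billions, bits_per_weight):
    """Approximate weight storage in gigabytes (1 GB = 10**9 bytes)."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


models = {"Qwen-2-math": 1.5, "LLaMA 3.1": 8.0}  # sizes taken from the table

for name, size_b in models.items():
    for bits in (16, 8, 4):
        gb = weight_memory_gb(size_b, bits)
        print(f"{name:12s} {bits:2d}-bit: {gb:5.2f} GB")
```

Under these assumptions, a 1.5B model quantized to 4 bits needs roughly 0.75 GB for its weights, while an 8B model at the same precision needs about 4 GB, which is consistent with the two sitting at opposite ends of the memory column.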

The Hardware Reality Check

Deploying on the edge isn't all sunshine and rainbows. You're fighting for every megabyte of RAM. On a high-end smartphone, you might have a few gigabytes to spare, but on a wearable or an IoT sensor, you're working with tiny margins. One of the biggest bottlenecks is the prefill stage. This is when the model processes the initial input context. Because we often want personalized AI, we feed the model a lot of local context, which can spike memory usage and slow down the initial response.

The "decode stage" (where the model actually spits out words) tends to scale linearly with the model size. This means if you double the parameters, you generally double the time it takes to generate a word. This is why picking the right size (0.1B to 3B parameters) is critical. If you go too big, the device overheats and the battery drains in an hour. If you go too small, the AI starts hallucinating or forgetting the start of the sentence.
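One common way to reason about this linear scaling is to assume decoding is limited by memory bandwidth: generating each token requires reading every weight once, so time per token is roughly model size in bytes divided by bandwidth. The sketch below uses that simplification. The 50 GB/s bandwidth figure is an assumed, illustrative value and not a measurement of any particular device.

```python
def seconds_per_token(params_billions, bits_per_weight, bandwidth_gb_s):
    """Rough decode time per token if weight reads dominate (an assumption)."""
    model_gb = params_billions * bits_per_weight / 8
    return model_gb / bandwidth_gb_s


ASSUMED_BANDWIDTH_GB_S = 50.0  # hypothetical device memory bandwidth

for size_b in (0.5, 1.5, 3.0):
    t = seconds_per_token(size_b, 4, ASSUMED_BANDWIDTH_GB_S)
    print(f"{size_b}B at 4-bit: ~{t * 1000:.1f} ms/token, ~{1 / t:.0f} tokens/s")
```

Real devices will deviate from this estimate because of compute limits, thermal throttling, and cache effects, but the model captures why doubling the parameter count roughly doubles the time per generated token.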

Edge vs. Cloud: When to Choose Which?

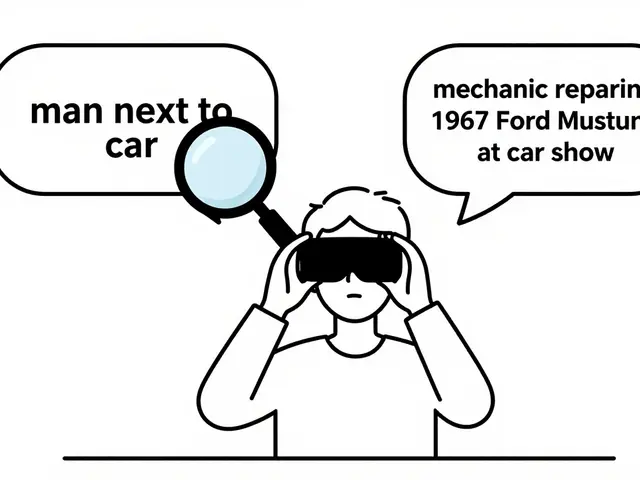

The real question isn't "which is better?" but "which one does this specific job?" You don't need a supercomputer to tell you if a light is on in your living room, but you probably do need one to write a complex legal contract from scratch.

Edge inference is the clear winner for:

- Domain-specific tasks: Simple classification, math, or a dedicated customer service bot for one product.

- Privacy-first apps: Health trackers, private journals, or secure corporate tools.

- Offline functionality: Apps that need to work in airplanes, basements, or remote areas.

- High-frequency interactions: Small, quick adjustments and rapid-fire responses.

Cloud fallback remains essential when you hit a "reasoning wall." If a user asks a complex, multi-step problem that requires deep general knowledge or state-of-the-art logic, an SLM will likely fail. The smartest systems use an adaptive inference strategy: they try to solve the problem locally first, and if the confidence score is too low, they seamlessly hand the request off to a cloud-based LLM.
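The adaptive strategy described above can be sketched in a few lines. Everything here is hypothetical: the `Answer` type, the `run_local` and `run_cloud` callables, the 0.7 threshold, and the stand-in models are placeholders for whatever runtime and confidence signal a real system would use.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Answer:
    text: str
    confidence: float  # 0.0 to 1.0, however the local model estimates it
    source: str


def adaptive_answer(
    prompt: str,
    run_local: Callable[[str], Answer],
    run_cloud: Callable[[str], Answer],
    threshold: float = 0.7,
) -> Answer:
    """Try the on-device model first; escalate only when it is unsure."""
    local = run_local(prompt)
    if local.confidence >= threshold:
        return local
    try:
        return run_cloud(prompt)
    except ConnectionError:
        # Offline: a low-confidence local answer beats no answer at all.
        return local


# Stand-in models for demonstration only.
def fake_local(prompt: str) -> Answer:
    confident = len(prompt.split()) <= 6  # pretend short prompts are easy
    return Answer("local reply", 0.9 if confident else 0.3, "edge")


def fake_cloud(prompt: str) -> Answer:
    return Answer("cloud reply", 0.95, "cloud")


print(adaptive_answer("Is the living room light on?", fake_local, fake_cloud).source)
print(adaptive_answer(
    "Draft a multi-party licensing contract covering three jurisdictions",
    fake_local, fake_cloud).source)
```

Catching the connection failure and returning the local answer keeps the offline use case working. How the confidence score is actually produced is the hard part in practice, and this sketch deliberately leaves it open.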

Practical Steps for Implementation

If you're looking to deploy a model on-device, don't just grab the smallest model you find. Start by defining your constraints. How much RAM is available? What is the acceptable latency for a first response? Once you have those numbers, follow this workflow:

- Select a Base Model: Choose an SLM like Phi-3.5 or Qwen that aligns with your primary task.

- Apply Compression: Use 4-bit quantization to bring the memory footprint down.

- Benchmark Locally: Don't trust simulator numbers. Run the model on the actual hardware you'll be targeting.

- Optimize Context: Limit the length of the input prompt to keep the prefill stage fast.

- Set Up Fallbacks: Create a trigger that sends the request to the cloud if the local model is struggling.
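For the benchmarking step in the workflow above, a small timing harness is often enough to get started. The sketch below measures time to first token (which reflects prefill) and decode throughput for any streaming generator. `fake_generate` is a stand-in with simulated delays; on real hardware you would swap in your runtime's own streaming call, whose name and signature will differ.

```python
import time
from typing import Callable, Iterator


def benchmark(generate: Callable[[str], Iterator[str]], prompt: str) -> dict:
    """Measure time to first token and decode throughput for one request."""
    start = time.perf_counter()
    first_token_at = None
    count = 0
    for _ in generate(prompt):
        if first_token_at is None:
            first_token_at = time.perf_counter()
        count += 1
    end = time.perf_counter()
    if first_token_at is None:
        raise ValueError("generator produced no tokens")
    decode_time = end - first_token_at
    # Throughput over the tokens that followed the first one.
    rate = (count - 1) / decode_time if decode_time > 0 else float("nan")
    return {
        "tokens": count,
        "time_to_first_token_s": first_token_at - start,
        "decode_tokens_per_s": rate,
    }


def fake_generate(prompt: str) -> Iterator[str]:
    """Stand-in for a real on-device runtime's streaming call."""
    time.sleep(0.05)  # simulated prefill
    for word in ["edge", "inference", "is", "local"]:
        time.sleep(0.01)  # simulated per-token decode
        yield word


result = benchmark(fake_generate, "hello")
print(result)
```

Running the same harness with short and long prompts shows how much the prefill stage contributes to the first-response delay, which is useful input for the context-length limit in the workflow.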

Do small language models actually work as well as big ones?

It depends on the task. For general-purpose knowledge, no. But for specialized tasks, like math or specific coding languages, SLMs can be incredibly accurate. For instance, specialized 1.5B parameter models have matched the performance of 7B general models in specific benchmarks.

Will on-device AI drain my battery?

Yes, inference is computationally expensive. However, by using quantization and pruning, the energy cost is significantly lower than running a full-sized model. The goal is to find a balance where the model is "just enough" for the task to avoid unnecessary power draw.

What is the difference between an SLM and an LLM?

The primary difference is scale. LLMs usually have hundreds of billions or trillions of parameters and require massive cloud clusters. SLMs typically have between 100 million and 5 billion parameters and can run on a high-end phone or laptop.

Is my data really safer with edge inference?

Generally, yes. Because the data is processed on your local hardware, it doesn't need to be sent over the internet to a corporate server, which removes the exposure to interception in transit and to misuse by a cloud provider. The device itself still has to be secure, of course.

What is 'quantization' in simple terms?

Imagine you're measuring something with a ruler that has marks every millimeter, but you realize you only need to know the measurement to the nearest centimeter. Quantization is the process of reducing the precision of the model's numbers to save space and speed up calculations.

Artificial Intelligence


Ben De Keersmaecker

April 5, 2026 AT 11:52

The part about adaptive inference is actually the most interesting bit. It makes a lot of sense to have a local layer for basic tasks and only ping the cloud when things get complex. I wonder how the impact on battery life scales when the device is constantly deciding which model to use.

Adrienne Temple

April 6, 2026 AT 00:32

This is such a great explanation! 😊 I love how it breaks down the technical stuff into things we can actually understand. It's really cool that we can have more privacy just by changing where the brain lives! 🌟

Chris Heffron

April 7, 2026 AT 20:50

I think the point about quantization is spot on. It's basically just a clever way to cheat the hardware limits. 😅

Aaron Elliott

April 9, 2026 AT 15:15

One must ponder whether the pursuit of edge inference is merely a convenient mask for corporate cost-cutting. By offloading the computational burden to the consumer's hardware, these entities essentially monetize the user's own electricity and silicon. It is a fascinating paradigm shift in the economic distribution of AI infrastructure, though one might argue it diminishes the perceived value of the service when the 'magic' is simply running on a chip in one's pocket. The philosophical implication of decentralized intelligence is far more profound than this text suggests, yet it remains tethered to the mundane reality of RAM limitations.

Sandy Dog

April 10, 2026 AT 12:41

Oh my god, can you even imagine the absolute disaster if your phone just completely dies in the middle of a local inference task because the battery couldn't handle the load? 😱 Like, imagine being in the middle of a crisis and your AI assistant just gives up on life because it's too "computationally expensive" to think for two more seconds!! I am literally shaking just thinking about the potential for these things to overheat in your pocket and just melt through your jeans, which would be such a tragedy and a total fashion nightmare!! 💅✨

Nick Rios

April 12, 2026 AT 05:47

I can see both sides here. It's definitely a win for privacy, but Aaron has a point about the cost shift. Still, for people in remote areas or those with sensitive data, the trade-off is completely worth it.

Amanda Harkins

April 12, 2026 AT 20:15

It's just a cycle of shrinking things until they fit. We always want the power of the cloud but the convenience of a toy. It's kind of a weird human contradiction.

Jeanie Watson

April 14, 2026 AT 17:14

Meh, most people won't even notice the difference between 4-bit and 8-bit anyway.