Tag: model compression

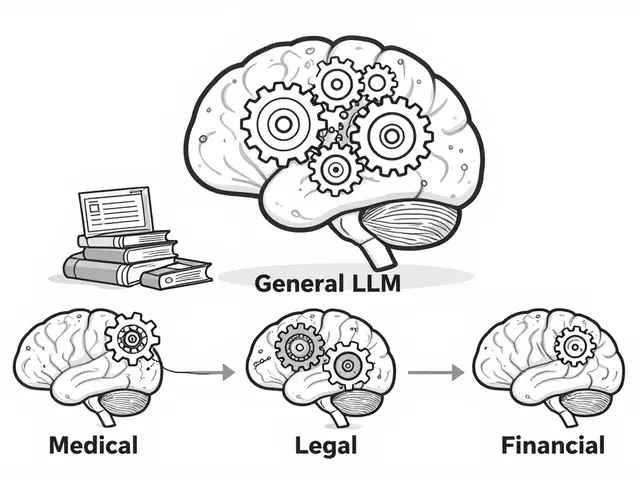

Learn how compression and quantization enable Large Language Models to run on edge devices, improving privacy, reducing latency, and saving memory.

Explore when to use Edge Inference and Small Language Models (SLMs) over the cloud. Learn about model compression, latency, and on-device AI trade-offs.

Learn how calibration and outlier handling keep quantized LLMs accurate when compressed to 4-bit. Discover which techniques work best for speed, memory, and reliability in real-world deployments.

Recent posts

Key Components of Large Language Models: Embeddings, Attention, and Feedforward Networks Explained

Sep 1, 2025

Artificial Intelligence