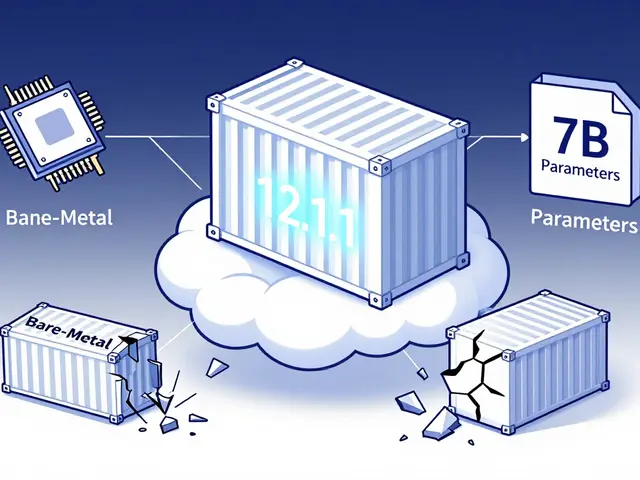

Tag: edge inference

Explore when to use Edge Inference and Small Language Models (SLMs) over the cloud. Learn about model compression, latency, and on-device AI trade-offs.

Recent posts

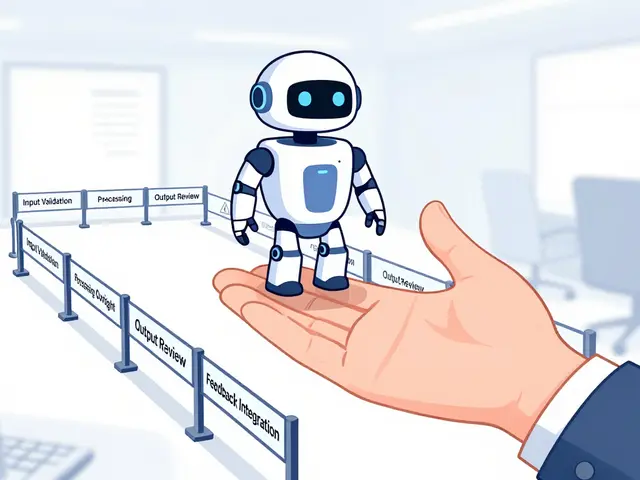

Human Oversight in Generative AI: Review Workflows and Escalation Policies That Actually Work

Mar 24, 2026

Artificial Intelligence
