Generative AI can write emails, draft legal briefs, generate marketing copy, and even code software. But if you let it run wild without human input, you're asking for trouble. Companies that skip proper oversight end up with biased content, compliance violations, or public relations disasters. The real question isn't whether to use AI-it's how to keep it under control. That’s where review workflows and escalation policies come in. They’re not optional extras. They’re the safety rails that keep your AI from going off the tracks.

Why Human Oversight Isn’t Optional

Think of generative AI like a highly skilled intern. It’s fast, it’s smart, and it can produce great work. But it doesn’t understand context the way a human does. It doesn’t know when a tone is offensive, when a fact is outdated, or when a decision could cost a customer trust. Without someone watching, AI will happily generate convincing lies, repeat harmful stereotypes, or miss critical legal nuances. A 2025 BCG study found that organizations with no structured human oversight process were 3.7 times more likely to experience a high-impact AI failure-like a public apology, regulatory fine, or loss of customer data. The problem isn’t the AI. It’s the belief that automation means zero human involvement. That’s a myth. Responsible AI isn’t about removing humans. It’s about using them where it matters most.The Four Stages of a Solid Review Workflow

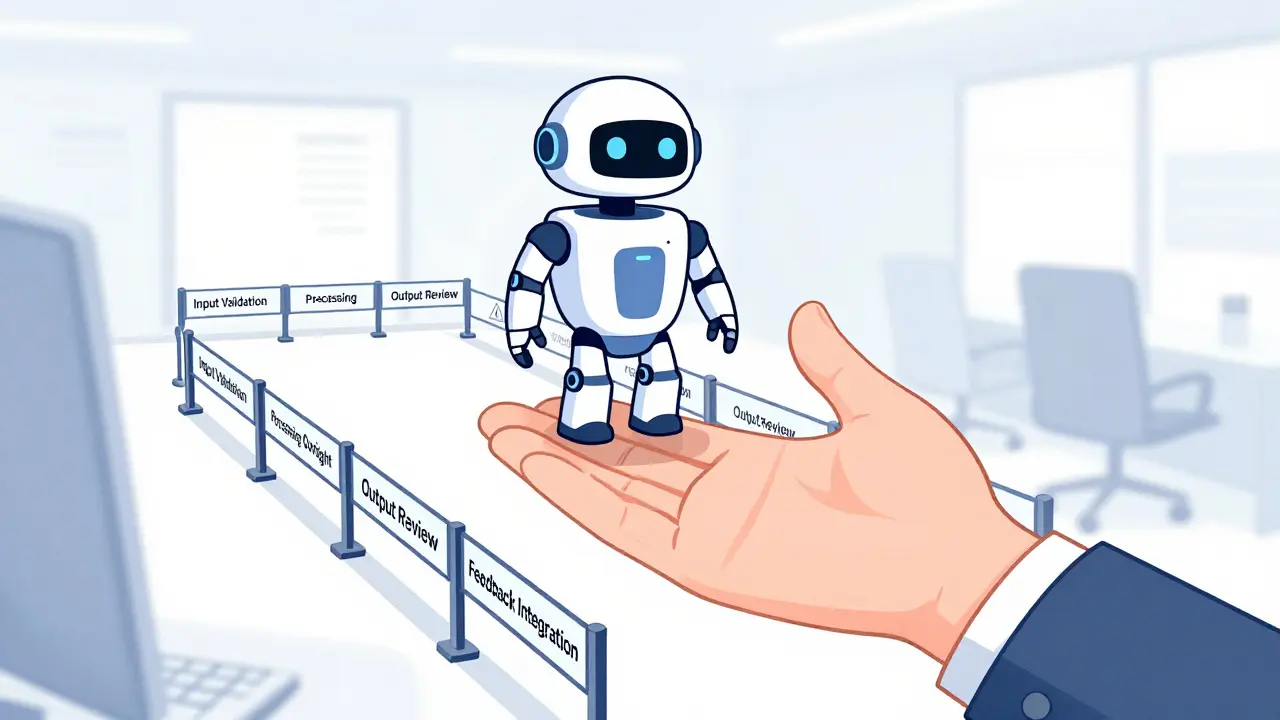

A good review workflow doesn’t just happen. It’s built in stages, each with clear roles and triggers. Here’s how it works in practice:- Input Validation: Before the AI even starts, someone checks the data feeding into it. Is the training data clean? Are the prompts specific? If you feed garbage in, you’ll get garbage out. A marketing team using AI to draft product descriptions might flag prompts that use outdated pricing or discontinued product names before they’re processed.

- Processing Oversight: While the AI works, monitors watch for red flags. This isn’t about staring at a screen all day. It’s about dashboards that alert you when output confidence drops below 85%, when the AI repeats the same phrase three times, or when it references sources not in your approved knowledge base. Real-time alerts let teams intervene before the output is even finalized.

- Output Review: This is where most of the work happens. A human reviews the AI’s final output against a checklist: Is it accurate? Is it aligned with brand voice? Does it comply with regulations? For customer service bots, this might mean checking responses for empathy, clarity, and compliance with data privacy rules. One financial services firm reduced compliance violations by 68% after introducing a mandatory two-person review for all AI-generated client communications.

- Feedback Integration: Every flagged issue, every corrected output, every tweak to a prompt gets logged. This isn’t just for audit purposes-it’s how you improve the AI. If reviewers notice the AI keeps misinterpreting “refund” as “exchange,” that’s data. That’s a prompt update. That’s a model retrain. Without this step, you’re stuck with the same mistakes forever.

Escalation Policies: When to Step In

Not every output needs a human. That’s the whole point of AI. But some outputs are high-risk. That’s where escalation policies come in. These aren’t vague guidelines. They’re rules with clear thresholds. Here’s how a smart escalation policy works:- By Risk Level: A chatbot answering FAQs about shipping times? One review per 100 responses. A system generating loan approval recommendations? Every single output gets reviewed. The risk level determines the human effort.

- By Decision Type: If the AI suggests a price change, a legal disclaimer, or a medical recommendation-that’s an automatic escalation. If it suggests a subject line for an email newsletter? Maybe not.

- By Volume Threshold: If the AI produces 500 responses in an hour and 12% are flagged by automated checks, the system triggers a full team review. No manual triage needed. The system escalates itself.

Training Humans to Outsmart Bias

Here’s the scary part: humans get lazy around AI. We call it automation bias. You see an AI output that looks professional, and you just click “approve.” You don’t question it. You don’t fact-check. You assume it’s right. To fix this, top teams use a trick: intentional errors. Every week, they insert a known mistake into the AI’s input stream-a fake product name, a false statistic, a biased phrase. If the reviewer doesn’t catch it, they get feedback. Not punishment. Just feedback. “You missed this. Here’s why it’s wrong.” One health tech company saw reviewer accuracy jump from 72% to 94% in three months using this method. The reviewers weren’t smarter. They were just more alert.Who’s on the Team?

Human oversight isn’t one person’s job. It’s a team sport.- Content Editors: They ensure tone, clarity, and brand alignment.

- Legal & Compliance Officers: They flag regulatory risks-GDPR, HIPAA, advertising rules.

- Data Scientists: They track model drift, prompt degradation, and data quality issues.

- End-Users: The people who actually use the AI daily. They know when something feels “off.”

Documentation Isn’t Bureaucracy-It’s Insurance

If you ever get audited, sued, or asked to explain why your AI said something harmful, you need proof you tried to prevent it. That’s what audit trails are for. Every human review should log:- Timestamp of the review

- Who reviewed it

- What was changed

- Why it was changed

- Which AI model version was used

- Which prompts and training data were involved

Common Mistakes (and How to Avoid Them)

- Mistake: Reviewing every single output. Solution: Use risk-based escalation. Only review what’s high-stakes.

- Mistake: Training reviewers once and forgetting. Solution: Run quarterly refreshers and intentional error tests.

- Mistake: Letting IT own oversight. Solution: Oversight needs legal, compliance, content, and user input. It’s not a tech problem-it’s a business one.

- Mistake: Waiting until after launch to add oversight. Solution: Build it into the design phase. If you’re building a GenAI tool, oversight should be in the spec sheet from day one.

Artificial Intelligence

Artificial Intelligence

Adithya M

March 25, 2026 AT 05:25Start small? Sure. But start with teeth. Mandatory human review on anything that touches legal, financial, or customer trust. No exceptions.

Tina van Schelt

March 25, 2026 AT 22:27But here’s the kicker: we didn’t train our reviewers to catch errors. We trained them to *question*. That’s the difference between compliance and culture. One team does it because they have to. The other does it because they can’t imagine not doing it.

Jessica McGirt

March 27, 2026 AT 14:34Also, end-users spotting tone issues? Absolutely. Frontline staff hear the emotional subtext customers don’t say out loud. They’re the canaries in the coal mine. If your AI sounds robotic to them, it’s already failed.

Mark Tipton

March 28, 2026 AT 16:32Here’s what nobody says: the moment you automate decision-making, you outsource accountability. And accountability? That’s the one thing no algorithm can fake.

Every time you say ‘trust the system,’ you’re gambling with someone’s job, reputation, or safety. The only way to win is to never fully trust it. Ever.

And don’t get me started on ‘feedback integration.’ That’s just a fancy term for ‘we’re feeding our mistakes back into the model like a self-licking ice cream cone.’ You’re not improving the AI-you’re reinforcing its biases. The model doesn’t learn from corrections. It learns from patterns. And your patterns are flawed.

Stop pretending this is a workflow problem. It’s a philosophical one. We’re outsourcing judgment to machines that don’t understand consequence. And we’re terrified to admit it.

Donald Sullivan

March 29, 2026 AT 19:57Bottom line: if your review process doesn’t make people uncomfortable, it’s not working. We now require reviewers to write a 1-sentence justification for every approved output. If they can’t explain why it’s safe, it gets blocked.

It’s slow. It’s annoying. It’s the only thing keeping us out of court.