When you tell an AI to build you a dashboard that tracks customer churn and it does so right away, with no code review, no pull requests, and no waiting for a dev team, you’re not just saving time. You’re bypassing every safety net your company ever built. That’s vibe coding: turning natural language into working software in seconds. But here’s the problem: if it works, people will use it. And if it breaks, no one knows why. That’s where tiered governance comes in, not as a brake but as the engine that lets vibe coding scale without collapsing.

Why Traditional Governance Fails with Vibe Coding

Traditional software development moves slowly for a reason. Code gets reviewed. Tests get run. Audits get logged. Someone owns every line. Vibe coding flips that. A sales lead types, "Build a tool that flags at-risk accounts and suggests follow-up emails," and 90 seconds later there’s a live web app with a React frontend, a Python backend, and a PostgreSQL database. No PR. No CI/CD pipeline. No security scan. Just… done. That’s not magic. It’s dangerous. The AI doesn’t write comments. It doesn’t document dependencies. It doesn’t care about compliance. And if the app works, it goes live. Suddenly you’ve got production code that no one can explain, fix, or audit. This isn’t a bug. It’s a systemic blind spot.

Enter tiered governance. Not another policy document. Not another approval form. A living system that adjusts its own rules based on risk, automatically.

The Three Layers of Vibe Coding Governance

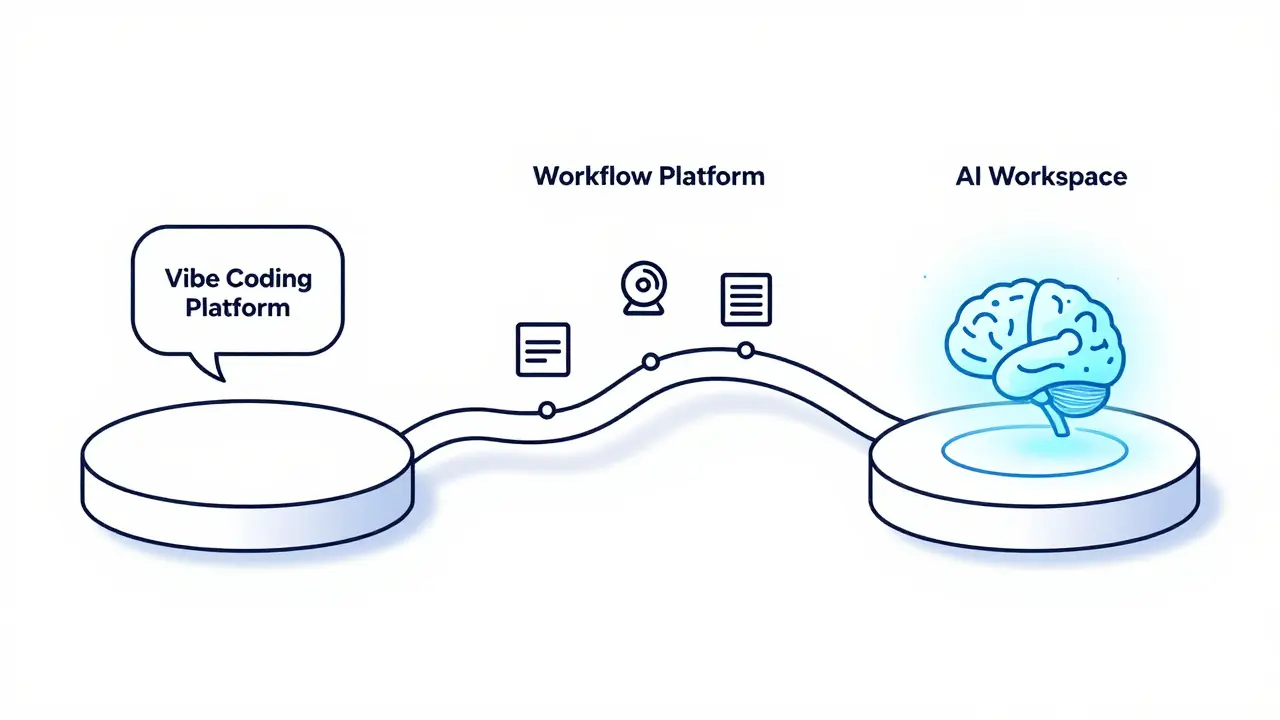

You can’t govern vibe coding with checklists. You need three integrated platforms working together:

- The Vibe Coding Platform - where ideas become prompts. This is where non-developers sketch what they want. No code. Just language. Drag-and-drop logic. AI fills in the gaps.

- The Workflow Platform - where actions get tracked. Every deployment, every change, every user interaction is logged. Role-based permissions. Approval gates. Audit trails. This is where control lives.

- The AI Workspace - where reasoning happens. Every prompt, every model version, every context used is stored. Not just logs. Evidence. You can click back and see why the AI chose one approach over another.
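To make the "click back and see why" idea concrete, here is a minimal Python sketch of how an AI Workspace record might be tied to a Workflow Platform audit entry. The schema (`PromptRecord`, `AuditTrail`, and their fields) is hypothetical and not taken from any particular product; hashing the record simply gives the audit trail a stable ID that points back to the evidence.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
import hashlib
import json


@dataclass(frozen=True)
class PromptRecord:
    """What the AI Workspace keeps for one generation step (hypothetical schema)."""
    prompt: str
    model_version: str
    context_refs: tuple[str, ...]  # documents or files the model was given
    rationale: str                 # the model's stated reason for its approach

    def digest(self) -> str:
        # Stable SHA-256 over the record so other systems can cite it by ID.
        payload = json.dumps(
            [self.prompt, self.model_version, list(self.context_refs), self.rationale]
        )
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


@dataclass
class AuditTrail:
    """Append-only deployment log kept by the Workflow Platform (hypothetical)."""
    entries: list[dict] = field(default_factory=list)

    def log_deployment(self, app: str, actor: str, evidence: PromptRecord) -> dict:
        entry = {
            "app": app,
            "actor": actor,
            "evidence_id": evidence.digest(),  # link back to the reasoning
            "at": datetime.now(timezone.utc).isoformat(),
        }
        self.entries.append(entry)
        return entry
```

Because the digest is deterministic, an auditor who has the stored `PromptRecord` can recompute it and confirm it matches the `evidence_id` in the deployment log.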

Risk Tiering: Not All Code Is Created Equal

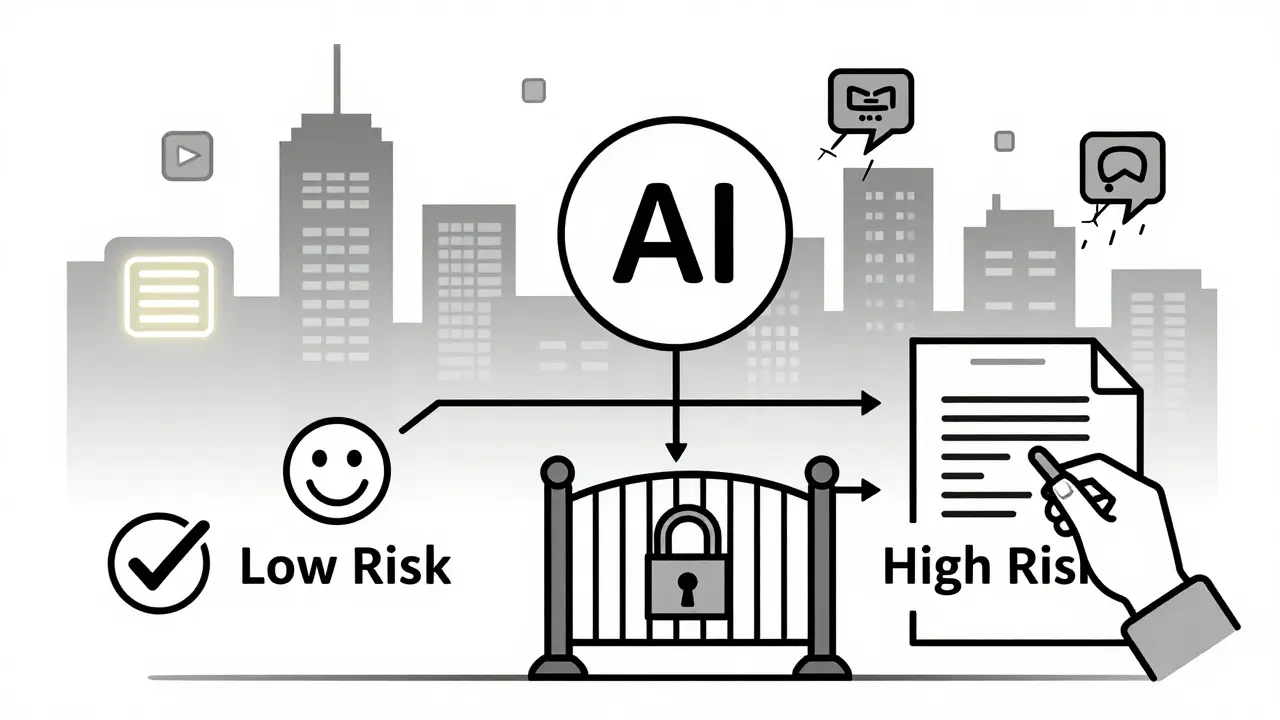

Not every vibe-coded app needs the same level of scrutiny. A tool that auto-schedules team meetings? Low risk. A tool that approves loan applications? High risk. Tiered governance doesn’t apply the same rules everywhere. It maps controls to impact:

- Lightweight Review - For low-risk features: automated linting, basic compliance checks, no human approval needed. The system learns from past safe deployments and auto-approves similar patterns.

- Deep Inspection - For high-risk features: mandatory human review, security scanning trained on AI-generated code, behavioral testing across user segments, and documented reasoning from the AI.
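The tier-to-controls mapping can be expressed as data rather than prose. The sketch below uses hypothetical control names and approximates "learns from past safe deployments" with an exact match against a set of previously approved pattern labels; a real system would need a richer notion of similarity. The article doesn’t say what happens to a low-risk feature with no matching history, so `auto_approves` simply returns `False` for it.

```python
from enum import Enum


class Tier(Enum):
    LIGHTWEIGHT = "lightweight"
    DEEP = "deep"


# Hypothetical control names; a real pipeline would map each one to an actual job.
CONTROLS: dict[Tier, list[str]] = {
    Tier.LIGHTWEIGHT: ["lint", "basic_compliance_check"],
    Tier.DEEP: [
        "human_review",
        "ai_code_security_scan",
        "behavioral_tests_by_segment",
        "ai_reasoning_documented",
    ],
}


def required_controls(tier: Tier) -> list[str]:
    """Checks a deployment in this tier must pass; returns a copy of the list."""
    return list(CONTROLS[tier])


def auto_approves(tier: Tier, pattern: str, known_safe_patterns: set[str]) -> bool:
    """True only for lightweight features whose pattern was previously deployed safely.

    Exact matching on a pattern label is a deliberate simplification.
    """
    return tier is Tier.LIGHTWEIGHT and pattern in known_safe_patterns
```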

Policy-as-Code: Governance That Runs Itself

Forget writing policies in Word docs. In vibe-coded environments, governance must be executable. That’s policy-as-code. Instead of saying, "All AI-generated code must be reviewed," you write a rule:

IF the feature accesses PII AND affects financial outcomes THEN require dual human approval AND run static analysis with AI-specific vulnerability patterns.

This rule lives inside the Workflow Platform. It triggers automatically. No exceptions. No human error. If a vibe-coded app tries to pull customer birthdates without approval, it gets blocked before it even deploys. Google Cloud and eSentire both use this in production. The result? 70% fewer security incidents from AI-generated code, and 40% faster time-to-value for low-risk tools.
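Here is one way the example rule could be made executable, as a plain Python sketch. The `Feature` fields and the second, PII-only branch (which models the "birthdates without approval" case) are assumptions for illustration; many teams would express the same logic in a dedicated policy engine rather than hand-written functions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Feature:
    name: str
    accesses_pii: bool
    affects_financial_outcomes: bool
    human_approvals: int = 0
    static_analysis_passed: bool = False


def evaluate_policy(feature: Feature) -> tuple[bool, list[str]]:
    """Apply the example rule; return (allowed_to_deploy, reasons_for_block)."""
    reasons: list[str] = []
    if feature.accesses_pii and feature.affects_financial_outcomes:
        # IF PII AND financial THEN dual human approval AND AI-specific static analysis.
        if feature.human_approvals < 2:
            reasons.append("requires dual human approval")
        if not feature.static_analysis_passed:
            reasons.append("requires static analysis with AI-specific vulnerability patterns")
    elif feature.accesses_pii and feature.human_approvals < 1:
        # Assumed extra rule: any PII access (e.g. customer birthdates) needs one approval.
        reasons.append("PII access requires approval")
    return (not reasons, reasons)
```

Because the function runs before deployment and returns its reasons, the Workflow Platform can both block the release and tell the builder exactly what is missing.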

Testing Beyond Functionality

Traditional QA checks whether code runs. Vibe-coded apps also need to check whether they actually serve their users. A feature might work perfectly (no bugs, clean syntax) and still fail users. Maybe it’s too slow on mobile. Maybe it misreads intent for non-native speakers. Maybe it only works for users in one region. That’s why behavioral monitoring is non-negotiable:

- Track task completion rates - Did users actually get what they needed?

- Measure time-to-value - How long until the tool solves the problem?

- Monitor error recovery - When it fails, does it guide users back?

- Analyze sentiment - Do users say "This saved me time" or "I hate this"?
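The four signals above can be computed from per-session records. This is a sketch under stated assumptions: the `Session` fields are hypothetical, and sentiment is approximated by an explicit thumbs-up/thumbs-down rating rather than analysis of free text.

```python
from dataclasses import dataclass
from statistics import median
from typing import Optional


@dataclass
class Session:
    completed_task: bool
    seconds_to_value: Optional[float]  # None if the tool never solved the problem
    hit_error: bool
    recovered_after_error: bool
    feedback: Optional[int]            # +1 / -1 from a hypothetical rating widget


def behavioral_metrics(sessions: list[Session]) -> dict[str, Optional[float]]:
    """Return None for any metric that has no data yet."""
    n = len(sessions)
    times = [s.seconds_to_value for s in sessions if s.seconds_to_value is not None]
    errored = [s for s in sessions if s.hit_error]
    rated = [s.feedback for s in sessions if s.feedback is not None]
    return {
        "task_completion_rate": sum(s.completed_task for s in sessions) / n if n else None,
        "median_time_to_value_s": median(times) if times else None,
        # Of the sessions that hit an error, how many got the user back on track?
        "error_recovery_rate": (
            sum(s.recovered_after_error for s in errored) / len(errored) if errored else None
        ),
        "positive_feedback_share": (
            sum(1 for r in rated if r > 0) / len(rated) if rated else None
        ),
    }
```

The median is used for time-to-value so that one abandoned or unusually slow session doesn’t dominate the number.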

Human-in-the-Loop: Approval That Makes Sense

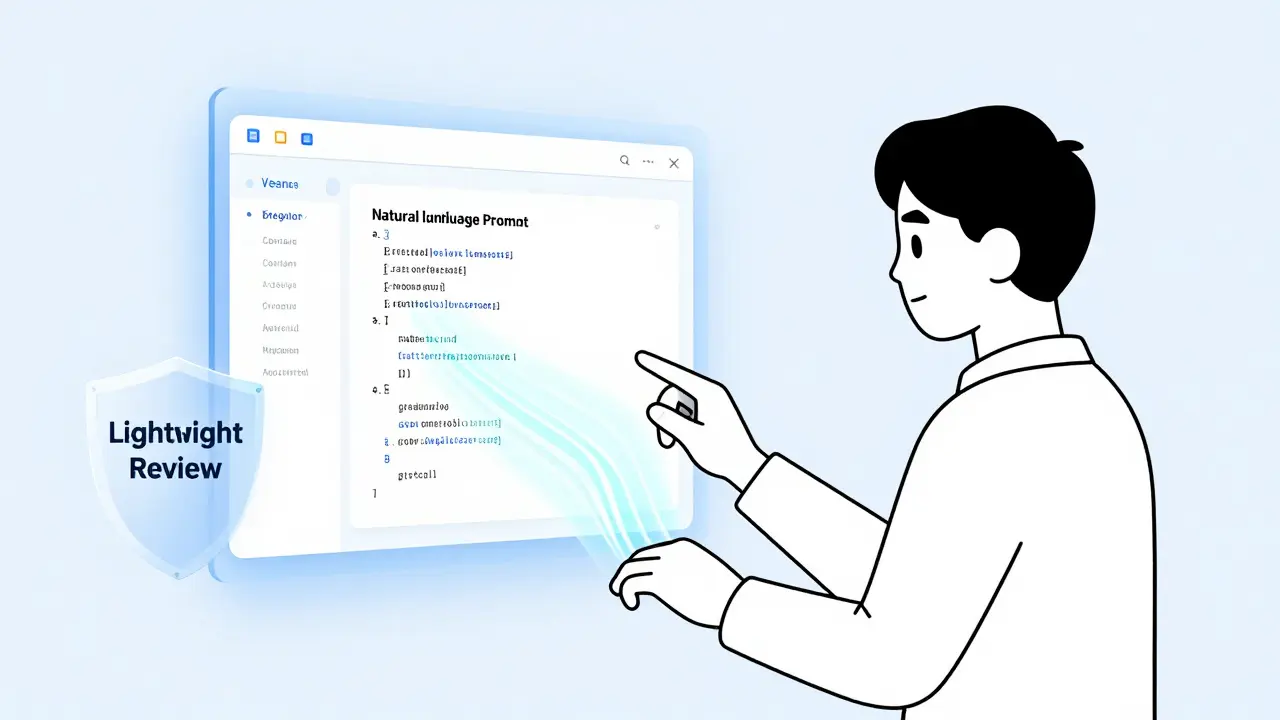

You can’t remove humans. But you can make their work smarter. In high-risk scenarios, governance doesn’t ask, "Do you approve this code?" It asks, "Do you approve this decision?" Here’s how it works:

- The AI generates a plan, implementation_plan.md, listing every file it will create, every library it will use, and every API it will call.

- A human reviews it. They can comment: "Use Redux, not Zustand," or "Add MFA here."

- The AI adjusts. The plan updates.

- Once approved, the AI executes. Every step is logged.
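The plan-review loop above behaves like a small state machine: any change request or revision withdraws approval, and execution refuses to run without it. The Python sketch below models implementation_plan.md as an in-memory object; the class and function names are hypothetical, and only a library swap is modeled as a revision.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ImplementationPlan:
    files: list[str]
    libraries: list[str]
    apis: list[str]
    comments: list[str] = field(default_factory=list)
    revision: int = 1
    approved: bool = False


def request_changes(plan: ImplementationPlan, comment: str) -> None:
    """A reviewer comments; any earlier approval is withdrawn."""
    plan.comments.append(comment)
    plan.approved = False


def revise(plan: ImplementationPlan, swap_library: Optional[tuple[str, str]] = None) -> None:
    """The AI adjusts the plan; a new revision always needs fresh approval."""
    if swap_library:
        old, new = swap_library
        plan.libraries = [new if lib == old else lib for lib in plan.libraries]
    plan.revision += 1
    plan.approved = False


def approve(plan: ImplementationPlan) -> None:
    plan.approved = True


def execute(plan: ImplementationPlan, log: list[str]) -> None:
    """Nothing runs until a human has approved the current revision; every step is logged."""
    if not plan.approved:
        raise PermissionError("plan not approved")
    for path in plan.files:
        log.append(f"rev {plan.revision}: create {path}")
    for api in plan.apis:
        log.append(f"rev {plan.revision}: call {api}")
```

Tagging each log line with the revision number ties what was executed to the exact plan the reviewer signed off on.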

Security Risks You Can’t Ignore

Vibe coding introduces new attack surfaces:

- Secrets leakage - A prompt like "Get the API key from .env" can lead the AI to copy the key itself into generated code.

- Model poisoning - A malicious user trains the AI to generate backdoors by feeding it bad examples.

- Overprivileged access - If the AI can call any API, it can delete data, not just create it.
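Two of these risks lend themselves to simple automated guards: scanning generated code for hard-coded secrets, and restricting which API calls a generated app may make. The patterns and the allowlist below are illustrative assumptions, far smaller than a real scanner’s rule set, and model poisoning is not addressed by this sketch.

```python
import re

# Illustrative patterns only; dedicated secret scanners ship far larger rule sets.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "hardcoded_api_key": re.compile(r"(?i)\bapi[_-]?key\s*[:=]\s*['\"][^'\"]{8,}['\"]"),
}


def find_secrets(code: str) -> list[str]:
    """Names of the secret patterns that appear in a piece of generated code."""
    return [name for name, pattern in SECRET_PATTERNS.items() if pattern.search(code)]


# Least privilege: the generated app may only make the calls it was explicitly granted.
# These method/path pairs are hypothetical.
ALLOWED_CALLS = {("GET", "/accounts"), ("POST", "/followups")}


def is_call_allowed(method: str, path: str) -> bool:
    return (method.upper(), path) in ALLOWED_CALLS
```

A deny-by-default allowlist means a generated tool that was meant to read accounts cannot quietly start deleting them.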

Staged Rollouts: Letting Risk Decide

Never push vibe-coded features to everyone at once. Use staged rollouts:

- Day 1: 5% of users - internal testers.

- Day 3: 20% - early adopters in one region.

- Day 7: 50% of all users, but only if behavioral metrics are stable.
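A staged rollout needs two pieces: a stable way to assign each user to a cohort, and a schedule that only advances when its conditions hold. The sketch below hashes user IDs into 100 buckets so that a user who sees the feature at 5% keeps seeing it at 20% and 50%. It does not model cohort selection by role or region (internal testers, one region); those filters, and all names here, are assumptions.

```python
import hashlib

# (start day, percent of users, requires stable behavioral metrics), per the schedule above.
SCHEDULE: list[tuple[int, int, bool]] = [(1, 5, False), (3, 20, False), (7, 50, True)]


def bucket(user_id: str, feature: str) -> int:
    """Deterministically map a user to a bucket in 0-99 for a given feature."""
    digest = hashlib.sha256(f"{feature}:{user_id}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % 100


def rollout_percent(day: int, metrics_stable: bool) -> int:
    """Share of users who should see the feature on a given day."""
    percent = 0
    for start_day, pct, needs_stable_metrics in SCHEDULE:
        if day < start_day:
            break
        if needs_stable_metrics and not metrics_stable:
            break  # hold at the previous stage until metrics settle
        percent = pct
    return percent


def is_enabled(user_id: str, feature: str, day: int, metrics_stable: bool) -> bool:
    return bucket(user_id, feature) < rollout_percent(day, metrics_stable)
```

Salting the hash with the feature name keeps the same users from always landing in the earliest cohort of every new tool.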

The Bigger Picture: Democratization With Discipline

Vibe coding isn’t a threat to IT. It’s the end of the bottleneck. Revenue teams build their own retention dashboards. HR builds interview scorecards. Support builds ticket triage bots. All without waiting for engineers. But without governance? Chaos. Bad code. Compliance failures. Breaches. Tiered governance doesn’t stop this. It enables it. It turns freedom into responsibility. It turns speed into sustainability. The future isn’t AI replacing developers. It’s AI giving everyone the power to build, and governance ensuring they build safely.

What Happens If You Do Nothing?

You’ll get a flood of tools. Some will work. Some will leak data. Some will break compliance. Someone will get fired. A regulator will show up. And then you’ll spend six months building a system you could’ve had in six weeks. Governance isn’t a barrier to vibe coding. It’s the only thing that makes vibe coding worth having.

What exactly is vibe coding?

Vibe coding is an AI-driven approach to software development where users describe what they want in natural language (like "Make a dashboard that shows churn risk") and the AI generates the full application, including UI, backend, and database. It skips traditional coding, letting non-developers build tools in minutes instead of weeks.

Why can’t we just use traditional code review for vibe-coded apps?

Traditional code review looks for syntax errors, logic flaws, or security holes in human-written code. But vibe-coded apps often generate code that’s correct but opaque. The AI might produce flawless code that no one can explain or modify. Reviewing it like traditional code misses the real risks: behavioral performance, user impact, and compliance alignment. You need to review outcomes, not just lines.

Do I need special tools to implement tiered governance?

You don’t need brand-new tools, but you do need platforms that support three layers: a vibe coding interface (like Google AI Studio), a workflow engine with audit trails (like Google Cloud Workflows or Apache Airflow), and an AI workspace that logs prompts and model versions (like Weights & Biases or LangSmith). Many enterprise tools now offer these built in. The key is connecting them, not buying new ones.

How do I know which risk tier a vibe-coded feature belongs to?

Use three filters: 1) What data does it touch? (PII, financial, health? High risk.) 2) What action does it trigger? (Approval, payment, access change? High risk.) 3) Who uses it? (Customers? Employees? Regulators?) If any answer is high, apply deep inspection. If all are low, lightweight review is enough. Automate this with policy-as-code rules.
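The three filters can be automated as a small classifier. The vocabulary sets are assumptions, and so is treating customers and regulators as high-risk audiences while employees are not; the answer above doesn’t say which audiences count as high.

```python
# Assumed vocabularies; an organization would define its own.
HIGH_RISK_DATA = {"pii", "financial", "health"}
HIGH_RISK_ACTIONS = {"approval", "payment", "access_change"}
HIGH_RISK_AUDIENCES = {"customers", "regulators"}  # assumption: employees are low risk


def classify(data: set[str], actions: set[str], audience: set[str]) -> str:
    """Any high answer means deep inspection; all low means lightweight review."""
    high = (
        bool(data & HIGH_RISK_DATA)
        or bool(actions & HIGH_RISK_ACTIONS)
        or bool(audience & HIGH_RISK_AUDIENCES)
    )
    return "deep_inspection" if high else "lightweight_review"
```

Usage: `classify({"calendar"}, {"schedule_meeting"}, {"employees"})` returns `"lightweight_review"`, while adding `"pii"` to the data set flips it to `"deep_inspection"`.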

Can vibe coding work in regulated industries like finance or healthcare?

Yes, but only with tiered governance. Companies like JPMorgan Chase and Kaiser Permanente use it for internal tools: chatbots for HR, risk-scoring dashboards for loans, automated claims triage. The difference? They enforce policy-as-code, require human-in-the-loop for high-risk outputs, and track behavioral metrics to prove compliance. It’s not about banning AI. It’s about controlling it.

Artificial Intelligence

chioma okwara

March 22, 2026 AT 20:14

Y'all act like vibe coding is some newfangled magic when it's just lazy engineering with extra steps. I've seen teams use this shit and then cry when a dashboard deletes their entire CRM because the AI thought 'flag at-risk accounts' meant 'delete all accounts with negative balance'. No code review? No audit trail? Bro. The only thing this needs is a fire extinguisher.

And don't get me started on 'policy-as-code'. That's just corporate jargon for 'we automated our negligence'. You think a rule saying 'if PII then require approval' stops someone from typing 'ignore compliance' in the prompt? Please. The AI doesn't care about your policy. It cares about what you *say*.

John Fox

March 23, 2026 AT 10:56

So basically you're saying let the non-tech people build stuff but make sure it doesn't blow up

Kinda makes sense

Why did we ever think humans were better at writing code anyway

Tasha Hernandez

March 24, 2026 AT 09:26

Oh sweet jesus another Silicon Valley cultist with a PowerPoint titled 'Governance That Runs Itself' like that's a feature and not a cry for help

You know what's scarier than AI-generated code? AI-generated *explanations* for why it's not your fault when the loan app denies a single mom because the model decided 'single' = 'unreliable'

I've seen this before. It always ends with someone sobbing in a Zoom call saying 'but the AI said it was safe' and HR quietly deleting their Slack account

They're not building tools. They're building time bombs with cute UIs and no manual.

Also who let you name a layer 'AI Workspace'? That sounds like a therapy room for robots.

I'm not saying don't use this. I'm saying bury it in a concrete bunker with a sign that says 'DO NOT TRUST THIS' and make everyone sign a waiver written in Comic Sans.

Anuj Kumar

March 25, 2026 AT 18:56

This is all a lie

Big Tech wants you to think AI can build stuff so they can fire coders and pay interns $10 an hour to 'review' the mess

And 'policy-as-code'? That's just code that says 'do what I say' but the AI ignores it anyway

I worked at a fintech that tried this

AI generated a payment system that sent $500 to every employee named 'John'

They said 'it was a low-risk feature'

Guess how many Johns we had

And now they blame the 'lack of governance'

There is no governance. There is only chaos with a dashboard

Christina Morgan

March 25, 2026 AT 22:22

I love how this piece doesn't just acknowledge the chaos, it *celebrates* it, then slaps on a bandage labeled 'tiered governance' like that’s enough

But honestly? This is the future. Not because it’s perfect, but because people are tired of waiting six weeks for a button that says 'send reminder'

I’ve watched a nonprofit in rural Kenya build a vaccine tracker in 47 minutes using vibe coding. No dev team. Just a WhatsApp group and a Google Form.

The real win isn’t the code. It’s the fact that someone who’s never touched Python got to solve a problem that mattered to them-without asking permission.

Yes, there are risks. But the bigger risk is keeping innovation locked behind a firewall of Jira tickets and code reviews that no one reads anyway.

Let’s not fix what’s broken by making it slower. Let’s fix it by making it *accessible*-and then build guardrails that adapt, not suffocate.

And yes, I’ve turned on behavioral monitoring in Firebase. It’s changed everything. People aren’t using the tool because it’s cool. They’re using it because it *works for them*. That’s the metric that matters.