You spend weeks training a large language model, building its general knowledge base carefully. Then you fine-tune it on a specific dataset, like legal documents or medical records. Suddenly, the model forgets how to answer basic questions. This isn't just an occasional glitch; it's a fundamental issue known as catastrophic forgetting: the phenomenon where neural networks lose previously acquired knowledge when trained on new tasks. If you aren't using the right mitigation strategies, your custom model might end up knowing less than the generic version.

Why Neural Networks Forget So Fast

The core problem lies in how optimization works. When you update parameters during fine-tuning, the model moves its weights to minimize error on the new task. Without guardrails, these updates overwrite the delicate adjustments made during pre-training. Imagine tuning a piano string so perfectly for one song that you ruin the tension for every other song. In deep learning terms, the optimizer follows the new task's loss surface, pushing the model away from the "general knowledge" region and into a narrow basin that is optimal only for the new data.

This was highlighted in research presented in early 2025 regarding GPT-J and LLaMA-3 models. Experiments showed that extensive fine-tuning on scientific tasks could degrade performance on general reasoning by significant margins. The model wasn't just ignoring old data; it actively destroyed the pathways required to recall it. Understanding this mechanism helps you realize why simply stopping early or using smaller learning rates often fails to solve the root cause.

Traditional Methods: EWC and Rehearsal

One of the earliest defenses is Elastic Weight Consolidation (EWC), a technique that regularizes training to protect parameters that were important for previous tasks. EWC estimates the importance of each parameter using the Fisher Information Matrix. It essentially puts "elastic bands" around critical weights. If the fine-tuning process tries to move those crucial parameters too far, the penalty increases, forcing the model to find solutions elsewhere. It's mathematically sound, but historically, calculating these matrices has been computationally expensive and difficult to scale for massive LLMs.
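As a rough illustration of the "elastic band" idea, here is a minimal sketch in plain Python. It assumes a diagonal approximation of the Fisher Information Matrix (the mean squared gradient per parameter); the function names, the `lam` strength, and the toy numbers are hypothetical and not taken from any library.

```python
def diagonal_fisher(per_sample_grads):
    """Approximate the diagonal Fisher as the mean squared gradient per parameter."""
    n = len(per_sample_grads)
    dim = len(per_sample_grads[0])
    return [sum(g[i] ** 2 for g in per_sample_grads) / n for i in range(dim)]


def ewc_penalty(params, anchor_params, fisher, lam=1.0):
    """Quadratic penalty: (lam / 2) * sum_i F_i * (theta_i - anchor_i)^2."""
    return 0.5 * lam * sum(
        f * (p - a) ** 2 for p, a, f in zip(params, anchor_params, fisher)
    )


# Toy setup: parameter 0 mattered a lot for the old task, parameter 1 barely did.
old_task_grads = [[2.0, 0.1], [-2.0, 0.1]]
fisher = diagonal_fisher(old_task_grads)
anchor = [1.0, 1.0]  # weights at the end of the old task

# Moving each parameter by the same amount costs very different penalties.
move_important = ewc_penalty([2.0, 1.0], anchor, fisher)
move_unimportant = ewc_penalty([1.0, 2.0], anchor, fisher)
```

During fine-tuning this penalty would be added to the new-task loss, so the optimizer is nudged toward changing the weights the old task did not depend on.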

Another classic approach is rehearsal. You keep a subset of the original pre-training data and feed it back into the model alongside the new task data. Think of it like studying flashcards while learning a new subject: you review the basics daily to keep them fresh. While effective, this requires access to the original data, which is often proprietary or impossible to store for modern foundation models. Storing terabytes of replay buffer is rarely a viable option for production teams.
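The rehearsal idea can be sketched as a batch builder that reserves part of every batch for old examples. This is only an illustration: the `replay_fraction` value, the function name, and the toy data are assumptions, and real pipelines would do this inside their data loader.

```python
import random


def mixed_batches(new_data, replay_data, batch_size=8, replay_fraction=0.25, seed=0):
    """Yield batches that mix new-task examples with replayed old examples."""
    rng = random.Random(seed)
    n_replay = int(batch_size * replay_fraction)
    n_new = batch_size - n_replay
    new_pool = list(new_data)
    rng.shuffle(new_pool)
    for start in range(0, len(new_pool), n_new):
        batch = new_pool[start:start + n_new]
        # Sample old examples with replacement so a small buffer can be reused.
        batch += [rng.choice(replay_data) for _ in range(n_replay)]
        rng.shuffle(batch)
        yield batch


new_examples = [("new", i) for i in range(12)]
replay_buffer = [("old", i) for i in range(4)]
batches = list(mixed_batches(new_examples, replay_buffer))
```

With these toy settings each batch of 8 holds 6 new examples and 2 replayed ones. The right ratio is an empirical question and depends on how much old data you can actually keep.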

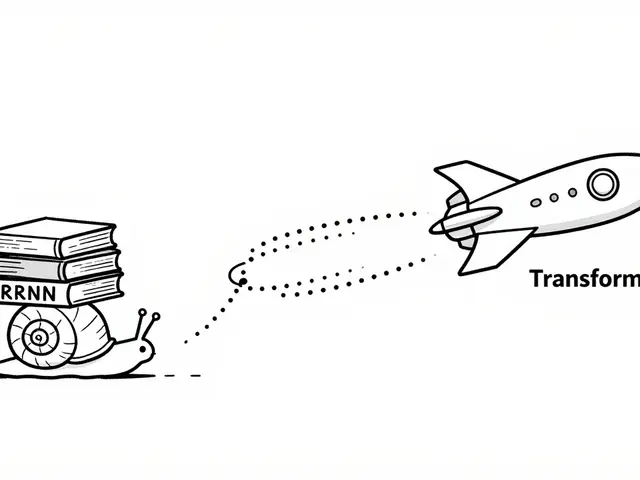

The LoRA Reality Check

Many developers turned to Low-Rank Adaptation (LoRA), a parameter-efficient fine-tuning method that freezes base weights and trains small low-rank matrices, assuming it would solve the problem automatically. LoRA keeps the main backbone frozen and only updates tiny adapter matrices. The logic was simple: if you don't change the big weights, you can't destroy the general knowledge. However, recent analysis published in 2025 contradicts this assumption.

Research comparing LoRA with Functionally Invariant Paths revealed that LoRA does not inherently prevent catastrophic forgetting in continual learning scenarios. Even though the weight changes are small, they can still shift the functional behavior enough to erase prior capabilities. This is a crucial distinction for anyone building production pipelines. You cannot rely on PEFT adapters alone if maintaining multi-task consistency is your priority. You need a strategy that explicitly manages functional stability, not just parameter magnitude.
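To see why a frozen backbone does not imply frozen behavior, consider this toy sketch of a single LoRA-adapted linear layer in plain Python. The matrices and the "trained" adapter values are made up for illustration; only the general form, base output plus a scaled low-rank update, follows the standard LoRA formulation.

```python
def matvec(matrix, vector):
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def lora_forward(W, A, B, x, alpha=1.0):
    """Return W x + (alpha / r) * B (A x), with W frozen and A, B trainable."""
    r = len(A)  # rank = number of rows in A
    base = matvec(W, x)
    update = matvec(B, matvec(A, x))
    return [b + (alpha / r) * u for b, u in zip(base, update)]


W = [[1.0, 0.0], [0.0, 1.0]]   # frozen base weights (2x2)
A = [[1.0, 1.0]]               # rank-1 down-projection (1x2)
B_init = [[0.0], [0.0]]        # B starts at zero, so the adapter is a no-op
B_trained = [[0.5], [-0.5]]    # hypothetical values after fine-tuning

x = [1.0, 2.0]
before = lora_forward(W, A, B_init, x)     # same as the base layer's output
after = lora_forward(W, A, B_trained, x)   # W is untouched, the output is not
```

`W` is identical in both calls, yet the layer's output moves. Whether that movement harms older capabilities depends on the inputs those capabilities rely on, which is why the evaluation has to happen in function space.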

Geometric and Novel Approaches

To get past the limitations of LoRA and EWC, we look at geometry-based methods. Functionally Invariant Paths (FIP), a technique that optimizes model weights along paths that preserve functional performance, treats the weight space as a curved Riemannian manifold. Instead of looking at raw distance between numbers, FIP ensures the newly trained network stays close to the original in functional space. Surprisingly, FIP allows larger changes to parameters compared to LoRA but achieves better retention of old tasks because it respects the topology of the loss landscape.
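The difference between distance in weight space and distance in functional space can be shown with a toy linear model. This is not the FIP algorithm, only a sketch of the distinction it relies on; the helper names and numbers are hypothetical.

```python
import math


def parameter_distance(w_old, w_new):
    """Euclidean distance between two weight vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(w_old, w_new)))


def functional_distance(w_old, w_new, probes):
    """Root-mean-square gap between the two models' outputs on probe inputs."""
    def predict(w, x):
        return sum(wi * xi for wi, xi in zip(w, x))
    gaps = [(predict(w_old, x) - predict(w_new, x)) ** 2 for x in probes]
    return math.sqrt(sum(gaps) / len(gaps))


# Old-task inputs barely use the second feature.
old_task_probes = [[1.0, 0.0], [2.0, 0.01], [-1.0, 0.0]]
w_old = [1.0, 0.0]
w_big_but_safe = [1.0, 5.0]       # large move along an insensitive direction
w_small_but_harmful = [1.5, 0.0]  # small move along a sensitive direction

big_param = parameter_distance(w_old, w_big_but_safe)
small_param = parameter_distance(w_old, w_small_but_harmful)
big_func = functional_distance(w_old, w_big_but_safe, old_task_probes)
small_func = functional_distance(w_old, w_small_but_harmful, old_task_probes)
```

Here the update that travels ten times farther in weight space changes the old-task outputs far less than the small one. A method that constrains functional distance can therefore afford large parameter changes, as long as they run along directions the old tasks do not depend on.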

A newer contender is Selective Token Masking (STM), a method that mitigates forgetting by masking high-perplexity tokens during training. Published in 2025, STM takes a different angle entirely. It masks tokens that confuse the model (high perplexity) rather than focusing solely on parameters. By preventing the model from latching onto confusing noise in the new data, it preserves the ability to generalize. Experiments across Llama-3 and Gemma 2 demonstrated consistent effectiveness, suggesting a shift toward input-level control over output-level regularization.
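A minimal sketch of the masking idea, assuming per-token log-probabilities are already available from the model. The hard perplexity cutoff and its value are assumptions made for illustration; the published method may select tokens differently.

```python
import math


def masked_token_loss(token_logprobs, max_perplexity=50.0):
    """Average negative log-likelihood over tokens under a perplexity cutoff.

    Returns (loss, mask), where mask[i] is True if token i contributes.
    """
    losses = [-lp for lp in token_logprobs]
    # Per-token perplexity is exp(negative log-probability).
    mask = [math.exp(loss_i) <= max_perplexity for loss_i in losses]
    kept = [loss_i for loss_i, keep in zip(losses, mask) if keep]
    if not kept:
        return 0.0, mask
    return sum(kept) / len(kept), mask


# Natural-log probabilities the model assigns to each target token (toy values).
logprobs = [math.log(0.5), math.log(0.25), math.log(0.001), math.log(0.5)]
loss, mask = masked_token_loss(logprobs)
```

In this toy sequence the third token has a perplexity of about 1000, so it is excluded and the loss is averaged over the other three. In a training framework the same effect is usually achieved by marking masked positions so the loss function ignores them.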

Choosing the Right Strategy

Selecting a technique depends on your compute budget and data availability. If you have full access to your pre-training data, replay methods remain robust despite the storage cost. For most teams constrained by memory, a hybrid approach like EWCLoRA combines the efficiency of LoRA with the parameter protection of EWC. Below is a comparison of the most relevant methods for 2026 workflows:

| Technique | Computational Cost | Data Access Required | Best Use Case |
|---|---|---|---|
| EWC | High | No | Critical legacy task preservation |
| LoRA (Standalone) | Low | No | Rapid prototyping (risk of forgetting) |
| FIP | Medium-High | No | Multitask continual learning |
| STM | Low | No | Token-level precision tasks |
| Replay | Medium | Yes | High-fidelity domain adaptation |

If you are working with consumer-grade GPUs, you might lean toward hybrid PEFT methods. Remember to evaluate your model not just on the new task, but on a representative hold-out set of previous tasks after every training epoch. This validation step is the only way to know if you are actually fighting forgetting effectively. Some frameworks now offer dynamic regularization coefficients that adjust layer-wise importance automatically, reducing the need for manual tuning of hyperparameters.
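The per-epoch check on previous tasks can be as simple as comparing hold-out scores against a pre-fine-tuning baseline. The sketch below assumes you already have an evaluation routine that produces one score per old task; the task names, scores, and the `max_drop` tolerance are invented for illustration.

```python
def check_retention(baseline_scores, current_scores, max_drop=0.02):
    """Return the old tasks whose score fell more than max_drop below baseline."""
    regressions = {}
    for task, baseline in baseline_scores.items():
        drop = baseline - current_scores[task]
        if drop > max_drop:
            regressions[task] = drop
    return regressions


# Scores measured on old-task hold-out sets before fine-tuning (made-up numbers).
baseline = {"general_qa": 0.71, "arithmetic": 0.64}
# Scores measured after each fine-tuning epoch (made-up numbers).
history = [
    {"general_qa": 0.70, "arithmetic": 0.64},  # epoch 1
    {"general_qa": 0.62, "arithmetic": 0.63},  # epoch 2
]

for epoch, scores in enumerate(history, start=1):
    regressed = check_retention(baseline, scores)
    if regressed:
        print(f"epoch {epoch}: forgetting detected on {sorted(regressed)}")
        break
```

Stopping or rolling back to the last clean checkpoint when the check fires is one option; tightening the regularization strength and resuming is another.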

Don't settle for the first tool you find. The landscape changed significantly in late 2025, proving that assumptions about frozen weights need re-evaluation. Whether you choose geometric paths or token masking, the goal remains the same: adaptability without amnesia.

Frequently Asked Questions

Does freezing model weights completely stop catastrophic forgetting?

Not necessarily. Recent studies show that even methods that freeze base weights (like standard LoRA) can still cause functional degradation if the adapters push the hidden states into unstable regions. Freezing helps, but it is not a guarantee against losing general capabilities.

What is the fastest method to mitigate forgetting?

Hybrid approaches like EWCLoRA offer a good balance, but Selective Token Masking (STM) has shown superior speed in some 2025 benchmarks, requiring less computation than calculating complex information matrices for every parameter.

Can I fix forgetting after training is complete?

Once the weights are shifted, recovery is difficult without original pre-training data. It is much more efficient to apply regularization techniques during the fine-tuning process itself rather than trying to reverse-engineer performance afterward.

Is LoRA safe for mission-critical applications?

Standard LoRA carries risks regarding functional drift. For mission-critical systems requiring strict reliability across multiple domains, consider combining LoRA with explicit regularization (EWCLoRA) or switching to FIP-based methodologies.

How much memory does FIP require compared to Full Fine-Tuning?

While FIP modifies more parameters than LoRA, it does not require storing the entire model gradient history like EWC does. It generally fits within the memory constraints of modern data center GPUs, though it uses slightly more overhead than pure parameter-efficient methods.

Artificial Intelligence

Albert Navat

April 2, 2026 AT 11:50

LoRA creates false confidence about functional stability since hidden state divergence happens before weight shifts ever become visible.

Pamela Tanner

April 2, 2026 AT 20:17

Elastic Weight Consolidation remains a robust solution despite the computational overhead associated with calculating the Fisher Information Matrix. It effectively prevents parameter drift that typically occurs during sequential task learning scenarios. Teams who prioritize accuracy over inference speed should consider this approach seriously.

Steven Hanton

April 3, 2026 AT 22:03

Your observation regarding functional drift aligns with the recent findings presented in the twenty-fifth analysis. It is indeed crucial to distinguish between parameter stability and functional invariance during optimization phases. Implementing hybrid strategies often yields the best results in high-stakes environments where reliability is paramount. We should always validate against a hold-out set from previous tasks to confirm retention metrics.

ravi kumar

April 5, 2026 AT 17:47

Selective Token Masking offers a lightweight alternative that does not require storing massive replay buffers. It helps maintain performance without needing access to the proprietary pre-training datasets most companies guard closely. The input-level control seems promising for smaller teams with limited compute resources.

Kristina Kalolo

April 7, 2026 AT 13:38

The trade-off between storage costs and model fidelity is often underestimated in deployment pipelines. Many practitioners overlook the logistical challenges involved in maintaining a representative replay buffer. Storing terabytes of historical data requires significant infrastructure investment that smaller firms cannot justify. Furthermore, regulatory concerns around data privacy can restrict access to original training corpora entirely. This limitation forces engineers to rely on regularization techniques that do not depend on external memory. Methods like EWC attempt to approximate the benefit of rehearsal through internal weight constraints. However, these approaches struggle to scale effectively across the vast parameter space of modern architectures. Computational expenses grow exponentially when calculating importance scores for every single parameter in the network. Recent studies suggest that functional paths provide a more efficient geometric interpretation of the problem. By navigating the loss landscape along specific manifolds, models retain general capabilities better. This ensures that the model does not slide into narrow local minima defined only by new tasks. Validation protocols must include diverse benchmarks to capture any degradation in reasoning abilities. Monitoring perplexity on old domains during fine-tuning serves as an early warning signal. Ignoring these signals can lead to catastrophic failure in downstream applications relying on general knowledge. Ultimately, choosing a strategy depends on the specific resource constraints and risk tolerance of the organization.