Tag: LLM fine-tuning

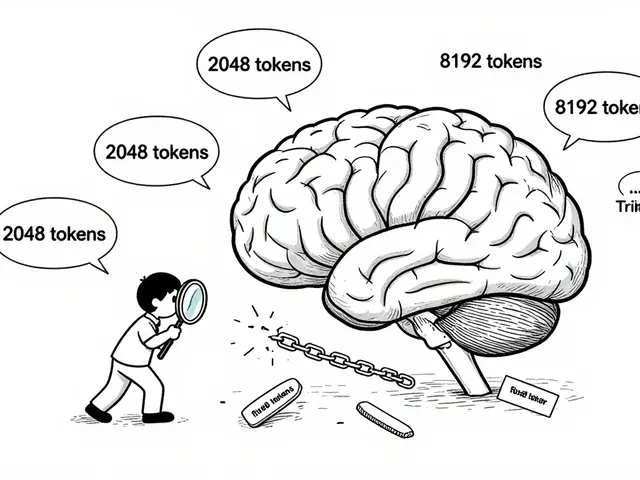

Explore techniques for mitigating catastrophic forgetting in LLM fine-tuning. We analyze LoRA, EWC, FIP, and hybrid methods to help you preserve a model's existing knowledge.

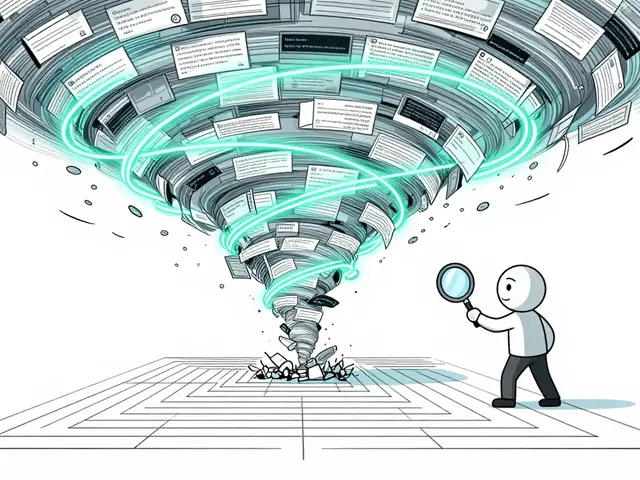

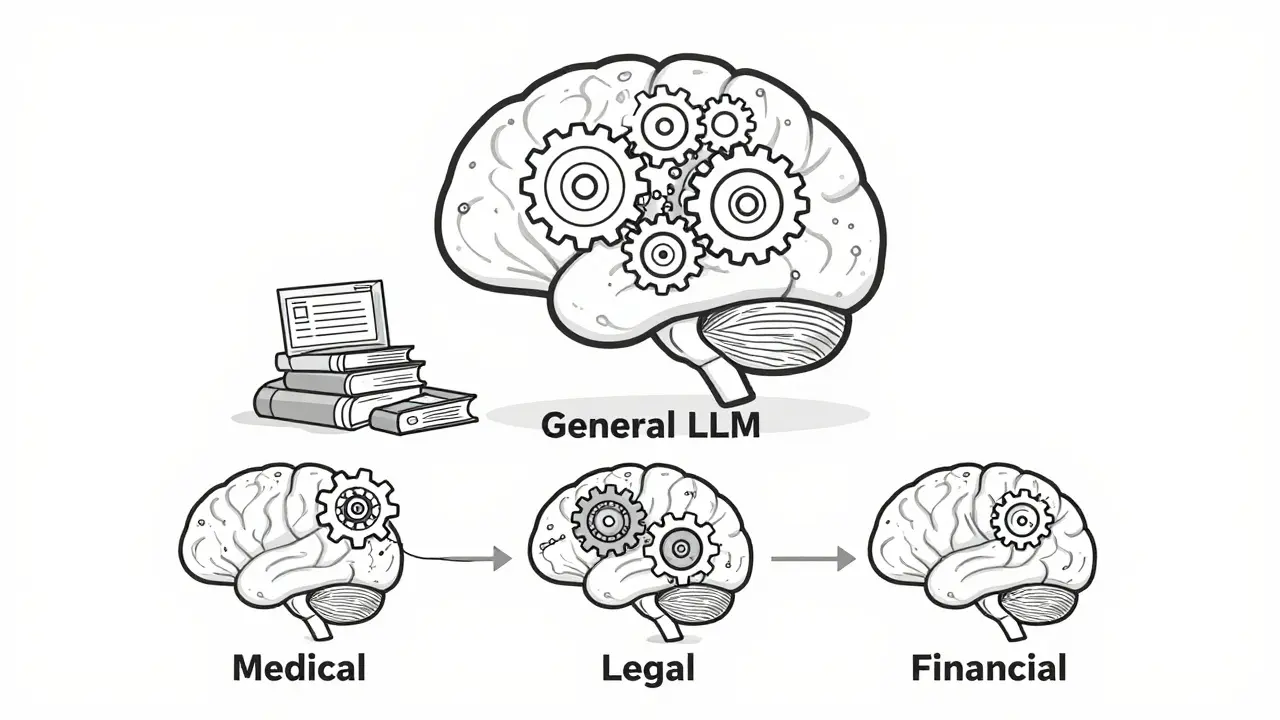

Domain adaptation in NLP lets you fine-tune large language models to understand specialized fields like medicine, law, or finance. Learn how it works, what methods deliver the best results, and why it's essential for real-world AI applications.

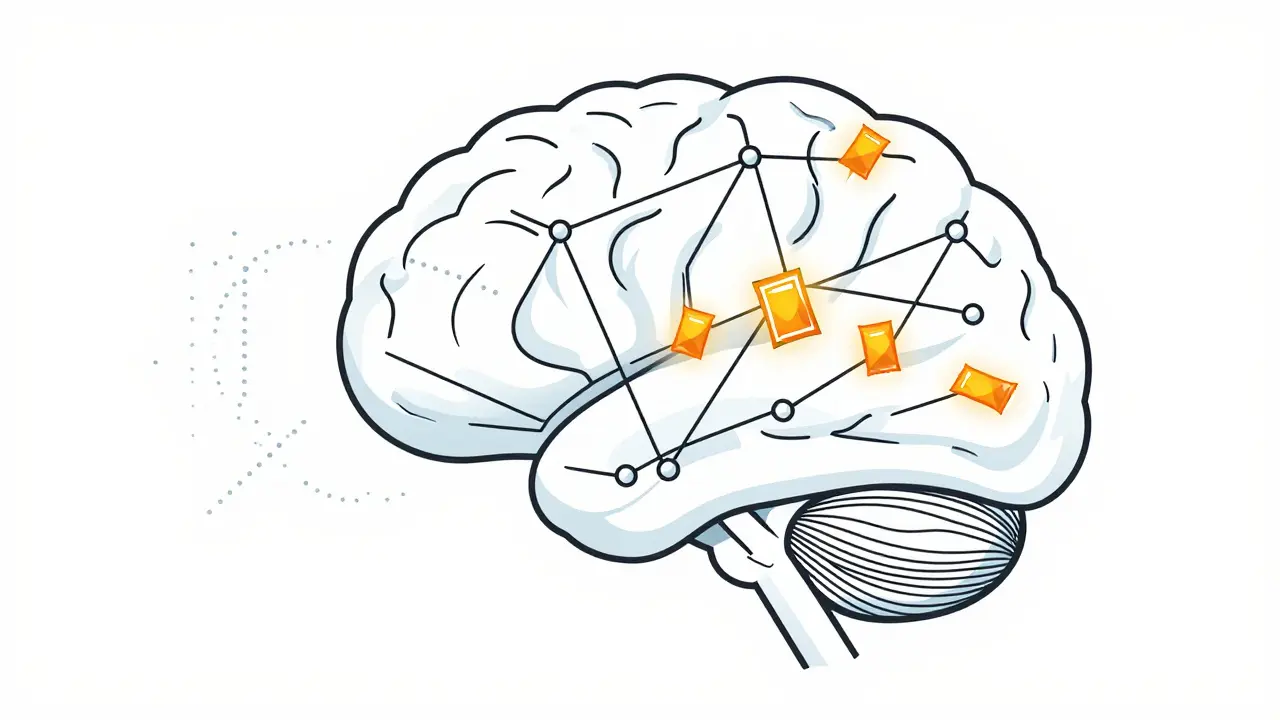

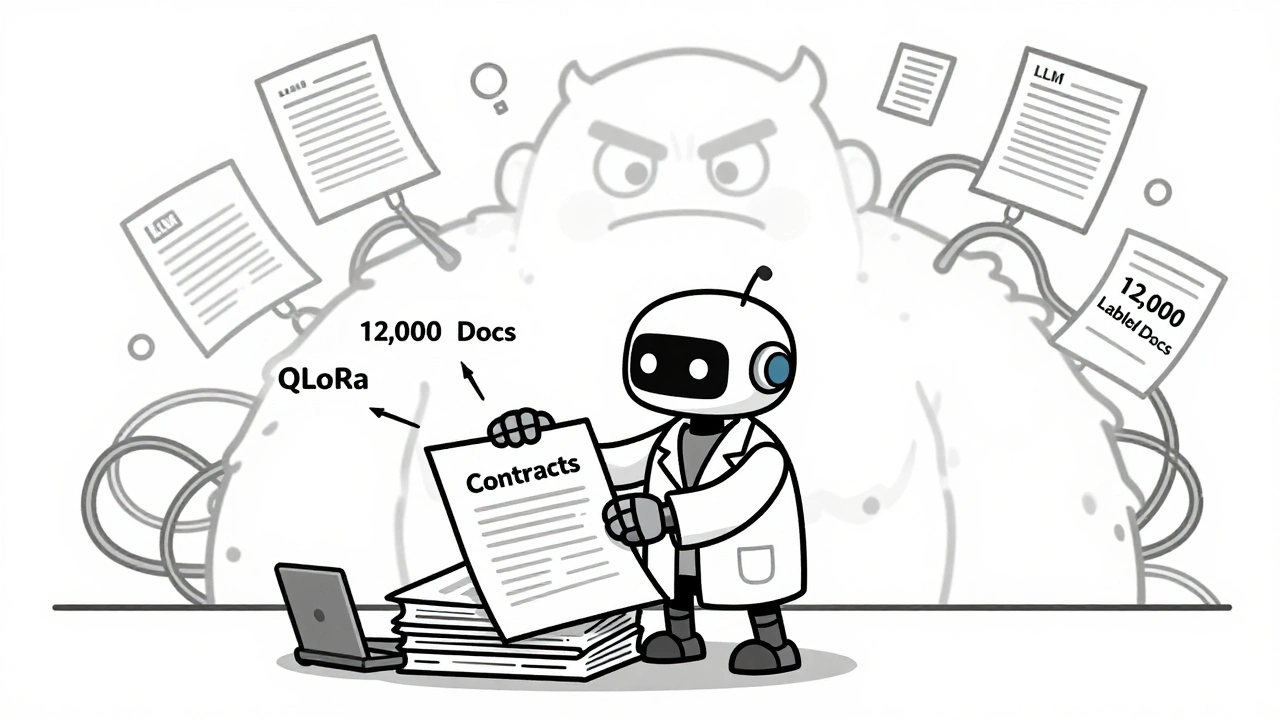

Fine-tuned LLMs can outperform general-purpose models on niche tasks like legal analysis, medical coding, and compliance. Learn how specialization beats scale, when to use QLoRA, and why hybrid RAG systems are the future.

Artificial Intelligence