Writing the perfect prompt often feels like casting a spell: one wrong word and your AI output goes from brilliant to bizarre. For most of us, spending hours tweaking a single sentence is a waste of time. This is where prompt libraries come in. A prompt library is a curated collection of high-quality prompts designed to give generative AI systems consistent, reliable instructions. By using a library, you stop guessing and start scaling, moving from a trial-and-error approach to a standardized system of excellence.

The Real Value of Using a Prompt Library

If you are still writing every prompt from scratch, you are working harder than you need to. Research from MIT in 2024 showed that using a library can lead to 63% faster task completion and a 41% jump in output quality. Why? Because you are leveraging the collective intelligence of thousands of engineers who have already failed so you don't have to.

Whether you use a public platform like PromptHero (which holds about 32% of the market) or an internal corporate database, the goal is the same: repeatability. When a company finds a prompt that generates perfect quarterly reports, they don't want every employee reinventing the wheel. They want a single, governed source of truth.

Core Prompt Categories and Patterns

Not all prompts are created equal. To organize a library effectively, you need to categorize prompts by function. In practice, most successful libraries split prompts into four functional types:

- Contextual Prompts: These provide the background. Instead of saying "Write a blog," you provide the target audience, brand voice, and goal.

- Directive Prompts: Direct, task-oriented instructions. "Summarize this transcript into five bullet points."

- Exploratory Prompts: Open-ended queries designed to brainstorm or expand ideas.

- Sequential Prompts: A series of prompts designed to be used in a specific order to reach a complex outcome.
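A minimal sketch of how these four categories might be modeled as a data structure, assuming a Python-based library. The names `PromptCategory`, `PromptEntry`, and `by_category` are hypothetical, not taken from any particular platform.

```python
from dataclasses import dataclass, field
from enum import Enum


class PromptCategory(Enum):
    """The four functional categories described above."""
    CONTEXTUAL = "contextual"
    DIRECTIVE = "directive"
    EXPLORATORY = "exploratory"
    SEQUENTIAL = "sequential"


@dataclass
class PromptEntry:
    name: str
    category: PromptCategory
    text: str
    # Only used by sequential prompts: the ordered steps to run one after another.
    steps: list[str] = field(default_factory=list)


def by_category(library: list[PromptEntry], category: PromptCategory) -> list[PromptEntry]:
    """Return every entry in the library that belongs to the given category."""
    return [entry for entry in library if entry.category == category]


library = [
    PromptEntry("summarize-transcript", PromptCategory.DIRECTIVE,
                "Summarize this transcript into five bullet points."),
    PromptEntry("brainstorm-topics", PromptCategory.EXPLORATORY,
                "List ten unusual angles for a post about remote work."),
    PromptEntry("report-pipeline", PromptCategory.SEQUENTIAL, "",
                steps=["Extract the key figures.", "Draft a summary.", "Polish the tone."]),
]

print([entry.name for entry in by_category(library, PromptCategory.DIRECTIVE)])
```

Keeping the category as an enum rather than a free-text tag means a typo like "directve" fails loudly instead of silently creating a fifth category.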

Beyond categories, professional libraries use Prompt Patterns. For example, the "Persona Pattern" tells the AI to "Act as a Senior DevOps Engineer," while the "Audience Persona Pattern" asks the AI to "Explain this to me as if I am a five-year-old." Using these patterns ensures that the AI provides not just a correct answer, but one in the right tone and format.
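The two patterns can be treated as reusable wrappers around any task. This is a minimal sketch assuming simple string composition; `apply_patterns` is a hypothetical helper, and the wording of each pattern is copied from the examples above.

```python
from typing import Optional


def apply_patterns(task: str,
                   persona: Optional[str] = None,
                   audience: Optional[str] = None) -> str:
    """Wrap a task with the Persona and Audience Persona patterns.

    persona:  who the AI should act as (Persona Pattern)
    audience: who the answer is for (Audience Persona Pattern)
    """
    parts = []
    if persona:
        parts.append(f"Act as a {persona}.")
    parts.append(task)
    if audience:
        parts.append(f"Explain this to me as if I am {audience}.")
    return " ".join(parts)


prompt = apply_patterns(
    "Describe what a blue-green deployment is.",
    persona="Senior DevOps Engineer",
    audience="a five-year-old",
)
print(prompt)
```

Because both patterns are optional arguments, the same stored task can be reused with different personas or audiences without duplicating library entries.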

| Platform | Primary Audience | Key Strength | Pricing Model |
|---|---|---|---|
| PromptHero | Creatives / General | Cross-platform compatibility | Freemium |
| FlowGPT | Collaborative Teams | Community editing | Tiered Subscription |
| PromptBase | Professionals / Enterprise | High-end curated prompts | Marketplace / Per Seat |

Solving the Versioning Nightmare

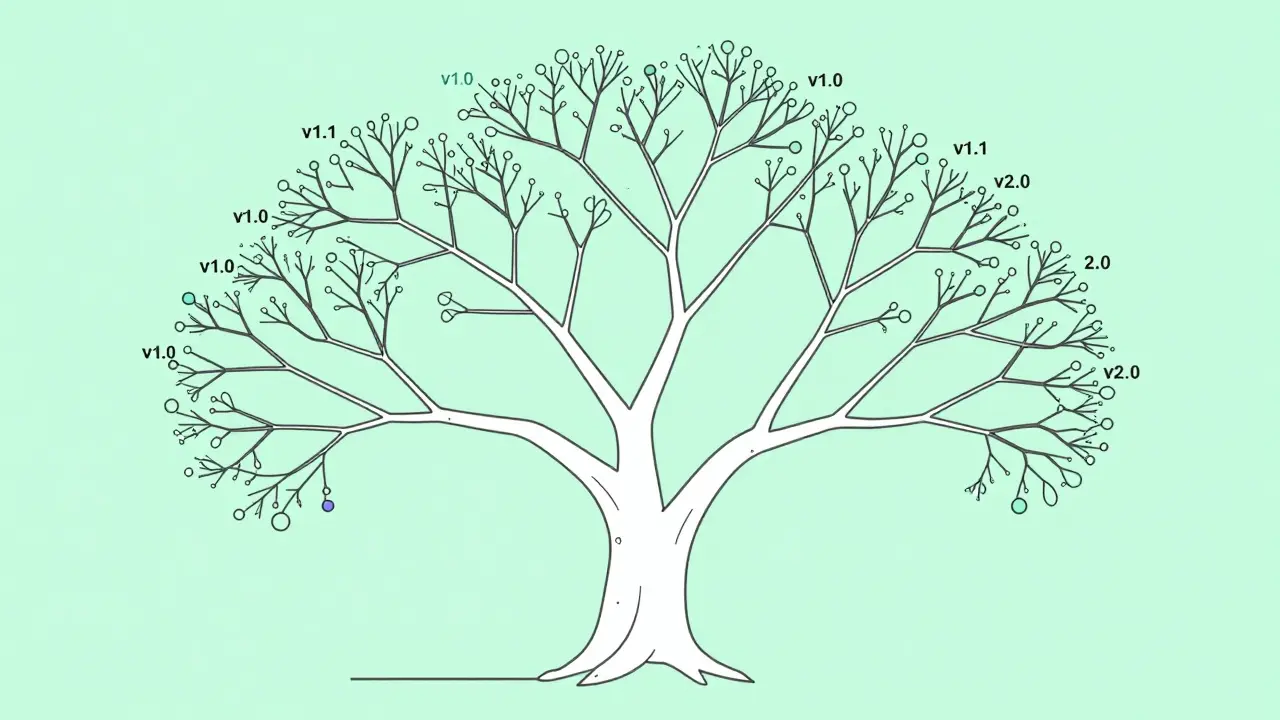

Here is the problem: AI models change. A prompt that worked perfectly in GPT-4 might produce hallucinations in GPT-5. This is why Prompt Versioning is non-negotiable for any professional setup. You cannot simply overwrite a prompt; you need a history.

About 78% of enterprise libraries now use Git-based versioning. This allows teams to track major versions (v1.0, v2.0) for big structural changes and minor versions (v1.1, v1.2) for small tweaks in wording. If a model update breaks your workflow, you can instantly roll back to a previous version that was stable.
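The major/minor scheme and the rollback behavior can be illustrated without Git. This is a simplified in-memory sketch assuming a hypothetical `PromptHistory` class; a real setup would store each version as a commit or tag in a repository rather than in a Python list.

```python
class PromptHistory:
    """Append-only version history for one prompt.

    A simplified stand-in for a Git-backed store: old versions are never
    overwritten, and "rolling back" just moves the active pointer.
    """

    def __init__(self) -> None:
        self.versions: list[tuple[str, str]] = []  # (label, prompt text)
        self.active = -1  # index of the version currently in use

    @staticmethod
    def _parse(label: str) -> tuple[int, int]:
        major, minor = label.lstrip("v").split(".")
        return int(major), int(minor)

    def commit(self, text: str, major: bool = False) -> str:
        """Add a version: major=True for structural changes, False for wording tweaks."""
        if not self.versions:
            new = (1, 0)
        else:
            # Numbers always grow from the newest recorded version, even after a rollback.
            last_major, last_minor = self._parse(self.versions[-1][0])
            new = (last_major + 1, 0) if major else (last_major, last_minor + 1)
        label = f"v{new[0]}.{new[1]}"
        self.versions.append((label, text))
        self.active = len(self.versions) - 1
        return label

    def rollback_to(self, label: str) -> str:
        """Make an earlier version active again and return its text."""
        for index, (version_label, text) in enumerate(self.versions):
            if version_label == label:
                self.active = index
                return text
        raise KeyError(f"unknown version {label}")

    def active_label(self) -> str:
        return self.versions[self.active][0]


history = PromptHistory()
history.commit("Summarize the quarterly report.")                   # v1.0
history.commit("Summarize the quarterly report in five bullets.")   # v1.1 (wording tweak)
history.commit("You are a financial analyst. Summarize the quarterly report in five bullets.",
               major=True)                                           # v2.0 (structural change)
history.rollback_to("v1.1")  # a model update breaks v2.0, so revert
print(history.active_label())
```

Moving a pointer instead of deleting the broken version keeps the full history available for the change log.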

When managing versions, follow a few rules of thumb:

- Tag by Model: Always label each prompt with the specific model version it was tested on (e.g., "MidJourney v6.1").

- Log the Change: Don't just change "helpful" to "concise." Document why the change was made and whether the output improved.

- Test Side-by-Side: Before promoting a v1.2 prompt to the main library, run it against v1.1 using the same input to verify the quality gain.
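The side-by-side rule can be sketched as a small harness that runs two prompt versions on identical inputs and tallies which one scores higher. `run_model` and `score` are placeholders you would supply; the echoing `fake_model` and the brevity metric below are hypothetical stand-ins used only so the example runs offline.

```python
from typing import Callable


def side_by_side(old_prompt: str, new_prompt: str, inputs: list[str],
                 run_model: Callable[[str], str],
                 score: Callable[[str], float]) -> dict[str, int]:
    """Run two prompt versions on the same inputs and count which scores higher."""
    tally = {"old": 0, "new": 0, "tie": 0}
    for item in inputs:
        old_score = score(run_model(f"{old_prompt}\n\n{item}"))
        new_score = score(run_model(f"{new_prompt}\n\n{item}"))
        if new_score > old_score:
            tally["new"] += 1
        elif old_score > new_score:
            tally["old"] += 1
        else:
            tally["tie"] += 1
    return tally


def fake_model(prompt: str) -> str:
    # Hypothetical stand-in: echoes the prompt. Replace with a real model call.
    return prompt


def brevity_score(output: str) -> float:
    # Hypothetical metric: shorter output scores higher.
    return -len(output)


result = side_by_side("Summarize the following text in detail:",
                      "Summarize briefly:",
                      ["Q3 revenue rose 4%.", "Churn fell to 2%."],
                      fake_model, brevity_score)
should_promote = result["new"] > result["old"]
print(result, should_promote)
```

Passing the model and the metric in as functions keeps the harness independent of any particular provider or quality measure.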

Governance: Keeping the AI on the Rails

For businesses, a prompt library isn't just a convenience; it's a compliance requirement. With the EU AI Act imposing documentation and risk-management obligations on high-risk AI systems, documented governance of business-critical prompts is fast becoming mandatory. Prompt Governance is the process of auditing, approving, and monitoring prompts to ensure they are safe and unbiased.

The danger of "standardized" prompts is a phenomenon called homogenization. When everyone uses the same top-rated template, creativity drops, and existing biases are amplified. IBM research found that 68% of common templates actually introduce demographic bias. This is why you need a governance layer that includes:

- Bias Auditing: Regularly testing prompts with diverse inputs to ensure the AI doesn't lean into stereotypes.

- Access Control: Not every employee needs access to the prompt that handles payroll logic; use role-based permissions.

- Approval Workflows: New prompts should be vetted by a "Prompt Lead" before being added to the official corporate library.
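Access control and approval can be combined in a single gate: a prompt is readable only by permitted roles, and only after a Prompt Lead has approved it. This is a minimal in-memory sketch; the `Prompt` class and the role names `prompt_lead`, `finance`, and `marketing` are hypothetical.

```python
from enum import Enum


class Status(Enum):
    DRAFT = "draft"
    APPROVED = "approved"


class Prompt:
    def __init__(self, name: str, text: str, allowed_roles: set[str]) -> None:
        self.name = name
        self.text = text
        self.allowed_roles = allowed_roles  # role-based access control
        self.status = Status.DRAFT          # every new prompt starts unvetted

    def approve(self, reviewer_role: str) -> None:
        """Only a Prompt Lead may move a prompt into the official library."""
        if reviewer_role != "prompt_lead":
            raise PermissionError(f"{reviewer_role} cannot approve prompts")
        self.status = Status.APPROVED

    def read(self, user_role: str) -> str:
        """Return the text only to allowed roles, and only once approved."""
        if user_role not in self.allowed_roles:
            raise PermissionError(f"{user_role} may not use {self.name}")
        if self.status is not Status.APPROVED:
            raise PermissionError(f"{self.name} has not been approved yet")
        return self.text


payroll = Prompt("payroll-check",
                 "Verify these payroll totals against the timesheet export.",
                 {"finance"})
payroll.approve("prompt_lead")
print(payroll.read("finance"))
```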

Following a recognized framework such as the NIST AI Risk Management Framework helps organizations move from chaotic, individual usage to a structured corporate asset. This shift reduces the risk of "shadow AI," where employees use unvetted prompts that might leak sensitive company data.

Pro Tips for Building Your Own Library

You don't need a $500/month enterprise subscription to be effective. Whether you are using a Notion database or a GitHub repo, these best practices apply. First, focus on specificity. A prompt that says "Write a professional email" is useless. A prompt that says "Write a professional follow-up email to a lead who hasn't replied in 3 days, emphasizing the value of our 20% discount, and keeping the tone curious but not pushy" is a library-grade asset.
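The specificity advice translates naturally into parameterized templates: the concrete details become named slots that must be filled before the prompt is used. A minimal sketch using Python's standard-library `string.Template`; the template name `FOLLOW_UP_EMAIL` and its slot names are hypothetical.

```python
from string import Template

# Hypothetical library entry: the specifics from the example become named slots.
FOLLOW_UP_EMAIL = Template(
    "Write a professional follow-up email to a lead who hasn't replied in "
    "$days_silent days, emphasizing the value of our $discount discount, "
    "and keeping the tone $tone."
)

prompt = FOLLOW_UP_EMAIL.substitute(
    days_silent=3, discount="20%", tone="curious but not pushy"
)
print(prompt)
```

`substitute` raises `KeyError` if any slot is left unfilled, which keeps half-finished prompts out of circulation; `safe_substitute` would instead leave the placeholder in the text.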

Second, implement a feedback loop. If a colleague uses your prompt and it fails, they should be able to flag it immediately. A prompt library is a living organism; if it isn't being updated based on real-world failures, it's decaying.
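One way to make the flagging step concrete is a small failure log that pulls a prompt for review once it collects enough reports. This is a sketch under assumptions: the `FeedbackLog` class and the threshold of three flags are hypothetical choices, not a standard.

```python
from collections import Counter

REVIEW_THRESHOLD = 3  # hypothetical: failure reports needed before a review


class FeedbackLog:
    def __init__(self, threshold: int = REVIEW_THRESHOLD) -> None:
        self.threshold = threshold
        self.flags = Counter()  # prompt name -> number of failure reports
        self.notes = {}         # prompt name -> list of reasons given by colleagues

    def flag(self, prompt_name: str, reason: str) -> bool:
        """Record a failure report; return True once the prompt needs review."""
        self.flags[prompt_name] += 1
        self.notes.setdefault(prompt_name, []).append(reason)
        return self.flags[prompt_name] >= self.threshold

    def needs_review(self) -> list[str]:
        """Names of all prompts that have reached the review threshold."""
        return sorted(name for name, count in self.flags.items() if count >= self.threshold)


log = FeedbackLog()
log.flag("follow-up-email", "Tone came out too aggressive")
log.flag("follow-up-email", "Ignored the 3-day detail")
print(log.flag("follow-up-email", "Invented a 30% discount"))
print(log.needs_review())
```

Storing the reasons alongside the count gives whoever revises the prompt the real-world failures to work from.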

Finally, avoid the "prompt trap" of over-engineering. Some users spend hours adding flowery language to prompts, believing it makes the AI "smarter." In reality, clarity and structure (like using delimiters such as ### or ---) usually perform better than complex adjectives.
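To illustrate delimiter-based structure, here is a hypothetical `build_prompt` helper that separates instructions, context, and output format with `###` headers. It is a sketch, not a prescribed layout; `---` separators or other clear markers would serve the same purpose.

```python
def build_prompt(instructions: str, context: str, output_format: str) -> str:
    """Separate each part of the prompt with a ### header so the model can tell them apart."""
    sections = {
        "INSTRUCTIONS": instructions,
        "CONTEXT": context,
        "OUTPUT FORMAT": output_format,
    }
    return "\n\n".join(f"### {title}\n{body.strip()}" for title, body in sections.items())


prompt = build_prompt(
    "Summarize the customer feedback below.",
    "The app crashes when I upload photos. Otherwise great!",
    "Three bullet points, plain language.",
)
print(prompt)
```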

What is the difference between prompt engineering and a prompt library?

Prompt engineering is the act of designing and refining a specific prompt to get a desired output. A prompt library is the systematic storage and management of those engineered prompts so they can be reused by others without needing to redo the engineering work.

Do I really need version control for prompts?

Yes, especially if you rely on AI for business operations. LLMs are updated frequently, and "model drift" can occur, where a prompt that worked yesterday suddenly produces poor results today. Versioning lets you track these changes and roll back to a working state.

Are public prompt libraries safe to use for corporate data?

Public libraries are great for templates, but you should never put sensitive corporate data into a prompt you find online. Always use the library for the structure of the prompt and then plug in your private data within a secure, enterprise-grade AI environment.

How do I handle prompt bias in my library?

The best way to handle bias is through iterative testing. Use "adversarial prompting" (intentionally trying to trigger a biased response) to see whether your template is prone to stereotypes. If it is, refine the prompt to explicitly demand neutrality or diverse perspectives.
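A simple form of this testing is a counterfactual audit: fill the same template with different demographic terms and check whether the model's treatment changes. The sketch below assumes a hypothetical `counterfactual_audit` helper and two stand-in model functions in place of a real API; the template and groups are illustrative.

```python
from typing import Callable

TEMPLATE = "Write a one-line performance review for this {group} software engineer."
GROUPS = ["younger", "older"]


def counterfactual_audit(template: str, groups: list[str],
                         run_model: Callable[[str], str]) -> dict[str, str]:
    """Return the (masked) outputs that differ from the first group's output.

    The group term is masked out of each output before comparing, so only
    differences in treatment, not the mention of the group itself, are flagged.
    """
    masked = {}
    for group in groups:
        output = run_model(template.format(group=group))
        masked[group] = output.replace(group, "<GROUP>")
    baseline = masked[groups[0]]
    return {group: out for group, out in masked.items() if out != baseline}


def neutral_model(prompt: str) -> str:
    # Hypothetical stand-in that treats every group the same way.
    return f"Exceeds expectations. (Draft based on: {prompt})"


def skewed_model(prompt: str) -> str:
    # Hypothetical stand-in that judges one group more harshly.
    verdict = "Needs improvement." if "older" in prompt else "Exceeds expectations."
    return f"{verdict} (Draft based on: {prompt})"


print(counterfactual_audit(TEMPLATE, GROUPS, neutral_model))
print(counterfactual_audit(TEMPLATE, GROUPS, skewed_model))
```

Exact string comparison only works with these deterministic stand-ins. Real model outputs vary between runs, so a practical audit would compare a score (for example, a rating extracted from each response) across several samples per group.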

Which prompt library platform is best for beginners?

PromptHero is generally the best starting point due to its massive community and freemium model. It allows beginners to see what works for others across multiple models like MidJourney and ChatGPT before committing to a paid tool.

Next Steps and Troubleshooting

If you're just starting, don't try to build a 1,000-prompt library overnight. Start with your five most repetitive tasks. Document the exact prompt that works, save it in a shared document, and label it with the AI model you used. That is the seed of your governance system.
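A seed library can be as simple as a JSON list. The sketch below assumes a hypothetical `export_library` helper that refuses to serialize any entry missing its model label, so the labeling rule is enforced rather than remembered. The field names and model labels are illustrative.

```python
import json

REQUIRED_FIELDS = ("task", "prompt", "model")


def export_library(entries: list[dict]) -> str:
    """Serialize the seed library, refusing any entry without every required field."""
    for entry in entries:
        missing = [name for name in REQUIRED_FIELDS if not entry.get(name)]
        if missing:
            raise ValueError(f"{entry.get('task', '<unnamed>')} is missing {missing}")
    return json.dumps(entries, indent=2)


seed = [
    {"task": "Meeting summary", "model": "GPT-4o",
     "prompt": "Summarize this transcript into five bullet points."},
    {"task": "Lead follow-up", "model": "Claude 3.5",
     "prompt": "Write a professional follow-up email to a lead who hasn't replied in 3 days."},
]

text = export_library(seed)
print(text)
```

The resulting JSON can be pasted into a shared document or committed to a repository, which also gives you the version history discussed earlier.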

If you find that your prompts are still inconsistent despite being in a library, check your model compatibility. A common mistake is using a prompt designed for Claude 3.5 in GPT-4o; while they are similar, the subtle differences in how they handle instructions can lead to vastly different results. When in doubt, rewrite the prompt using the specific guidelines provided by the model's creator.
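One way to avoid cross-model reuse is to store one variant per tested model and refuse to fall back silently. A minimal sketch; `prompt_for`, `ModelNotTestedError`, and the model keys are hypothetical, and the XML-style tags in the Claude variant only illustrate a model-specific formatting choice.

```python
class ModelNotTestedError(LookupError):
    """Raised when no variant of a prompt has been tested on the requested model."""


# Hypothetical entry: one variant per model the prompt was actually tested on.
SUMMARY_VARIANTS = {
    "claude-3.5": "<instructions>Summarize the report in five bullets.</instructions>",
    "gpt-4o": "### Instructions\nSummarize the report in five bullets.",
}


def prompt_for(variants: dict[str, str], model: str) -> str:
    """Return the variant tested on `model`; never silently reuse another model's prompt."""
    try:
        return variants[model]
    except KeyError:
        tested = ", ".join(sorted(variants))
        raise ModelNotTestedError(
            f"no variant tested on {model!r}; tested on: {tested}"
        ) from None


print(prompt_for(SUMMARY_VARIANTS, "gpt-4o"))
```

Failing loudly on an untested model turns the compatibility mistake described above into an explicit prompt to write and test a new variant.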

Artificial Intelligence


Nathaniel Petrovick

April 15, 2026 at 18:14

This is a great breakdown! I've been using a basic Notion page for my prompts and it's honestly a lifesaver for my team. Definitely agree on the specificity part-the more detail, the better the output.

Jason Townsend

April 16, 2026 at 05:24

governance is just another word for control they want to track every thought you feed the machine so they can map your brain and replace it with a corporate template wake up people

Elmer Burgos

April 17, 2026 at 09:58

i think it's cool how we can all share what works and help each other out without getting too bogged down in the technical stuff

Sally McElroy

April 17, 2026 at 23:26

The homogenization of thought is the true tragedy here...!!! We are sacrificing the divine spark of individual creativity for the sake of "efficiency," and it is frankly abhorrent to witness the death of the human spirit in a spreadsheet...!!!

Jeroen Post

April 19, 2026 at 13:09

the eu ai act is just the start of the digital panopticon they use the term bias to scrub any truth that doesnt fit the narrative and now we have libraries to ensure the lies are consistent across all platforms its all a game of shadows and we are the pawns

Angelina Jefary

April 21, 2026 at 05:45

I cannot believe how many people ignore basic grammar when writing prompts, yet they wonder why the AI fails. Also, the notion that "governance" is a safety measure is laughable; it is clearly a mechanism for surveillance and behavioral modification under the guise of corporate ethics.

Honey Jonson

April 22, 2026 at 22:21

this is so helpful!! i tried the persona thing and it totallly changed how my ai writes for my blog’s stuff. its kinda like havng a lil helper who knows exactly what i want lol

Sara Escanciano

April 24, 2026 at 12:47

The fact that IBM research shows 68% of templates introduce bias and we're still talking about "best practices" instead of dismantling these flawed systems is disgusting. This isn't an optimization problem, it's a moral failure of the highest order!

Antwan Holder

April 25, 2026 at 12:08

Oh, the sheer agony of the versioning nightmare! I can feel the psychic weight of a thousand broken prompts crushing my soul! We are not just managing text, we are managing the fragmented echoes of our own desperation in a cold, digital void where the only constant is a 404 error of the heart!

Destiny Brumbaugh

April 26, 2026 at 01:47

America needs its own prompt libaries that put US values first!! stop following EU rules and start making tools that make our tech the best in the hole world!! get rid of the bias stuff and just make it win!