Tag: prompt engineering

Learn how per-token pricing works for LLM APIs. We break down input vs output costs, compare OpenAI and Anthropic rates, and share tips to reduce your AI bill.

Learn how to use error messages and feedback prompts to help LLMs self-correct. Reduce structured output errors by 45% using Intrinsic, Multi-Turn, and FTR methods.

Master the art of prompt libraries for Generative AI. Learn the essentials of governance, version control, and best practices to scale AI output and maintain quality.

Learn how to identify and mitigate AI hallucinations. Explore practical strategies like RAG, RLHF, and prompt engineering to ensure your generative AI outputs are reliable.

Structured prompts using role, rules, and context are the key to reliable enterprise LLM use. Learn how role-based prompting, chain-of-thought reasoning, and iterative testing improve accuracy, reduce hallucinations, and align outputs with business needs.

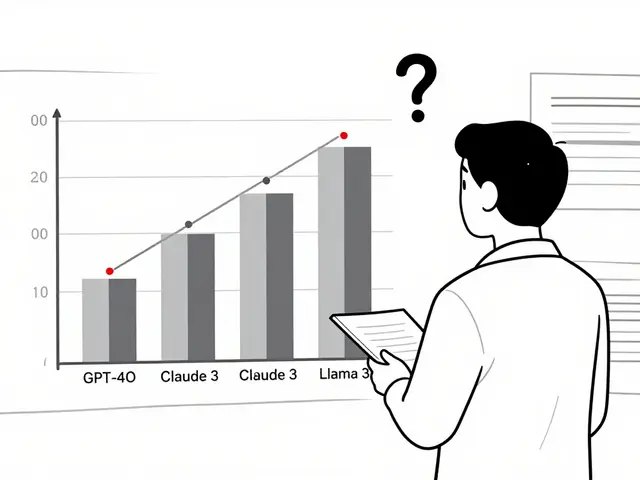

Reinforcement Learning from Prompts (RLfP) automates prompt optimization using feedback loops, boosting LLM accuracy by up to 10% on key benchmarks. Learn how PRewrite and PRL work, their real-world gains, hidden costs, and who should use them.

NLP pipelines and end-to-end LLMs aren't competitors; they're complementary. Learn when to use each for speed, cost, accuracy, and compliance in real-world AI systems.

Small changes in how you phrase a question can drastically alter an AI's response. Learn why prompt sensitivity makes LLMs unpredictable, how it breaks real applications, and proven ways to get consistent, reliable outputs.

Recent posts

Human-in-the-Loop Operations for Generative AI: Review, Approval, and Exceptions Strategy Guide

Mar, 26 2026

Artificial Intelligence