When enterprises start using Large Language Models (LLMs), they quickly realize that typing vague questions like "Tell me about our sales data" doesn’t work. The model doesn’t know what "our" means, what format to use, or what decision to support. That’s where prompt engineering becomes the difference between useful output and noise. It’s not about making the model smarter-it’s about making your instructions clearer, more structured, and more aligned with real business needs.

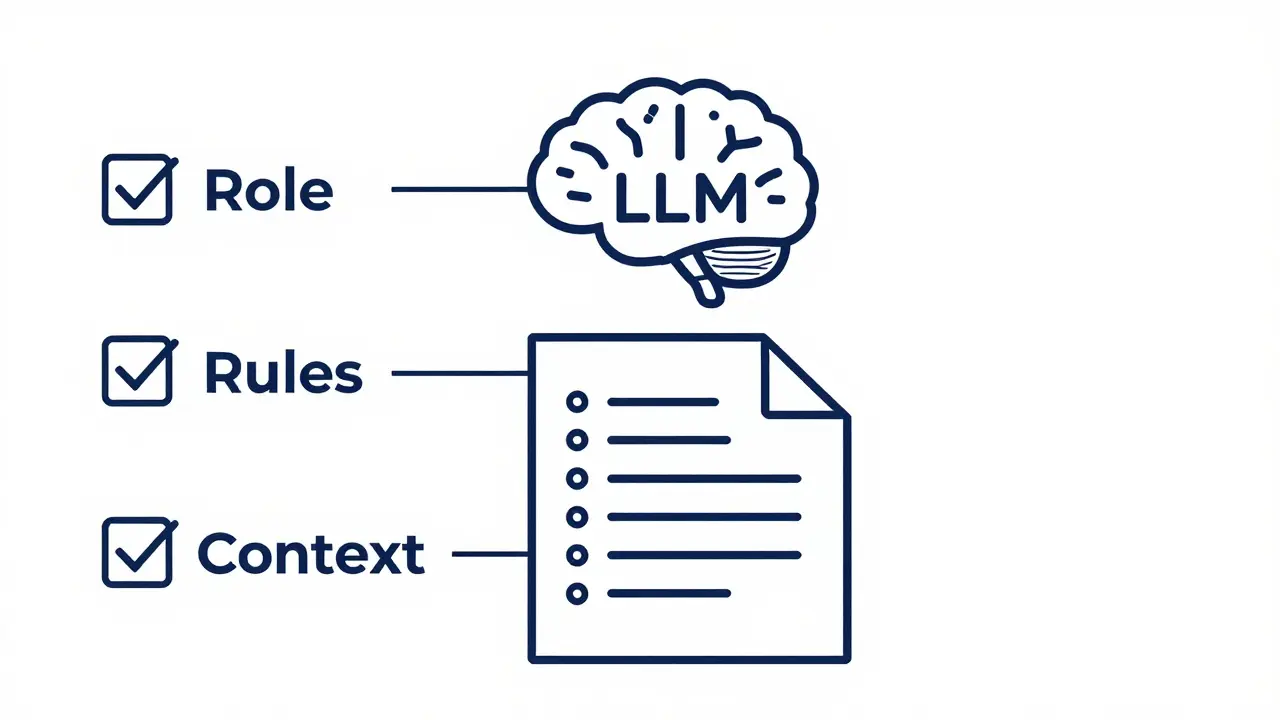

Start with Role: Make the Model Act Like an Expert

The most effective enterprise prompts begin by assigning the LLM a clear role. Instead of asking a general question, you tell the model who it is. "You are a senior financial analyst at a Fortune 500 company. Your job is to summarize quarterly earnings reports for the executive team." This isn’t just fluff. Research shows that role-based prompting improves output accuracy by up to 40% in enterprise settings. Why? Because the model uses its training to simulate the behavior, tone, and knowledge of that role. A legal advisor will cite precedents. A customer support agent will apologize first. A data analyst will structure numbers in tables. Enterprises that succeed with LLMs use system prompts to lock in roles permanently. For example, a healthcare chatbot might have a system prompt that says: "You are a licensed clinical triage nurse. Always prioritize patient safety, avoid diagnoses, and refer to official clinical guidelines." Once the role is set, user prompts can focus on tasks: "Analyze this patient’s symptoms and suggest next steps." The model already knows how to respond because it’s been told who it is.Use Rules: Tell It What to Do, Not What Not to Do

Rules guide behavior. But most people get them backward. Instead of saying "Don’t use jargon," they should say "Use plain language understandable by a high school graduate." Negative instructions like "Don’t make up facts," "Avoid opinions," or "Don’t use bullet points" create confusion. LLMs are trained to interpret language, not logic gates. When told what not to do, they often guess what’s allowed-and sometimes guess wrong. Positive rules work better. Here’s a real example from a legal firm: > "You are a paralegal preparing a motion to dismiss. Use formal legal tone. Structure your response in three parts: background, legal basis, and conclusion. Cite only the U.S. Code Title 28, Section 1404. Do not reference case law unless explicitly provided." This prompt gives the model a clear template, a tone standard, and a source constraint-all as positive instructions. The result? Outputs that require 70% less editing than those from vague prompts. Enterprises also use rules to enforce consistency. A retail company might add: "All product descriptions must include: product name, key feature, target user, and one emotional benefit. Keep length between 80-100 words." These aren’t suggestions. They’re rules baked into every prompt.Layer in Context: The Secret Weapon of High-Performing Prompts

Context is where most prompts fail-and where the best ones win. A prompt like "Write a product description for our new smart thermostat" is weak. It assumes the model knows the product. It doesn’t. A strong prompt says: "Write a product description for the Nest Pro 2026 smart thermostat. It uses AI to learn household routines, reduces HVAC energy use by up to 28% based on EPA data, and integrates with Alexa and Apple HomeKit. Target audience: homeowners aged 35-55 who value convenience and energy savings. Tone: trustworthy, slightly technical, no hype." That’s context. And context is what turns a generic response into a tailored one. Enterprises that excel use three types of context:- Background data: Company policies, product specs, compliance rules.

- Examples: One or two past outputs that match the desired style (few-shot prompting).

- Constraints: Word limits, formatting rules, data sources to use or avoid.

Combine Techniques: The Power of Multi-Strategy Prompts

The best enterprise prompts don’t rely on just one technique. They layer them. Take this example from a cybersecurity team: > "You are a senior threat analyst. Below are two examples of incident reports from last month. Analyze the new report step by step: First, identify the attack vector. Second, cross-reference with our threat intelligence database. Third, rate severity from 1 to 5. Fourth, recommend one immediate action. Then output your findings in a bullet-point summary." This single prompt combines:- Role-based prompting: "senior threat analyst"

- Few-shot learning: "two examples of incident reports"

- Chain-of-thought: "First... Second... Third... Fourth..."

- Structured output: "bullet-point summary"

Iterate: Prompt Engineering Is a Process, Not a One-Time Fix

No prompt is perfect on the first try. That’s not a flaw-it’s the norm. Enterprises that treat prompt engineering like software development outperform those that treat it like a one-off script. The workflow looks like this:- Write an initial prompt based on your goal.

- Run it and review the output.

- Ask: Did it meet the objective? Was it too vague? Too long? Off-tone?

- Adjust: Add context. Clarify the role. Tighten the rules.

- Test again.

- Repeat until output is reliable.

Validate with Human and Machine Feedback

Even the best prompts need checking. Enterprises that rely solely on automated scoring miss critical nuances. A machine can count words, check grammar, or verify data citations-but it can’t tell if a response sounds condescending, misses cultural context, or misaligns with brand voice. The most effective teams use a dual-rating system:- Human raters: Use a simple rubric: Accuracy (1-5), Tone (1-5), Relevance (1-5). Have domain experts review 10-20 outputs weekly.

- Machine raters: Use another LLM to score consistency, length, and factual alignment. Tools like Google’s Model Garden or Anthropic’s Claude Console let you run side-by-side comparisons.

Tools That Make Prompt Engineering Practical

You don’t need to build everything from scratch. Major platforms now offer tools designed for enterprise prompt workflows:- Google’s Model Garden (Vertex AI): Lets you test prompts across multiple models, save versions, and compare outputs side-by-side.

- Anthropic’s Claude Console: Allows you to edit model memory and store system prompts as reusable templates.

- OpenAI’s ChatGPT Enterprise: Includes prompt versioning, team libraries, and usage analytics.

- K2View’s GenAI Data Fusion: Integrates prompts with live enterprise data via RAG, ensuring responses are grounded in real-time records, not just training data.

What Happens When You Skip This?

Enterprises that skip structured prompting don’t just get bad answers. They get dangerous ones. A bank used a vague prompt: "Summarize customer complaints." The LLM mixed real complaints with fictional ones, leading to a false report that triggered unnecessary audits. A healthcare provider asked: "What’s the best treatment for this condition?" The model cited a study that was retracted six months earlier. These aren’t edge cases. They’re predictable failures of unstructured prompting. Structured prompts with roles, rules, and context prevent this. They turn LLMs from unpredictable tools into reliable team members.Why does role-based prompting work better than generic instructions?

Role-based prompting works because it activates the model’s internal training on professional behaviors. When you say "You are a tax consultant," the model doesn’t just answer-it simulates how a tax consultant thinks, what language they use, and what details they prioritize. This reduces ambiguity and hallucinations by grounding responses in real-world expertise rather than guessing what the user might want.

Can I use the same prompt across different LLMs like GPT-4, Claude, and Gemini?

You can, but results will vary. Each model has different strengths. GPT-4 excels at complex reasoning, Claude handles long-context better, and Gemini integrates well with Google’s data. A prompt optimized for one may need tweaking for another. The best practice is to test each prompt on each model and create version-specific templates. Don’t assume one-size-fits-all.

How much context should I include in a prompt?

Include as much as needed-no more, no less. Too little context leads to guesswork. Too much overwhelms the model and wastes token limits. A good rule: if the model can’t answer without it, include it. For example, if you’re asking for a sales forecast, include the last 12 months of data, not just "sales are up." Context should be specific, relevant, and trimmed of fluff.

Is chain-of-thought prompting only useful for technical tasks?

No. Chain-of-thought works for any task requiring logic, judgment, or sequencing. It’s useful for customer service scripts ("First, acknowledge the concern. Then, verify the account. Then, offer the solution.") or marketing copy ("First, identify the pain point. Then, position the product as the fix. Then, add social proof."). Anytime you need the model to think step-by-step, not just spit out an answer, CoT improves reliability.

How do I know if my prompt is working?

Measure three things: accuracy (does it answer correctly?), consistency (does it repeat the same style and structure?), and alignment (does it match your business goals?). If outputs are accurate 80%+ of the time, repeat the same structure. If they vary, refine the role, rules, or context. Track improvements over time-prompt engineering is a metric-driven practice.

Enterprise LLMs aren’t magic. They’re tools that respond to structure. The companies winning with AI aren’t the ones with the biggest models-they’re the ones who’ve mastered the art of clear, layered, and tested prompts. Start with role. Add rules. Layer in context. Test. Iterate. Repeat. That’s the formula.

Artificial Intelligence

Artificial Intelligence

Zelda Breach

March 1, 2026 AT 05:35Alan Crierie

March 2, 2026 AT 19:08Nicholas Zeitler

March 2, 2026 AT 20:12Teja kumar Baliga

March 3, 2026 AT 18:27k arnold

March 3, 2026 AT 18:30Tiffany Ho

March 5, 2026 AT 02:34Fred Edwords

March 5, 2026 AT 04:21