Have you ever looked at your monthly bill from an AI provider and wondered why the number jumped so much? You sent a simple question, got a quick answer, yet the cost seems high. The reason lies in how these systems charge: not by the second, but by the token. This is the hidden currency of the artificial intelligence world, and understanding it can save you thousands.

When companies like OpenAI, Anthropic, or Google sell access to their Large Language Models (LLMs), they don't just let you use the software freely. They count every piece of text you send and receive. These pieces are called tokens. If you want to build applications that talk to users, analyze data, or write code, you need to know exactly what you're paying for. Let's break down how this works, why output costs more than input, and how you can predict your bills.

What Exactly Is a Token?

To understand the price tag, you first need to understand the unit of measurement. Humans count words; LLMs count tokens. A token isn't always a whole word. It can be a single character, part of a word, or a full word, depending on how common that piece of text is in the tokenizer's vocabulary.

Most major providers use a method called Byte-Pair Encoding (BPE) to split text into these chunks. Think of it like breaking a sentence into Lego bricks. Common words like "the" or "and" often become one token. Rare words might get broken into three or four tokens. For English text, a good rule of thumb is that 1,000 tokens equal about 750 words. However, this ratio changes with different languages. Hebrew, for instance, uses about 30% more tokens per word than English because of its script structure. If you are building a global app, this difference matters significantly for your budget.

| Language | Tokens per Word (Approx.) | Notes |
|---|---|---|
| English | 1.33 | Standard baseline |
| Hebrew | 1.73 | Higher token density due to script |
| Chinese | 1.0 - 1.5 | Varies by tokenizer version |

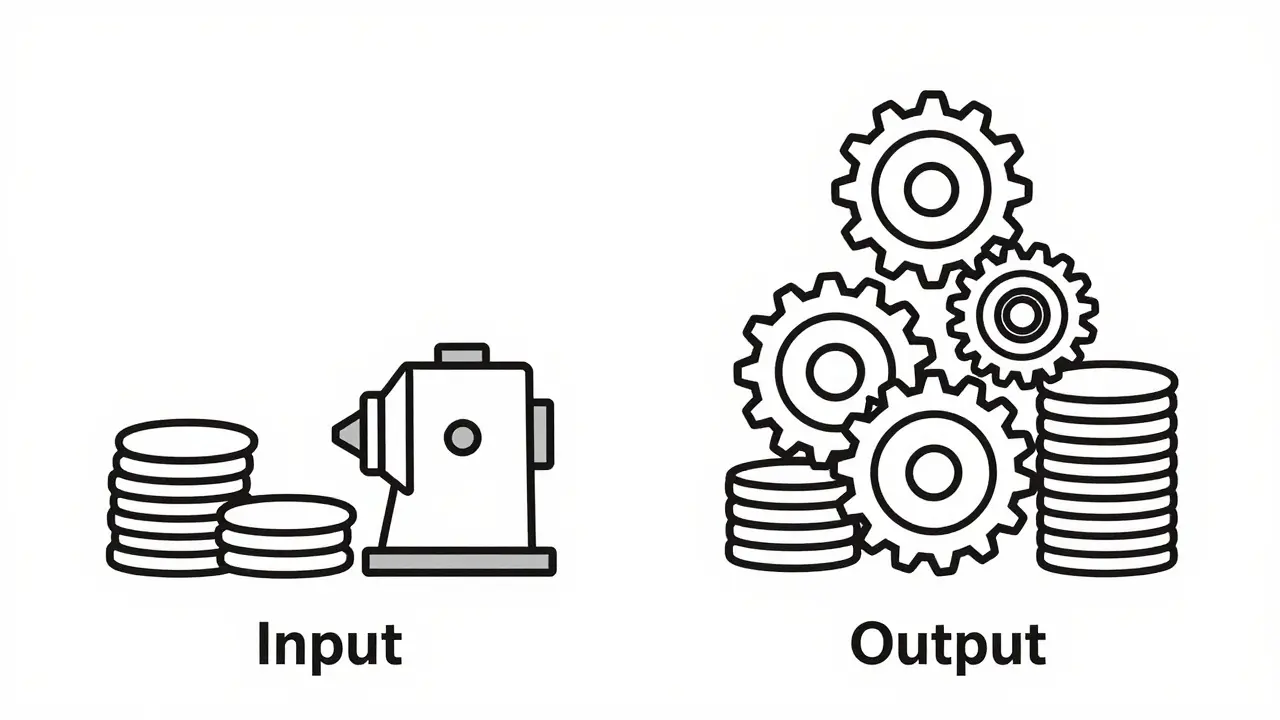
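The ratios in the table can be turned into a quick budget estimate. The sketch below is a rough word-count heuristic, not a tokenizer: `estimate_tokens` and `TOKENS_PER_WORD` are hypothetical names, and the ratios are the approximate figures from the table. For billing-grade counts, the provider's own tokenizer is the safer reference.

```python
# Approximate tokens-per-word ratios taken from the table above.
# These are rules of thumb, not exact tokenizer behavior.
TOKENS_PER_WORD = {"english": 1.33, "hebrew": 1.73}


def estimate_tokens(text: str, language: str = "english") -> int:
    """Estimate the token count of `text` from its whitespace-separated word count."""
    word_count = len(text.split())
    return round(word_count * TOKENS_PER_WORD[language])


sample = " ".join(["word"] * 100)  # a 100-word placeholder text
print(estimate_tokens(sample, "english"))
print(estimate_tokens(sample, "hebrew"))
```

For the same 100 words, the Hebrew estimate comes out about 30% higher than the English one, which is the gap a multilingual budget would need to absorb.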

Why Output Costs More Than Input

You will notice that API prices are usually listed as two numbers: one for input (prompt) and one for output (completion). Why is there a difference? The short answer is computational effort.

When you send a prompt, the model processes all those tokens in parallel. It reads your entire message at once to understand context. This is efficient. But when the model generates an answer, it works autoregressively. It predicts one token, then uses that token to help predict the next one, and so on. Each step requires a full pass through the neural network. Because of this, generating output takes roughly 3 to 5 times more computation than reading input. Providers reflect this heavy lifting in their prices. Typically, output tokens cost 3 to 5 times more than input tokens on the major platforms.
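To make the asymmetry concrete, here is a toy sketch of an autoregressive loop. `toy_next_token` is a hypothetical stand-in for a model's forward pass (it is not a real model), and the pass counter only illustrates that every generated token needs its own sequential step, while the prompt is available all at once.

```python
from typing import Callable, List, Tuple


def toy_next_token(tokens: List[int]) -> int:
    """Hypothetical stand-in for one forward pass: returns a dummy next token."""
    return (sum(tokens) + 1) % 50_000


def generate(
    prompt: List[int],
    next_token: Callable[[List[int]], int],
    max_new_tokens: int,
) -> Tuple[List[int], int]:
    """Generate tokens one at a time, counting sequential forward passes."""
    tokens = list(prompt)  # the whole prompt is available up front
    passes = 0
    for _ in range(max_new_tokens):
        # Each new token depends on every token before it, so these
        # steps cannot run in parallel with one another.
        tokens.append(next_token(tokens))
        passes += 1
    return tokens, passes


output, passes = generate([101, 2023, 2003], toy_next_token, max_new_tokens=5)
print(len(output), passes)
```

Five new tokens cost five sequential passes here, no matter how long the prompt was.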

Comparing Prices Across Major Providers

The market for generative AI APIs grew to $4.2 billion in 2024, and competition is fierce. As of late 2024 and early 2025, pricing has dropped significantly, but disparities remain between models based on their capability levels. Here is how the big players stack up.

| Model | Input Price ($ per 1M tokens) | Output Price ($ per 1M tokens) | Best For |
|---|---|---|---|
| GPT-3.5-Turbo | $0.50 | $1.50 | Simple tasks, high volume |
| Claude Haiku | $0.25 | $1.25 | Cost-sensitive applications |
| GPT-4o | $5.00 | $15.00 | Balanced performance/cost |
| Claude Sonnet | $3.00 | $15.00 | Complex reasoning |
| Claude Opus | $15.00 | $75.00 | High-stakes professional work |

Notice that GPT-3.5-Turbo is drastically cheaper than the GPT-4 family: 10 times cheaper than GPT-4o for input in the table above, and up to 60 times cheaper when compared with older GPT-4 pricing. If your application doesn't need advanced reasoning, sticking to older or lighter models is the smartest financial move. Anthropic's Haiku model competes directly with GPT-3.5 on price, offering a viable alternative for developers looking to diversify their providers.
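As a rough worked example, the sketch below turns the table into a per-request cost. It assumes the listed prices are in US dollars per 1 million tokens, the usual convention for these providers. The `PRICES` dictionary, its keys, and `request_cost` are illustrative names, and real prices change often, so treat the numbers as placeholders.

```python
# (input, output) prices, assumed to be USD per 1,000,000 tokens,
# copied from the comparison table above. Keys are illustrative labels,
# not official API model identifiers.
PRICES = {
    "gpt-3.5-turbo": (0.50, 1.50),
    "claude-haiku": (0.25, 1.25),
    "gpt-4o": (5.00, 15.00),
    "claude-sonnet": (3.00, 15.00),
    "claude-opus": (15.00, 75.00),
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the estimated cost in USD of one request."""
    input_price, output_price = PRICES[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


# One request with a 1,000-token prompt and a 500-token answer.
for model in PRICES:
    print(f"{model:15s} ${request_cost(model, 1_000, 500):.6f}")
```

Under these assumed prices, the same request costs about $0.00125 on GPT-3.5-Turbo and $0.0525 on Claude Opus, a 42-fold difference.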

Hidden Costs and Billing Surprises

Even with clear per-token rates, bills can spike unexpectedly. One common issue is token counting discrepancies. Developers often use local libraries like `tiktoken` (for OpenAI) or Anthropic's official counter to estimate costs before sending requests. However, these estimators aren't always perfect. Switching model versions can change how text is tokenized. For example, moving from an older GPT-3.5 version to a newer one increased token counts by 8% for some users without changing the actual content.
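One way to catch this kind of drift is to compare your local estimate with the token usage the provider reports back for each request. The sketch below assumes you already have both numbers; `token_drift`, `needs_review`, and the 5% threshold are hypothetical choices, not part of any provider's SDK.

```python
def token_drift(estimated_tokens: int, billed_tokens: int) -> float:
    """Relative difference between billed and estimated tokens (0.08 == 8%)."""
    if estimated_tokens <= 0:
        raise ValueError("estimated_tokens must be positive")
    return (billed_tokens - estimated_tokens) / estimated_tokens


def needs_review(estimated_tokens: int, billed_tokens: int,
                 threshold: float = 0.05) -> bool:
    """Flag requests whose billed usage deviates from the estimate by more than `threshold`."""
    return abs(token_drift(estimated_tokens, billed_tokens)) > threshold


# Example: the local estimate said 1,000 tokens, the provider billed 1,080.
print(f"{token_drift(1_000, 1_080):.1%}")
print(needs_review(1_000, 1_080))
```

Logging this ratio per model version makes a tokenizer change show up as a step in the numbers rather than as a surprise on the invoice.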

Special characters also play tricks on you. Emojis, mathematical symbols, and non-standard punctuation can inflate token counts. A single emoji might add 4 tokens to your request. While about $0.000002 per occurrence (4 tokens at $0.50 per million input tokens) sounds tiny, if you process millions of messages containing emojis, those micro-costs add up. Another surprise comes from fine-tuning. If you train a custom model, you pay extra for training tokens plus higher usage fees for the deployed model. Always check if the base model meets your needs before investing in customization.
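The emoji arithmetic is easy to check. The sketch below assumes the 4-token figure from the paragraph above and an illustrative input price of $0.50 per million tokens; actual counts depend on the tokenizer. It also shows one plausible reason emojis are expensive: many occupy 4 bytes in UTF-8, and byte-level tokenizers may need several tokens to cover them.

```python
THUMBS_UP = "\U0001F44D"  # the thumbs-up emoji

# This emoji occupies 4 bytes in UTF-8, versus 1 byte for an ASCII letter.
print(len(THUMBS_UP.encode("utf-8")), len("a".encode("utf-8")))


def emoji_overhead_usd(messages: int, tokens_per_emoji: int = 4,
                       price_per_million: float = 0.50) -> float:
    """Extra input cost in USD when every message carries one emoji.

    Both defaults are assumptions for illustration, not measured values.
    """
    return messages * tokens_per_emoji * price_per_million / 1_000_000


print(emoji_overhead_usd(1))           # cost of one emoji
print(emoji_overhead_usd(10_000_000))  # cost across ten million messages
```

Under these assumptions, one emoji costs about two millionths of a dollar, and ten million emoji-bearing messages add roughly $20.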

How to Optimize Your Token Usage

You can control your costs by being strategic about how you interact with the API. First, trim your prompts. Remove filler that carries no instruction, such as greetings or "I hope you're doing well." The model follows instructions without fluff. Second, implement caching. If your app answers the same FAQ questions repeatedly, store the responses locally instead of hitting the API every time. This can reduce token usage by 15-25% for support bots.
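The caching idea can be sketched in a few lines. This is a minimal in-memory version under stated assumptions: `FaqCache` is a hypothetical class, `fake_model` stands in for whatever function actually calls your provider, and a production cache would likely need expiry and persistent storage.

```python
from typing import Callable, Dict


class FaqCache:
    """Cache model answers keyed by a normalized form of the question."""

    def __init__(self, call_model: Callable[[str], str]) -> None:
        self._call_model = call_model  # hypothetical function that hits the API
        self._answers: Dict[str, str] = {}
        self.api_calls = 0

    @staticmethod
    def _normalize(question: str) -> str:
        # Collapse case and whitespace so trivial variations share one entry.
        return " ".join(question.lower().split())

    def ask(self, question: str) -> str:
        key = self._normalize(question)
        if key not in self._answers:
            # Cache miss: pay for the API call once and remember the answer.
            self._answers[key] = self._call_model(question)
            self.api_calls += 1
        return self._answers[key]


def fake_model(question: str) -> str:
    """Stand-in for a real API call, used only for this example."""
    return f"Answer to: {question.strip()}"


cache = FaqCache(fake_model)
cache.ask("What are your opening hours?")
cache.ask("  what are your opening hours? ")
print(cache.api_calls)
```

The second question differs only in case and spacing, so it is served from the cache and no additional tokens are billed.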

Third, monitor your rate limits. Providers cap usage in Tokens Per Minute (TPM). Azure OpenAI, for instance, limits standard deployments to 60,000 TPM. Hitting this limit doesn't just stop your service; it can cause errors that require retries, which waste more tokens. Finally, choose the right model tier. Don't use a top-tier model like Claude Opus to summarize a blog post if a mid-tier model like Sonnet or GPT-4o can do it just as well for a third to a fifth of the price.
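A client-side guard can help you stay under a TPM cap instead of discovering it through errors. The sketch below is one possible sliding-window tracker; `TokenBudget` is a hypothetical class, the 60,000 limit is the figure quoted above, and providers may compute their limits differently. Time is passed in explicitly to keep the example deterministic; a real service might use `time.monotonic()`.

```python
from collections import deque
from typing import Deque, Tuple


class TokenBudget:
    """Sliding-window tracker for a tokens-per-minute (TPM) limit."""

    def __init__(self, tpm_limit: int, window_seconds: float = 60.0) -> None:
        self.tpm_limit = tpm_limit
        self.window_seconds = window_seconds
        self._events: Deque[Tuple[float, int]] = deque()  # (timestamp, tokens)
        self._used = 0

    def try_consume(self, tokens: int, now: float) -> bool:
        """Record `tokens` at time `now` if the window has room; else return False."""
        # Drop usage that has aged out of the window.
        while self._events and now - self._events[0][0] >= self.window_seconds:
            _, old_tokens = self._events.popleft()
            self._used -= old_tokens
        if self._used + tokens > self.tpm_limit:
            return False  # caller should wait rather than send and retry
        self._events.append((now, tokens))
        self._used += tokens
        return True


budget = TokenBudget(tpm_limit=60_000)
print(budget.try_consume(40_000, now=0.0))   # fits in the empty window
print(budget.try_consume(30_000, now=10.0))  # would exceed 60,000, so refused
print(budget.try_consume(30_000, now=61.0))  # first request has aged out
```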

How many tokens are in a typical email?

A standard business email of 200-300 words usually contains between 250 and 400 tokens. Short emails may have fewer, while detailed reports can exceed 1,000 tokens easily.

Is it cheaper to send longer prompts or shorter ones?

It depends on the outcome. While shorter prompts cost less in input tokens, they might lead to vague outputs requiring follow-up queries. Concise, precise prompts often result in accurate, shorter responses, saving money on expensive output tokens.

Do I pay for tokens if the API returns an error?

Generally, yes. Most providers charge for the input tokens processed even if the generation fails or hits a safety filter. Check specific provider terms, but assume you pay for the attempt.

What is the maximum context window size?

As of 2025, models such as Claude offer context windows of up to 200,000 tokens, and GPT-4o offers 128,000 tokens. Larger windows allow more context, but filling them increases both latency and cost, because every token you place in the window is billed as input.
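To see what a full window means for the bill, the sketch below estimates the input cost of a single request that fills the window. It uses the window sizes mentioned above and input prices assumed to be per 1 million tokens ($3.00 for Claude Sonnet and $5.00 for GPT-4o, as in the comparison table). `fits_in_window` also assumes the output shares the window with the prompt, which is common but worth checking per model.

```python
def full_context_cost(window_tokens: int, input_price_per_million: float) -> float:
    """Input cost in USD of one request that fills the whole context window."""
    return window_tokens * input_price_per_million / 1_000_000


def fits_in_window(prompt_tokens: int, max_output_tokens: int,
                   window_tokens: int) -> bool:
    """Check that prompt plus reserved output stays within the window (assumed shared)."""
    return prompt_tokens + max_output_tokens <= window_tokens


print(f"${full_context_cost(200_000, 3.00):.2f}")  # 200,000-token window at $3.00/1M
print(f"${full_context_cost(128_000, 5.00):.2f}")  # 128,000-token window at $5.00/1M
print(fits_in_window(126_000, 4_000, 128_000))
```

Under these assumptions, every full-window request costs around 60 cents in input alone, before any output is generated.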

Will token prices continue to drop?

Analysts forecast 15-20% annual reductions through 2027 as infrastructure efficiency improves. However, prices for the most advanced reasoning capabilities may stabilize as development costs rise.
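If you want to plug that forecast into a budget, the reductions compound year over year. The sketch below applies the 15% and 20% rates to an illustrative starting price of $5.00 per million tokens; `projected_price` is a hypothetical helper, and the result is only as good as the forecast behind it.

```python
def projected_price(current_price: float, annual_reduction: float, years: int) -> float:
    """Price after `years` of compounding annual reductions (0.15 == 15% per year)."""
    return current_price * (1 - annual_reduction) ** years


# Illustrative starting point: $5.00 per million tokens, projected three years out.
for rate in (0.15, 0.20):
    print(f"{rate:.0%} per year -> ${projected_price(5.00, rate, 3):.2f}")
```

Three years of 15-20% reductions would take a $5.00 price to somewhere between about $2.56 and $3.07.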
