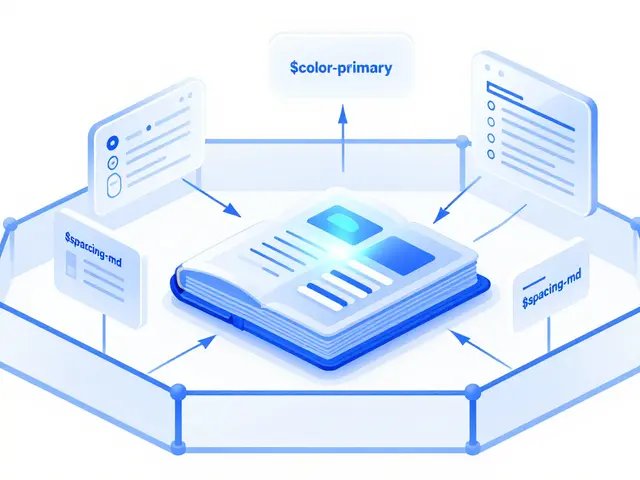

Tag: tokenization

Learn how per-token pricing works for LLM APIs. We break down input vs output costs, compare OpenAI and Anthropic rates, and share tips to reduce your AI bill.

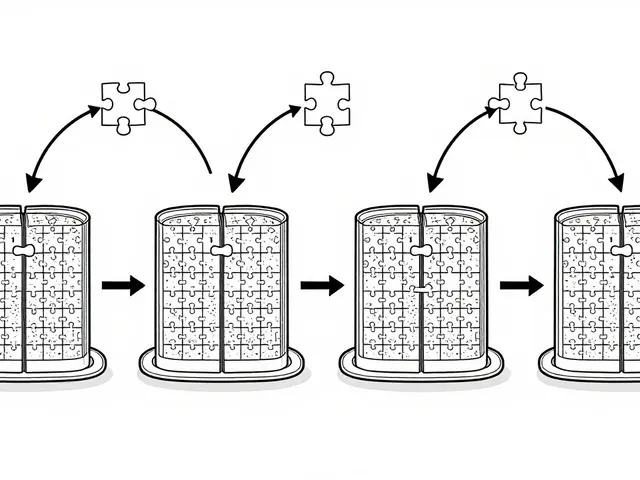

Despite the rise of massive language models, tokenization remains essential for accuracy, efficiency, and cost control. Learn why subword methods like BPE and SentencePiece still shape how LLMs understand language.

Recent Posts

Localization and Translation Using Large Language Models: How Context-Aware Outputs Are Changing the Game

Nov, 19 2025

Artificial Intelligence