Why AI Hallucinates in the First Place

To fix the problem, we have to understand that Large Language Models (LLMs) are not databases. They are probabilistic prediction engines. When you ask a question, the model isn't "looking up" an answer in a file. It is calculating the statistical probability of which token (word or part of a word) should come next based on the massive amount of text it saw during training.

This creates a fundamental tension between novelty and usefulness. If a model is too focused on being useful, it might just regurgitate training data word-for-word. If it's too focused on novelty, it starts "guessing" to fill in the gaps. When the model lacks specific data about a niche topic, it doesn't hit a wall; it simply predicts what a correct answer *should* look like. This is why hallucinations often look so authentic: they follow the structural patterns of truth without containing any actual facts.

Several specific triggers make this worse:

- Knowledge Cutoffs: If a model was trained on data ending in 2023, asking about a 2025 event forces it to guess based on older patterns.

- Flawed or Poisoned Training Data: If the training set contained biases or flat-out errors, whether by accident or through deliberate poisoning, the AI learns those errors as truth.

- Decoding Strategies: Techniques like top-k sampling, which are used to make AI sound more creative and less robotic, actually increase the chance of a hallucination by allowing the model to pick less likely (and potentially incorrect) words.

- Context Window Drift: In long conversations, the AI can "forget" a detail mentioned ten paragraphs ago, leading it to contradict itself or invent a new detail to maintain the flow.
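The decoding point above can be made concrete with a toy next-token distribution. The sketch below is a minimal illustration, not how any particular model is implemented: the vocabulary, the logit values, and the helper names `softmax` and `top_k_probs` are all invented for the example. It compares greedy decoding, which always takes the top token, with top-k sampling, which leaves real probability mass on less likely (and here, wrong) continuations.

```python
import math

def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; lower temperature sharpens the distribution."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def top_k_probs(tokens, logits, k, temperature=1.0):
    """Keep the k highest-scoring tokens and renormalize over them."""
    ranked = sorted(zip(tokens, logits), key=lambda pair: pair[1], reverse=True)[:k]
    kept_tokens = [t for t, _ in ranked]
    kept_probs = softmax([s for _, s in ranked], temperature)
    return dict(zip(kept_tokens, kept_probs))

# Hypothetical scores for the next token after "The capital of Australia is"
tokens = ["Canberra", "Sydney", "Melbourne", "Paris"]
logits = [2.0, 1.5, 0.5, -3.0]

# Greedy decoding: always take the single highest-scoring token.
greedy_choice = tokens[logits.index(max(logits))]

# Top-k sampling: draw from the renormalized top 3 instead.
sampling_pool = top_k_probs(tokens, logits, k=3)
chance_of_wrong_city = 1.0 - sampling_pool["Canberra"]

print(greedy_choice)
print(round(chance_of_wrong_city, 2))
```

With these made-up scores, a little under half of all samples would name the wrong city even though the model's single most likely token is correct. That is the trade-off sampling strategies make in exchange for less repetitive text.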

Technical Mitigation: Moving Beyond the Guesswork

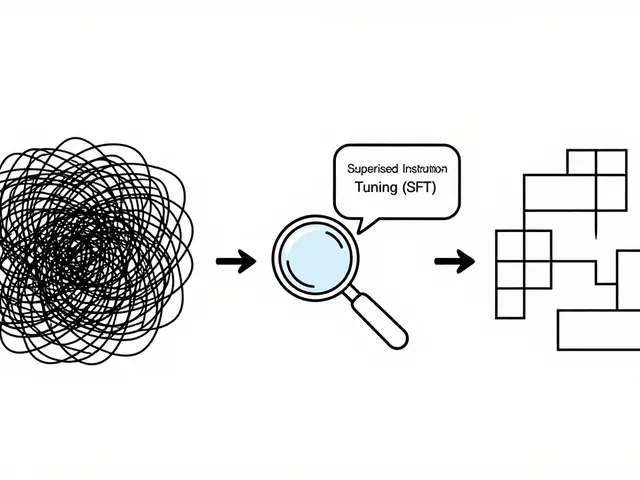

We can't just tell an AI to "stop lying." We have to change the architecture and the incentives. One of the most effective ways to do this is through Retrieval-Augmented Generation (RAG). Instead of relying solely on its internal weights, a RAG system forces the AI to search a trusted external knowledge base (like your company's PDF manuals or a verified database) before generating a response. By grounding the answer in a specific document, you shift the AI's role from "inventor" to "summarizer."

Another heavy lifter in the reliability space is Reinforcement Learning from Human Feedback (RLHF). This is essentially a rigorous tutoring process. Human reviewers rank different AI responses, specifically penalizing those that fabricate information and rewarding those that admit uncertainty. Over time, the model learns that saying "I am not sure about that" is a high-reward behavior, whereas making up a fake citation is a low-reward one.

| Strategy | How it Works | Primary Benefit | Trade-off |
|---|---|---|---|
| RAG | Connects AI to external data | Fact-based accuracy | Higher latency/setup cost |
| RLHF | Human-guided fine-tuning | Better alignment/honesty | Requires expensive human labor |
| Few-Shot Prompting | Providing a few examples | Consistent formatting | Limited by context window |
| Temperature Tuning | Lowering randomness (Temp $\to$ 0) | More deterministic output | Can become repetitive/boring |
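The RAG approach can be sketched in a few lines. This is a toy under stated assumptions: the documents are invented, retrieval is naive word overlap rather than a real embedding search, and `words`, `retrieve`, and `build_grounded_prompt` are hypothetical helper names. The call to an actual model is left out on purpose; the point is only to show how retrieved text ends up in front of the question.

```python
import re

# Hypothetical knowledge base; in practice this would be your own verified documents.
DOCUMENTS = {
    "warranty.md": "The X200 router warranty lasts 24 months from the purchase date.",
    "setup.md": "To reset the X200 router, hold the reset button for 10 seconds.",
    "returns.md": "Unopened products can be returned within 30 days for a full refund.",
}

def words(text):
    """Lowercase a string and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, documents, top_n=1):
    """Rank documents by how many words they share with the question."""
    question_words = words(question)
    scored = [
        (len(question_words & words(body)), name)
        for name, body in documents.items()
    ]
    scored.sort(reverse=True)
    # Drop documents with no overlap at all rather than returning noise.
    return [name for score, name in scored[:top_n] if score > 0]

def build_grounded_prompt(question, documents, top_n=1):
    """Put the retrieved passages in front of the question, with an explicit 'out'."""
    sources = retrieve(question, documents, top_n)
    context = "\n".join(f"[{name}] {documents[name]}" for name in sources)
    return (
        "Answer using only the sources below. "
        "If they do not contain the answer, say you don't know.\n\n"
        f"Sources:\n{context}\n\n"
        f"Question: {question}"
    )

prompt = build_grounded_prompt("How long is the warranty on the X200 router?", DOCUMENTS)
print(prompt)
```

A production system would swap the word-overlap scoring for a vector index and would usually pass several passages, but the shape stays the same: retrieve first, then generate from what was retrieved.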

The Prompt Engineering Fix

While you might not be able to retrain a model like GPT-4 or Claude, you can change how you talk to them. Vague prompts are breeding grounds for hallucinations. If you ask, "Tell me about the legal risks of this contract," the AI might invent a law to be helpful. If you ask, "Using only the provided text, list the legal risks. If the text does not mention a risk, state that no risk was found," you've created a constraint that discourages guessing.

Try these practical prompt tweaks to reduce errors:

- Give it an "Out": Explicitly tell the AI: "If you don't know the answer, say you don't know. Do not attempt to make up an answer."

- Chain-of-Thought Prompting: Ask the AI to "think step-by-step." When the model explains its logic before giving the final answer, it often catches its own factual errors before they reach the final output.

- Role Specification: Instead of a general assistant, tell it to act as a "fact-checker" or a "skeptical editor." This shifts the model's probabilistic weighting toward precision rather than creativity.
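The three tweaks above can be combined into one reusable template. The sketch below is only an illustration: `build_constrained_prompt` is a hypothetical helper, the `<source>` tags are an arbitrary delimiter choice, and the exact wording of the instructions is an assumption you would tune for your own model.

```python
def build_constrained_prompt(source_text, question, role="skeptical fact-checker"):
    """Assemble a prompt that sets a role, restricts the model to the source, and gives it an 'out'."""
    instructions = [
        f"You are a {role}.",                                   # role specification
        "Use only the text between the <source> tags.",         # constraint against guessing
        "Think step by step before giving your final answer.",  # chain-of-thought
        "If the text does not contain the answer, say you don't know. "
        "Do not make up an answer.",                            # the explicit 'out'
    ]
    return (
        "\n".join(instructions)
        + f"\n\n<source>\n{source_text}\n</source>\n\n"
        + f"Question: {question}"
    )

# Invented contract clause, used only to show the resulting prompt.
contract = "The supplier may terminate this agreement with 30 days written notice."
prompt = build_constrained_prompt(contract, "What legal risks does this contract mention?")
print(prompt)
```

Keeping the instructions in a list makes it easy to add, remove, or reorder constraints and compare how each one affects the error rate on your own test questions.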

Changing the Way We Grade AI

One of the biggest reasons hallucinations persist is that our evaluation metrics are broken. For years, AI was graded on accuracy: did it get the answer right? The problem is that in a multiple-choice environment, guessing is a viable strategy. If an AI is rewarded for every correct answer and not penalized for every confident wrong answer, it will keep guessing. We need to move toward a system that rewards epistemic honesty. This means grading the model on whether it correctly identified its own uncertainty. A model that says "I'm 60% sure this is X" is far more valuable in a production environment than one that says "This is X" with 100% confidence and is wrong. This requires a shift in the fine-tuning phase, where the goal isn't just the "right" answer, but the "honest" answer.

The Human-in-the-Loop Necessity

Despite all the technical patches, the reality is that as of 2026, no generative AI is 100% hallucination-free. Because these systems lack a conscious understanding of the world, they cannot "feel" when they are lying. They are simply completing a pattern. For high-stakes applications (medical advice, legal filings, or financial reporting), a "human-in-the-loop" workflow isn't optional; it's a requirement. This means treating AI output as a first draft, never a final product. Every factual claim, date, and citation must be cross-referenced with a primary source. If you are using tools like Gemini or Copilot, use them to structure your thoughts or brainstorm ideas, but verify the hard data using a traditional search or a verified database.

Can I completely stop an AI from hallucinating?

No. Hallucinations are a byproduct of the probabilistic nature of LLMs. While you can significantly reduce them using RAG, RLHF, and strict prompting, you cannot eliminate them entirely because the model is always predicting the next token based on patterns, not querying a fixed database of truths.

What is the difference between a hallucination and a mistake?

A mistake is often a failure to follow a prompt or a calculation error. A hallucination is the creation of a plausible-sounding but entirely fake piece of information, like a fake URL or a non-existent book title, presented with total confidence.

Does lowering the temperature always stop hallucinations?

Lowering the temperature makes the model more deterministic, meaning it will pick the most likely word more consistently. While this reduces "random" hallucinations, the model can still confidently output a factually wrong answer if that wrong answer was a strong pattern in its training data.
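A toy calculation illustrates the point. In the sketch below the logits are invented and `softmax_with_temperature` is a hypothetical helper; the assumption is that the training data pushed the model toward a wrong answer, so the wrong token has the highest score. Lowering the temperature does not change the ranking, it only makes the model commit to the top token more firmly.

```python
import math

def softmax_with_temperature(logits, temperature):
    """Convert raw scores to probabilities, dividing by temperature first."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical case: the wrong answer was the stronger pattern in training.
answers = ["wrong but common answer", "correct answer", "unrelated answer"]
logits = [3.0, 2.0, 0.0]

for temperature in (1.0, 0.5, 0.1):
    probs = softmax_with_temperature(logits, temperature)
    top = answers[probs.index(max(probs))]
    print(f"T={temperature}: top='{top}', p={max(probs):.3f}")
```

At every temperature the top choice is the same wrong answer; the only thing that changes is how much probability it gets. Temperature controls variance, not correctness.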

How does RAG actually help with accuracy?

Retrieval-Augmented Generation (RAG) provides the AI with a set of reference documents to look at before it answers. Instead of relying only on its memory (weights), it is instructed to treat the provided text as the source of truth, which drastically reduces the need for the model to guess. The model can still misread or ignore a retrieved passage, so RAG lowers the error rate rather than eliminating it.

Which AI models are most prone to hallucinations?

Generally, models that are optimized for creativity, or that saw relatively little training data for the domain in question, are more prone to hallucinations. However, even the most advanced models from OpenAI, Google, and Anthropic still hallucinate, especially when pushed into highly technical or obscure territories.

Artificial Intelligence


k arnold

April 12, 2026 AT 18:27

Groundbreaking stuff here. Truly. Imagine telling the world that a probabilistic model doesn't actually know things... wow, what a revelation. I'm sure the few people who haven't spent five minutes on Twitter are absolutely floored by this discovery. Maybe next time you can explain that water is wet or that gravity makes things fall down. Truly a masterclass in stating the obvious while pretending it's a strategy guide.

Tiffany Ho

April 13, 2026 AT 18:31

this is so helpful thanks for sharing

michael Melanson

April 14, 2026 AT 12:30

RAG really is the game changer for a lot of our internal workflows. Once we stopped relying on the base model and started feeding it our actual documentation, the error rate plummeted. It's a much more sustainable way to scale these tools in a professional environment.

lucia burton

April 14, 2026 AT 21:10

The synergy between implementing a robust RAG architecture and optimizing the hyperparameters for temperature tuning is absolutely paramount for anyone attempting to mitigate stochastic parrots in a production-grade environment where high-fidelity output is the only acceptable metric for success. We really need to be leveraging the latent capabilities of these transformer-based architectures by implementing multi-stage verification pipelines that can programmatically identify semantic drift before the end-user even sees the tokenized output because that is how we truly scale reliability in the enterprise space!

Denise Young

April 16, 2026 AT 10:59

Oh sure, just add a "human-in-the-loop" and everything magically fixes itself, because who doesn't love spending four hours a day manually auditing a bot that was supposed to save us time in the first place. It's just wonderful how we've transitioned from "automation" to "babysitting a very confident liar" while using fancy terms like epistemic honesty to make the whole disaster sound like a sophisticated academic journey. I absolutely love how the solution to bad AI is just... more humans doing the actual work that the AI was bought to replace. Truly the peak of technological efficiency we've all been dreaming of during our quarterly optimization meetings.

Sam Rittenhouse

April 18, 2026 AT 09:17

It is honestly heartbreaking to think about the sheer amount of frustration people feel when they trust these systems with their most vulnerable legal or medical needs only to be met with a polished, synthetic lie. We must hold the hand of those transitioning into this new era and ensure that the human element isn't just a safety check, but a compassionate shield against the cold, calculating randomness of a machine that can mimic empathy without ever feeling a single heartbeat of truth!