You type a complex prompt asking for code in Python, formatted as JSON, with specific comments, and the model delivers exactly that. Five years ago, this would have been a miracle. Today, it’s Tuesday. But why do some models nail your instructions while others hallucinate or ignore half your constraints? The answer lies in how we’ve taught Large Language Models (LLMs), the massive neural networks trained on vast amounts of text to predict the next word in a sequence. They aren’t just getting smarter; they are getting better at listening.

The shift from raw text generation to precise instruction following is arguably the single biggest usability leap in AI history. It transformed these tools from autocomplete engines into cooperative assistants. This article breaks down the technical mechanisms behind this improvement, from early training methods to the cutting-edge techniques emerging in 2025 and 2026.

Key Takeaways

- Instruction tuning converts generic text predictors into helpful assistants using small, high-quality datasets.

- Many modern alignment pipelines favor Direct Preference Optimization (DPO) over, or alongside, the older Reinforcement Learning from Human Feedback (RLHF).

- New automated data synthesis methods like AutoIF improve complex instruction adherence by over 4 percentage points without hurting general reasoning.

- Inference-time techniques like Activation Steering and InstABoost allow real-time correction of model behavior without retraining.

- Proprietary models still lead in complex multi-step tasks, but open-source bases combined with efficient fine-tuning are closing the gap rapidly.

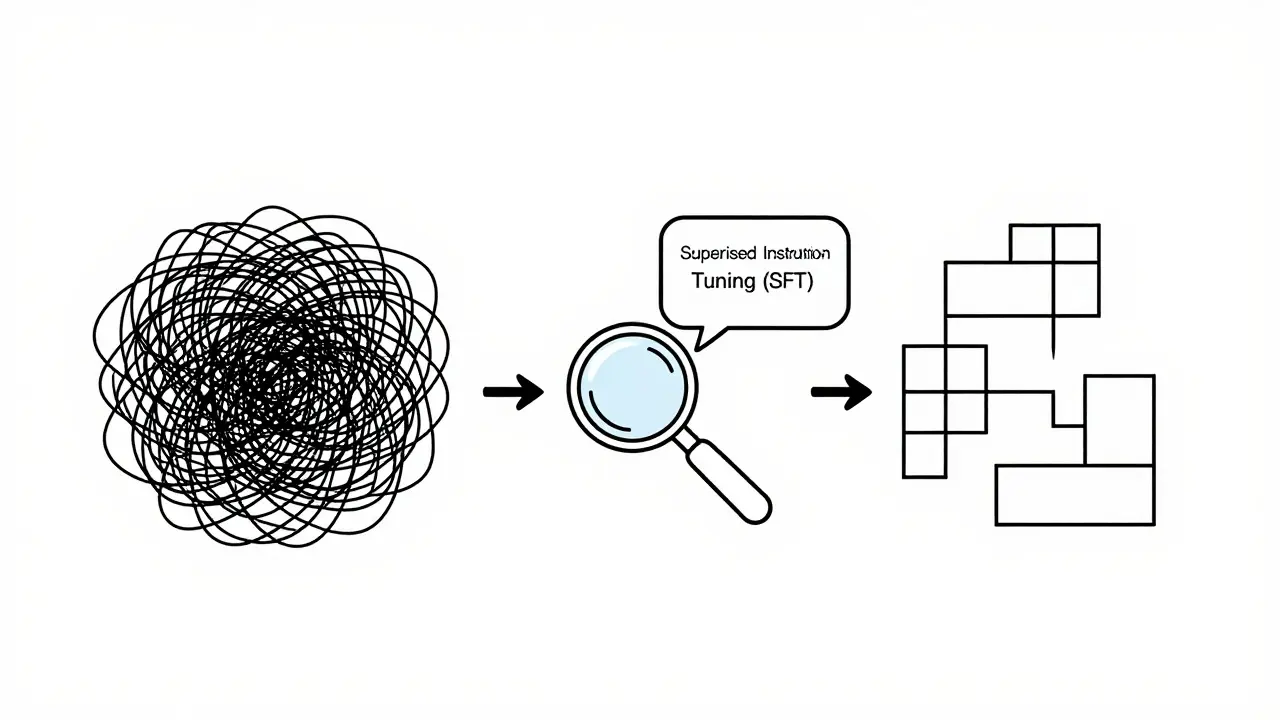

The Foundation: How We Taught Models to Listen

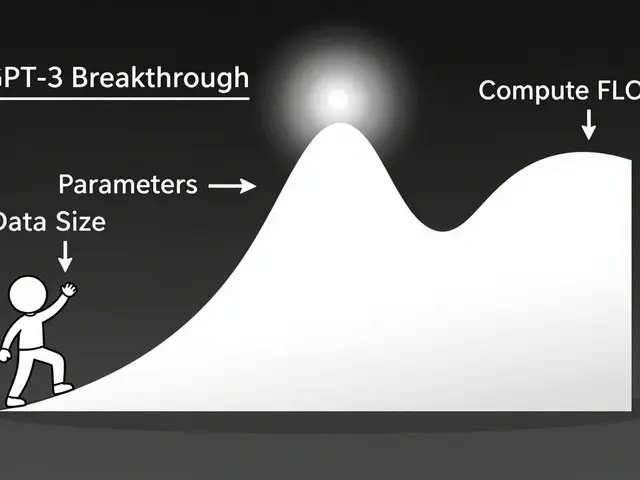

To understand where we are, you need to see where we started. Early transformer models, like GPT-3, were brilliant at continuing text but terrible at following directions. If you asked them to "summarize this," they might just start writing a story about summaries. The breakthrough came between 2021 and 2023, when researchers realized that adding a second training stage focused specifically on instructions changed everything.

This process, known as instruction tuning or supervised fine-tuning (SFT), trains a pre-trained model on curated pairs of user instructions and ideal responses. It acts less like teaching new facts and more like adjusting a lens: it teaches the model to interpret your message as an actionable command rather than just another sentence to complete.
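One common way to prepare such a pair for training (an assumption here; the article does not specify a recipe) is to concatenate instruction and response into one sequence and compute the loss only on the response tokens. The sketch below uses a toy whitespace tokenizer and the `-100` label that PyTorch's `CrossEntropyLoss` ignores by default; `tokenize` and `build_sft_example` are hypothetical helpers, not any library's API.

```python
IGNORE_INDEX = -100  # label value skipped by PyTorch's CrossEntropyLoss by default


def tokenize(text: str, vocab: dict[str, int]) -> list[int]:
    """Toy whitespace tokenizer: assigns a new id to each unseen word."""
    ids = []
    for word in text.split():
        if word not in vocab:
            vocab[word] = len(vocab)
        ids.append(vocab[word])
    return ids


def build_sft_example(instruction: str, response: str,
                      vocab: dict[str, int]) -> tuple[list[int], list[int]]:
    """Return (input_ids, labels) where only response tokens contribute to the loss."""
    prompt_ids = tokenize(instruction, vocab)
    response_ids = tokenize(response, vocab)
    input_ids = prompt_ids + response_ids
    # Mask the instruction so the model learns to produce the answer, not to echo the prompt.
    # Labels line up with input_ids; causal-LM training code usually shifts them by one internally.
    labels = [IGNORE_INDEX] * len(prompt_ids) + response_ids
    return input_ids, labels


vocab: dict[str, int] = {}
ids, labels = build_sft_example("Summarize: cats sleep a lot", "Cats sleep often", vocab)
print(ids)     # [0, 1, 2, 3, 4, 5, 2, 6]
print(labels)  # [-100, -100, -100, -100, -100, 5, 2, 6]
```

Masking the prompt is a design choice. Some pipelines also train on prompt tokens, but masking keeps the gradient focused on answering.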

OpenAI’s InstructGPT, released in January 2022, was the first major deployed system to demonstrate this effect clearly. By training the model on thousands of examples of good versus bad responses, OpenAI reduced harmful outputs by 25-50% and increased user preference rates from around 20-40% to 60-70%. Google’s FLAN (Finetuned Language Net), published a few months earlier in 2021, had instruction-tuned a 137B-parameter model on 62 datasets and substantially improved its zero-shot accuracy on held-out task types. A 2022 follow-up scaled the instruction mixture to 1,836 tasks. The key insight? You don’t need massive data volumes for this step. Datasets of 10,000 to 1 million examples are tiny compared to the trillions of tokens used in pre-training, yet they are enough to dramatically reorganize the model’s behavior.

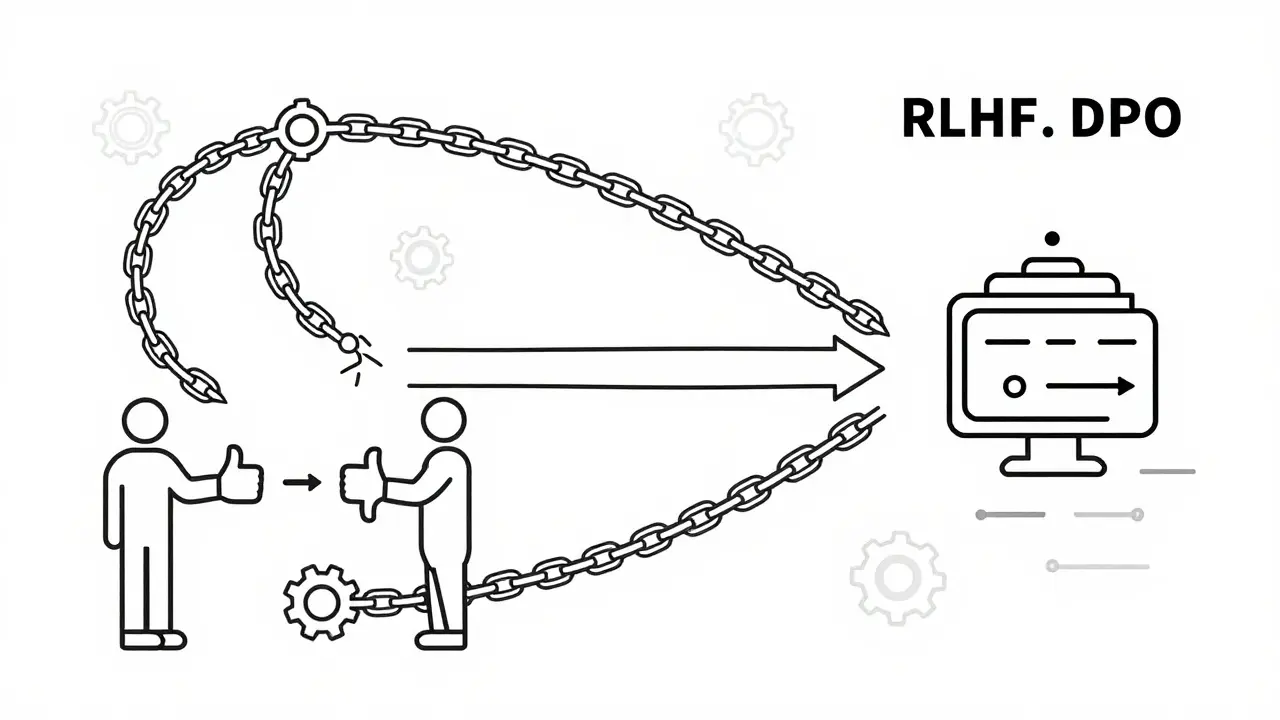

From RLHF to DPO: Refining Preference

Once a model can follow basic instructions, you need to ensure it follows them *helpfully* and *safely*. This is where alignment comes in. For a few years, Reinforcement Learning from Human Feedback (RLHF) was the gold standard. Human raters rank model outputs, those rankings train a reward model, and a reinforcement learning algorithm then pushes the model toward the preferred behaviors. InstructGPT used RLHF to teach models not just what to say, but how humans wanted things said.

However, RLHF is expensive and computationally heavy: it requires training a separate reward model and then running complex reinforcement learning loops. In 2023, researchers introduced Direct Preference Optimization (DPO), a simpler alternative that directly optimizes the language model's parameters on pairs of preferred and rejected responses, with no separate reward model. DPO has been reported to achieve similar or better results with fewer computational resources. It has since been widely adopted in post-training; Meta, for example, used DPO for its Llama 3 Instruct models.
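The per-pair DPO objective is short enough to write out. It is −log σ(β · [(log π(y_w) − log π_ref(y_w)) − (log π(y_l) − log π_ref(y_l))]), where y_w is the preferred response and y_l the rejected one. The sketch below assumes the summed log-probabilities of each response have already been computed elsewhere. The function names and the `beta=0.1` default are illustrative choices, not a specific library's API.

```python
import math


def softplus(x: float) -> float:
    """Numerically stable log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def dpo_loss(policy_chosen: float, policy_rejected: float,
             ref_chosen: float, ref_rejected: float, beta: float = 0.1) -> float:
    """DPO loss for one preference pair.

    Each argument is the summed log-probability of a full response under either
    the policy being trained or the frozen reference model.
    """
    chosen_shift = policy_chosen - ref_chosen        # how much the policy upweights the chosen answer
    rejected_shift = policy_rejected - ref_rejected  # and how much it upweights the rejected one
    z = beta * (chosen_shift - rejected_shift)
    return softplus(-z)  # equals -log(sigmoid(z))


# While the policy still equals the reference, the loss is log(2).
print(round(dpo_loss(-12.0, -15.0, -12.0, -15.0), 4))  # 0.6931
# Moving probability toward the chosen answer lowers the loss.
print(dpo_loss(-10.0, -17.0, -12.0, -15.0) < math.log(2))  # True
```

The reference model enters only through log-probability differences, which is why no separate reward model is needed.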

Another variant, Reinforcement Learning from AI Feedback (RLAIF), uses an AI model, typically a strong one, to generate feedback and rankings instead of relying solely on human annotators. This helps scale the process further. These methods help ensure that when you ask for a "brief summary," the model doesn’t write a novel, and when you ask for code, it doesn’t include dangerous commands.
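The data flow of RLAIF can be sketched as follows. An AI judge compares two candidate responses, and its verdicts become chosen/rejected records that can feed a reward model or DPO. In a real pipeline the judge would be a prompt sent to a strong LLM. Here `toy_brevity_judge` is a hypothetical heuristic standing in for that call so the sketch runs offline, and the record layout is likewise an assumption.

```python
from typing import Callable

# A judge returns 0 if response A is better, 1 if response B is better.
Judge = Callable[[str, str, str], int]


def toy_brevity_judge(prompt: str, a: str, b: str) -> int:
    """Hypothetical stand-in for an AI judge: prefers the shorter answer when brevity is requested."""
    if "brief" in prompt.lower():
        return 0 if len(a.split()) <= len(b.split()) else 1
    return 0  # this toy has no opinion on other prompts, so it keeps A


def build_preference_pairs(samples: list[tuple[str, str, str]],
                           judge: Judge) -> list[dict[str, str]]:
    """Turn (prompt, response_a, response_b) triples into chosen/rejected records."""
    pairs = []
    for prompt, a, b in samples:
        winner = judge(prompt, a, b)
        chosen, rejected = (a, b) if winner == 0 else (b, a)
        pairs.append({"prompt": prompt, "chosen": chosen, "rejected": rejected})
    return pairs


samples = [("Give a brief summary of the report.",
            "The report covers revenue, hiring, risks, and the product roadmap in detail.",
            "Revenue grew; hiring slowed.")]
print(build_preference_pairs(samples, toy_brevity_judge)[0]["chosen"])  # Revenue grew; hiring slowed.
```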

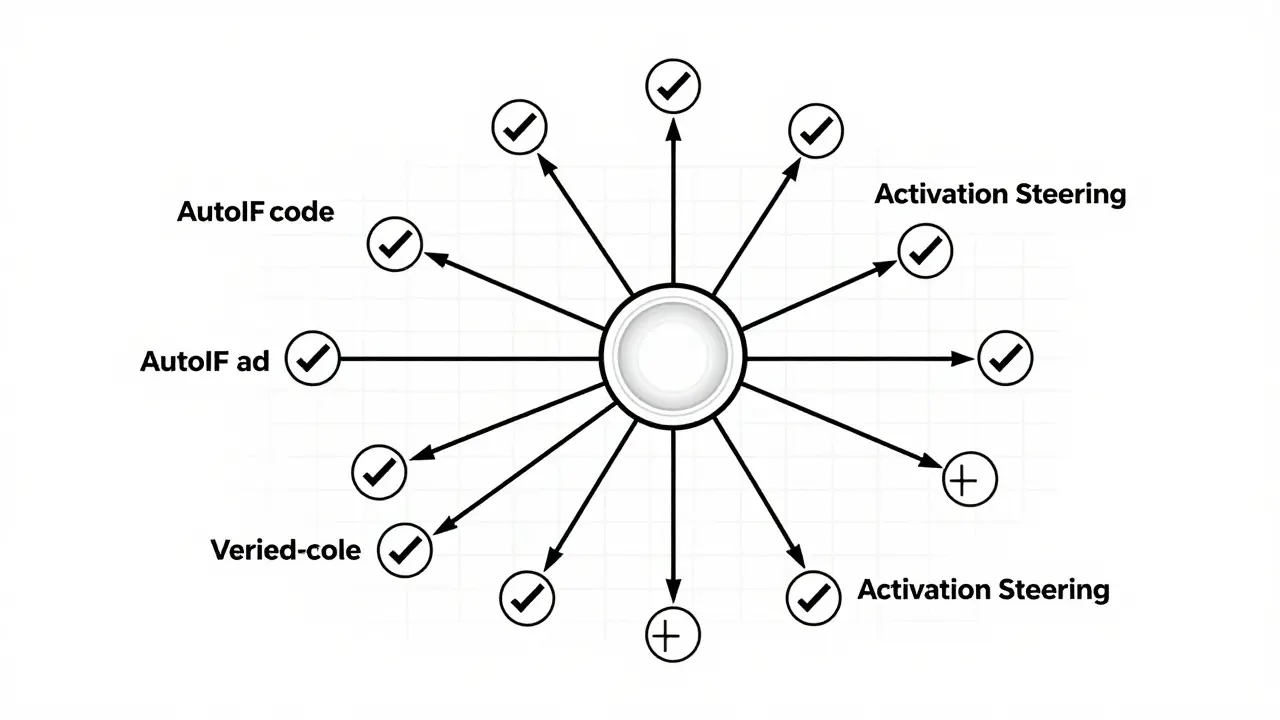

Automated Data Synthesis: The AutoIF Breakthrough

The quality of instruction following depends heavily on the quality of the training data. Historically, this meant hiring humans to write prompts and check answers. That’s slow and expensive. A major frontier in 2024 and 2025 has been automating this process.

A significant advancement came with AutoIF, or Automated Instruction Following. This method uses strong teacher models to synthesize complex instructions together with code that verifies whether a response satisfies them, then validates candidate responses through execution feedback. Instead of guessing whether a model followed instructions, AutoIF runs the verification code against each response and keeps only the responses that pass.
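The execution-based filtering step can be sketched as below. A verification function, written here by hand to stand in for one a teacher model might generate, is executed against each candidate response, and only passing responses are kept. `GENERATED_VERIFIER`, `load_verifier`, and `filter_responses` are hypothetical names. AutoIF's full pipeline also checks the generated verifiers themselves before using them, and this sketch covers only the final filter.

```python
# Stand-in for a verifier a teacher model might write for the instruction
# "Describe Python in fewer than 20 words without using commas." (hypothetical).
GENERATED_VERIFIER = '''
def evaluate(response):
    return len(response.split()) < 20 and "," not in response
'''


def load_verifier(source: str):
    """Execute generated source and return its `evaluate` function.

    Real pipelines should run untrusted, model-written code in a sandbox with a timeout.
    """
    namespace: dict = {}
    exec(source, namespace)
    return namespace["evaluate"]


def filter_responses(instruction: str, responses: list[str], verifier) -> list[dict[str, str]]:
    """Keep only responses the verifier accepts; a crashing verifier counts as a failure."""
    kept = []
    for response in responses:
        try:
            passed = bool(verifier(response))
        except Exception:
            passed = False
        if passed:
            kept.append({"instruction": instruction, "response": response})
    return kept


instruction = "Describe Python in fewer than 20 words without using commas."
candidates = [
    "Python is a readable language used for scripting and data science.",
    "Python, created by Guido van Rossum, is popular.",
    "Python is a high level general purpose programming language that emphasizes code "
    "readability and supports multiple programming paradigms including procedural and "
    "object oriented styles",
]
verifier = load_verifier(GENERATED_VERIFIER)
print(len(filter_responses(instruction, candidates, verifier)))  # 1
```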

Researchers using Qwen2 and LLaMA3 found that AutoIF improved average scores on instruction-following benchmarks like FollowBench by over 4 percentage points for smaller models (7B-8B parameters) and by 4.6 to 5.6 percentage points for larger models (70B-72B parameters). Crucially, this didn’t hurt their general abilities on tests like MMLU (knowledge) or GSM8K (math reasoning). This suggests that aggressive instruction-focused training no longer requires sacrificing the model’s broader capabilities.

Steering the Model at Inference Time

What if you could fix instruction-following errors without retraining the model at all? Recent research focuses on latent steering: manipulating the model’s internal activations during inference.

Microsoft Research demonstrated that Activation Steering can significantly improve adherence. The technique adds linear vectors to a model's hidden states to enforce specific behaviors, such as safety constraints or formatting rules. Each vector is computed from the difference between the model's activations on inputs with and without a given instruction, and adding it steers the model toward better compliance. This improved constraint satisfaction rates by 10-20 percentage points on evaluated tasks without making the text sound robotic.
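The difference-of-means idea behind a steering vector fits in a few lines. You average activations over a "positive" set of inputs (showing the target behavior) and a "negative" set (lacking it), subtract the averages, and add a scaled copy of the result to a hidden state. In a real model the vectors would be residual-stream activations captured at one chosen layer, for example via forward hooks, and the addition would happen at that layer during generation. The plain lists and made-up numbers below are a toy stand-in, and the function names are hypothetical.

```python
def mean_vector(vectors: list[list[float]]) -> list[float]:
    """Element-wise mean of equal-length activation vectors."""
    return [sum(column) / len(vectors) for column in zip(*vectors)]


def steering_vector(positive: list[list[float]], negative: list[list[float]]) -> list[float]:
    """Difference of means: average 'positive' activation minus average 'negative' activation."""
    mu_pos = mean_vector(positive)
    mu_neg = mean_vector(negative)
    return [p - n for p, n in zip(mu_pos, mu_neg)]


def steer(hidden: list[float], vector: list[float], alpha: float = 1.0) -> list[float]:
    """Add the scaled steering vector to one hidden state; alpha controls the strength."""
    return [h + alpha * v for h, v in zip(hidden, vector)]


# Toy 3-dimensional "activations" captured at one layer (made-up numbers).
positive = [[1.0, 2.0, 0.0], [3.0, 2.0, 0.0]]
negative = [[0.0, 1.0, 0.0], [0.0, 1.0, 2.0]]
v = steering_vector(positive, negative)
print(v)                                # [2.0, 1.0, -1.0]
print(steer([0.5, 0.5, 0.5], v, 0.5))  # [1.5, 1.0, 0.0]
```

The strength `alpha` is the main knob. Too small has no effect, and too large can degrade fluency, so it is usually tuned per layer and task.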

Similarly, a 2025 preprint introduced InstABoost, or Instruction Attention Boosting, a method that increases the model's attention weights on instruction tokens during decoding to ensure they are prioritized over context. In tasks where plain prompting yielded 90% accuracy, InstABoost raised it to 93%, matching or outperforming other latent steering baselines. These methods are particularly useful for high-risk contexts where strict adherence to safety or formatting rules is non-negotiable.
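To make "increasing attention weights on instruction tokens" concrete, here is one plausible reading: multiply a query's attention weights at instruction positions by a factor and renormalize so they still sum to 1. This is an illustrative sketch, not the preprint's exact formulation. `boost_instruction_attention` and `factor` are hypothetical, and a real implementation would apply this inside selected attention layers at every decoding step.

```python
import math


def softmax(scores: list[float]) -> list[float]:
    """Numerically stable softmax."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def boost_instruction_attention(weights: list[float], is_instruction: list[bool],
                                factor: float) -> list[float]:
    """Multiply attention on instruction positions by `factor`, then renormalize to sum to 1."""
    scaled = [w * factor if flag else w for w, flag in zip(weights, is_instruction)]
    total = sum(scaled)
    return [s / total for s in scaled]


# One query attending over 4 positions: 2 instruction tokens, then 2 context tokens.
weights = softmax([0.0, 0.0, 0.0, 0.0])  # [0.25, 0.25, 0.25, 0.25]
mask = [True, True, False, False]
boosted = boost_instruction_attention(weights, mask, factor=3.0)
print(boosted)  # [0.375, 0.375, 0.125, 0.125]
```

Here the instruction tokens' share of attention rises from 50% to 75%, while `factor=1.0` leaves the weights unchanged.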

Efficiency Meets Performance: LoRA and WeGeFT

Not everyone can afford to train a 70-billion-parameter model from scratch. Efficiency-oriented fine-tuning methods let organizations specialize large pre-trained models on modest hardware. LoRA (Low-Rank Adaptation) freezes the original weights and trains small low-rank matrices that amount to a tiny fraction of the parameters (roughly 0.1-10%). It became the industry standard because it makes fine-tuning feasible on consumer-grade GPUs.
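The core of LoRA is a single formula: h = W x + (α / r) · B A x. The pre-trained W stays frozen, and only the small matrices A (r × d_in) and B (d_out × r) are trained. The sketch below uses plain Python lists to show both the forward pass and the parameter arithmetic. The function names and toy matrices are illustrative, not taken from any particular library.

```python
def matvec(matrix: list[list[float]], vector: list[float]) -> list[float]:
    """Plain matrix-vector product."""
    return [sum(a * b for a, b in zip(row, vector)) for row in matrix]


def lora_forward(W, A, B, x, alpha: float, r: int) -> list[float]:
    """h = W x + (alpha / r) * B (A x).

    W (d_out x d_in) is frozen; only A (r x d_in) and B (d_out x r) are trained.
    LoRA initializes B to zeros, so training starts from the unchanged base model.
    """
    base = matvec(W, x)
    update = matvec(B, matvec(A, x))
    scale = alpha / r
    return [b + scale * u for b, u in zip(base, update)]


def lora_param_fraction(d_out: int, d_in: int, r: int) -> float:
    """Trainable LoRA parameters as a fraction of the full weight matrix."""
    return r * (d_in + d_out) / (d_out * d_in)


# A 4096 x 4096 projection with rank 8 trains about 0.39% of that matrix's parameters.
print(lora_param_fraction(4096, 4096, 8))  # 0.00390625

W = [[1.0, 0.0], [0.0, 1.0]]
A = [[1.0, 1.0]]    # rank r = 1
B = [[1.0], [0.0]]
print(lora_forward(W, A, B, [2.0, 3.0], alpha=2.0, r=1))  # [12.0, 3.0]
```

Because the update B A has rank at most r, the trainable parameter count grows linearly with the layer's dimensions rather than quadratically.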

Newer methods are pushing the boundaries further. In 2025, North Carolina State University researchers introduced WeGeFT (Weight-Generative Fine-Tuning), a parameter-efficient fine-tuning method reported to improve performance on commonsense reasoning, arithmetic, and instruction following compared to LoRA without increasing computational cost. Early tests showed WeGeFT matching or exceeding LoRA on downstream tasks. This suggests that better mathematical approaches to parameter updates can deliver stronger instruction following on the same hardware budget.

| Method | Primary Function | Computational Cost | Key Advantage |
|---|---|---|---|
| Instruction tuning (SFT) | Teaches basic instruction interpretation | Medium | Fundamental behavioral shift |
| RLHF | Aligns with human preferences via a reward model | High | Nuanced tone and safety |
| DPO | Aligns with preference pairs, no reward model | Low-Medium | Simpler, stable training |
| AutoIF | Automated data synthesis | Medium | Scalable, verified data |
| Activation Steering | Inference-time control | Very Low | No retraining required |

Evaluating Success: Benchmarks That Matter

How do we know these improvements are real? We rely on specialized benchmarks. General metrics like perplexity don’t capture instruction adherence. Instead, the community uses:

- FollowBench: Published at ACL 2024, this benchmark targets complex instruction following with multiple fine-grained constraints on content, situation, style, format, and examples, adding constraints one level at a time.

- MT-Bench: Measures multi-turn conversation quality and helpfulness, scored by GPT-4 or humans. Top models like GPT-4 and Claude 3 Opus score in the 7-9/10 range, while earlier base models often scored 3-5/10.

- AlpacaEval: Uses an automatic LLM judge to compare each model's responses against a reference model's responses and reports a win rate. It highlights the gap between proprietary leaders and open-source alternatives.

- HELM: Holistic Evaluation of Language Models measures robustness across dozens of scenarios, showing that instruction-tuned models outperform base models by wide margins on constrained tasks.
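Pairwise benchmarks such as AlpacaEval ultimately reduce many judge verdicts to a single win rate. The sketch below assumes ties count as half a win, which is a common convention. AlpacaEval 2.0 additionally reports a length-controlled win rate, which this sketch does not attempt. `win_rate` is a hypothetical helper.

```python
def win_rate(judgments: list[str]) -> float:
    """Percentage of comparisons the candidate wins against the reference; ties count as half."""
    points = {"win": 1.0, "tie": 0.5, "loss": 0.0}
    return 100.0 * sum(points[j] for j in judgments) / len(judgments)


print(win_rate(["win", "win", "loss", "tie"]))  # 62.5
```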

These benchmarks reveal a clear trend: proprietary models like GPT-4o and Claude 3 still lead in complex, multi-step instruction following. However, open-source models like Llama-3-70B-Instruct and Mixtral-8x7B-Instruct are closing the gap, especially when enhanced with techniques like AutoIF or WeGeFT.

Real-World Challenges and Future Directions

Despite these advances, challenges remain. Users frequently report "instruction drift" in very long conversations, where the model forgets earlier constraints. Negated constraints (e.g., "do not mention X") are also notoriously difficult for LLMs to handle perfectly. Conflicting instructions can cause brittle behavior.
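Both failure modes can be tested mechanically once constraints are written down. The sketch below audits every assistant turn against all negated constraints stated in earlier user turns, so a constraint "forgotten" late in a conversation still shows up. It assumes banned terms have already been extracted into a list per turn. That extraction is a hypothetical structure, and in practice it is the hard part. The whole-word, case-insensitive regex avoids false hits such as "Tokyoite".

```python
import re


def mentions(text: str, term: str) -> bool:
    """True if `term` appears as a whole word, ignoring case."""
    return re.search(rf"\b{re.escape(term)}\b", text, flags=re.IGNORECASE) is not None


def audit_conversation(turns: list[tuple[str, str, list[str]]]) -> list[int]:
    """Return indices of assistant turns that mention any term banned in an earlier user turn.

    Each turn is (role, text, banned_terms); banned terms are assumed to be extracted already.
    """
    banned: set[str] = set()
    violations = []
    for i, (role, text, new_bans) in enumerate(turns):
        if role == "user":
            banned.update(new_bans)
        elif any(mentions(text, term) for term in banned):
            violations.append(i)
    return violations


turns = [
    ("user", "Plan a trip to Japan. Do not mention Tokyo.", ["Tokyo"]),
    ("assistant", "Start in Osaka, then visit Kyoto and Nara.", []),
    ("user", "Add a day trip by train.", []),
    ("assistant", "Take the bullet train to tokyo for a day.", []),
]
print(audit_conversation(turns))  # [3]
```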

Looking ahead, the focus is shifting toward multi-modal instruction following. Models like Gemini 1.5 Pro and GPT-4o now work with images and audio as well as text, and Gemini 1.5 Pro also accepts video input. Asking a model to "edit this image according to these constraints" requires the same rigorous alignment principles applied to text. Additionally, regulatory frameworks like the EU AI Act emphasize transparency and controllability, driving demand for auditable instruction-following methods.

For developers, implementing these improvements involves curating diverse, high-quality datasets, normalizing response styles, and using parameter-efficient fine-tuning for domain-specific adaptation. At deployment, wrapping models with clear system prompts and leveraging runtime steering mechanisms helps keep behavior consistent.
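The first steps of that workflow can be sketched as a small curation pass. It normalizes whitespace, drops empty or duplicate instruction-response pairs, and wraps each pair with the same system prompt used at deployment in a chat-style JSONL record. The `messages`/`role`/`content` layout resembles what several fine-tuning tools accept, but treat the exact schema as an assumption and adapt it to your framework. `SYSTEM_PROMPT` and the helper names are hypothetical.

```python
import json

# Hypothetical system prompt; ideally it mirrors the one used at deployment.
SYSTEM_PROMPT = "You are a concise assistant. Follow every formatting instruction exactly."


def normalize(text: str) -> str:
    """Collapse runs of whitespace so stylistic noise does not create duplicates."""
    return " ".join(text.split())


def curate(pairs: list[tuple[str, str]]) -> list[dict]:
    """Normalize, drop empty or duplicate pairs, and wrap each pair in a chat-style record."""
    seen = set()
    records = []
    for instruction, response in pairs:
        instruction, response = normalize(instruction), normalize(response)
        if not instruction or not response:
            continue
        key = (instruction.lower(), response.lower())
        if key in seen:
            continue
        seen.add(key)
        records.append({"messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": instruction},
            {"role": "assistant", "content": response},
        ]})
    return records


def to_jsonl(records: list[dict]) -> str:
    """Serialize one JSON object per line."""
    return "\n".join(json.dumps(record, ensure_ascii=False) for record in records)


raw = [
    ("List two colors.", "Red and blue."),
    ("List  two colors. ", "Red and  blue."),  # duplicate after normalization
    ("Say hi.", ""),                           # empty response, dropped
]
print(len(curate(raw)))  # 1
```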

What is the difference between instruction tuning and pre-training?

Pre-training teaches the model general language patterns and world knowledge using massive datasets. Instruction tuning is a subsequent step that teaches the model to interpret natural language commands as actionable requests, transforming it from a text predictor into a helpful assistant.

Why is DPO preferred over RLHF?

DPO (Direct Preference Optimization) is simpler and more computationally efficient than RLHF (Reinforcement Learning from Human Feedback). It achieves similar alignment results by directly optimizing model parameters based on preferred vs. rejected responses, eliminating the need for a separate reward model and complex reinforcement learning loops.

Can open-source models match proprietary ones in instruction following?

They are getting close. While proprietary models like GPT-4 and Claude 3 currently lead in complex multi-step tasks, open-source models like Llama-3 and Qwen2, when combined with advanced techniques like AutoIF and WeGeFT, are narrowing the gap significantly, especially for specific enterprise use cases.

What is AutoIF and why does it matter?

AutoIF (Automated Instruction Following) is a method for generating high-quality training data automatically. It uses strong teacher models to create instruction-response pairs and validates them through programmatic execution. This reduces reliance on manual labeling and improves instruction adherence scores by over 4 percentage points without harming general reasoning capabilities.

How does Activation Steering work?

Activation Steering manipulates the internal state of a language model during inference. By adding linear vectors derived from contrasting activations, it can steer the model toward safety constraints, formatting rules, or style guidelines without requiring any retraining or fine-tuning.

Artificial Intelligence
