When you hear that a new large language model has 100 billion parameters, it’s easy to assume it’s automatically better than the one with 10 billion. But that’s not the whole story. The real question isn’t just how big the model is. It’s how much you train it, with what data, and at what cost. Much of the progress in AI over the last few years hasn’t come from wild new architectures. It’s come from something simpler: scaling laws. These aren’t guesses. They’re empirical power-law fits, and they let you estimate how much performance you’ll gain when you scale up a model.

What Scaling Laws Actually Tell You

Scaling laws are equations that describe how a model’s performance improves as you increase three things: the number of parameters, the size of the training dataset, and the total amount of compute (measured in floating point operations, or FLOPs). These relationships aren’t linear. They follow a power law. That means doubling your compute doesn’t double your accuracy. Instead, each doubling lowers the loss by roughly the same fraction, so the gain is predictable even though it shrinks in absolute terms.

For example, research from teams at NVIDIA and DeepMind showed that if you double the training FLOPs, you can expect a consistent drop in test loss. This holds across models as small as 100 million parameters and as large as 17 billion. The same pattern shows up in downstream tasks like answering science questions (SciQ and ARC-E) or completing sentences with common sense (HellaSwag). In one study, researchers ran 130 different experiments. Every time, the accuracy climbed in a smooth, predictable curve. No surprises. No plateaus. Just math.

This is huge. Before scaling laws, teams would train a model, test it, and then guess what would happen if they scaled up. Now, they can run the numbers before spending millions on GPU time. If you know your target accuracy, you can estimate how much compute you need. Less guessing. Fewer wasted resources.

Bigger Models Aren’t Always Better: Data Matters More

For a long time, everyone thought: more parameters = better performance. Then came Chinchilla. DeepMind trained a model with 70 billion parameters, a quarter the size of their previous model, Gopher (280 billion), and trained it on more than four times as much data (about 1.4 trillion tokens versus about 300 billion). Chinchilla beat Gopher on nearly every benchmark. Why? Because data quantity matters as much as model size. A huge model trained on too little data is like a genius who never left their house. It has the capacity, but never learned how to use it.

The rule? For optimal performance, you need to balance model size and data size. The sweet spot isn’t “as big as possible.” It’s “as much data as you can afford, paired with a model that can absorb it.” Chinchilla proved that a smaller model trained on more data can outperform a larger one trained on less. This flipped the old assumption on its head.

And it gets even weirder. Larger models are more sample-efficient. That means they reach the same performance level with less data. So, in principle, you could train a 100B model on a fraction of the data you’d use for a 10B model and reach the same loss. But here’s the catch: every training token costs roughly ten times more compute for the larger model, and so does every token it generates afterward. That’s expensive. In practice, most companies train smaller models on more data because the inference cost (running the model) is cheaper. Training cost is a one-time expense. Inference cost is ongoing. A 7B model running on a few servers costs far less to serve than a 70B model, even if it needed more training data to get there.

The GPT-3 Moment: When Scaling Broke the Rules

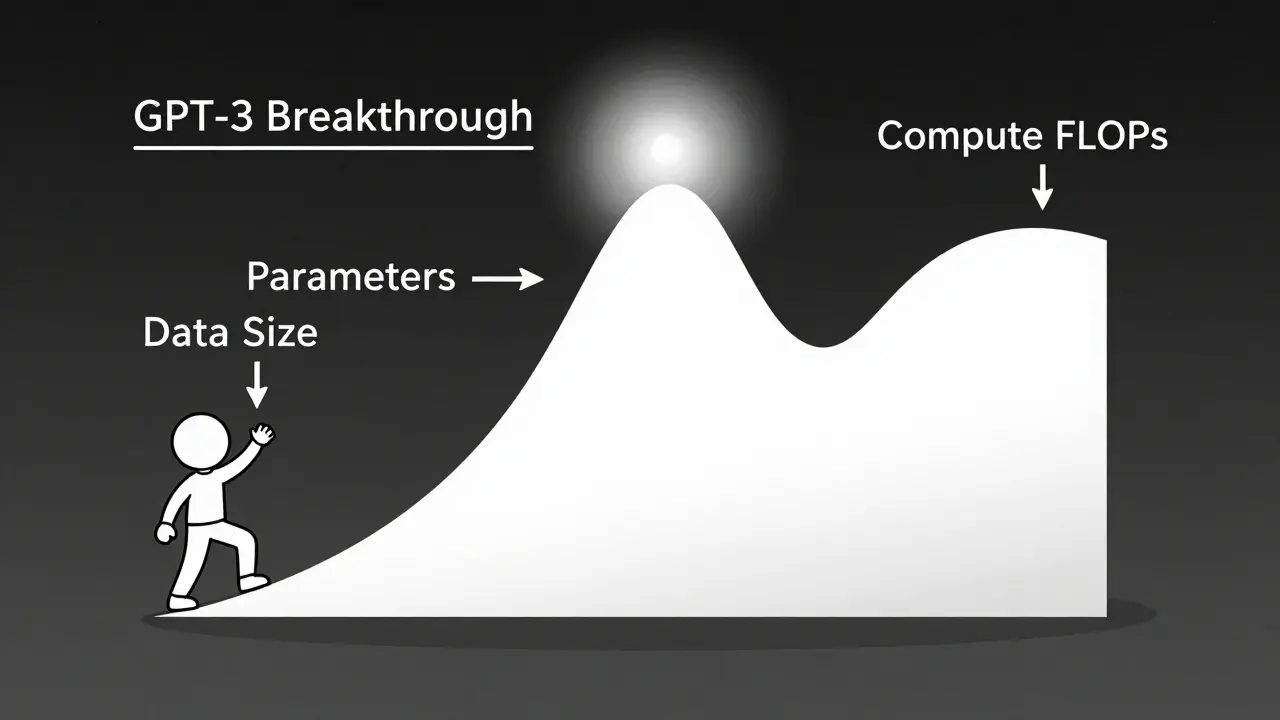

GPT-2 was good. GPT-3 was something else. It wasn’t just bigger. It was more than 100 times bigger (175 billion parameters versus 1.5 billion). And suddenly, it could write code. Solve math problems. Follow complex instructions. It didn’t just get better at language tasks. It gained new abilities that didn’t exist in smaller models. This wasn’t a gradual improvement. It was a qualitative leap.

Scaling laws themselves describe smooth curves in loss, but on specific tasks that smooth progress can show up as a sudden jump: at some threshold, performance doesn’t just improve, it changes in kind. A model might go from barely understanding a question to solving it with few examples. From generating nonsense to writing working Python scripts. From memorizing facts to reasoning through logic puzzles.

GPT-3’s success wasn’t luck. It was the result of scaling beyond a critical point. And researchers now use this pattern to look for where the next breakthroughs might happen. If you want to see emergent abilities, you don’t necessarily need a new architecture. Often, you just need to scale.

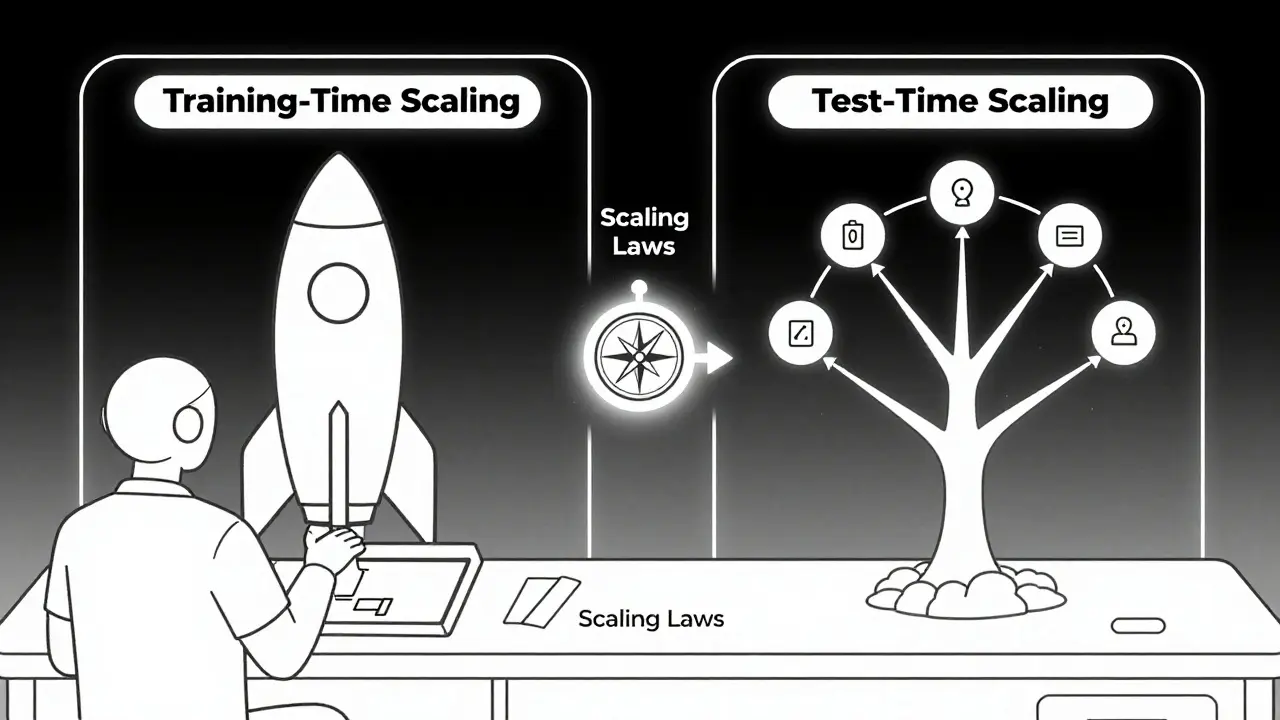

Test-Time Scaling: The New Frontier

What if you could make a model smarter at inference time instead of just training it harder? That’s the idea behind test-time scaling. Instead of running a model once and calling it done, you let it think multiple times, like a human working through a problem step by step. NVIDIA’s research shows this can dramatically improve accuracy on complex reasoning tasks, especially in math, coding, and science.

Think of it like this: a small model might give you a quick answer. A test-time scaled model might run 10 different reasoning paths, check for contradictions, and then pick the best one. It’s slower. It uses more compute. But it’s often more accurate.

This changes the game. Instead of betting everything on training a massive model, you can train a moderately sized one and spend extra compute at inference time when you need precision. It’s more flexible. It can be more efficient. And it’s already being used in AI assistants that solve multi-step problems.

Smaller Models Can Predict Bigger Ones

Here’s one of the most surprising findings: you can use small models to predict how big ones will perform. MIT researchers thought smaller models behaved differently from larger ones. They assumed the scaling rules wouldn’t apply across sizes. But when they tested it, they were wrong. The same power law held, from 100 million to 100 billion parameters. The relationship between compute and performance didn’t break. It just got smoother.

This means you can train a few small models cheaply, measure their performance, and then extrapolate with reasonable confidence how a 10x larger version will do. No need to train the big one first. You can plan ahead. You can optimize your budget.

It also means that architectural improvements aren’t just about new layers or attention mechanisms. They’re about making models more scalable. A model that scales better than its predecessor doesn’t just perform better. It’s more predictable. And that’s gold for anyone building AI systems.
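To make the extrapolation idea concrete, here is a minimal sketch in Python. It assumes the simplest possible form, loss = a * C^(-b), with no irreducible-loss offset, and it uses made-up numbers generated from a known power law rather than real measurements. The function names and the values a = 20 and b = 0.05 are illustrative assumptions, not figures from any of the studies mentioned above.

```python
import numpy as np

def fit_power_law(compute, loss):
    """Fit loss = a * compute**(-b) by linear regression in log-log space."""
    slope, intercept = np.polyfit(np.log(compute), np.log(loss), deg=1)
    return np.exp(intercept), -slope  # a, b

def predict_loss(a, b, compute):
    """Evaluate the fitted power law at a new compute budget."""
    return a * compute ** (-b)

def compute_for_target(a, b, target_loss):
    """Invert loss = a * C**(-b) to get the compute needed for a target loss."""
    return (a / target_loss) ** (1.0 / b)

# Hypothetical (FLOPs, test loss) pairs from four small training runs.
# Synthetic data: generated from a = 20, b = 0.05 purely for illustration.
small_compute = np.array([1e18, 1e19, 1e20, 1e21])
small_loss = 20.0 * small_compute ** (-0.05)

a, b = fit_power_law(small_compute, small_loss)

# Extrapolate to a run with 10x the compute of the largest small run.
predicted = predict_loss(a, b, 1e22)
print(f"fitted exponent b = {b:.3f}, predicted loss at 1e22 FLOPs = {predicted:.3f}")
```

With real runs the points will be noisy, so it is worth holding out the largest run you can afford and checking the prediction against it before trusting a longer extrapolation.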

What’s Next? Efficiency Over Explosion

The era of “bigger is better” is ending. Why? Because it’s too expensive. Training a single model can now cost tens of millions of dollars. GPUs are in short supply. Energy use is under scrutiny. The smartest teams aren’t just scaling up. They’re scaling smart. Here’s what’s working now:

- Using parameter-efficient architectures that get close to full-size performance with fewer parameters.

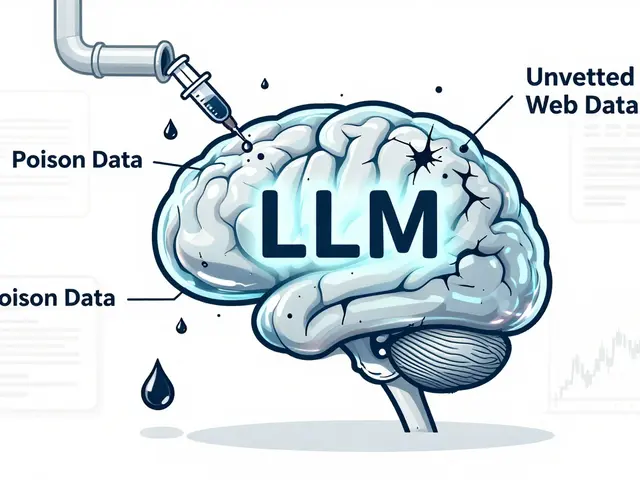

- Optimizing data quality over quantity. Clean, diverse data beats noisy, massive datasets.

- Shifting from training-time scaling to test-time scaling for high-stakes tasks.

- Tracking inference cost as hard as training cost. A 100B model that costs $1 per query isn’t worth it if a 7B model does 90% of the job for $0.02.
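The last two points can be combined in a small sketch. The example below uses majority voting over several sampled answers (often called self-consistency), which is only one simple form of test-time scaling and not necessarily the method used in the research mentioned earlier. The sampled answers are hard-coded stand-ins for real model outputs, and the prices are the per-query figures from the example above.

```python
from collections import Counter

def majority_vote(answers):
    """Return the most common answer and the share of samples that agree."""
    counts = Counter(answers)
    answer, votes = counts.most_common(1)[0]
    return answer, votes / len(answers)

def cost_per_query(price_per_call, num_samples=1):
    """Total cost when one user query triggers num_samples model calls."""
    return price_per_call * num_samples

# Hypothetical final answers from 10 sampled reasoning paths of a small model.
sampled = ["42", "42", "41", "42", "42", "17", "42", "42", "41", "42"]
answer, agreement = majority_vote(sampled)

# Prices taken from the example above: $0.02 per small-model call,
# $1.00 per large-model call.
small_cost = cost_per_query(0.02, num_samples=len(sampled))
large_cost = cost_per_query(1.00)

print(f"answer={answer}, agreement={agreement:.0%}")
print(f"small model x10: ${small_cost:.2f} vs large model x1: ${large_cost:.2f}")
```

Under these assumed prices, ten samples from the small model still cost a fifth of one call to the large one. Whether the voted answer is actually good enough is something only an evaluation on your own task can tell you.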

Practical Takeaways

If you’re working with LLMs, here’s what you should do:

- Don’t assume bigger = better. Test your task with smaller models first.

- Use scaling laws to estimate compute needs before training. If your target accuracy is 85%, calculate the FLOPs required instead of guessing.

- Balance model size and data. If you can’t get more data, don’t just add parameters.

- Consider test-time scaling for complex tasks. It’s cheaper than training a giant model.

- Track inference costs religiously. Hosting costs often dwarf training costs.
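For the takeaways about estimating compute and balancing model size against data, a back-of-the-envelope calculation is often enough to start. The sketch below relies on two widely used rules of thumb that are assumptions here, not claims from this article: training compute of roughly 6 FLOPs per parameter per token, and a Chinchilla-style ratio of about 20 training tokens per parameter. The model sizes are the published figures for Gopher (280B parameters, about 300B tokens) and Chinchilla (70B parameters, about 1.4T tokens).

```python
import math

FLOPS_PER_PARAM_TOKEN = 6   # assumed rule of thumb: C ~ 6 * N * D
TOKENS_PER_PARAM = 20       # assumed Chinchilla-style data-to-parameter ratio

def training_flops(params, tokens):
    """Approximate training compute for a dense transformer."""
    return FLOPS_PER_PARAM_TOKEN * params * tokens

def balanced_allocation(budget_flops):
    """Split a FLOP budget so that tokens = TOKENS_PER_PARAM * params."""
    params = math.sqrt(budget_flops / (FLOPS_PER_PARAM_TOKEN * TOKENS_PER_PARAM))
    return params, TOKENS_PER_PARAM * params

gopher = training_flops(280e9, 300e9)
chinchilla = training_flops(70e9, 1.4e12)
print(f"Gopher ~{gopher:.2e} FLOPs, Chinchilla ~{chinchilla:.2e} FLOPs")

# What would this rule suggest for a budget the size of Chinchilla's?
params, tokens = balanced_allocation(chinchilla)
print(f"balanced split: ~{params / 1e9:.0f}B params, ~{tokens / 1e12:.1f}T tokens")
```

Under this approximation the two models used budgets of the same order (about 5.0e23 and 5.9e23 FLOPs), which is the point of the comparison: similar compute, very different split between parameters and data. Treat the constants as starting points, since the best ratio depends on your data and on how much inference you expect to serve.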

Can scaling laws predict performance for any task?

Scaling laws work best for tasks that are well-represented in the training data and measured with consistent benchmarks such as ARC-E, HellaSwag, or LAMBADA. They’re less reliable for highly specialized tasks, like legal document analysis or medical diagnosis, where data is scarce or evaluation is subjective. But even then, they give you a useful starting point for estimating how scaling might help.

Do scaling laws apply to models other than LLMs?

Yes. Early scaling law research focused on language models, but similar patterns have been observed in vision models (like ViTs), multimodal models, and even reinforcement learning agents. The core idea, that performance improves predictably with scale, is holding across domains. The exact power-law exponents change, but the trend remains.

Why does data quality matter more than quantity?

A model trained on 1 trillion tokens of low-quality, repetitive, or biased data may perform worse than one trained on 100 billion tokens of clean, diverse, and well-curated data. Quality affects how efficiently the model learns patterns. High-quality data reduces noise, improves generalization, and helps the model avoid memorizing errors. Recent studies show that even small improvements in data filtering can outperform doubling the data size.
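As a very small illustration of what data filtering can mean in practice, the sketch below drops documents that are too short and removes exact duplicates after normalizing case and whitespace. It is an assumption-laden toy: the `min_words` threshold is arbitrary, and real pipelines typically add fuzzy deduplication, language detection, and learned quality classifiers on top of steps like these.

```python
import hashlib

def normalize(text):
    """Lowercase and collapse whitespace so trivial variants hash the same."""
    return " ".join(text.lower().split())

def filter_corpus(docs, min_words=5):
    """Keep the first copy of each document that has at least min_words words."""
    seen = set()
    kept = []
    for doc in docs:
        norm = normalize(doc)
        if len(norm.split()) < min_words:
            continue  # too short to carry much signal
        digest = hashlib.sha256(norm.encode("utf-8")).hexdigest()
        if digest in seen:
            continue  # exact duplicate after normalization
        seen.add(digest)
        kept.append(doc)
    return kept

# Hypothetical mini-corpus for illustration only.
docs = [
    "Scaling laws relate loss to compute, data, and parameters.",
    "scaling laws   relate loss to compute, data, and parameters.",
    "click here",
    "Larger models reach the same loss with fewer training tokens.",
]
cleaned = filter_corpus(docs)
print(f"kept {len(cleaned)} of {len(docs)} documents")
```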

Is training to convergence always the best strategy?

No. For large models, training to full convergence is often inefficient. Because larger models are more sample-efficient, you can reach your target performance with far less training. Stopping early saves compute and reduces the risk of overfitting. The goal isn’t to train until loss hits zero. It’s to train until performance meets your needs.

How do I know if my model is under-scaled?

If your model’s performance is still improving significantly as you increase training compute, it’s under-scaled. A rough rule of thumb: if doubling your training budget improves accuracy by more than 5%, you’re still on the steep part of the curve and have room to scale. Once gains drop below 1-2% per doubling, you’re near saturation for that model and dataset.
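This check is easy to automate once you have accuracy from two runs at different compute budgets. The sketch below assumes the thresholds above refer to percentage points of accuracy per doubling, which is one reading of the rule of thumb. The function names and the example numbers are hypothetical.

```python
import math

def gain_per_doubling(compute_a, acc_a, compute_b, acc_b):
    """Average accuracy gain (percentage points) per doubling of compute."""
    doublings = math.log2(compute_b / compute_a)
    return (acc_b - acc_a) / doublings

def scaling_status(gain, steep=5.0, saturated=2.0):
    """Classify a run using the rule-of-thumb thresholds from the text."""
    if gain > steep:
        return "under-scaled"
    if gain < saturated:
        return "near saturation"
    return "in between"

# Hypothetical runs: accuracy in percent at two compute budgets (FLOPs).
early = gain_per_doubling(1e20, 60.0, 4e20, 72.0)   # two doublings, +12 points
late = gain_per_doubling(1e21, 80.0, 2e21, 81.5)    # one doubling, +1.5 points

print(f"early runs: {early:.1f} points per doubling -> {scaling_status(early)}")
print(f"late runs: {late:.1f} points per doubling -> {scaling_status(late)}")
```

Accuracy on a small evaluation set is noisy, so a single pair of runs can mislead. Averaging over several seeds or benchmarks before acting on the result is a sensible precaution.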

