Tag: AI performance

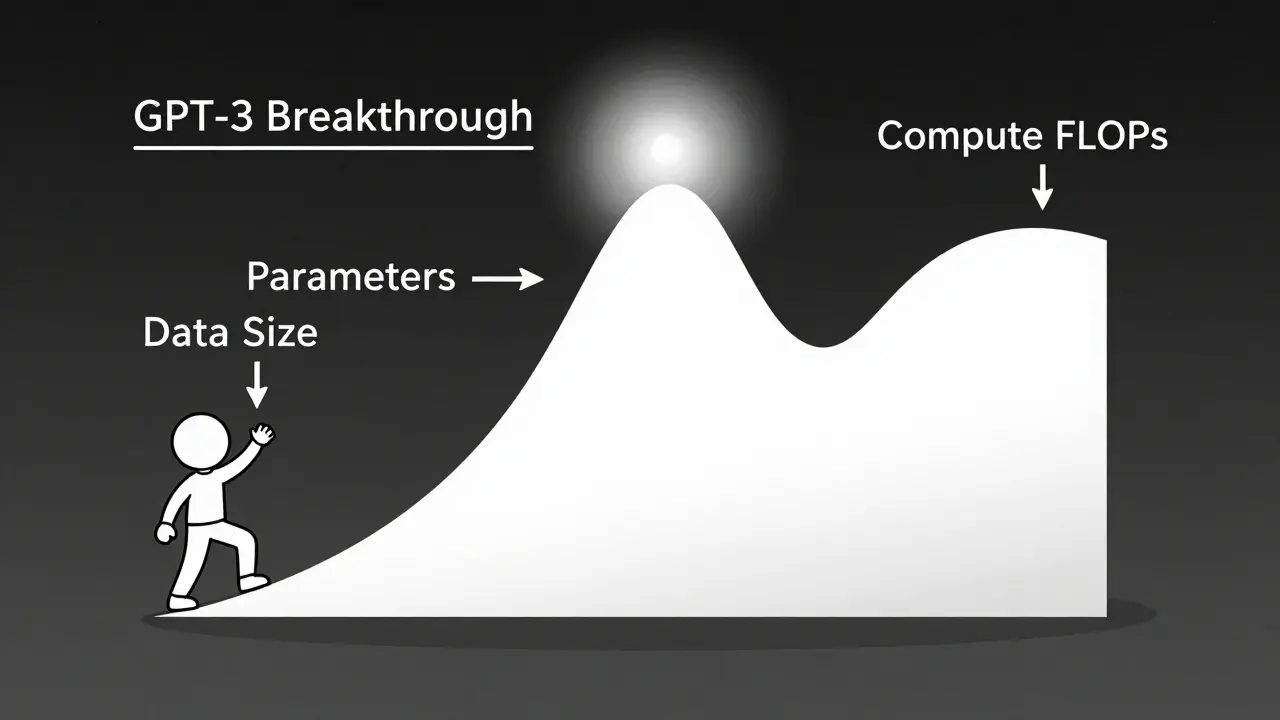

Scaling laws let you predict how much performance improves when you increase model size, data, or compute. Learn how math, not just bigger models, drives AI breakthroughs, and why efficiency now beats raw scale.

Recent posts

Key Components of Large Language Models: Embeddings, Attention, and Feedforward Networks Explained

Sep 1, 2025

Artificial Intelligence