How the Draft and Verifier System Works

To understand this, you have to realize that big models are slow because they have to run through billions of parameters for every single token. Speculative decoding breaks this cycle by using two different models working together.

First, a Draft Model, which is a much smaller, faster, and "cheaper" model, predicts a sequence of tokens. Instead of just one, it might guess the next 3 to 12 tokens. Because it's small, it does this at a fraction of the cost of a single pass through the big model.

Then comes the Verifier Model (the target LLM). Instead of generating tokens one by one, it scores the entire draft in a single forward pass and checks whether each draft token matches what it would have predicted at that position. If the whole draft is correct, the verifier accepts all of it at once. If the draft hits a wrong token, the verifier keeps the correct prefix, substitutes its own token at the point of the mistake, and the process starts over from there. The magic here is that the final output matches what the big model would have produced alone (token for token under greedy decoding, and in distribution when sampling); it's just significantly faster.

Measuring Success: The Acceptance Rate

Not every draft is a winner. The efficiency of this system relies heavily on the Acceptance Rate, often denoted as $\alpha$: the probability that the verifier model accepts a token proposed by the draft model. If your draft model is well-aligned with the target model, you'll see high acceptance rates, leading to massive speedups. For example, in structured tasks like code generation, draft models often perform better because code follows more predictable patterns. You might see an acceptance rate of 58% for Python code, while an open-ended creative writing task might drop to 32%. When the acceptance rate is too low (typically below 30%), the system can actually become slower than standard decoding. This happens because you're wasting compute on drafts that get rejected, effectively doing the work twice for nothing. Finding the right pair of models is the secret sauce to making this work in production.
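To make the link between acceptance rate and speedup concrete, here is a minimal sketch of a simplified model that is common in the speculative decoding literature. It assumes each draft token is accepted independently with probability `alpha`, which real workloads only approximate. The names `k` (draft tokens per step) and `draft_cost_ratio` (cost of one draft pass relative to one verifier pass) are illustrative parameters, not values taken from this article.

```python
def expected_tokens_per_step(alpha: float, k: int) -> float:
    """Expected tokens emitted per verifier pass.

    Assumes each of the k draft tokens is accepted independently with
    probability alpha. The verifier always contributes one token of its
    own (a correction or a bonus token), so the minimum is 1.
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be between 0 and 1")
    if alpha == 1.0:
        return float(k + 1)
    # Geometric series: 1 + alpha + alpha^2 + ... + alpha^k
    return (1.0 - alpha ** (k + 1)) / (1.0 - alpha)


def estimated_speedup(alpha: float, k: int, draft_cost_ratio: float) -> float:
    """Rough speedup over plain decoding under the same assumptions.

    One speculative step costs k draft passes plus one verifier pass,
    measured in units of a single verifier pass.
    """
    step_cost = k * draft_cost_ratio + 1.0
    return expected_tokens_per_step(alpha, k) / step_cost


if __name__ == "__main__":
    # draft_cost_ratio=0.1 is a made-up value for illustration only.
    for alpha in (0.30, 0.58):
        for k in (2, 5, 8):
            speedup = estimated_speedup(alpha, k, draft_cost_ratio=0.1)
            print(f"alpha={alpha:.2f} k={k} estimated speedup={speedup:.2f}x")
```

Under these assumptions, a low `alpha` combined with a long draft pushes the estimate below 1x, which is the "slower than standard decoding" regime described above. The exact break-even point depends on `k` and on how cheap the draft model really is.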

Different Flavors of Speculative Decoding

As the tech has evolved since 2022, we've moved beyond just pairing two separate models. Depending on your hardware and memory, you might choose a different approach.| Method | Mechanism | Typical Speedup | Main Advantage | Trade-off |

|---|---|---|---|---|

| Standard Draft-Target | Two separate models (e.g., T5-small $\rightarrow$ T5-XXL) | ~3x | High peak speed | Higher memory footprint |

| Self-Speculative | Layer-skipping within one model | ~1.99x | No extra memory needed | Slower than dual-model |

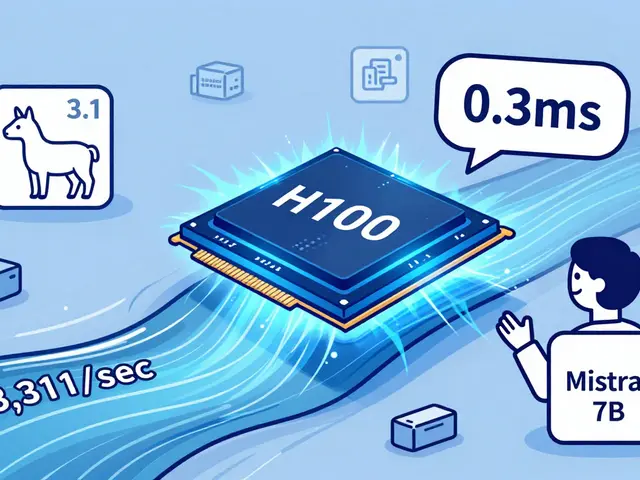

| Speculative Speculative (SSD) | Asynchronous hardware parallelization | Up to 5x | Extreme low latency | Requires separate GPUs |

Practical Implementation and Pitfalls

Setting this up isn't as simple as flipping a switch. Most developers use engines like vLLM or Text Generation Inference (TGI) to handle the heavy lifting. One of the biggest headaches is tuning the value of K (the number of draft tokens per step). You might think "more is better," but NVIDIA's research shows diminishing returns after K=8. If you set K too high, you spend too much time drafting tokens that are likely to be rejected, which drags down your overall throughput.

Another common trap is distribution drift. If your draft model was trained on a different dataset than your target model, the "agreement" between them tends to drop over time. This is why some newer frameworks, like the Draft, Verify, and Improve (DVI) system, use online learning to let the verifier "teach" the drafter in real time, keeping them in sync.

Real-World Impact on AI Costs

This isn't just a theoretical win; it's changing the economics of AI. Enterprise adoption is huge because faster inference means fewer GPU hours. AWS reported that customers using speculative decoding in Bedrock saw inference costs drop by 63%. For a real-time chat interface, a 5x speedup is the difference between a user feeling like they're talking to a human and feeling like they're waiting for a webpage to load. By reducing the time the GPU spends idling while waiting for the next token, companies can serve more users with the same amount of hardware. According to industry data, about 78% of enterprise LLM frameworks now include some form of speculative decoding in their stack.

Does speculative decoding change the quality of the AI's answer?

No. Because the verifier model (the big LLM) checks every single token proposed by the draft model, the final output matches what the big model would have generated on its own: token for token under greedy decoding, and with the same output distribution when sampling. There is no quality loss.

What happens if the draft model is completely wrong?

If the verifier rejects the very first token proposed by the drafter, the system simply reverts to standard autoregressive decoding for that step. You lose a small amount of time on the failed draft, but the accuracy remains perfect.
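The behaviour in this answer can be illustrated with a toy sketch. It covers the greedy case only (sampling needs a probabilistic accept/reject rule that is not shown here), and it is not how any particular engine implements it. The "models" are hypothetical stand-in functions that map a token list to the next token, and the verifier is called once per position for readability, whereas a real system scores the whole draft in one forward pass.

```python
from typing import Callable, List, Sequence

NextToken = Callable[[Sequence[int]], int]


def greedy_decode(target: NextToken, prompt: Sequence[int], max_new_tokens: int) -> List[int]:
    """Plain autoregressive decoding: one target call per generated token."""
    tokens = list(prompt)
    for _ in range(max_new_tokens):
        tokens.append(target(tokens))
    return tokens


def speculative_greedy_decode(target: NextToken, draft: NextToken,
                              prompt: Sequence[int], max_new_tokens: int,
                              k: int = 4) -> List[int]:
    """Draft k tokens, keep the longest prefix the target agrees with."""
    tokens = list(prompt)
    goal = len(tokens) + max_new_tokens
    while len(tokens) < goal:
        # 1. The draft model proposes k tokens autoregressively.
        proposal: List[int] = []
        for _ in range(k):
            proposal.append(draft(tokens + proposal))

        # 2. The target checks each proposed position in order.
        accepted: List[int] = []
        for guess in proposal:
            correct = target(tokens + accepted)
            if guess != correct:
                accepted.append(correct)  # fix the first mistake, drop the rest
                break
            accepted.append(guess)
        else:
            # Every draft token matched, so the target adds one bonus token.
            accepted.append(target(tokens + accepted))

        tokens.extend(accepted)
    return tokens[:goal]


# Hypothetical toy models over a 7-token vocabulary.
def toy_target(tokens: Sequence[int]) -> int:
    return (tokens[-1] * 3 + len(tokens)) % 7


def toy_draft(tokens: Sequence[int]) -> int:
    # Agrees with the target except when the context length is a multiple of 3.
    guess = toy_target(tokens)
    return guess if len(tokens) % 3 else (guess + 1) % 7


def always_wrong_draft(tokens: Sequence[int]) -> int:
    return (toy_target(tokens) + 1) % 7


if __name__ == "__main__":
    reference = greedy_decode(toy_target, [1], 20)
    print(speculative_greedy_decode(toy_target, toy_draft, [1], 20) == reference)
    print(speculative_greedy_decode(toy_target, always_wrong_draft, [1], 20) == reference)
```

With `always_wrong_draft`, every step rejects the first proposed token and emits only the target's own token, so the loop degrades to ordinary one-token-at-a-time decoding while the output stays the same.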

Which GPU is best for this technique?

NVIDIA Ampere architecture or newer is generally recommended. For high-end implementations like SSD (Saguaro), having multiple GPUs is necessary to run the draft and verifier models in parallel across different devices.

Why is code generation faster than creative writing with this method?

Code is more structured and predictable. There are fewer ways to write a standard "for loop" in Python than there are ways to describe a sunset in a poem. This makes it much easier for the small draft model to guess correctly, leading to a higher acceptance rate.

Can I use this with any LLM?

Yes, but you need a compatible draft model. You can either use a separate smaller model (like TinyLlama for CodeLlama) or use self-speculative decoding, which works with the model you already have by skipping layers.

Artificial Intelligence


Rahul U.

April 27, 2026 AT 02:16

This is a really fascinating breakdown of the draft-verifier mechanism! 🚀 The part about the acceptance rate being higher for structured code compared to creative writing makes a lot of sense given the predictability of syntax. 💻

Michael Jones

April 28, 2026 AT 13:42

crazy how we can just skip layers now to get speedups without needing extra vram... the efficiency gains here are just wild and it shows where the industry is heading

allison berroteran

April 29, 2026 AT 19:26

It is truly remarkable to consider how this architectural approach mimics the human cognitive process of drafting and refining ideas, and while the technical overhead of managing two separate models might seem daunting at first glance, the prospect of reducing GPU idling time suggests a more sustainable path for scaling these massive systems as we move toward more complex agentic workflows that require rapid iterative cycles of thought and verification.

I find the idea of the verifier "teaching" the drafter through online learning to be the most poetic part of this entire system because it transforms a static optimization into a dynamic evolutionary process where the smaller model constantly strives to mirror the intuition of the larger one, potentially leading to a future where draft models are so efficient that the heavy lifting of the verifier is only needed for the most nuanced edge cases.

Gabby Love

April 30, 2026 AT 17:58

vLLM handles this pretty well out of the box.

Jen Kay

May 1, 2026 AT 05:34

Oh, wonderful. Another way for companies to squeeze more profit out of their hardware while pretending they're doing us a favor by making the chat bubble move slightly faster. 🙄