Tag: draft model

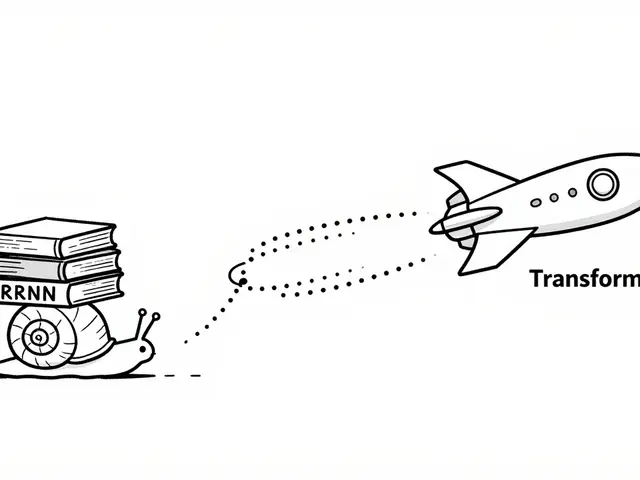

Learn how speculative decoding uses draft and verifier models to accelerate LLM inference by up to 5x without losing output quality. A deep dive into VRAM and latency.

Recent-posts

Retrieval-Augmented Generation for Generative AI: Grounding Outputs in Verified Sources

Mar, 28 2026

Artificial Intelligence
