The core problem is that traditional retrieval prioritizes semantic similarity. While that sounds great, it often leads to high redundancy. In fact, Gartner's 2025 analysis shows that 63% of current implementations suffer from 40-60% content redundancy in their top results. To fix this, we need source selection policies that balance relevance (how well a document matches the query) with diversity (how much new information a document adds compared to others already selected).
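To make "redundancy in the top results" concrete, here is a minimal sketch of one way to measure it. The metric itself is an assumption, not the definition behind the figures above: a result counts as redundant when its cosine similarity to any higher-ranked result reaches a threshold. The function name `redundancy_rate` and the 0.9 threshold are illustrative choices.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def redundancy_rate(result_vecs, threshold=0.9):
    """Fraction of results that are near-duplicates of a higher-ranked result.

    The threshold is an assumed cut-off; tune it for your embedding model.
    """
    if not result_vecs:
        return 0.0
    redundant = 0
    for i, vec in enumerate(result_vecs):
        # Compare each result only against the results ranked above it.
        if any(cosine(vec, earlier) >= threshold for earlier in result_vecs[:i]):
            redundant += 1
    return redundant / len(result_vecs)
```

Running this over the embeddings of your current top-k results gives a baseline you can compare against after introducing a diversity-aware policy.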

The High Cost of Being Too Relevant

When a system only looks for the closest match, it creates blind spots. In high-stakes fields, these blind spots can be dangerous. For instance, IBM Watson, an AI platform designed for data analysis and cognitive computing, demonstrated that incorporating diverse clinical studies (some of which represented only 7% of the available literature) produced a 19% jump in diagnostic accuracy. Those rare patterns had been hidden under the "more relevant" popular papers.

This isn't just about medical errors; it's about trust. Professionals are starting to realize that a single "authoritative" answer is often less useful than a nuanced one. Research by Amit Kothari in 2025 found that 78% of professionals actually prefer a slightly slower response if it comes with transparent attribution from multiple, diverse sources. They don't want a magic answer; they want to see the evidence and the conflicts.

Technical Strategies for Better Selection

How do we actually stop the AI from just picking the five most similar documents? There are a few proven ways to handle this, depending on how much computing power you have and how much accuracy you need.

Maximal Marginal Relevance (MMR), an iterative scoring mechanism that rewards unique contributions while penalizing redundancy, is currently the industry standard. It uses a lambda ($\lambda$) parameter to tune the balance. Set lambda closer to 1 and you get pure relevance; set it closer to 0 and you get maximum diversity. For most businesses, the sweet spot is usually between 0.55 and 0.65.
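The iterative scoring described above can be sketched in a few lines of plain Python. This is a minimal illustration, not a production implementation: it assumes documents and the query are already embedded as lists of floats, uses cosine similarity for both relevance and redundancy, and the names `cosine` and `mmr_select` are made up for this example.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def mmr_select(query_vec, doc_vecs, k, lam=0.6):
    """Return the indices of up to k documents chosen greedily by MMR.

    lam=1.0 ranks by relevance only; lower values penalize similarity
    to documents that have already been selected.
    """
    relevance = [cosine(query_vec, d) for d in doc_vecs]
    selected = []
    candidates = list(range(len(doc_vecs)))
    while candidates and len(selected) < k:
        def score(i):
            # Redundancy is the similarity to the closest already-selected doc.
            redundancy = max(
                (cosine(doc_vecs[i], doc_vecs[j]) for j in selected),
                default=0.0,
            )
            return lam * relevance[i] - (1 - lam) * redundancy
        best = max(candidates, key=score)
        selected.append(best)
        candidates.remove(best)
    return selected
```

Each pass rescans every remaining candidate against everything selected so far, which is why MMR is usually applied to a shortlist of top candidates rather than to the whole index.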

Then there is Farthest Point Sampling (FPS), a geometric optimization technique that selects points in a vector space so as to maximize the distance between them. It's great for ensuring you cover the widest possible range of data, but it's a resource hog, often requiring 30-40% more computational power than MMR.
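For comparison, a greedy version of FPS might look like the sketch below. It assumes Euclidean distance over embedding vectors and an arbitrary starting point (in a retrieval setting you would probably start from the most relevant document and run it over a pre-filtered candidate pool); `farthest_point_sampling` is a hypothetical helper name.

```python
import math

def farthest_point_sampling(points, k, start=0):
    """Greedily pick up to k indices, each as far as possible from those chosen."""
    if not points or k <= 0:
        return []
    selected = [start]
    chosen = {start}
    # min_dist[i] is the distance from point i to its nearest selected point.
    min_dist = [math.dist(p, points[start]) for p in points]
    while len(selected) < min(k, len(points)):
        remaining = (i for i in range(len(points)) if i not in chosen)
        nxt = max(remaining, key=lambda i: min_dist[i])
        selected.append(nxt)
        chosen.add(nxt)
        # Refresh nearest-selected distances with the newly added point.
        for i, p in enumerate(points):
            min_dist[i] = min(min_dist[i], math.dist(p, points[nxt]))
    return selected
```

Note that nothing in this loop looks at the query, which is the main difference from MMR: FPS optimizes coverage only, so relevance has to be enforced by whatever builds the candidate pool.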

For those with deeper pockets and more complex needs, multi-objective optimization uses Pareto efficiency to ensure that improving diversity doesn't tank the relevance. According to an Atolio 2025 survey, this approach leads to 31% higher user satisfaction, though it requires up to 3.7x more processing power.
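The Pareto idea can be illustrated with a simple dominance filter. This sketch covers only that one step, not a full multi-objective optimizer, and it assumes each candidate already carries a `relevance` score and a `novelty` score (for example, one minus its highest similarity to other candidates). Both field names are assumptions for this example.

```python
def pareto_front(candidates):
    """Return the candidates that no other candidate dominates.

    A candidate dominates another when it is at least as good on both
    relevance and novelty and strictly better on at least one of them.
    """
    def dominates(a, b):
        return (
            a["relevance"] >= b["relevance"]
            and a["novelty"] >= b["novelty"]
            and (a["relevance"] > b["relevance"] or a["novelty"] > b["novelty"])
        )
    return [c for c in candidates if not any(dominates(o, c) for o in candidates)]

candidates = [
    {"id": "A", "relevance": 0.9, "novelty": 0.2},
    {"id": "B", "relevance": 0.7, "novelty": 0.6},
    {"id": "C", "relevance": 0.6, "novelty": 0.5},  # dominated by B
    {"id": "D", "relevance": 0.4, "novelty": 0.9},
]
front = pareto_front(candidates)
```

Anything left on the front can only gain diversity by giving up some relevance, or the reverse. The pairwise comparison is quadratic in the number of candidates, which hints at where the extra processing cost comes from.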

| Method | Primary Goal | Computational Cost | Typical Latency Impact | Best Use Case |
|---|---|---|---|---|
| Relevance-Only | Highest Similarity | Low | Baseline | Simple FAQs, Low-risk tasks |
| MMR | Balanced Diversity | Medium | +200-400ms | Enterprise Knowledge Bases |
| FPS | Maximum Coverage | High | +500-800ms | Exploratory Research |
| Multi-Objective | Pareto Efficiency | Very High | +800-1200ms | Legal/Medical Decision Support |

Real-World Impact Across Industries

The shift toward balanced selection isn't just theoretical; it's changing how specific industries operate. In the legal world, using diverse source selection has led to a 34% improvement in identifying relevant precedent cases from minority jurisdictions. In finance, research from MIT's Dr. Marcus Reynolds showed that balanced RAG systems reduced bias in forecasting by 37%.

But there's a catch. If you push diversity too far, you risk "diluting" the answer. Dr. Elena Rodriguez from Stanford AI Lab warned that in emergency medicine, over-emphasizing diversity can introduce distracting, marginally relevant information that slows down critical decision-making. This is why the weighting matters: healthcare usually needs a higher relevance weight (0.60-0.70), while creative brainstorming tools can afford to go lower (0.45-0.55).

Overcoming Implementation Hurdles

If you're an engineer, you know the hardest part isn't the algorithm; it's the plumbing. Gartner's 2025 report notes that 68% of failed implementations are due to authentication, permissions management, and mismatched data formats. You can't just "plug in" MMR if your data is scattered across a legacy SQL database, a modern vector store, and 5,000 PDF files with different access levels.

The most successful teams follow a "crawl-walk-run" approach. Instead of trying to integrate every possible data source at once, start with two or three. Nail the attribution (telling the user where the info came from) and the conflict handling first. Teams that did this saw an 82% success rate, compared to just 37% for those who tried to boil the ocean on day one.

When sources conflict (and they will), don't try to make the AI "pick a winner." The best practice, according to Kothari's 2025 case studies, is to present both perspectives. For example, if a policy document says one thing and a recent Slack thread says another, the AI should state: "The official policy is X, but recent internal discussions suggest Y." This transparency is what actually builds user trust.
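One way to wire this into a pipeline is to group extracted claims by source before the answer is written. The sketch below is deliberately naive: it assumes an upstream step has already reduced each source to a normalized claim string, so that two sources conflict exactly when their strings differ. `SourcedClaim` and `surface_claims` are hypothetical names.

```python
from dataclasses import dataclass

@dataclass
class SourcedClaim:
    source: str  # where the statement came from, e.g. "Official policy"
    claim: str   # normalized statement extracted from that source

def surface_claims(claims):
    """Return one attributed answer, or every position when sources disagree."""
    by_claim = {}
    for c in claims:
        # Group sources under the claim they support, keeping first-seen order.
        by_claim.setdefault(c.claim, []).append(c.source)
    if len(by_claim) == 1:
        claim, sources = next(iter(by_claim.items()))
        return f"{claim} (sources: {', '.join(sources)})"
    parts = [f"{', '.join(srcs)}: {claim}" for claim, srcs in by_claim.items()]
    return "Sources disagree. " + " | ".join(parts)
```

In practice the hard part is the claim normalization that this sketch assumes away; the point here is only that the output keeps both positions and their sources instead of collapsing them.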

Looking Ahead: The Future of Retrieval

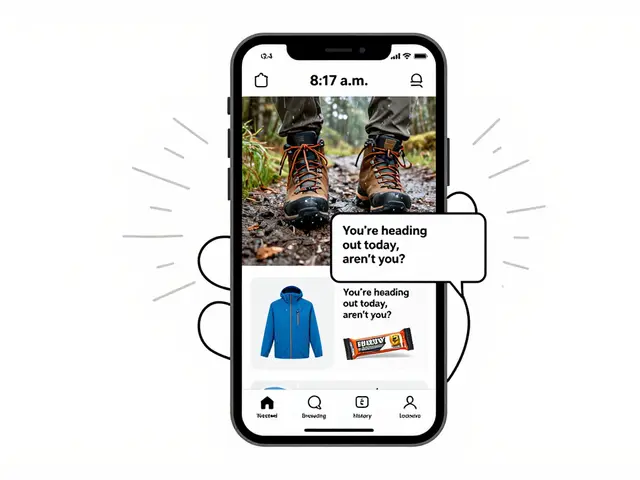

We are moving away from static retrieval. New updates, like those in Gemini Enterprise, Google's AI-powered productivity suite for businesses, use dynamic thresholding. This means the system adjusts how much it cares about diversity in real time based on how ambiguous the user's query is. If the query is vague, the system casts a wider, more diverse net. If it's specific, it tightens the focus on relevance.
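As a rough illustration of the idea (and not a description of how any particular product implements it), the sketch below derives a lambda from how "flat" the top relevance scores are. The assumption is that a vague query produces many similarly scored candidates, while a specific query produces one clear winner. The heuristic, the function name `adaptive_lambda`, and the default bounds (borrowed from the 0.45-0.70 span of ranges mentioned in this article) are all illustrative.

```python
def adaptive_lambda(scores, lam_min=0.45, lam_max=0.70):
    """Map score flatness (a stand-in for query ambiguity) to an MMR lambda."""
    if len(scores) < 2:
        return lam_max  # nothing to diversify against
    ranked = sorted(scores, reverse=True)
    top = ranked[0]
    if top <= 0:
        return lam_min  # no confident match at all, so cast a wide net
    rest_mean = sum(ranked[1:]) / (len(ranked) - 1)
    # margin near 1: one clear winner; margin near 0: all candidates look alike.
    margin = max(0.0, min(1.0, (top - rest_mean) / top))
    ambiguity = 1.0 - margin
    return lam_max - ambiguity * (lam_max - lam_min)
```

A flat score list pushes lambda toward `lam_min` (more diversity), and a sharply peaked list pushes it toward `lam_max` (more relevance).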

The next frontier is "causal diversity." Instead of just looking for different words or documents, systems will look for different reasons why something happened. This will move RAG from being a sophisticated search engine to a genuine reasoning tool.

What is the ideal lambda value for MMR in a business setting?

For most general enterprise applications, a lambda ($\lambda$) between 0.55 and 0.65 is recommended. This provides a solid balance between accuracy and variety. However, if you are in a high-stakes field like healthcare, aim for 0.60-0.70 to prioritize correctness. For creative or brainstorming tasks, 0.45-0.55 is better to encourage a wider range of ideas.

Does adding diversity to RAG slow down the response time?

Yes, balanced selection policies introduce additional processing. MMR and similar techniques typically add 200-400ms to the latency. While this sounds significant, data shows that most professional users accept this delay if the output provides transparent attribution and a more comprehensive set of sources.

How do I handle conflicting information from different sources?

The most effective approach is not to resolve the conflict automatically, but to surface it. Use transparent attribution to show the user that Source A says one thing while Source B says another. This empowers the human user to make the final decision and increases the perceived trustworthiness of the AI.

What are the biggest barriers to implementing these policies?

Integration complexity is the primary hurdle. Managing permissions across disparate systems and handling various data formats account for roughly 68% of implementation failures. To mitigate this, focus on a small set of sources first and utilize federated authentication to manage access across different platforms.

Is MMR better than cosine similarity?

Cosine similarity is great for finding the most similar item, but it often results in a list of results that are nearly identical. MMR is superior for RAG because it filters out that redundancy, increasing the coverage of distinct phrases and words (by roughly 10-11 percentage points according to ACM 2024 studies) while maintaining nearly the same level of semantic accuracy.

Next Steps and Troubleshooting

If you're just starting, don't jump straight into complex multi-objective optimization. Start by implementing MMR via your vector database (like Azure AI Search) and test it with a lambda of 0.6. If you notice the answers are becoming too "random" or irrelevant, nudge the lambda up. If the results still feel repetitive, bring it down.

For those struggling with latency, consider a hybrid approach: use basic relevance for the first 2-3 results and MMR for the remaining 3-5. This gives the user the "best" answer immediately while still providing the diverse context they need for a complete picture. If integration is the main bottleneck, look into the RAG Interoperability Framework (RIF) 1.2 to help standardize how your sources talk to each other.
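The hybrid approach could be sketched as follows. This is one possible reading of it, under stated assumptions: the first few slots are filled by plain relevance ranking, and the remaining slots by MMR scored against everything already chosen, including those first picks. The names `hybrid_select`, `n_relevant`, and `n_diverse` are hypothetical.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def hybrid_select(query_vec, doc_vecs, n_relevant=2, n_diverse=3, lam=0.6):
    """Pick n_relevant docs by relevance, then up to n_diverse more by MMR."""
    relevance = [cosine(query_vec, d) for d in doc_vecs]
    ranked = sorted(range(len(doc_vecs)), key=lambda i: relevance[i], reverse=True)
    selected = ranked[:n_relevant]   # cheap: no pairwise comparisons needed
    remaining = ranked[n_relevant:]
    while remaining and len(selected) < n_relevant + n_diverse:
        def score(i):
            # Penalize similarity to anything already in the result list.
            redundancy = max(
                (cosine(doc_vecs[i], doc_vecs[j]) for j in selected),
                default=0.0,
            )
            return lam * relevance[i] - (1 - lam) * redundancy
        best = max(remaining, key=score)
        selected.append(best)
        remaining.remove(best)
    return selected
```

Because the first slots need no redundancy computation, they can be returned to the user before the MMR pass finishes.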

Artificial Intelligence

Kirk Doherty

April 21, 2026 AT 04:12

just another way to say we need better data cleaning

Cynthia Lamont

April 22, 2026 AT 13:13

Imagine thinking a 200ms delay is acceptable in a real-time environment! ABSOLUTELY RIDICULOUS. This whole approach is just a band-aid for poor indexing!

Also, the phrasing in the second paragraph is amateur at best.

Aimee Quenneville

April 22, 2026 AT 20:08

oh wowww... so basically we just turn a dial until it stops lying to us??? revolutionaryyyyy!!!!

Meghan O'Connor

April 24, 2026 AT 13:26

The reliance on Gartner's 2025 analysis is laughably lazy. Anyone with a basic understanding of vector spaces knows that MMR is a primitive heuristic.

The author fails to mention the impact of query expansion on diversity, and the lack of a formal proof for the 0.55-0.65 lambda range is an embarrassment. It is simply pathetic to present these "sweet spots" as gospel when they vary wildly based on the embedding model used. If you are using a dense retriever with high dimensionality, your lambda requirements change completely. This whole piece reads like a marketing brochure for Azure AI Search rather than a technical deep dive. I have seen better analysis in a freshman CS textbook. The mention of Pareto efficiency is a superficial addition to make the text seem more academic than it actually is. Honestly, just stop pretending this is a breakthrough in RAG strategy when it's just basic sampling. The author clearly doesn't understand the trade-off between precision and recall at scale. This is just a shallow attempt to summarize a complex topic. I cannot believe people actually find this "insightful". It's an insult to the field of information retrieval.

Dmitriy Fedoseff

April 25, 2026 AT 03:22

The obsession with efficiency over truth is a sickness of the modern age. We sacrifice the nuance of a thousand minority voices just to save a few milliseconds of compute time. It is a moral failure to prioritize "relevance" over "comprehensive truth" in any system that affects human lives. This is not just a technical hurdle, it is a philosophical crisis!

Patrick Tiernan

April 26, 2026 AT 20:55

mmr is basically just a fancy way of saying dont repeat yourself lol

Morgan ODonnell

April 27, 2026 AT 12:06

I think it's actually really helpful to see the different options laid out like that. It's nice that the author mentions the human side of trust too.

Tyler Springall

April 29, 2026 AT 04:54

The sheer audacity of suggesting a "crawl-walk-run" approach to anyone with a real budget. Some of us operate at a scale where "starting with two or three sources" is a joke. This is textbook middle-management advice masquerading as engineering wisdom.

Liam Hesmondhalgh

April 29, 2026 AT 18:43

The grammar in the table is abysmal. "Best Use Case" is a fragment, not a proper header.

And don't get me started on the lack of consistent capitalization throughout the technical sections. It's an absolute disgrace!

Patrick Bass

April 29, 2026 AT 23:12

I agree that the diversity-relevance trade-off is a key point here.