Tag: LoRA

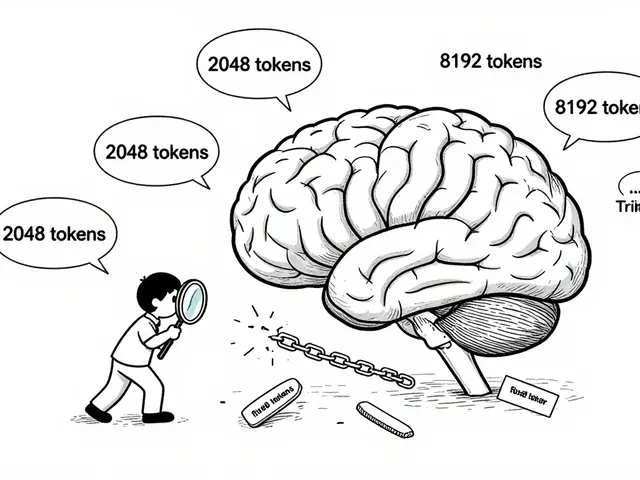

Explore proven techniques to prevent catastrophic forgetting in LLM fine-tuning. We analyze LoRA, EWC, FIP, and hybrid methods to help you preserve model knowledge.

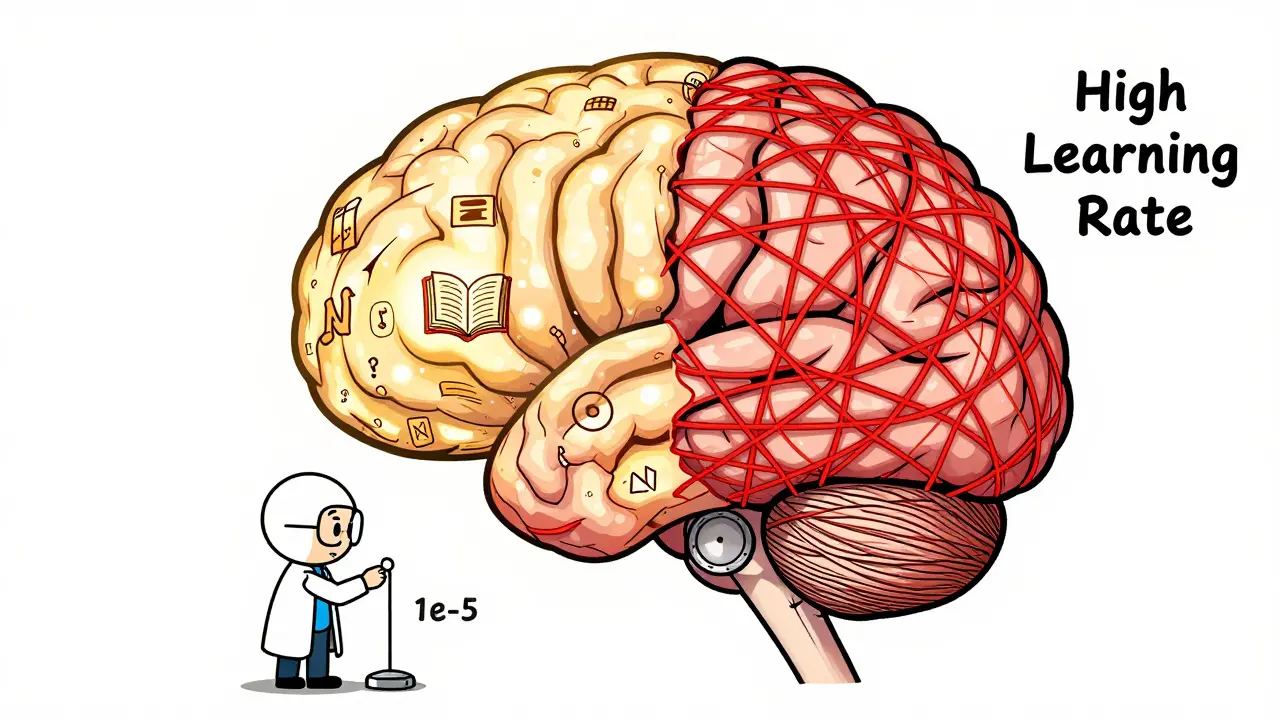

Learn how to fine-tune large language models without losing their original knowledge. Discover the best hyperparameters, methods like LoRA and FAPM, and the real-world trade-offs involved in keeping models accurate and reliable.

Few-shot fine-tuning lets you adapt large language models with as few as 50 examples, making AI usable in data-scarce fields like healthcare and law. Learn how LoRA and QLoRA make this possible, even on a single GPU.

Artificial Intelligence