Imagine turning a vague product idea into a working MVP in three days instead of three weeks. That's the promise of vibe coding, a high-level approach to software development in which generative AI handles end-to-end coding work through natural-language commands. Unlike the early days of autocomplete, this isn't just about finishing a line of code; it's about describing a "vibe" or a functional goal and letting an agent build the architecture, write the files, and debug the errors. But here is the catch: in a corporate environment, "vibes" alone lead to chaos. If you treat this like a magic wand, you'll end up with a codebase that works on Tuesday and collapses on Wednesday.

| Feature | AI Assistants (e.g., Copilot) | Vibe Coding (e.g., Cursor, Claude Code) |
|---|---|---|
| Scope | Line-by-line or function level | Multi-file, project-wide changes |
| Autonomy | Reactive (suggests code) | Proactive (plans, executes, tests) |
| Developer Role | Writer/Editor | Orchestrator/Reviewer |
| Speed to MVP | Incremental improvement | Exponential acceleration |

Stop Thinking About Syntax, Start Thinking About Clarity

The biggest shock for enterprise teams is the shift in where the actual work happens. In traditional development, you might spend something like 70% of your time wrestling with syntax and 30% on design. With vibe coding, that ratio flips. When you use tools like Cursor or Windsurf, the AI behaves like a highly capable junior developer. And just like a junior, if you give it a vague instruction, it will guess. In a large-scale project, a wrong guess on a data model can derail an entire sprint.

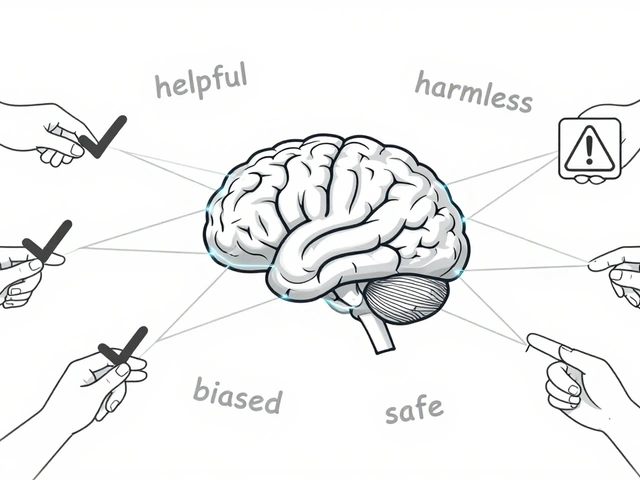

To avoid this, you have to treat software specifications as the new primary deliverable. You aren't writing code; you are designing clarity. If the AI doesn't have a crystal-clear picture of the business logic, the boundary conditions, and the naming conventions, it will hallucinate a structure that might look right but fails under enterprise load. The goal is to move from "one-shot prompting" to a real-time feedback loop of explaining, reviewing, and re-drafting.

The PLAN.md Strategy: Your Anchor for Shared Context

One of the most common failures in enterprise vibe coding is "demo drift," where a developer shows a feature that looks great but doesn't actually meet the business requirement. This happens because the AI loses context as the conversation grows. The fix is simple but rigorous: use a PLAN.md file.

Instead of relying on the AI's memory, keep a living document at the root of your repository. This file should act as the source of truth for the agent, containing:

- Naming Conventions: Ensures the AI doesn't mix camelCase and snake_case across files.

- Feature Order: A prioritized list of what to build first to avoid circular dependencies.

- Boundaries: Explicit instructions on what the agent should not touch.

- Design Notes: Specific architectural patterns (e.g., "use a Repository pattern for database access").

By referencing this file in every session, you ensure that the agent restores previous decisions rather than inventing new ones every time you restart the IDE.
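One way to keep the plan honest is to check, before each session, that PLAN.md still contains the four sections listed above. The following is a minimal sketch, not a standard tool: the function name `missing_sections`, the exact section titles, and the sample plan text are all assumptions made for illustration.

```python
from __future__ import annotations

import re

# Hypothetical section titles, taken from the four items listed in the article.
REQUIRED_SECTIONS = ("Naming Conventions", "Feature Order", "Boundaries", "Design Notes")


def missing_sections(plan_text: str, required=REQUIRED_SECTIONS) -> list[str]:
    """Return the required titles that do not appear as a markdown heading."""
    headings = {
        match.group(1).strip().lower()
        for match in re.finditer(r"^#{1,6}[ \t]+(.+?)[ \t]*$", plan_text, flags=re.MULTILINE)
    }
    return [title for title in required if title.lower() not in headings]


# Illustrative plan content only; a real PLAN.md would be read from the repo root.
SAMPLE_PLAN = """\
# PLAN.md

## Naming Conventions
- Python modules and functions: snake_case

## Feature Order
1. Authentication
2. Billing

## Design Notes
- Use a Repository pattern for database access
"""

if __name__ == "__main__":
    # The sample deliberately omits the Boundaries section.
    print(missing_sections(SAMPLE_PLAN))
```

A check like this could run as a pre-session script or in CI, so an incomplete plan is caught before the agent starts inventing its own conventions.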

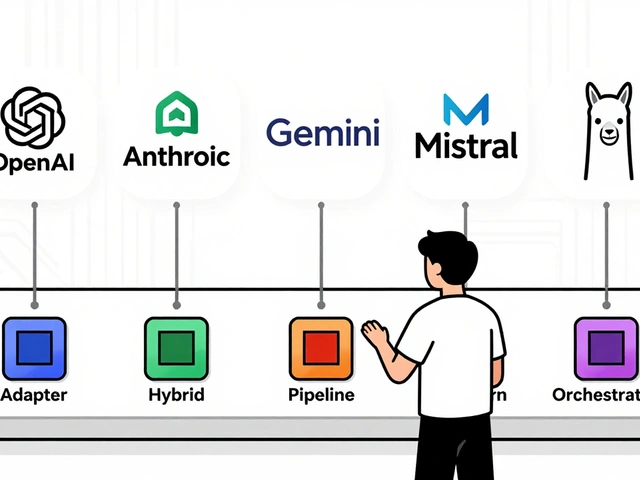

Orchestrating the Agentic Workflow

In a professional setting, you can't just let an AI commit directly to the main branch. You need an orchestration layer. Modern tools like Cascade offer different modes: Chat for brainstorming and Code for autonomous execution. The real power comes from using Agent Skills, which allow teams to package reusable workflows like automated code review checklists or specific debugging procedures.

A realistic enterprise setup looks like this: a senior engineer acts as the "Pilot," setting the direction and enforcing constraints, while the AI agents handle the scaffolding, refactoring, and boilerplate. For example, a data team can now turn a read-only dashboard into a full operational app that writes back to a database in a matter of days, provided the senior lead maintains strict governance over the database schema and access controls.
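Governance over the schema and access controls can be partly mechanized by comparing the files an agent changed against a list of protected paths. This is a hedged sketch: the glob patterns and the function name `boundary_violations` are hypothetical, and in practice the list of changed paths would come from your version control system.

```python
from fnmatch import fnmatchcase

# Hypothetical protected paths; a real list would mirror the team's own boundaries.
PROTECTED_GLOBS = ("db/schema/*", "infra/iam/*", "*.env")


def boundary_violations(changed_paths, protected=PROTECTED_GLOBS):
    """Return the changed paths that match any protected glob pattern."""
    return [
        path
        for path in changed_paths
        if any(fnmatchcase(path, pattern) for pattern in protected)
    ]


if __name__ == "__main__":
    # Example file list an agent might have touched while adding write-back.
    changed = ["app/dashboard/views.py", "db/schema/orders.sql"]
    for path in boundary_violations(changed):
        print(f"needs senior review: {path}")
```

Note that `fnmatchcase` lets `*` match path separators too, so `db/schema/*` also covers nested directories. A non-empty result does not have to block the change; it can simply route the pull request to the senior lead.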

Managing the Risks of Hyper-Speed Development

When you can build a feature in an hour that used to take a week, the bottleneck shifts from production to validation. There is a dangerous temptation to relax code reviews because "the AI wrote it and it works." This is a recipe for technical debt. You must "review like a boss." Every AI-generated pull request requires a human sign-off on security, scalability, and compliance.
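The sign-off rule can be expressed as a simple merge gate. The sketch below is illustrative only: the `PullRequest` class, its fields, and the reviewer names are assumptions, not the API of any real code-hosting platform.

```python
from dataclasses import dataclass, field

# The three review areas named in the article.
REQUIRED_REVIEWS = ("security", "scalability", "compliance")


@dataclass
class PullRequest:
    """Hypothetical, minimal stand-in for a pull request record."""
    title: str
    ai_generated: bool
    signoffs: dict = field(default_factory=dict)  # review area -> reviewer name


def blocking_reviews(pr: PullRequest) -> list:
    """Return the review areas still lacking a human sign-off."""
    if not pr.ai_generated:
        return []  # this sketch only gates AI-generated changes
    return [area for area in REQUIRED_REVIEWS if not pr.signoffs.get(area)]


if __name__ == "__main__":
    pr = PullRequest("Add write-back to dashboard", ai_generated=True,
                     signoffs={"security": "alice"})
    print(blocking_reviews(pr))
```

The merge is allowed only when the returned list is empty, which keeps "the AI wrote it and it works" from becoming an approval on its own.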

Furthermore, documentation needs to increase, not decrease. While Claude Code or Jules can draft READMEs and comment code, the human must ensure these documents actually explain the why behind a decision, not just the what. Without this, you'll end up with a "black box" codebase that no human actually understands.

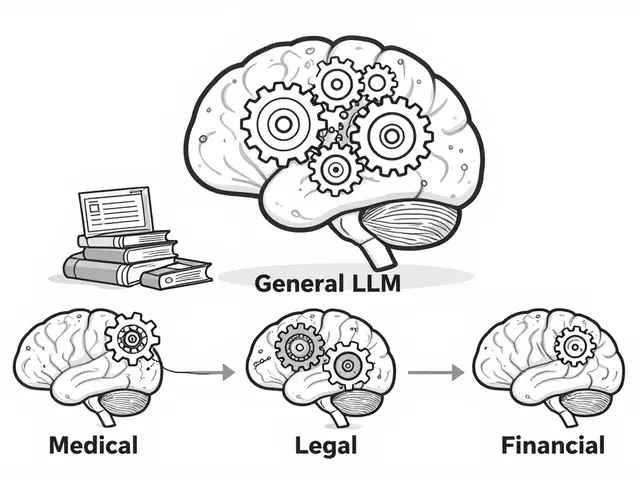

Choosing the Right Tool for the Job

Not all development tasks are suited to the "vibe" approach. Repetitive tasks, MVP prototyping, and legacy system modernization are perfect candidates. However, highly complex, bespoke business logic (the kind that requires deep domain expertise and edge-case handling) still requires manual coding.

| Tool | Best For | Key Strength |
|---|---|---|
| Cursor | General Purpose Dev | Deep IDE integration & codebase indexing |
| Claude Code | Complex Refactoring | Strong reasoning and large context windows |
| Cascade | Autonomous Projects | Background planning agents & multi-file editing |
| Agentforce Vibes | Enterprise Workflows | Rapidly turning ideas into integrated apps |

The Cultural Shift: From Coder to Architect

Ultimately, setting realistic expectations means acknowledging that the role of the developer is changing. Experienced engineers are moving toward low-code and agentic tools not because they hate coding, but because they love solving problems. The badge of honor is no longer "I spent three weeks building this complex regex," but rather "I architected a system that delivers value to the customer in 48 hours." To succeed, leadership must provide buy-in at the director level; this isn't a tool for a few rogue developers to use in secret. It's a fundamental change in the rhythm of how software is built.

Will vibe coding replace senior developers?

No. If anything, it makes senior developers more valuable. AI agents act like junior developers; they need a senior engineer to set the direction, enforce architectural constraints, and own the final merge. The role shifts from writing lines of code to orchestrating agents and validating outcomes.

How do I handle security in an AI-generated codebase?

Never trust AI-generated code with security-critical paths without a manual audit. Use automated security scanners (SAST tools) and maintain a strict human-in-the-loop review process. The speed of vibe coding should be used to iterate on the UI and logic, but the security layer must be intentionally designed and verified by a human.

What is the best way to start implementing this in a large team?

Start with a small, low-risk pilot project. Establish a shared PLAN.md template and a small set of agreed-upon "Agent Skills" for code reviews. Once the team sees the speed-to-value on a single MVP, it's easier to scale the methodology to larger projects.

Does vibe coding increase technical debt?

It can if you aren't careful. Because code is generated so quickly, it's easy to ignore small inconsistencies. To prevent this, you must enforce strict commit discipline: commit often, spec harder, and use the AI to refactor and clean up the code as part of the development cycle, rather than leaving it for "later."

What happens if the AI agent makes a catastrophic mistake in the files?

This is why version control and checkpointing are critical. Tools like Cascade allow you to create checkpoints before an autonomous run. Always work in a separate feature branch and never let an AI agent push directly to production without a human reviewing the diff.
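As a rough sketch of that rule, a pre-run check can refuse to start an autonomous run on a protected branch or without a rollback point. The branch names and the function `agent_run_blockers` are hypothetical; how you detect the current branch or a checkpoint depends on your tooling.

```python
from __future__ import annotations

# Hypothetical branch names; adjust to the repository's own conventions.
PROTECTED_BRANCHES = frozenset({"main", "master", "production"})


def agent_run_blockers(branch: str, checkpoint_created: bool) -> list[str]:
    """Return reasons an autonomous run should not start; empty means go ahead."""
    blockers = []
    if branch in PROTECTED_BRANCHES:
        blockers.append(f"'{branch}' is protected; switch to a feature branch")
    if not checkpoint_created:
        blockers.append("no checkpoint or commit to roll back to")
    return blockers


if __name__ == "__main__":
    for reason in agent_run_blockers("main", checkpoint_created=False):
        print("blocked:", reason)
```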
