There is a widespread myth in the tech world that more context equals better answers. You feed a Large Language Model (LLM) thousands of tokens, expecting it to synthesize everything perfectly. Instead, you get slower responses, higher costs, and often worse accuracy. This counterintuitive reality, where increasing input length actually degrades performance, is the defining challenge of modern prompt engineering: the practice of designing inputs to maximize the effectiveness and accuracy of large language model outputs.

Research published in 2023 by Stanford University and Google AI shattered the assumption that 'more is better.' The researchers found that models like GPT-4 begin to suffer significant performance degradation at around 3,000 tokens, far below their technical maximums, which can exceed 100,000 tokens. This isn't a minor glitch; it's a fundamental limitation of how these models process information. Understanding the tradeoff between prompt length (the total number of tokens or characters in the input provided to an LLM before generation begins) and output quality is no longer optional for developers. It is essential for cost control and reliability.

The Hidden Cost of Long Contexts

Why does adding more text hurt performance? It comes down to the architecture of the transformer models themselves. LLMs use attention mechanisms to weigh the importance of each token in your prompt against every other token. As the number of tokens increases, the computational cost of attention scales quadratically. Doubling your prompt doesn't just double that work; it roughly quadruples it.
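A quick count shows what quadratic scaling means in practice. The sketch below is a simplified model: it counts only the query-key scores one full self-attention layer computes, and ignores the per-token work (embeddings, feed-forward layers) that grows linearly. The function names are illustrative, not from any library.

```python
def attention_pairs(n_tokens: int) -> int:
    """Query-key scores in one full self-attention layer: every token vs. every token.

    Decoder-only models apply a causal mask, which roughly halves this count,
    but the growth is still quadratic in n_tokens.
    """
    return n_tokens * n_tokens


def growth_factor(base_tokens: int, new_tokens: int) -> float:
    """How many times more attention scores a longer prompt requires."""
    return attention_pairs(new_tokens) / attention_pairs(base_tokens)


if __name__ == "__main__":
    for n in (1_000, 2_000, 4_000):
        print(f"{n:>5} tokens: {attention_pairs(n):>12,} scores, "
              f"{growth_factor(1_000, n):.0f}x the 1,000-token baseline")
```

Doubling from 1,000 to 2,000 tokens gives 4x the scores, and 4,000 tokens gives 16x. Because real inference also includes linear-cost work, measured latency typically grows somewhere between the linear (2x, 4x) and pure quadratic (4x, 16x) figures.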

Data from PromptLayer’s 2024 benchmarking study illustrates this starkly. When they doubled the prompt size from 1,000 to 2,000 tokens for GPT-4-turbo, processing time increased by 2.3x. Extending it further to 4,000 tokens resulted in a 5.1x latency increase. But speed isn't the only casualty. Accuracy takes a hit too. PromptPanda’s research showed a linear decline in reasoning accuracy: every additional 500 tokens reduced performance by approximately 5 percentage points. At 500 tokens, accuracy was 95%. By 3,000 tokens, it had dropped to 70%.
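The PromptPanda figures describe a simple linear rule, which can be written down directly. This sketch only restates the cited numbers (95% at 500 tokens, minus about 5 points per additional 500 tokens); it is not a validated model, and extrapolating it outside the 500 to 3,000 token range is an assumption.

```python
def estimated_accuracy(prompt_tokens: int,
                       base_tokens: int = 500,
                       base_accuracy: float = 95.0,
                       drop_per_step: float = 5.0,
                       step_tokens: int = 500) -> float:
    """Linear accuracy estimate (in percent) built from the figures cited above.

    Prompts at or below base_tokens are assumed to score base_accuracy;
    the result is floored at 0 so long inputs never go negative.
    """
    extra_tokens = max(0, prompt_tokens - base_tokens)
    estimate = base_accuracy - drop_per_step * (extra_tokens / step_tokens)
    return max(0.0, estimate)


if __name__ == "__main__":
    for n in (500, 1_000, 2_000, 3_000):
        print(f"{n:>5} tokens -> ~{estimated_accuracy(n):.0f}% accuracy")
```

Plugging in 3,000 tokens reproduces the reported 70%: 2,500 extra tokens is five 500-token steps, or 25 points below the 95% baseline.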

- Latency Spike: Longer prompts cause disproportionate increases in response time because attention cost scales quadratically with token count.

- Accuracy Drop: Reasoning capabilities degrade linearly as token count rises beyond optimal thresholds.

- Cost Inflation: Enterprise audits show unnecessary prompt bloat drives up cloud computing expenses significantly.
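Cost inflation follows from the fact that most hosted LLM APIs bill per input token, so every token in a reusable prompt template is paid for on every request. The sketch below estimates monthly input spend; the price of $0.01 per 1,000 tokens is a hypothetical placeholder, not any provider's actual rate, and output tokens, caching, and tiered pricing are ignored.

```python
def monthly_input_cost(prompt_tokens: int,
                       requests_per_month: int,
                       usd_per_1k_tokens: float) -> float:
    """Monthly spend on input tokens for one prompt template (output tokens ignored)."""
    return prompt_tokens / 1000 * usd_per_1k_tokens * requests_per_month


if __name__ == "__main__":
    PRICE = 0.01  # hypothetical USD per 1K input tokens, for illustration only
    bloated = monthly_input_cost(3_000, 1_000_000, PRICE)
    trimmed = monthly_input_cost(1_800, 1_000_000, PRICE)
    print(f"bloated: ${bloated:,.2f}  trimmed: ${trimmed:,.2f}  "
          f"saving: {1 - trimmed / bloated:.0%}")
```

Because input cost is linear in tokens under this model, trimming a 3,000-token template to 1,800 tokens cuts its input spend by 40% whatever price you plug in.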

An Altexsoft case study from 2023 demonstrated that optimizing prompt length didn't just save money; it also improved results. By trimming unnecessary text from customer service chatbot prompts, they reduced cloud costs by 37% while boosting output accuracy by 22%. The lesson is clear: brevity is not just poetic but profitable.

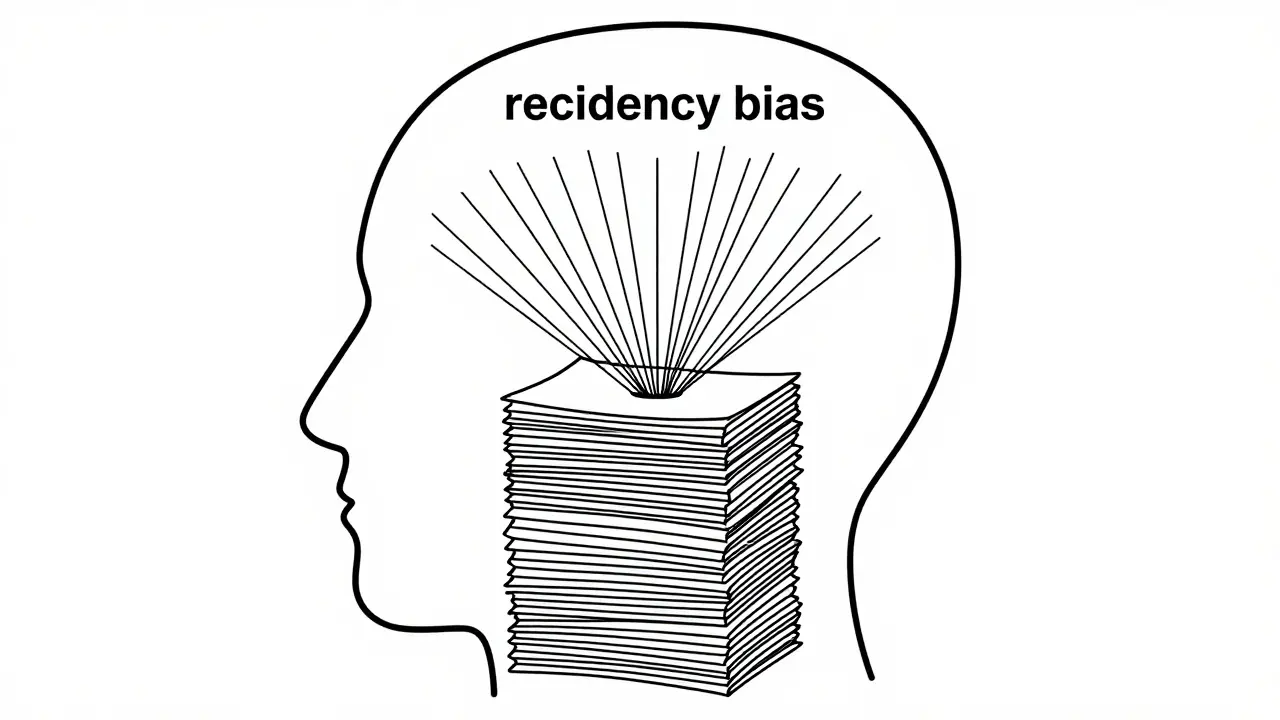

Recency Bias and the Attention Trap

Even if a model could process long texts efficiently, there is another problem: where does it look? Transformers exhibit a phenomenon known as recency bias. They disproportionately weight tokens appearing later in the sequence. In a 10,000-token prompt, critical instructions placed at the beginning might receive only 12-18% of the model's attention allocation, according to PromptLayer testing.

This leads to a frustrating experience for developers. You place crucial constraints at the start of a long document, only to find the model ignoring them because it's focused on the most recent data. A joint study by Microsoft Research and Stanford University in June 2024 quantified the damage: hallucination rates increase by 34% when prompts exceed 2,500 tokens. Additionally, bias amplification rises by 28%, leading to more problematic outputs in lengthy interactions.

To combat this, many developers repeat critical instructions at both the beginning and end of their prompts. While effective, this adds to the token count, creating a vicious cycle. The real solution lies in strategic context management rather than brute-force inclusion of all available data.
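The repeat-at-both-ends pattern is easy to template. In this minimal sketch, the section labels and the optional shorter `reminder` are illustrative choices of ours, not a format any model requires. A condensed reminder is one way to keep the repetition from inflating the token count too much.

```python
from typing import Optional


def sandwich_prompt(instructions: str, context: str,
                    reminder: Optional[str] = None) -> str:
    """Put critical instructions before the context and restate them after it.

    If `reminder` is given, it replaces the full instructions at the end,
    trading some redundancy for fewer extra tokens.
    """
    closing = reminder if reminder is not None else instructions
    return (
        f"Instructions:\n{instructions.strip()}\n\n"
        f"Context:\n{context.strip()}\n\n"
        f"Reminder of the instructions:\n{closing.strip()}\n"
    )


if __name__ == "__main__":
    print(sandwich_prompt(
        "Answer in JSON with keys 'answer' and 'source'. Do not speculate.",
        "…long retrieved document text…",
        reminder="Reply in JSON ('answer', 'source'); no speculation.",
    ))
```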

Model Comparisons: Who Handles Length Best?

Not all models degrade at the same rate. Different architectures handle long contexts with varying degrees of resilience. Independent testing by MLPerf in Q1 2025 highlighted some key differences among top-tier models.

| Model | Accuracy at 2,000 Tokens | Degradation Rate Beyond Threshold | Optimal Sweet Spot |
|---|---|---|---|
| GPT-4-turbo | 82% | High (linear decline) | 800-1,200 tokens |
| Gemini 1.5 Pro | 88% | Moderate | 1,000-1,500 tokens |
| Claude 3 | ~90%* | 2.3% per 100 tokens over limit | ~1,800 tokens |
| Llama 3 70B | ~85%* | Low (only 3% drop from 1k to 2k tokens) | Up to 2,000+ tokens |

*Estimated based on relative performance metrics cited in MLOps Community reports.

Google's Gemini 1.5 Pro maintains higher accuracy at 2,000 tokens than GPT-4-turbo (88% versus 82%). Meanwhile, open-weight models like Meta's Llama 3 70B show less severe degradation, suggesting they may handle longer contexts more effectively than some proprietary counterparts. However, even advanced techniques like Chain-of-Thought (CoT) prompting fail to mitigate degradation beyond critical thresholds: CoT improved reasoning accuracy by 19% at 1,000 tokens but offered only a 6% improvement at 2,500 tokens.

The Goldilocks Principle for Prompt Design

So, what is the right length? The answer follows the Goldilocks principle: not too short, not too long, but just right. The MLOps Community’s Prompt Engineering Guide (Version 3.1, February 2025) provides specific recommendations based on task complexity.

- Simple Classification Tasks: Start with 500-700 tokens. Keep instructions concise and direct.

- Complex Reasoning: Aim for 800-1,200 tokens. Include necessary examples and context, but prune irrelevant details.

- Maximum Limit: Never exceed 2,000 tokens without empirical validation. If you must go longer, test rigorously.
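These guidelines translate naturally into a pre-flight check. The sketch below encodes the ranges listed above; the verdict labels are our own, and the 4-characters-per-token estimate is a rough heuristic for English text, not a real tokenizer. For actual budgeting, count tokens with your model's own tokenizer.

```python
from typing import Tuple

# Target ranges from the guidance above.
TASK_BUDGETS = {
    "classification": (500, 700),
    "reasoning": (800, 1_200),
}
HARD_LIMIT = 2_000  # do not exceed without empirical validation

# Assumption: ~4 characters per token, a rough rule of thumb for English.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Rough token estimate: character count divided by 4, rounded up."""
    return -(-len(text) // CHARS_PER_TOKEN)


def check_prompt(text: str, task: str) -> Tuple[int, str]:
    """Return (estimated tokens, verdict) for a prompt and task type."""
    tokens = estimate_tokens(text)
    low, high = TASK_BUDGETS[task]
    if tokens > HARD_LIMIT:
        verdict = "over_limit"  # past the ceiling: test rigorously before shipping
    elif tokens > high:
        verdict = "over"        # above the target range: candidate for pruning
    elif tokens < low:
        verdict = "under"       # may be missing needed context or examples
    else:
        verdict = "ok"
    return tokens, verdict


if __name__ == "__main__":
    draft = "Classify the sentiment of the review below. " * 60
    print(check_prompt(draft, "classification"))
```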

Dr. Percy Liang, Director of Stanford's Center for Research on Foundation Models, put it bluntly in his October 2024 NeurIPS keynote: "Beyond 2,000 tokens, we're not giving models more context; we're giving them more noise to filter through." Dr. Anna Rohrbach of MIT’s CSAIL added that the attention mechanism's quadratic complexity creates a fundamental bottleneck that parameter scaling cannot overcome.

For specialized tasks like legal contract analysis or medical documentation, longer contexts (32,000+ tokens) can be marginally beneficial, as noted in Nature's April 2025 study. However, these are exceptions, not the rule. For 92% of use cases, shorter is stronger.

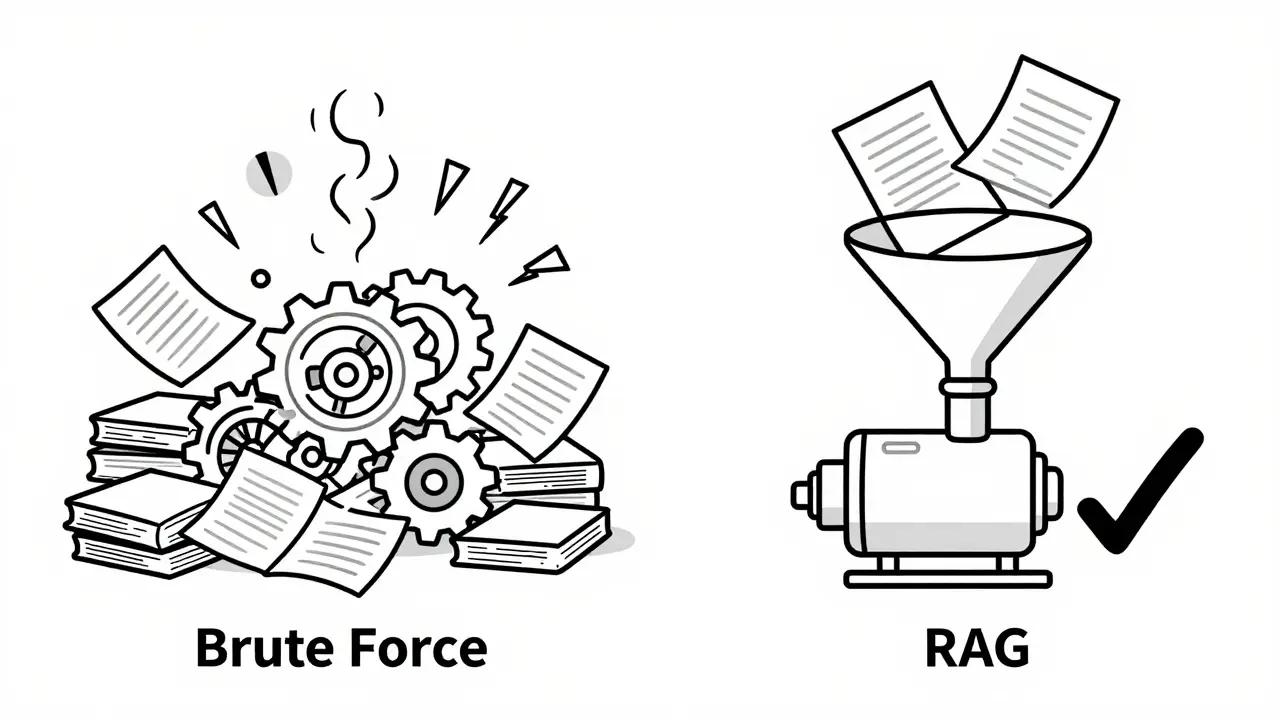

Strategic Alternatives to Brute-Force Context

If you need the model to know a lot, don't stuff it all into one prompt. Instead, use architectural strategies designed for scale. Retrieval-Augmented Generation (RAG) has emerged as the gold standard for handling extensive knowledge bases.

A PromptLayer case study showed that a well-structured 16K-token RAG implementation outperformed a monolithic 128K-token prompt by 31% in accuracy while reducing latency by 68%. RAG works by retrieving only the most relevant snippets of data and feeding them to the model dynamically. This keeps the active context window small and focused.
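The retrieve-then-prompt flow can be sketched in a few lines. Production RAG systems usually rank chunks by embedding similarity against a vector index; to stay self-contained, this sketch swaps in simple keyword overlap as the relevance score and uses the rough 4-characters-per-token estimate. The function names and the prompt wording are hypothetical.

```python
import re
from collections import Counter
from typing import List

CHARS_PER_TOKEN = 4  # rough heuristic, not a real tokenizer


def tokenize(text: str) -> List[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


def overlap_score(query: str, chunk: str) -> int:
    """Shared query terms: a keyword stand-in for embedding similarity."""
    q, c = Counter(tokenize(query)), Counter(tokenize(chunk))
    return sum(min(q[t], c[t]) for t in q)


def retrieve(query: str, chunks: List[str], budget_tokens: int) -> List[str]:
    """Take the highest-scoring chunks that fit within the token budget."""
    ranked = sorted(chunks, key=lambda ch: overlap_score(query, ch), reverse=True)
    selected, used = [], 0
    for ch in ranked:
        if overlap_score(query, ch) == 0:
            break  # sorted descending, so the rest are irrelevant too
        cost = -(-len(ch) // CHARS_PER_TOKEN)
        if used + cost > budget_tokens:
            continue  # too big for what's left; a smaller chunk may still fit
        selected.append(ch)
        used += cost
    return selected


def build_prompt(query: str, chunks: List[str], budget_tokens: int = 1000) -> str:
    context = "\n---\n".join(retrieve(query, chunks, budget_tokens))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


if __name__ == "__main__":
    docs = ["Refunds are issued within 14 days of a return.",
            "Our office is closed on public holidays.",
            "Shipping to Canada takes 5 business days."]
    print(build_prompt("How many days until refunds are issued?", docs))
```

The token budget is what keeps the active context small: however large the knowledge base grows, only the best-matching chunks that fit the budget reach the model.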

Other strategies include:

- Iterative Pruning: Remove sentences or paragraphs that do not directly impact the output. Test after each removal to see if accuracy holds.

- Hybrid Prompting: Combine concise core instructions with targeted context retrieval. Use external APIs to fetch data only when needed.

- Repetition of Critical Instructions: Place key constraints at both the start and end of the prompt to mitigate recency bias.
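Iterative pruning can be automated as a greedy loop. In this sketch, `evaluate` is a hypothetical callback you supply, for example one that runs the candidate prompt against a fixed evaluation set and returns accuracy. Each segment is tentatively removed, and the removal is kept only if the score stays within `tolerance` of the original baseline.

```python
from typing import Callable, List


def prune_prompt(segments: List[str],
                 evaluate: Callable[[List[str]], float],
                 tolerance: float = 0.0) -> List[str]:
    """Greedy iterative pruning of prompt segments (sentences or paragraphs).

    The baseline is fixed at the unpruned score, so accumulated losses can
    never exceed `tolerance`, no matter how many segments are removed.
    """
    kept = list(segments)
    baseline = evaluate(kept)
    i = 0
    while i < len(kept):
        candidate = kept[:i] + kept[i + 1:]
        if evaluate(candidate) >= baseline - tolerance:
            kept = candidate  # removal didn't hurt: drop it, re-test this index
        else:
            i += 1            # segment matters: keep it and move on
    return kept


if __name__ == "__main__":
    required = {"Answer in JSON.", "Use the customer's name."}
    # Toy evaluator for illustration: fraction of required instructions present.
    toy_eval = lambda segs: sum(s in required for s in segs) / len(required)
    prompt = ["Answer in JSON.", "Our company was founded in 1998.",
              "Use the customer's name.", "Be nice."]
    print(prune_prompt(prompt, toy_eval))
```

Each call to `evaluate` costs a full test run, so for long prompts it usually pays to prune at paragraph granularity first and only then at sentence level.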

Tools like PromptLayer’s 'PromptOptimizer' feature, launched in January 2025, automate this process. Their data shows that 83% of users achieved optimal results within just 2-3 iterations using automated length testing.

Industry Trends and Future Outlook

The market for prompt optimization is booming. Gartner valued it at $287 million in 2024, growing at 63% annually. By 2028, IDC projects the total addressable market will reach $1.2 billion. This growth reflects a broader shift in how enterprises view LLM deployment. It is no longer enough to simply plug in an API; you must engineer the interaction.

Regulatory pressures are also mounting. The EU AI Act’s December 2024 draft requires 'proportionate context provision' in high-risk applications to prevent prompt-induced hallucinations. This means companies will need to justify why they are sending large amounts of data to models, pushing them toward more efficient designs.

Technological advancements are addressing these issues directly. Google released 'Adaptive Context Window' technology in January 2025, which dynamically adjusts attention focus within long prompts. Anthropic announced that Claude 3.5 would incorporate 'context relevance scoring' to automatically filter low-value tokens. Meta AI’s March 2025 paper demonstrated hierarchical attention mechanisms that improved performance on 4,000-token prompts by 29%.

By 2027, Gartner predicts that 90% of enterprise LLM implementations will use automated context optimization rather than fixed-length prompts. The era of 'dump and pray' prompting is ending. The future belongs to intelligent, streamlined, and strategically managed context windows.

What is the optimal prompt length for most LLM tasks?

For simple classification tasks, aim for 500-700 tokens. For complex reasoning, 800-1,200 tokens is ideal. Generally, you should avoid exceeding 2,000 tokens unless you have specific empirical evidence that longer contexts improve your particular use case.

Why does increasing prompt length reduce accuracy?

Longer prompts increase computational complexity quadratically due to attention mechanisms. This leads to 'attention dilution,' where the model struggles to focus on critical information. Additionally, recency bias causes the model to prioritize later tokens, often ignoring important instructions placed earlier in the text.

How can I handle large documents without hitting length limits?

Use Retrieval-Augmented Generation (RAG). Instead of feeding the entire document, retrieve only the most relevant snippets and send those to the model. This keeps the context window small and focused, improving both speed and accuracy.

Does Chain-of-Thought prompting help with long prompts?

Chain-of-Thought (CoT) improves reasoning accuracy at moderate lengths (around 1,000 tokens, where it added 19%), but its benefits diminish sharply as prompt length increases. At 2,500 tokens, CoT adds only about 6%.

Which LLM handles long contexts best?

Gemini 1.5 Pro and Llama 3 70B show better retention of accuracy at longer lengths compared to GPT-4-turbo. However, even these models experience degradation beyond 2,000-3,000 tokens. No model is immune to the effects of excessive context length.

Artificial Intelligence
