AI coding assistants have changed how we build software. You type a prompt, and the model generates code instantly. This practice, often called vibe coding, uses conversational AI prompts to generate software code rapidly, and it speeds up development significantly. But it introduces new risks. AI models can hallucinate dependencies, insert insecure patterns, or accidentally remove critical security checks. If you merge this code without proper safeguards, your production environment becomes vulnerable.

Branch protection acts as an automated gatekeeper. It prevents vulnerable code from reaching your main branch by enforcing scanning and validation rules at the pull request level. In 2025 and 2026, industry data showed that catching vulnerabilities before they merge is far cheaper than fixing them in production. For vibe-coded repositories, robust branch protection isn't just a best practice; it's essential.

Why Vibe Coding Needs Stronger Security Gates

When developers rely on AI to write code, the speed of iteration increases dramatically. However, AI models don't always understand context or security implications. They might suggest a library that doesn't exist, a phenomenon known as package hallucination. Attackers sometimes register real packages under these hallucinated names and use them to deliver backdoors, a variant of typosquatting sometimes called slopsquatting.

Consider this scenario: An AI assistant suggests installing a package named auth-utils-pro. The developer accepts the suggestion, runs the install command, and merges the change. Later, security teams discover the package contains malicious code designed to steal credentials. Because the code passed traditional review processes quickly, the vulnerability went undetected until it reached production.

Branch protection addresses this risk by enforcing strict dependency verification. Tools like Snyk, a platform for finding and fixing vulnerabilities in open source dependencies, scan every pull request for known compromised packages. Combined with cooldown policies that block newly published npm versions, organizations can prevent supply chain attacks before they spread.
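To make the cooldown idea concrete, here is a minimal TypeScript sketch of the check a CI step might run. The `DependencyChange` shape, the function name, and the dates are hypothetical and chosen for illustration. The sketch assumes the publish timestamp has already been fetched from the registry.

```typescript
// Hypothetical shape: one entry per dependency added or bumped in a pull request.
interface DependencyChange {
  packageName: string;
  version: string;
  publishedAt: string; // ISO 8601 publish time, assumed to come from the registry
}

const MS_PER_DAY = 24 * 60 * 60 * 1000;

// Returns the changes whose version was published inside the cooldown window.
function findCooldownViolations(
  changes: DependencyChange[],
  cooldownDays: number,
  now: Date
): DependencyChange[] {
  const cutoff = now.getTime() - cooldownDays * MS_PER_DAY;
  return changes.filter((change) => {
    const published = Date.parse(change.publishedAt);
    // Treat unparseable timestamps as violations so the gate fails closed.
    return isNaN(published) || published > cutoff;
  });
}

// Illustrative data only; these publish dates are made up.
const exampleChanges: DependencyChange[] = [
  { packageName: "stable-lib", version: "2.3.1", publishedAt: "2025-06-01T00:00:00Z" },
  { packageName: "auth-utils-pro", version: "1.0.0", publishedAt: "2026-01-14T00:00:00Z" },
];
const flaggedChanges = findCooldownViolations(
  exampleChanges,
  7,
  new Date("2026-01-15T00:00:00Z")
);
console.log(flaggedChanges.map((change) => change.packageName));
```

Failing closed on a missing or malformed timestamp is a deliberate choice in this sketch: a package whose age cannot be established is treated the same as one that is too new.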

Core Scanning Categories for Branch Protection

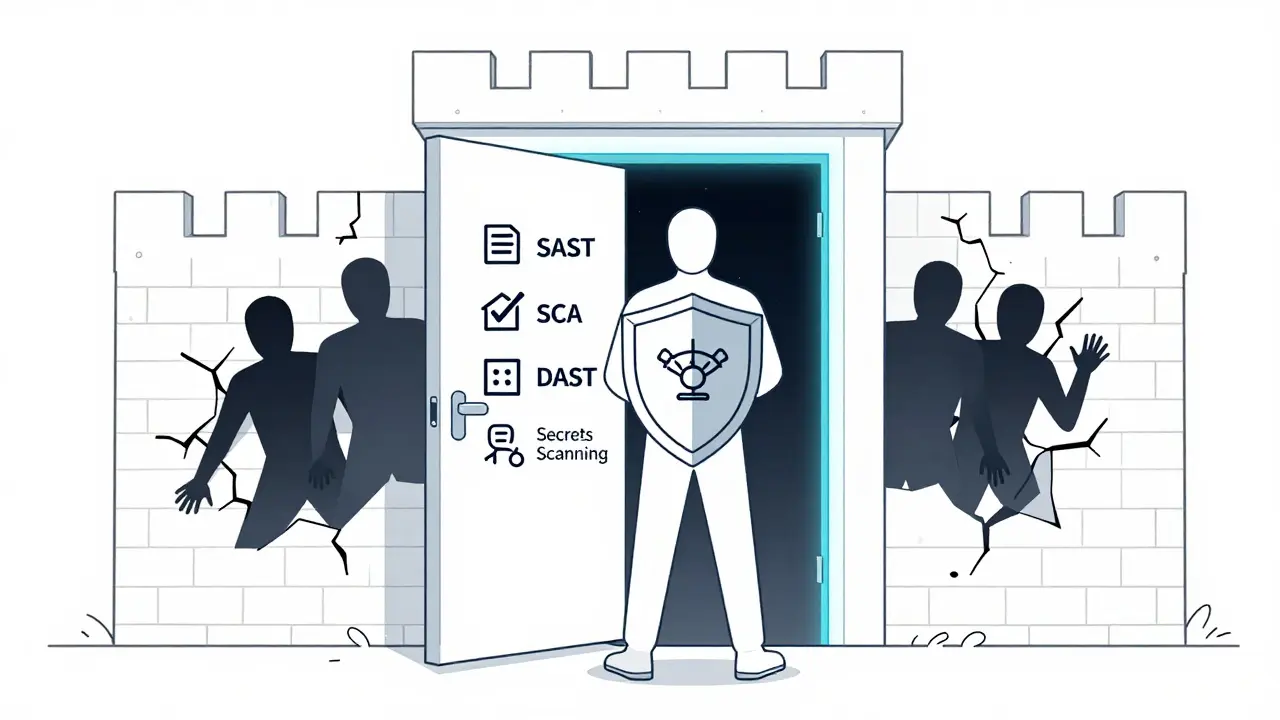

Effective branch protection requires multiple layers of scanning. Each layer targets specific vulnerability types common in AI-generated code. Here are the four primary categories:

- SAST (Static Application Security Testing): Catches SQL injection, cross-site scripting (XSS), path traversal, and insecure cryptography. Tools like Semgrep, CodeQL, and Snyk Code run pre-commit and in CI pipelines.

- SCA (Software Composition Analysis): Identifies vulnerable dependencies and license issues. Platforms such as Snyk, Trivy, and npm audit check every pull request against known vulnerability databases.

- DAST (Dynamic Application Security Testing): Finds runtime vulnerabilities like authentication bypasses and CORS misconfigurations during staging deployment. OWASP ZAP and Burp Suite perform these tests automatically.

- Secrets Scanning: Detects API keys, passwords, and tokens committed to code. Gitleaks and GitGuardian operate at both pre-commit and CI stages to block accidental secret exposure.

| Category | Vulnerability Types | Common Tools | Execution Stage |
|---|---|---|---|
| SAST | SQL Injection, XSS, Path Traversal | Semgrep, CodeQL, Snyk Code | Pre-commit & CI |
| SCA | Vulnerable Dependencies, License Issues | Snyk, Trivy, npm audit | CI Pull Requests |
| DAST | Auth Bypass, CORS Misconfiguration | OWASP ZAP, Burp Suite | Staging Deployment |
| Secrets Scanning | API Keys, Passwords, Tokens | Gitleaks, GitGuardian | Pre-commit & CI |
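As a rough illustration of what the secrets scanning layer does, the sketch below matches each line of a file against a small set of patterns. It is not how Gitleaks or GitGuardian are implemented, and the two rules and all names are assumptions made for the example. Real scanners ship far larger rule sets plus entropy checks.

```typescript
interface SecretFinding {
  rule: string;
  line: number; // 1-based line number in the scanned text
}

// Two illustrative patterns only (assumed for this sketch).
const SECRET_RULES: { rule: string; pattern: RegExp }[] = [
  { rule: "aws-access-key-id", pattern: /\bAKIA[0-9A-Z]{16}\b/ },
  { rule: "private-key-header", pattern: /-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----/ },
];

// Scans text line by line and reports which rule matched on which line.
function scanForSecrets(source: string): SecretFinding[] {
  const findings: SecretFinding[] = [];
  source.split("\n").forEach((text, index) => {
    for (const { rule, pattern } of SECRET_RULES) {
      if (pattern.test(text)) {
        findings.push({ rule, line: index + 1 });
      }
    }
  });
  return findings;
}
```

A CI step built on this idea would fail the check whenever `scanForSecrets` returns a non-empty list.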

Implementing Automated Gatekeepers

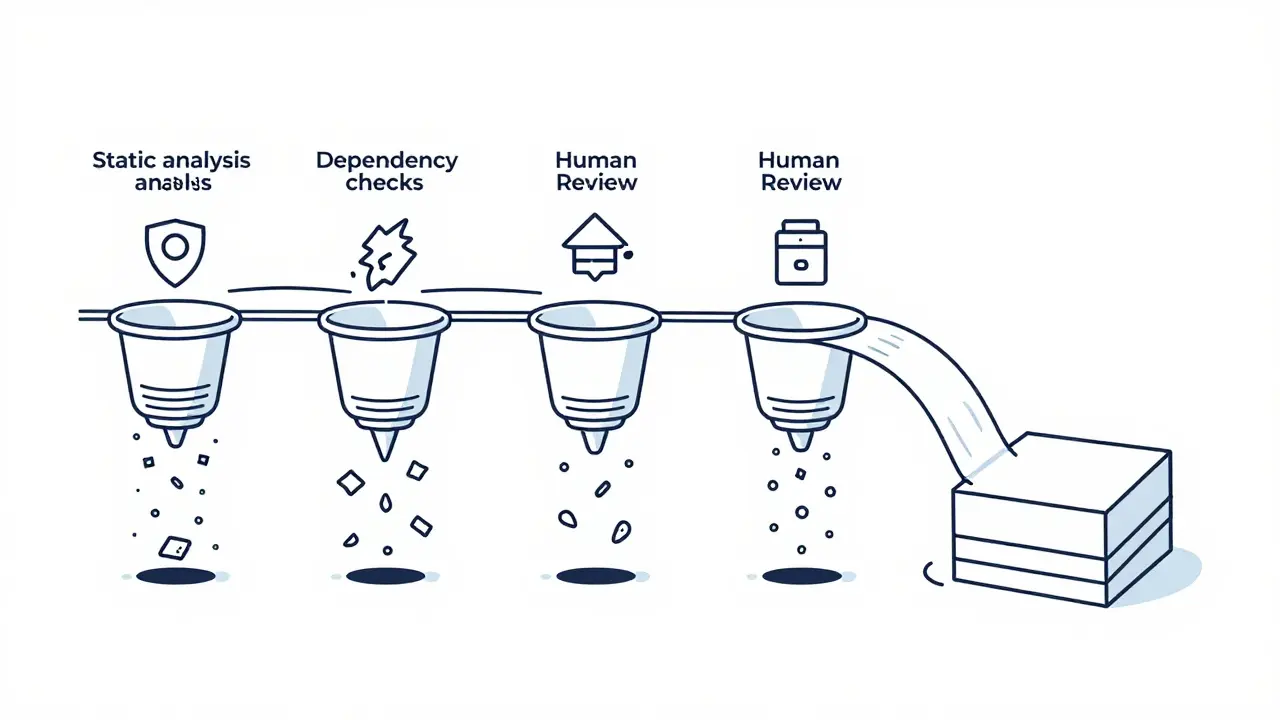

You need concrete implementation steps to enforce branch protection effectively. GitHub Actions provides a flexible framework for integrating multiple scanning tools into your CI/CD pipeline. Here’s a practical approach based on 2026 guides:

- Configure Secrets Detection: Use Gitleaks to scan commits for exposed credentials. Block merges if any secrets are found.

- Add Static Analysis: Integrate Semgrep with configurations targeting OWASP Top Ten, Node.js, and TypeScript security rules. Ensure scans fail on high-severity findings.

- Enable Dependency Scanning: Set up Snyk to analyze all dependencies in each pull request. Configure alerts for known vulnerabilities and license compliance issues.

- Enforce Cooldown Policies: Implement rules that reject newly published npm packages within a configurable time window. This protects against fresh supply chain attacks.

- Require Human Review: Mandate at least one approved review before merging, ensuring human oversight complements automated checks.

This multi-stage process creates pass/fail gates that determine whether code can proceed. When a scan detects a vulnerability, the developer must remediate the issue before gaining merge authorization. This balance between automation and human judgment maintains velocity while reducing risk.
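The pass/fail decision described above can be sketched as one function that combines scanner output with the review count. The `ScanResult` shape and the function name are hypothetical. The sketch also assumes every scanner's findings have been normalized to a shared severity scale, with detected secrets reported as high severity.

```typescript
type Severity = "low" | "medium" | "high" | "critical";

interface ScanResult {
  scanner: string; // e.g. "gitleaks", "semgrep", "snyk"
  findings: { id: string; severity: Severity }[];
}

interface GateDecision {
  allowed: boolean;
  reasons: string[];
}

// Blocks the merge on any high or critical finding, or when no review has approved.
function evaluateMergeGate(results: ScanResult[], approvals: number): GateDecision {
  const reasons: string[] = [];
  for (const result of results) {
    const blocking = result.findings.filter(
      (finding) => finding.severity === "high" || finding.severity === "critical"
    );
    if (blocking.length > 0) {
      reasons.push(`${result.scanner}: ${blocking.length} blocking finding(s)`);
    }
  }
  if (approvals < 1) {
    reasons.push("at least one approving review is required");
  }
  return { allowed: reasons.length === 0, reasons };
}
```

Returning the list of reasons rather than a bare boolean lets the pipeline tell the developer exactly what must be remediated before the merge is authorized.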

Addressing AI-Specific Vulnerabilities

AI-generated code has unique failure modes that require targeted protections. One major concern is the "hallucinated bypass" risk, where the AI accidentally removes a security check or deletes a keyword such as auth. To counter this, implement infrastructure-level isolation using tools like NGINX or Cloudflare Zero Trust. These systems gate entry points to applications and enforce security even if the underlying code is broken.
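Infrastructure isolation is the backstop, but a pull request check can also surface removed security code for a reviewer. The sketch below is a deliberately naive heuristic with an assumed keyword list. It only inspects removed lines in a unified diff and will produce false positives (for example, "author" contains "auth"), so it suits flagging changes for human review better than hard blocking.

```typescript
// Illustrative keywords (assumed); tune these to the project's own auth helpers.
const SECURITY_KEYWORDS = ["auth", "csrf", "verifytoken", "requirelogin"];

// Returns the text of removed diff lines that mention a security keyword.
function findRemovedSecurityLines(unifiedDiff: string): string[] {
  return unifiedDiff
    .split("\n")
    // Removed lines start with "-"; lines starting with "---" are old-file headers.
    .filter((line) => line.charAt(0) === "-" && line.indexOf("---") !== 0)
    .map((line) => line.slice(1).trim())
    .filter((text) => {
      const lowered = text.toLowerCase();
      return SECURITY_KEYWORDS.some((keyword) => lowered.indexOf(keyword) !== -1);
    });
}
```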

Another critical area is database configuration. AI models frequently generate overly permissive defaults. Branch protection should verify Row Level Security (RLS) enforcement on every table, ensuring users can only read and write their own data. Never expose service role keys to clients. Additionally, mandate parameterized queries and prepared statements for all database access: any change that concatenates user input into SQL statements must be rejected immediately.
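The difference between the two query styles is easiest to see side by side. In this sketch the table, column, and function names are hypothetical, and the `$1` placeholder follows PostgreSQL conventions. It assumes a driver that accepts the SQL text and the bound values separately, as node-postgres does.

```typescript
interface ParameterizedQuery {
  text: string;
  values: string[];
}

// Unsafe: user input becomes part of the SQL text itself.
function buildUnsafeQuery(email: string): string {
  return "SELECT id, email FROM users WHERE email = '" + email + "'";
}

// Safer: the SQL text is constant and the input travels separately as a bound value.
function buildUserLookup(email: string): ParameterizedQuery {
  return { text: "SELECT id, email FROM users WHERE email = $1", values: [email] };
}

const hostileEmail = "x' OR '1'='1";
console.log(buildUnsafeQuery(hostileEmail)); // the injected condition is now part of the SQL
console.log(buildUserLookup(hostileEmail).text); // the SQL text is unchanged by the input
```

A review gate would reject the first pattern and accept the second, because in the second the database never parses user input as SQL.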

Security headers represent another gap. AI rarely adds them automatically. Your branch protection rules should verify implementations like helmet.js for Express applications or manual header settings including:

- X-Content-Type-Options: nosniff

- X-Frame-Options: DENY

- Strict-Transport-Security with max-age of 31536000 seconds

- Content-Security-Policy set to default-src 'self'

- Referrer-Policy set to strict-origin-when-cross-origin
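One way to enforce the header list in CI is to request a page from a staging deployment and compare the response headers against the expected values. The sketch below covers only the comparison step, with hypothetical names, and it assumes the response headers have already been collected into a plain object. Values are matched as prefixes so that a Strict-Transport-Security header carrying extra directives still passes.

```typescript
const REQUIRED_HEADERS: { [header: string]: string } = {
  "x-content-type-options": "nosniff",
  "x-frame-options": "DENY",
  "strict-transport-security": "max-age=31536000",
  "content-security-policy": "default-src 'self'",
  "referrer-policy": "strict-origin-when-cross-origin",
};

// Returns the required headers that are missing or do not start with the expected value.
function findHeaderProblems(responseHeaders: { [header: string]: string }): string[] {
  // Header names are case-insensitive, so normalize them before comparing.
  const normalized: { [header: string]: string } = {};
  for (const key of Object.keys(responseHeaders)) {
    normalized[key.toLowerCase()] = responseHeaders[key];
  }
  return Object.keys(REQUIRED_HEADERS).filter((header) => {
    const actual = normalized[header];
    return actual === undefined || actual.indexOf(REQUIRED_HEADERS[header]) !== 0;
  });
}
```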

Managing Permissions and Access Controls

Narrowly scoped permissions are vital for securing AI-assisted workflows. Each service should receive a distinct identity with minimal necessary privileges. Avoid wildcard policies like *:* or AdministratorAccess, which AI assistants tend to request by default. Instead, define explicit IAM roles tailored to specific operations.
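A wildcard check of this kind is straightforward to automate. The sketch below assumes an AWS-style policy document whose `Statement` field is an array (AWS also permits a single object there, which this sketch does not handle). The function names are hypothetical.

```typescript
interface PolicyStatement {
  Effect: "Allow" | "Deny";
  Action: string | string[];
  Resource: string | string[];
}

interface PolicyDocument {
  Version: string;
  Statement: PolicyStatement[];
}

// Action may be a single string or a list; normalize to a list.
function toActionList(value: string | string[]): string[] {
  return typeof value === "string" ? [value] : value;
}

// Flags Allow statements that grant every action ("*" or "*:*").
function findWildcardStatements(policy: PolicyDocument): PolicyStatement[] {
  return policy.Statement.filter(
    (statement) =>
      statement.Effect === "Allow" &&
      toActionList(statement.Action).some((action) => action === "*" || action === "*:*")
  );
}
```

A stricter variant could also flag service-wide grants such as `s3:*`. Whether that is appropriate depends on how narrowly the team wants roles scoped.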

Application-level controls must also be enforced via branch protection. Verify that default user roles receive the minimum required permissions, with clear escalation paths. Scope API keys to particular functions rather than granting full access. Direct file uploads to isolated storage, with size limits and type validation. Restrict network access to the endpoints a service actually needs instead of permitting unrestricted internet connectivity.
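For the upload rule, reviewers can look for a validation step along these lines in any code path that accepts files. This is a minimal sketch. The 5 MiB limit, the allowed types, and all names are assumptions, and a production check would also inspect file contents rather than trust the declared MIME type.

```typescript
interface UploadCandidate {
  fileName: string;
  mimeType: string;
  sizeBytes: number;
}

// Illustrative limits (assumed); pick values that match the application.
const MAX_UPLOAD_BYTES = 5 * 1024 * 1024;
const ALLOWED_MIME_TYPES = ["image/png", "image/jpeg", "application/pdf"];

// Returns a list of validation errors; an empty list means the upload may proceed.
function validateUpload(file: UploadCandidate): string[] {
  const errors: string[] = [];
  if (file.sizeBytes <= 0 || file.sizeBytes > MAX_UPLOAD_BYTES) {
    errors.push("size out of range");
  }
  if (ALLOWED_MIME_TYPES.indexOf(file.mimeType) === -1) {
    errors.push("type not allowed");
  }
  return errors;
}
```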

Egress policy enforcement plays a crucial role here. Block unauthorized outbound traffic at the DNS, HTTPS, and network layers. This prevents exfiltration of CI/CD credentials and source code, which matters especially when AI agents like Claude Code operate within GitHub Actions without built-in firewalls. Process attribution tracing ties every network call to the exact process (by PID) that made it, providing essential context for incident investigation.
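At its core, an egress policy is an allowlist decision made for each outbound connection. The sketch below shows only that decision for hostnames. The allowlist entries and function name are hypothetical, and actual enforcement happens in the runner's network layer, not in application code.

```typescript
// Hypothetical allowlist for a CI job; real entries depend on the pipeline.
const ALLOWED_EGRESS_HOSTS = ["github.com", "registry.npmjs.org"];

// Allows exact matches and subdomains of an allowlisted host, and nothing else.
function isEgressAllowed(hostname: string): boolean {
  // Lowercase and drop a trailing dot so "GitHub.com." compares equal to "github.com".
  const host = hostname.toLowerCase().replace(/\.$/, "");
  return ALLOWED_EGRESS_HOSTS.some((allowed) => {
    const suffix = "." + allowed;
    return host === allowed || host.slice(-suffix.length) === suffix;
  });
}
```

Matching on a leading-dot suffix rather than a plain substring matters here: it keeps lookalike hosts such as `github.com.evil.example` or `evilgithub.com` from passing.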

Building Guardrails for AI Assistants

Creating behavioral guidelines for AI assistants enhances overall security posture. Establish built-in guardrails with policies and prompt rules that set clear expectations in development environments. Feature branch workflows should require developers to run tests, lint checks, security scans, license scans, and update Software Bills of Materials before completing branches.

A comprehensive security checklist framework identifies seventeen critical areas needing branch protection enforcement:

- Authentication mechanisms

- Middleware protection strategies

- Role-based access control implementation

- Sensitive data handling procedures

- Error management protocols

- Input validation techniques

- Database security measures

- Hosting environment configurations

- Secure communication standards

- Logging and monitoring setups

- Security testing and audit routines

- Backup and disaster recovery plans

- Dependency management practices

- Rate limiting and anti-abuse controls

- Data privacy compliance requirements

- Incident response and awareness training

- Infrastructure as Code security

These elements form a defense-in-depth architecture preventing AI-generated vulnerabilities from reaching production systems. By combining automated scanning tools, policy enforcement, and human review at the branch level, organizations achieve robust protection against emerging threats.

What is vibe coding?

Vibe coding refers to the practice of using AI coding assistants to generate software code through conversational prompts. Developers describe desired functionality, and the AI produces corresponding code snippets rapidly.

Why does vibe coding pose security risks?

AI models may introduce vulnerabilities such as hallucinated dependencies, typosquatting attacks, insecure patterns, or accidental removal of security checks. Without proper safeguards, these issues can reach production environments unnoticed.

How do I configure branch protection for AI-generated code?

Integrate multiple scanning tools into your CI/CD pipeline. Use Gitleaks for secrets detection, Semgrep for static analysis, Snyk for dependency scanning, and enforce cooldown policies for new packages. Require human reviews alongside automated checks.

What is package hallucination?

Package hallucination occurs when AI suggests libraries that don’t exist. Attackers exploit this by registering real packages under the hallucinated names or close variants, potentially introducing malicious code through typosquatting attacks.

Can branch protection stop supply chain attacks?

It can stop many of them. Branch protection combined with cooldown policies temporarily blocks newly published package versions. The delay gives the community time to detect and report malicious releases before your pipeline installs them, which helps protect organizations from incidents like the Shai-Hulud campaigns.
