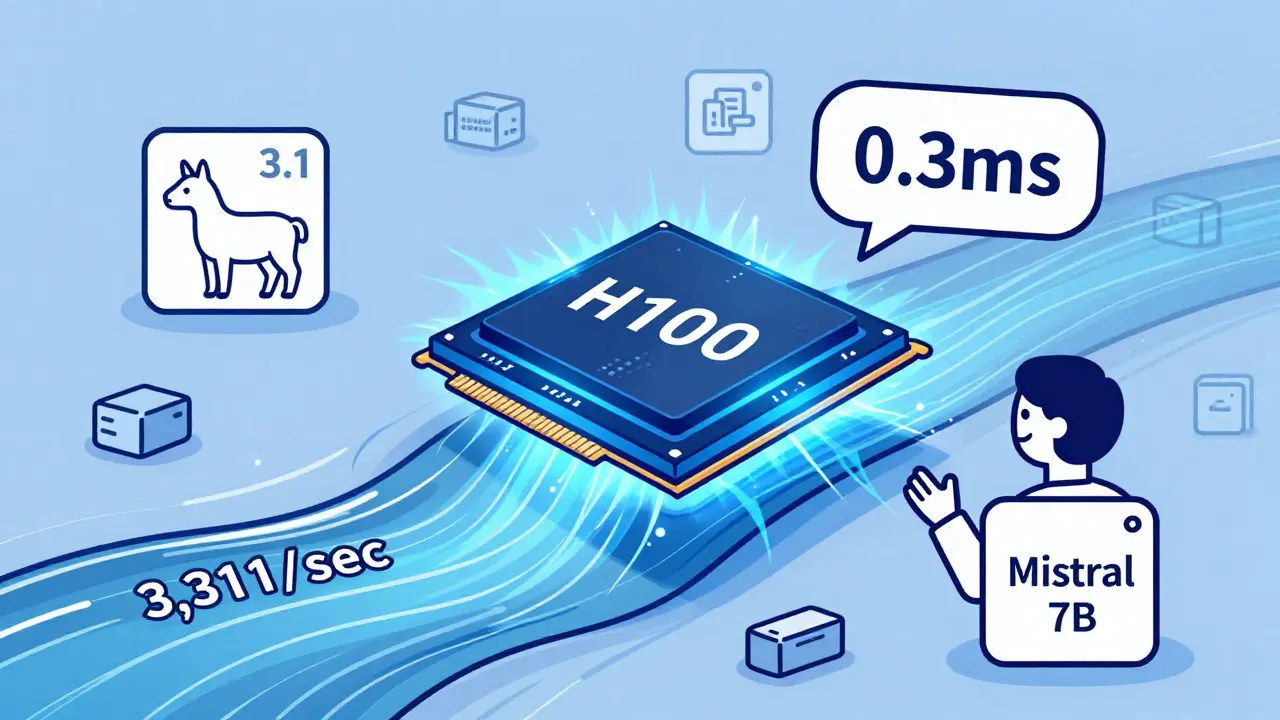

Tag: A100 GPU

Learn how to choose between NVIDIA A100, H100, and CPU offloading for LLM inference in 2025. See real performance numbers, cost trade-offs, and which option actually works for production.

Recent posts

Template Repos with Pre-Approved Dependencies for Vibe Coding: Setup, Best Picks, and Real Risks

Feb 20, 2026

Artificial Intelligence