Tag: batch size

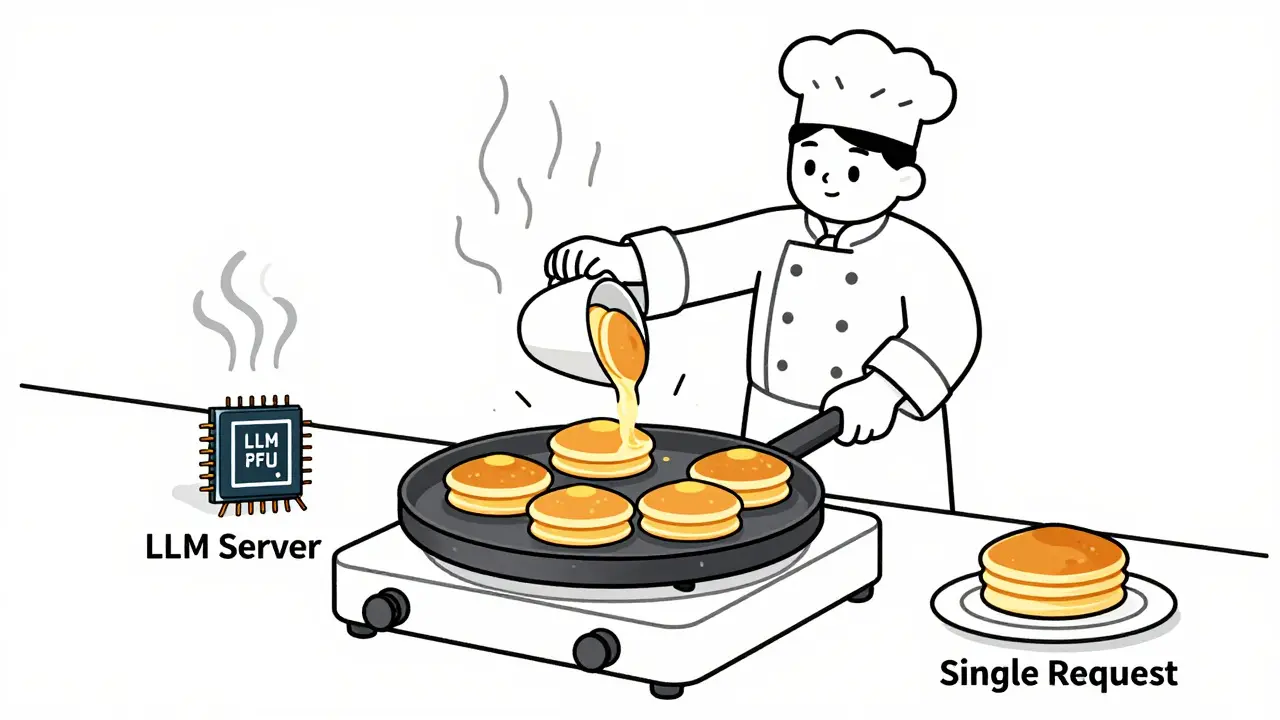

Learn how to choose optimal batch sizes for LLM serving to cut cost per token by up to 87%. Discover real-world results, batching types, hardware trade-offs, and proven techniques to reduce AI infrastructure costs.

Recent posts

Marketing Content at Scale with Generative AI: Product Descriptions, Emails, and Social Posts

Jun 29, 2025

Domain-Specialized Generative AI Models: Why Vertical Expertise Beats General Purpose AI

Mar 9, 2026

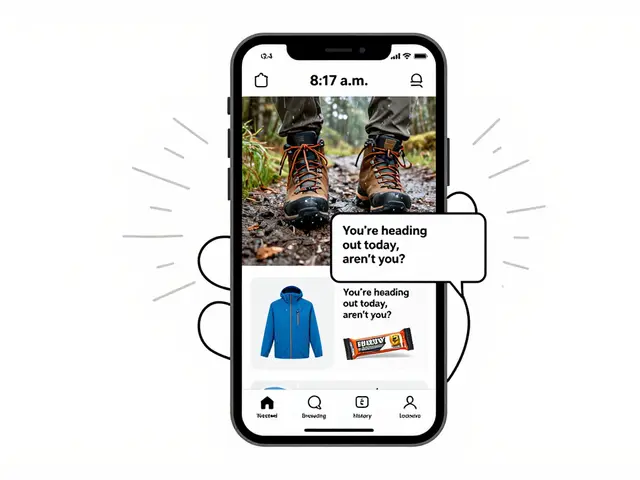

Customer Journey Personalization Using Generative AI: Real-Time Segmentation and Content

Mar 17, 2026

Artificial Intelligence
