Tag: batch size

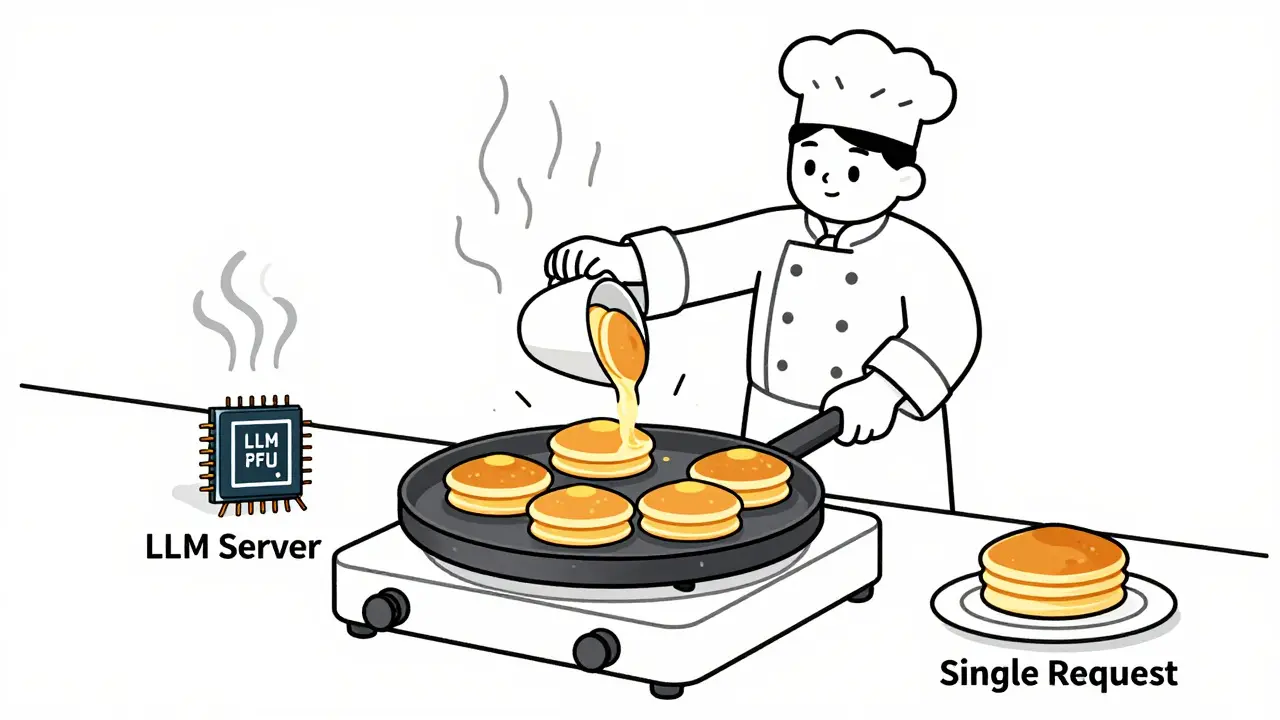

Learn how to choose optimal batch sizes for LLM serving to cut cost per token by up to 87%. Discover real-world results, batching types, hardware trade-offs, and proven techniques to reduce AI infrastructure costs.

Artificial Intelligence
