Tag: chargeback models

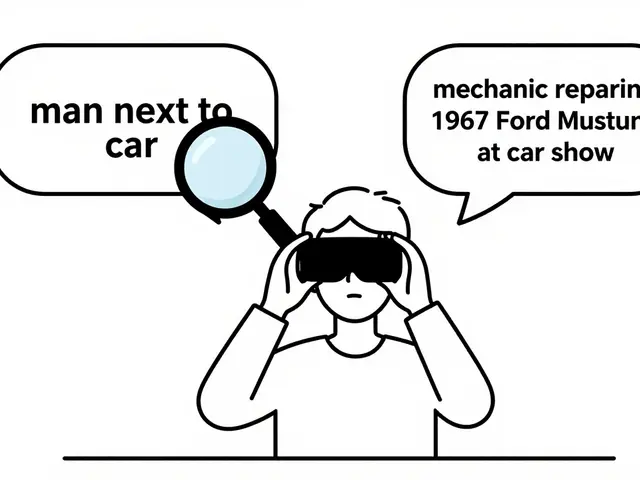

Learn how to accurately allocate LLM costs across teams using dynamic chargeback models that track token usage, RAG queries, and AI agent loops. Stop guessing. Start optimizing.

Recent posts

Marketing Content at Scale with Generative AI: Product Descriptions, Emails, and Social Posts

Jun 29, 2025

Artificial Intelligence
