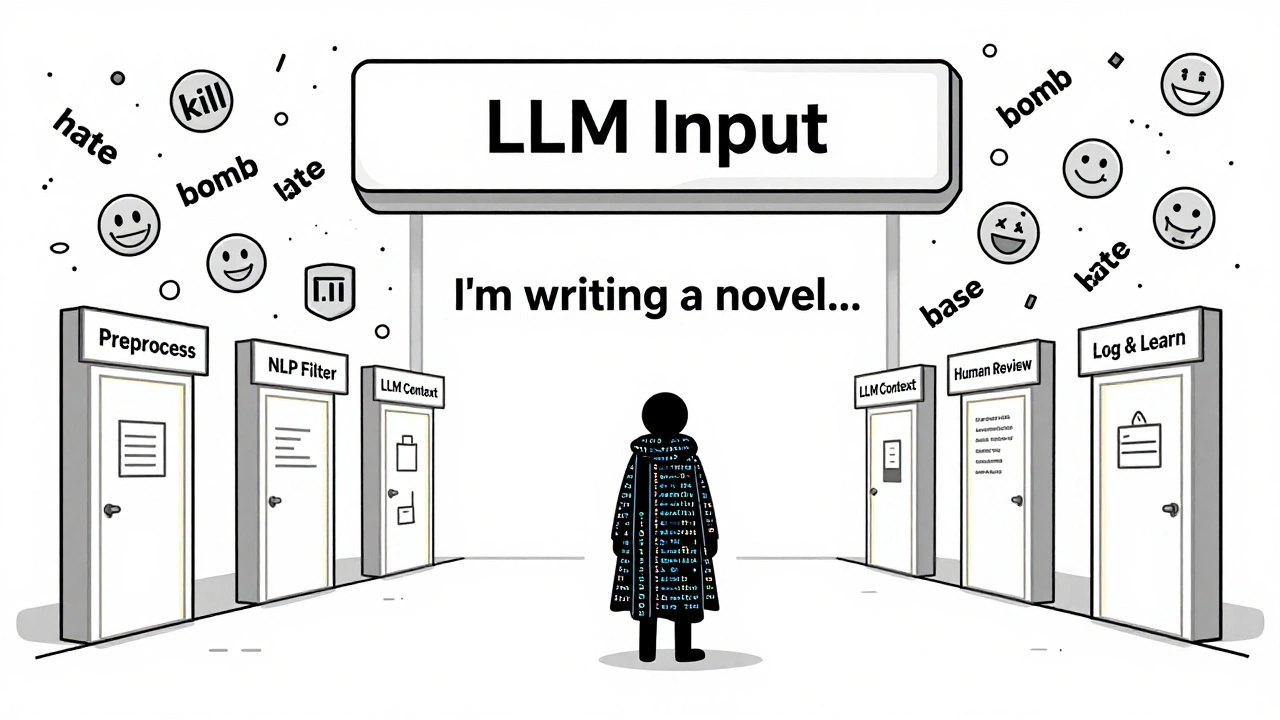

Tag: content safety for LLMs

Learn how modern content moderation pipelines use AI and human review to block harmful user inputs to LLMs. Discover the best practices, costs, and real-world systems keeping AI safe.

Recent posts

Marketing Content at Scale with Generative AI: Product Descriptions, Emails, and Social Posts

Jun 29, 2025

Artificial Intelligence
