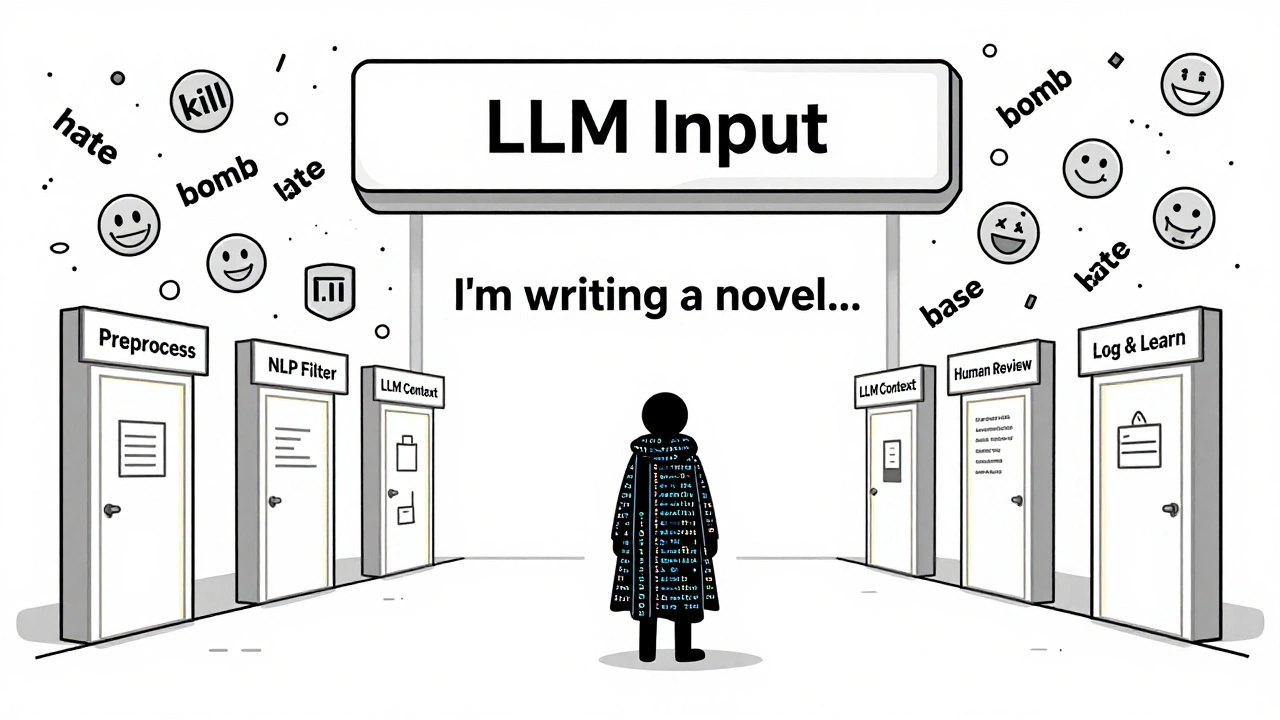

Tag: content safety for LLMs

Learn how modern content moderation pipelines use AI and human review to block harmful user inputs to LLMs. Discover the best practices, costs, and real-world systems keeping AI safe.

Recent posts

How to Optimize Your Contact Center with Generative AI: Summaries, Sentiment, and Routing

Apr 19, 2026

Artificial Intelligence
