Tag: fine-tuning LLMs

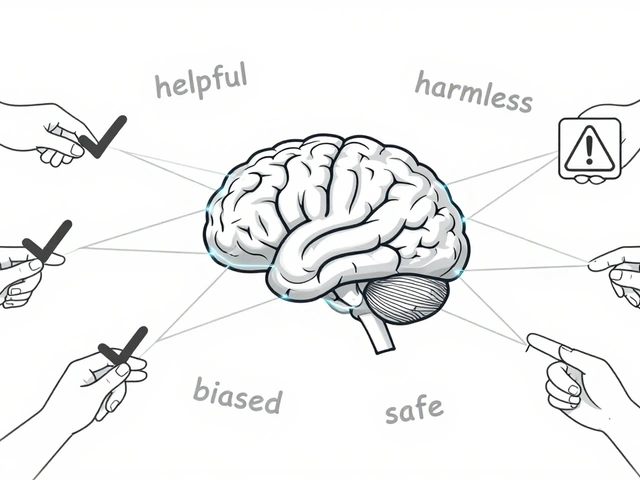

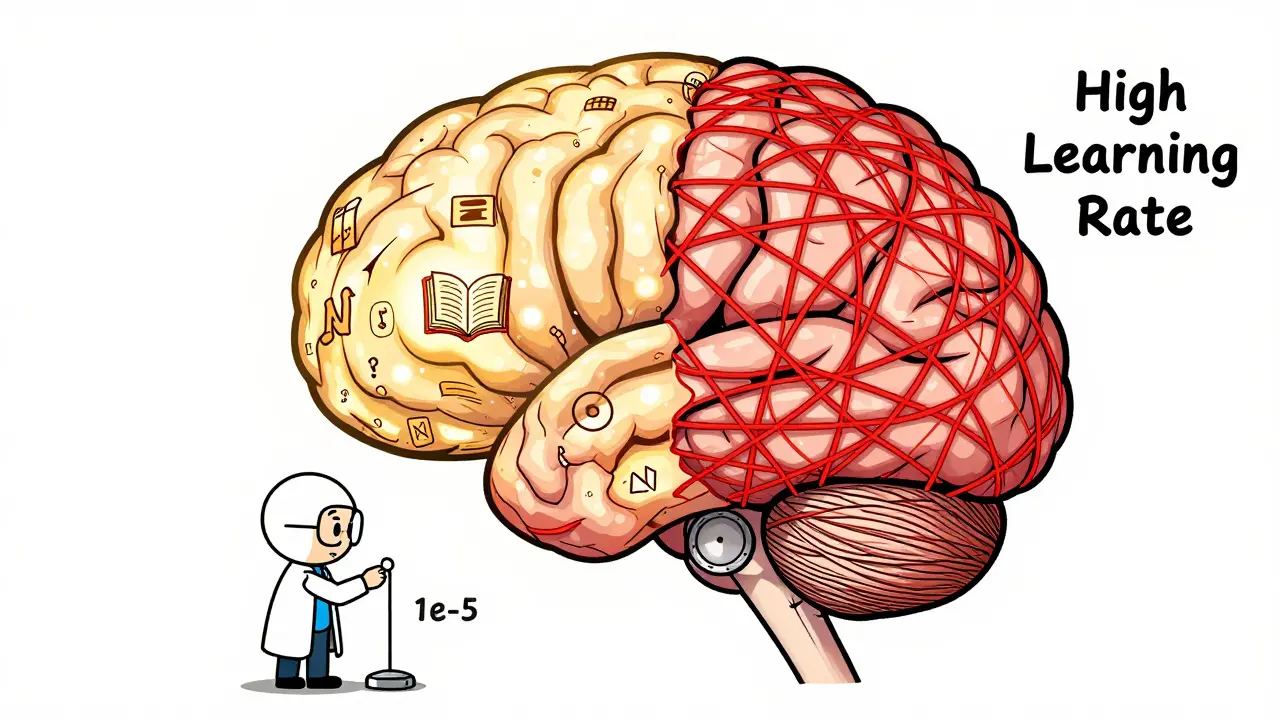

Learn how to fine-tune large language models without losing their original knowledge. Discover the best hyperparameters, methods like LoRA and FAPM, and real-world trade-offs that keep models accurate and reliable.

Recent posts

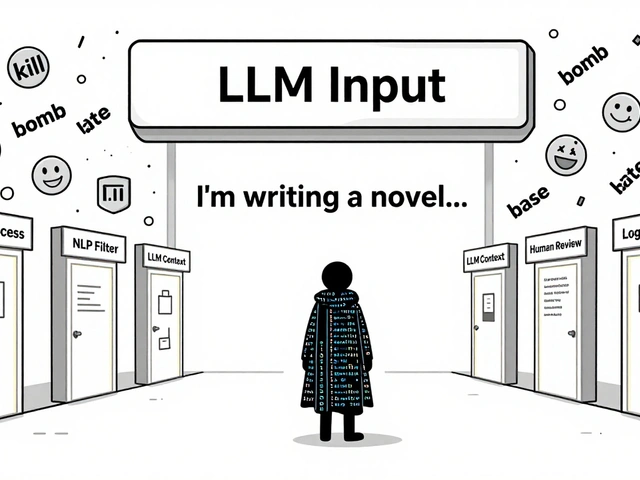

Content Moderation Pipelines for User-Generated Inputs to LLMs: How to Prevent Harmful Content in Real Time

Aug 2, 2025

Artificial Intelligence
