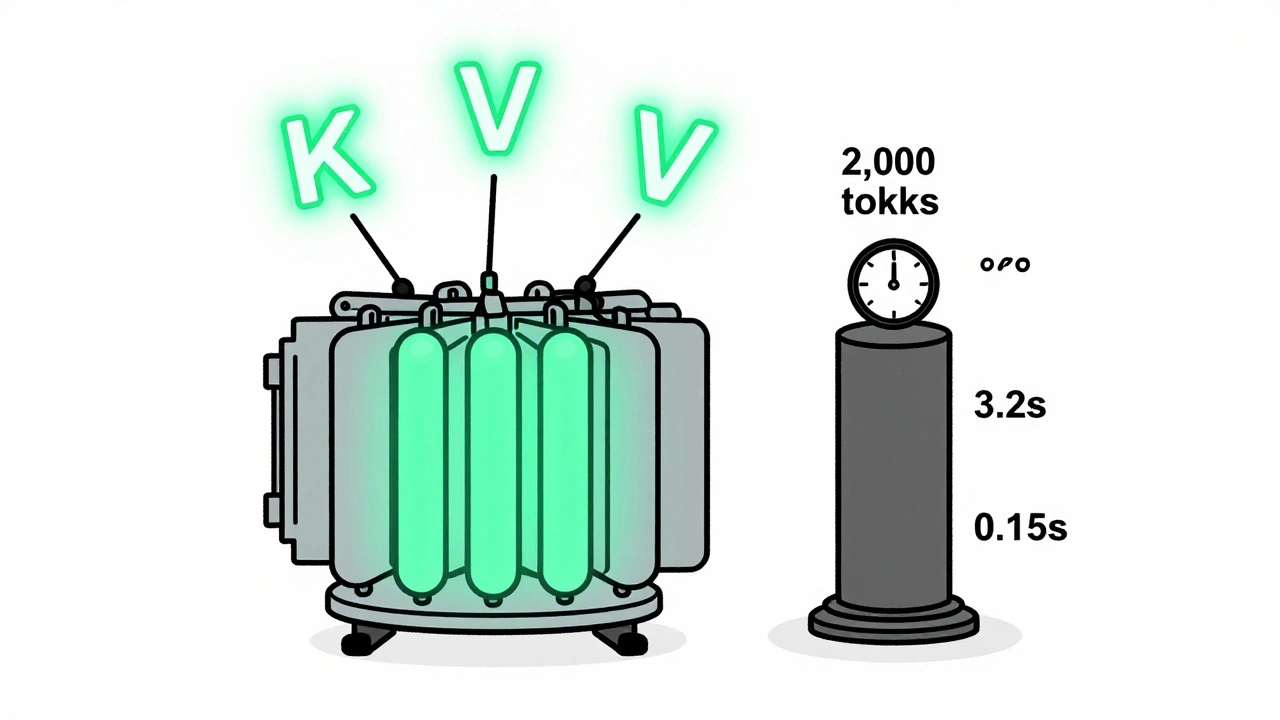

Tag: KV caching

KV caching and continuous batching are essential for fast, affordable LLM serving. Learn how they reduce memory use, boost throughput, and enable real-world deployment on consumer hardware.
