Tag: LLM chunking

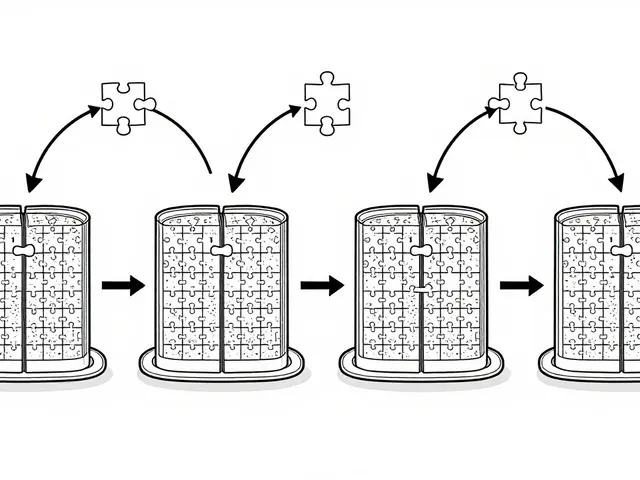

Chunking strategy determines how well a RAG system retrieves information from documents. Page-level chunking with 15% overlap delivers the best balance of accuracy and speed for most use cases, but hybrid and adaptive methods are gaining ground quickly.
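Below is a minimal Python sketch of what "page-level chunking with 15% overlap" can look like. It rests on two assumptions: the document has already been split into one string per page, and overlap is counted in characters taken from the end of the previous page. `page_chunks` and `Chunk` are hypothetical names used here for illustration and do not come from any particular library.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    page: int  # 0-based index of the page this chunk is built from
    text: str  # carried-over overlap prefix followed by the page text


def page_chunks(pages: list[str], overlap: float = 0.15) -> list[Chunk]:
    """Build one chunk per page.

    Assumption (illustrative only): each chunk is prefixed with the trailing
    `overlap` fraction of the previous page, measured in characters.
    """
    if not 0.0 <= overlap < 1.0:
        raise ValueError("overlap must be in [0, 1)")
    chunks: list[Chunk] = []
    for i, page in enumerate(pages):
        prefix = ""
        if i > 0:
            prev = pages[i - 1]
            n = int(len(prev) * overlap)  # number of characters to carry over
            # Slice from len(prev) - n so that n == 0 yields "" (prev[-0:] would be the whole page).
            prefix = prev[len(prev) - n:]
        chunks.append(Chunk(page=i, text=prefix + page))
    return chunks


if __name__ == "__main__":
    pages = ["a" * 100, "b" * 100, "c" * 40]
    for chunk in page_chunks(pages):
        print(chunk.page, len(chunk.text))
```

Carrying the overlap from the previous page means a sentence that spans a page break shows up intact in at least one chunk, while each chunk still lines up with a single source page for citation. Token-based overlap or overlap drawn from the following page are equally plausible variants.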

Recent Posts

Calibration and Outlier Handling in Quantized LLMs: How to Keep Accuracy When Compressing Models

Jul 6, 2025

Artificial Intelligence